First announced at this year’s GTC, the latest DRIVE Software release includes enhanced perception and visualization capabilities, as well as many of the features in the recently introduced DRIVE AP2X Level 2+ automated driving platform.

DRIVE Software provides developers with an open platform for autonomous vehicle development, comprising SDKs, powerful tools and AV applications.

DRIVE Software 9.0 includes:

- DriveWorks SDK, DRIVE IX SDK and DRIVE OS

- DRIVE AV and DRIVE IX applications

- Developer tools for compiling, debugging and profiling software

- DRIVE OS v5.1.0.2 Linux (including CUDA 10, TensorRT 5.0 and cuDNN 7.3.1)

- TU104 (Linux and QNX) & GV100 (Linux) support

This release enables a variety of autonomous driving development capabilities, including:

- The ability to add new sensor support through the DriveWorks sensor plugin framework

- The availability of powerful new DNNs

- DRIVE Calibration enhancements for side camera and radar self-calibration

- Robust path perception using an ensemble of three DNNs and an optional HD map input

- New DRIVE IX face identification functionality that allows application developers to build intelligent use cases and person-specific user experiences

- The introduction of NVIDIA DRIVE Hub, which provides a unified user interface to launch all DRIVE Software applications using the display inside the car

DriveWorks

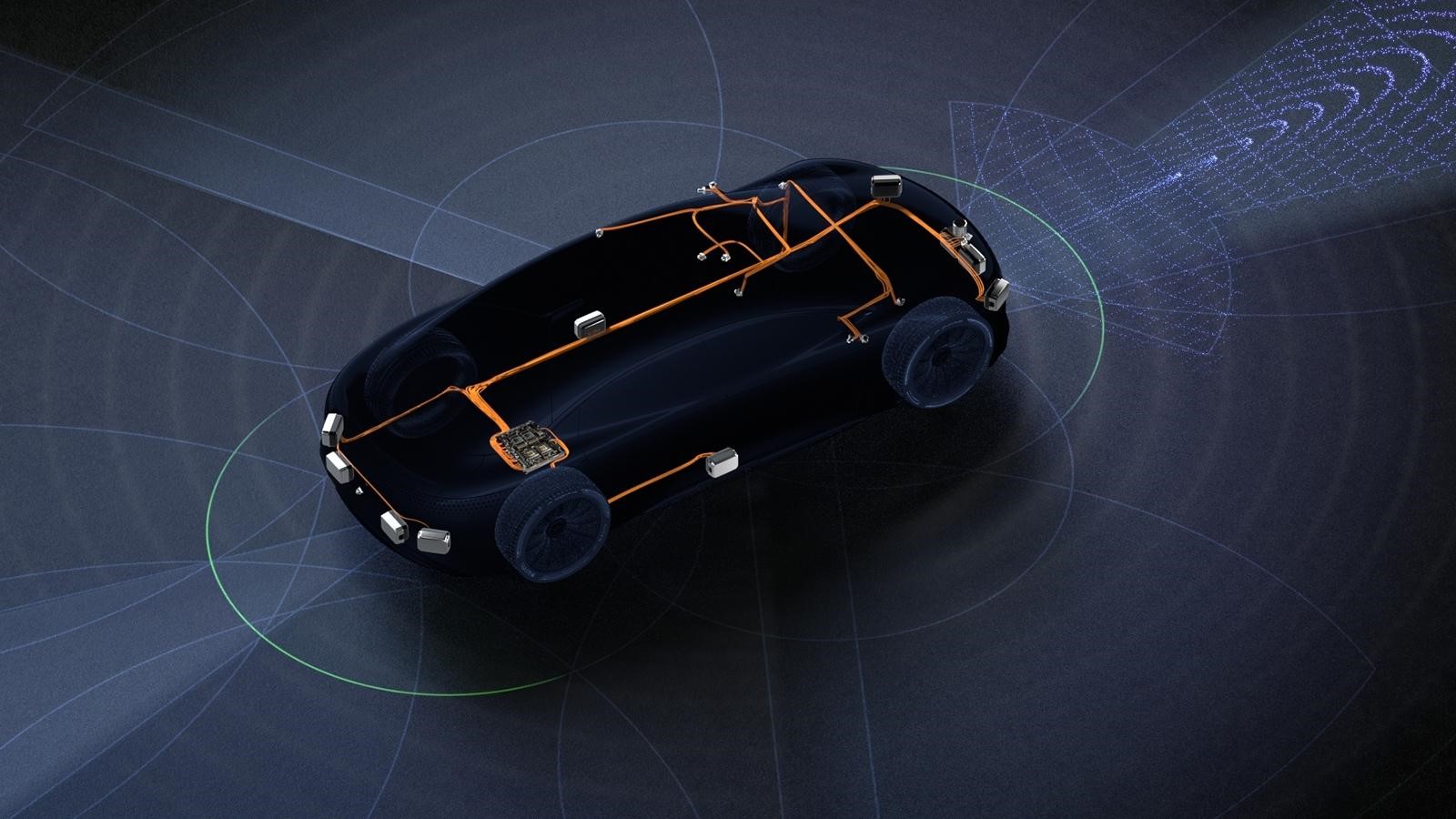

DriveWorks now provides flexible options for interfacing new autonomous vehicle sensors with the sensor abstraction layer (SAL) so that features such as sensor lifecycle management, timestamp synchronization and sensor data replay can be realized with minimal development effort.

DRIVE Software 9.0 introduces a comprehensive sensor plugin framework that enables developers to add new lidar and IMU sensors that are not natively supported by the DriveWorks SAL. In addition to data decoding support, the framework provides the ability to implement the transport and protocol layers necessary to communicate with the sensor.
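
To make the layering concrete, here is a minimal, purely illustrative sketch in Python of how a plugin might separate lifecycle, transport and decoding. It is not the DriveWorks plugin API. The `SensorPlugin` interface, the `FakeImuPlugin` class, the `ImuSample` type and the packet layout are all hypothetical names and formats invented for this example.

```python
import struct
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class ImuSample:
    timestamp_us: int
    accel: tuple  # (x, y, z) in m/s^2
    gyro: tuple   # (x, y, z) in rad/s


class SensorPlugin(ABC):
    """Hypothetical plugin contract; NOT the DriveWorks API."""

    @abstractmethod
    def start(self) -> None: ...            # lifecycle: open the device or socket

    @abstractmethod
    def read_raw(self) -> bytes: ...        # transport layer: fetch one raw packet

    @abstractmethod
    def decode(self, raw: bytes) -> ImuSample: ...  # protocol and decoding layer

    @abstractmethod
    def stop(self) -> None: ...             # lifecycle: release resources


class FakeImuPlugin(SensorPlugin):
    # Invented wire format: little-endian uint64 timestamp, then six float32 values.
    PACKET = struct.Struct("<Q6f")

    def __init__(self, packets):
        # Pre-recorded packets stand in for a real transport (serial, CAN, UDP).
        self._packets = list(packets)
        self._running = False

    def start(self) -> None:
        self._running = True

    def read_raw(self) -> bytes:
        if not self._running:
            raise RuntimeError("sensor not started")
        return self._packets.pop(0)

    def decode(self, raw: bytes) -> ImuSample:
        ts, ax, ay, az, gx, gy, gz = self.PACKET.unpack(raw)
        return ImuSample(ts, (ax, ay, az), (gx, gy, gz))

    def stop(self) -> None:
        self._running = False
```

Keeping `read_raw` and `decode` separate is what would let a host layer record raw packets and replay them later through the same decoder, which is the kind of replay feature the SAL is described as providing.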

A new custom camera feature in DriveWorks enables external developers to add DriveWorks support for any image sensor using the GMSL interface on the DRIVE AGX Developer Kit.

(For more information on the sensor plugin framework, join our “Using Custom Sensors With NVIDIA DriveWorks” webinar on June 19th, 2019.)

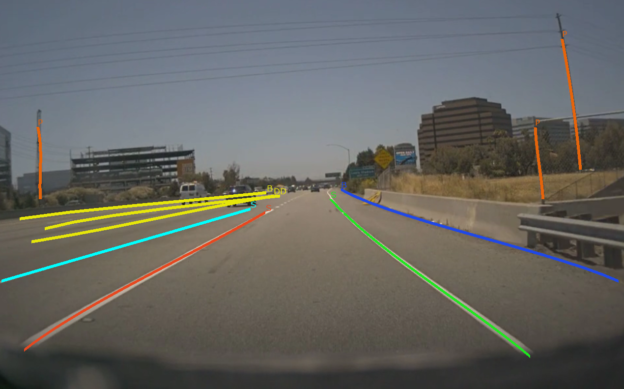

DRIVE Networks delivers multiple new and updated DNNs for obstacle, path and wait condition perception.

Many of these DNNs, and the applications that use them, can be seen in action in the DRIVE Labs video series.

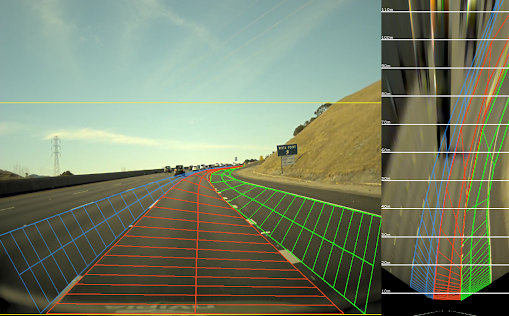

DRIVE Calibration supports the calibration of the vehicle’s camera, radar and IMU sensors. This release enhances the existing calibration by introducing side camera roll/pitch/yaw and height self-calibration as well as radar yaw self-calibration.

DRIVE AV

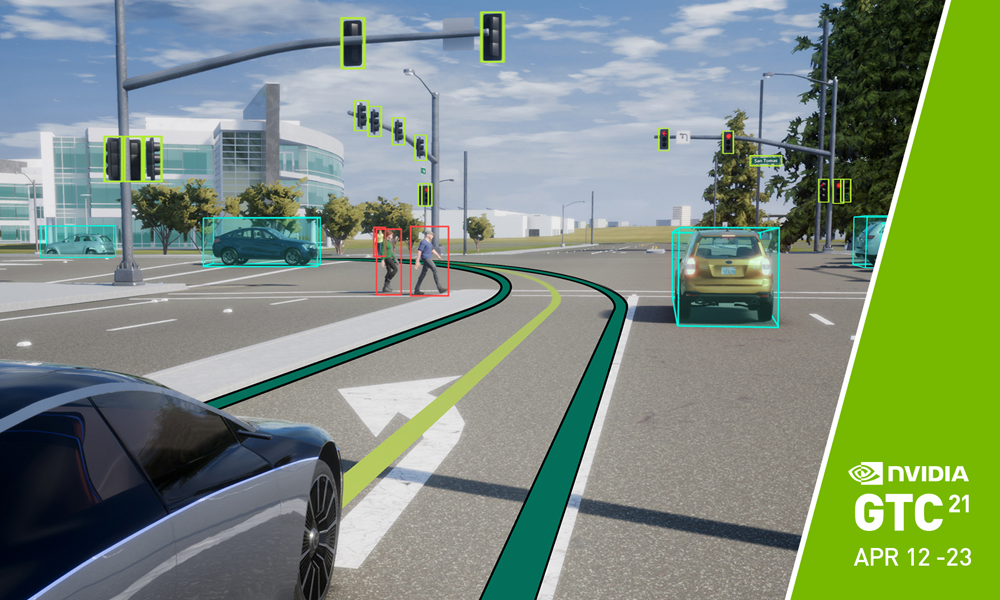

DRIVE Perception enables robust perception of obstacles, paths and wait conditions (such as stop signs and traffic lights) right out of the box with an extensive set of pre-processing, post-processing and fusion-processing modules. Together with DRIVE Networks, these form an end-to-end perception pipeline for autonomous driving that uses data from multiple sensor types (e.g. camera and radar).

New to this release are three features that can be experienced via the DRIVE AV application:

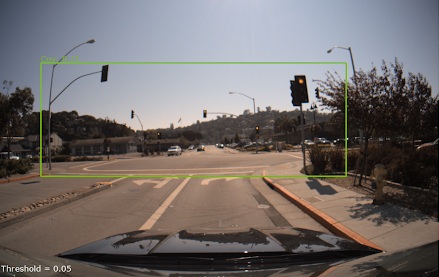

- Front camera intersection perception via WaitNet

- Optimized path perception software with support for diversity and redundancy using detections from three DRIVE DNNs (PilotNet, PathNet, LaneNet) and an optional HD map input

- DNN-based (ClearSightNet) camera blindness detection using the front camera
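
The release notes do not describe how the path perception ensemble combines its inputs. As a rough illustration of the general idea of diversity and redundancy, the sketch below fuses several independent path estimates with a confidence-weighted average and flags disagreement between sources. Everything here is an assumption made for the example: the function name `fuse_path_estimates`, the representation of a path as lateral offsets in metres, the weights and the 0.5 m threshold. The DNN names appear only as dictionary labels.

```python
from typing import Optional, Sequence


def fuse_path_estimates(
    estimates: dict,
    hd_map: Optional[Sequence[float]] = None,
    map_weight: float = 1.0,
    max_spread_m: float = 0.5,
):
    """Confidence-weighted average of lateral offsets at fixed lookahead points.

    estimates maps a source name to (offsets, confidence).
    Returns (fused_offsets, agree); agree is False when the sources
    differ by more than max_spread_m at any lookahead point.
    """
    # Drop sources that report zero confidence (e.g. a network with no detection).
    sources = [(list(o), float(c)) for o, c in estimates.values() if c > 0.0]
    if hd_map is not None:
        # The optional map input is treated as one more source.
        sources.append((list(hd_map), map_weight))
    if not sources:
        raise ValueError("no usable path estimate")

    n = len(sources[0][0])
    if any(len(o) != n for o, _ in sources):
        raise ValueError("all sources must use the same lookahead points")

    total = sum(c for _, c in sources)
    fused = [sum(o[i] * c for o, c in sources) / total for i in range(n)]
    agree = all(
        max(o[i] for o, _ in sources) - min(o[i] for o, _ in sources) <= max_spread_m
        for i in range(n)
    )
    return fused, agree


estimates = {
    "PilotNet": ([0.0, 0.5], 1.0),
    "PathNet": ([0.5, 0.5], 1.0),
    "LaneNet": ([1.0, 0.5], 2.0),
}
fused, agree = fuse_path_estimates(estimates)
```

With redundant sources, a single failed or low-confidence estimate lowers its own weight rather than breaking the output, and the `agree` flag gives downstream logic a simple signal that the sources have diverged.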

Note: To fully leverage DRIVE applications and localization, a DRIVE AGX Pegasus system with Hyperion 7.1 is required.

DRIVE IX

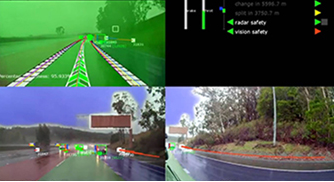

DRIVE IX is an open platform for all in-cabin AI technologies. It integrates vision, voice and graphical user experience, and encompasses AI Assistant, AI CoPilot and Visualization.

The DRIVE Software 9.0 release adds new face identification functionality that allows application developers to build user-specific experiences. In addition to occupant convenience, DRIVE IX includes visualization features that take complex data from the vehicle’s sensors and generate comprehensive and accurate visuals, which keep occupants informed and build trust in the AV technology.

Developers also get a unified user interface to launch all DRIVE Software applications using the in-car display through the introduction of NVIDIA DRIVE Hub.

DRIVE Software 9.0 delivers many new features and complex applications to DRIVE AGX Xavier and Pegasus developers. Download it today and visit our DRIVE Developer site for more information.