By: Abhishek Bajpayee

This is the latest post in our NVIDIA DRIVE Labs series, which takes an engineering-focused look at individual autonomous vehicle challenges and how NVIDIA DRIVE addresses them. Catch up on all automotive posts.

Autonomous vehicles rely on cameras to see their surrounding environment. However, environmental factors such as rain, snow, or other blockages can affect camera vision. In addition to being able to make sense of its surroundings, any robust perception system should be able to reason about the validity of the data coming in from sensors. To do so, it’s necessary to detect sensor data invalidity as early as possible in the processing pipeline before data is consumed by downstream modules.

We developed ClearSightNet, a deep neural network (DNN) trained to evaluate cameras’ ability to see clearly and help determine root causes of occlusions, blockages, and reductions in visibility. It’s developed with the following requirements in mind:

- Reason across a large variety of potential causes of camera blindness.

- Output meaningful, actionable information.

- Be lightweight enough to run on multiple cameras with minimal computational overhead.

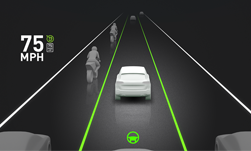

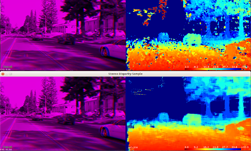

ClearSightNet segments camera images into regions corresponding to two blindness types: occlusion and reduction in visibility.

Occlusion segmentation corresponds to regions of the camera field of view that are either covered by an opaque blockage, such as dust, mud, or snow, or that contain no information, such as pixels saturated by direct sunlight. Perception is generally completely impaired in such regions.

Reduced visibility segmentation corresponds to regions that are not completely blocked but have compromised visibility due to heavy rain, water droplets, glare, fog, etc. Perception is often partially impaired in these regions and should be regarded as having lower confidence.

The network outputs a mask that can be overlaid on the input image for visualization: fully occluded regions are shown in red, and reduced-visibility or partially occluded regions are shown in green. In addition, ClearSightNet outputs a ratio, or percentage, indicating the fraction of the input image that is affected by occlusion or reduced visibility.
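To make the two outputs concrete, here is a minimal sketch of how a per-pixel blindness mask could be turned into a red/green overlay and a set of ratios. It is not ClearSightNet's actual post-processing: the class ids (`CLEAR`, `REDUCED`, `OCCLUDED`), the function names, and the blending factor `alpha` are all assumptions made for illustration.

```python
import numpy as np

# Hypothetical label encoding; the post does not specify the network's class ids.
CLEAR, REDUCED, OCCLUDED = 0, 1, 2


def overlay_mask(image, mask, alpha=0.5):
    """Blend red over occluded pixels and green over reduced-visibility pixels.

    image: (H, W, 3) uint8 RGB array; mask: (H, W) integer array of class ids.
    """
    out = image.astype(np.float32)  # astype returns a copy, so the input is untouched
    colors = {OCCLUDED: (255, 0, 0), REDUCED: (0, 255, 0)}  # RGB
    for cls, color in colors.items():
        sel = mask == cls
        out[sel] = (1 - alpha) * out[sel] + alpha * np.array(color, dtype=np.float32)
    return out.astype(np.uint8)


def blindness_ratios(mask):
    """Return the fraction of pixels in each blindness class, plus their sum."""
    total = mask.size
    occluded = np.count_nonzero(mask == OCCLUDED) / total
    reduced = np.count_nonzero(mask == REDUCED) / total
    return {"occluded": occluded, "reduced": reduced, "blind": occluded + reduced}
```

Multiplying any of the returned ratios by 100 gives the corresponding percentage of the image that is affected.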

This information can be used in multiple ways. For example, the vehicle can choose not to engage autonomous function when blindness is high, alert the user to consider cleaning the camera lens or windshield, or use ClearSightNet output to inform camera perception confidence calculation.
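As an illustration of the options above, the sketch below maps blindness ratios to one of several responses. The `Action` names, the `choose_action` function, and every threshold value are hypothetical; the post does not describe the policy or thresholds used in any shipping system.

```python
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    LOWER_CONFIDENCE = "lower_confidence"
    REQUEST_CLEANING = "request_cleaning"
    DISENGAGE = "disengage"


def choose_action(occluded, reduced,
                  disengage_at=0.5, clean_at=0.2, derate_at=0.05):
    """Pick a response from occluded and reduced-visibility image fractions.

    All thresholds are illustrative placeholders, not tuned values.
    """
    blind = occluded + reduced
    if blind >= disengage_at:
        # Too much of the view is compromised to rely on this camera.
        return Action.DISENGAGE
    if occluded >= clean_at:
        # An opaque blockage (mud, snow) is something cleaning might remove.
        return Action.REQUEST_CLEANING
    if blind >= derate_at:
        # Mild impairment: keep running but trust perception outputs less.
        return Action.LOWER_CONFIDENCE
    return Action.PROCEED
```

Checking the most severe condition first keeps the policy easy to audit; a real system would likely also smooth the ratios over time so that a single noisy frame does not trigger a response.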

Current and future versions of ClearSightNet will continue to output both an end-to-end analysis of camera blindness and detailed information about it, giving implementers a large degree of control over what ships with vehicles.

In terms of performance, ClearSightNet, calibrated for INT8 inference, currently runs in ~0.7 ms (discrete GPU) and in ~1.3 ms (integrated GPU) per frame on Xavier. ClearSightNet is available in the NVIDIA DRIVE Software 9.0 release. Learn more about DRIVE Networks.