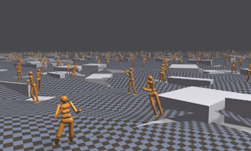

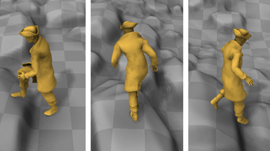

To help virtual characters move more naturally, researchers from the University of Edinburgh and Adobe developed a character control system that uses deep learning to let characters run, jump, avoid obstacles, open and pass through doors, and pick up and carry objects, all from a single model running in real time.

“Our neural architecture internally acquires a state machine for character control by learning different motions and transitions from various tasks,” the researchers stated in their paper. “Our neural network learns to generate precise production-quality animations from high-level commands to achieve various complex behaviors, such as walking towards a chair and sitting, opening a door before moving out from a room and carrying boxes from or under a desk.”

What makes the work unique is that a user can seamlessly control the character in real time using simple control commands.

Using NVIDIA GeForce GPUs with the cuDNN-accelerated TensorFlow deep learning framework, the team trained their model on 16GB of data. Training ran for 70 epochs, which took around 20 hours on a single GPU. After training, the network size was reduced to around 250MB.

The main components of the model’s architecture are a gating network and a motion prediction network.
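As a rough illustration of how two such components might fit together, here is a minimal sketch in plain Python. It assumes a mixture-of-experts layout in which the gating network outputs blending coefficients that mix several sets of expert weights for the motion prediction network; the article itself does not spell out this wiring. The class name `GatedMotionModel`, the layer sizes, the random weights, and the single linear layer are all hypothetical simplifications, not the researchers' implementation.

```python
import math
import random


def softmax(xs):
    """Turn raw scores into positive coefficients that sum to 1."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(W, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


class GatedMotionModel:
    """Hypothetical toy model: a gating network blends expert weights,
    and the blended weights act as the motion prediction network."""

    def __init__(self, gate_dim, state_dim, out_dim, num_experts, seed=0):
        rng = random.Random(seed)
        # Gating network: one linear layer followed by softmax (assumption).
        self.gate_W = random_matrix(num_experts, gate_dim, rng)
        # One weight matrix per expert for the motion prediction network.
        self.experts = [
            random_matrix(out_dim, state_dim, rng) for _ in range(num_experts)
        ]

    def gate(self, gate_input):
        return softmax(matvec(self.gate_W, gate_input))

    def predict(self, gate_input, state):
        alpha = self.gate(gate_input)
        rows = len(self.experts[0])
        cols = len(self.experts[0][0])
        # Blend the expert weights using the gating coefficients.
        blended = [
            [sum(a * E[r][c] for a, E in zip(alpha, self.experts))
             for c in range(cols)]
            for r in range(rows)
        ]
        # Apply the blended weights to the current character state.
        return alpha, matvec(blended, state)


model = GatedMotionModel(gate_dim=4, state_dim=6, out_dim=3, num_experts=2)
alpha, next_pose = model.predict([0.1] * 4, [0.5] * 6)
```

In this sketch, `alpha` holds one blending coefficient per expert and `next_pose` stands in for the predicted motion features of the next frame. A real system would use deeper networks and trained weights.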

The researchers explain that although the system works reasonably well for objects seen during training, such as chairs, it fails to adapt to geometry it has not seen before. To handle a wider range of 3D objects, the team plans to increase the size of the training set.

The work can be applied to real-time applications such as computer games and virtual reality systems, the researchers explained.

The research is a follow-up to the team’s previous work first published in 2017. The new paper will be presented at SIGGRAPH Asia later this month.