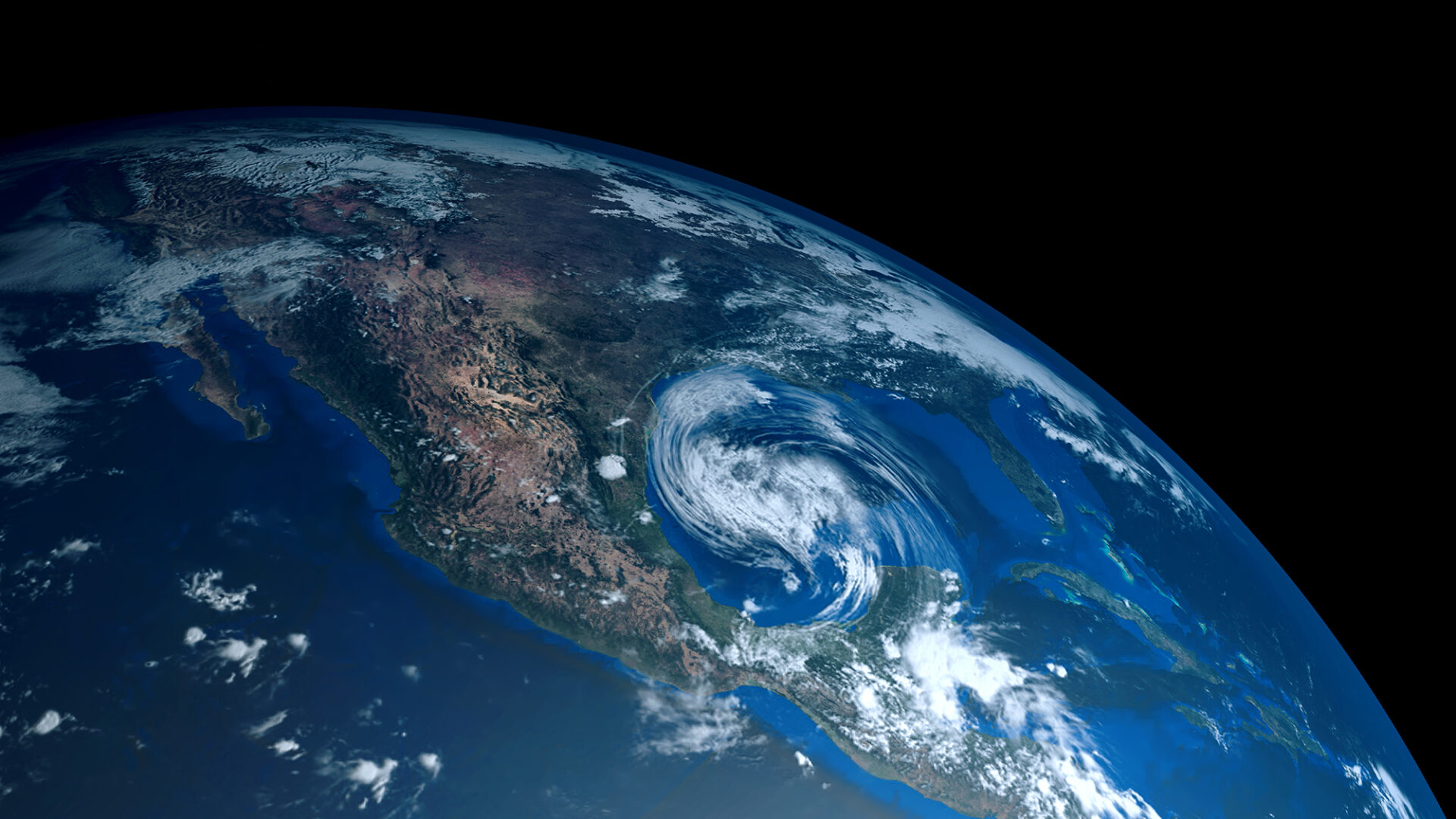

According to NOAA's National Centers for Environmental Information, the United States has already seen more than seven weather and climate disaster events in 2018 with losses exceeding $1 billion each. The most recent, Hurricane Florence, has already claimed over 51 lives.

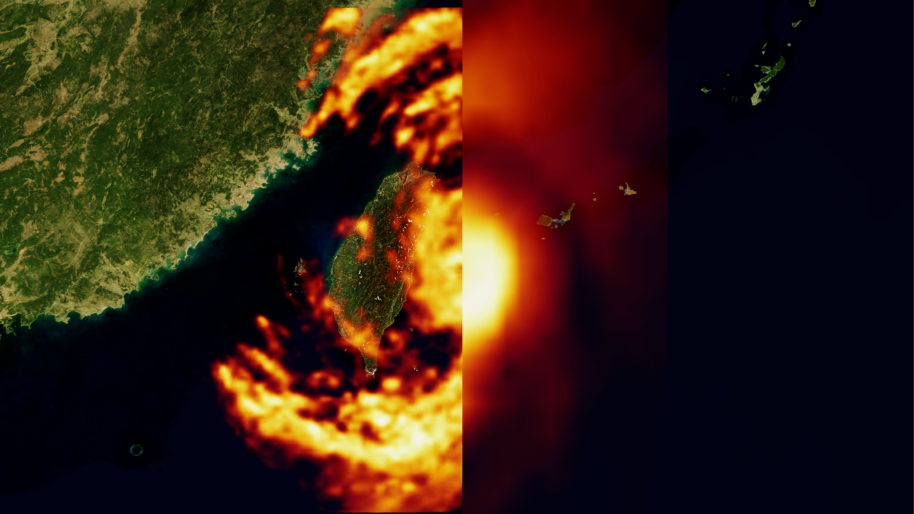

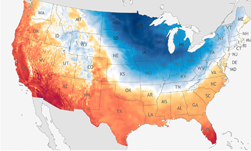

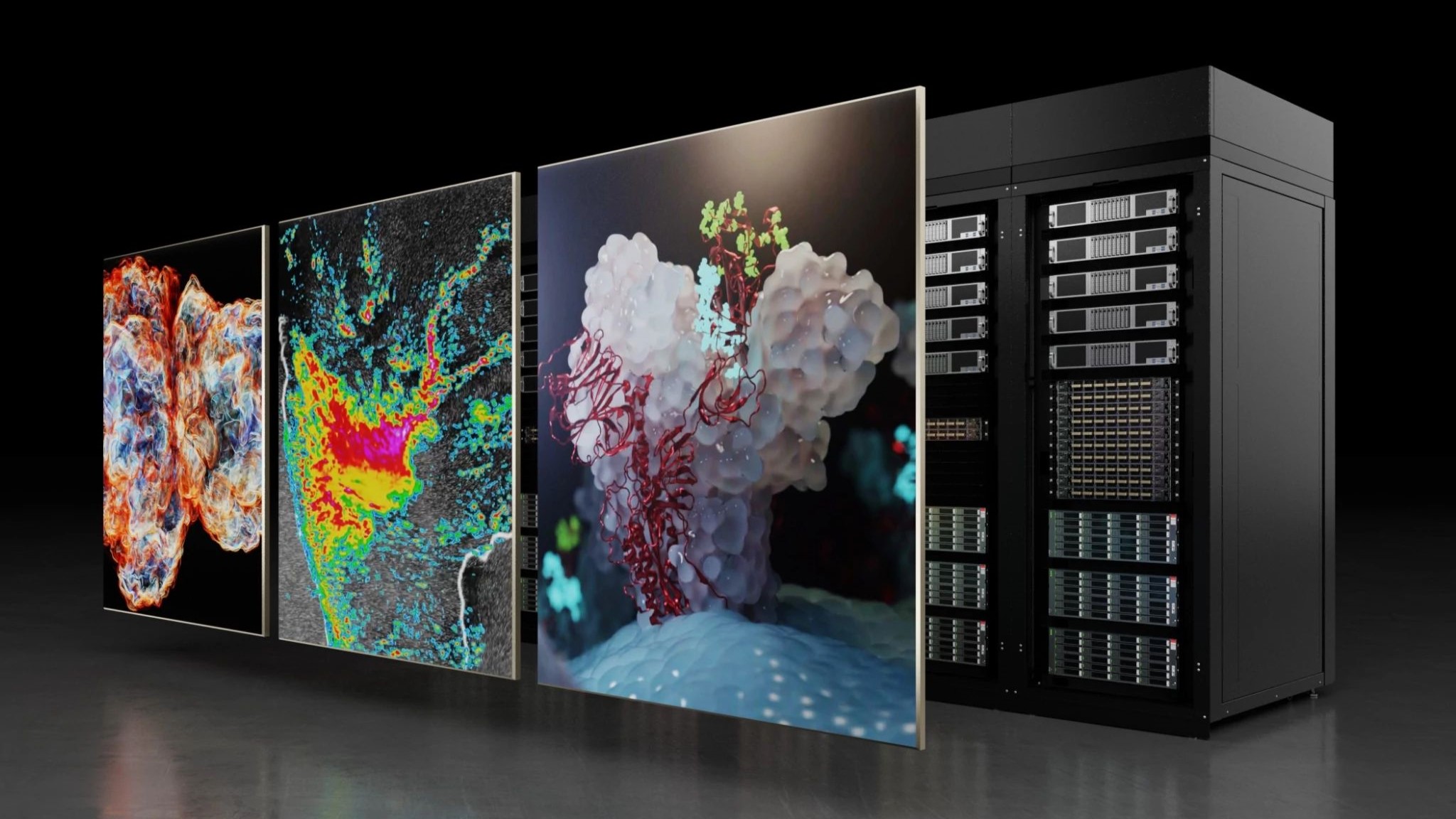

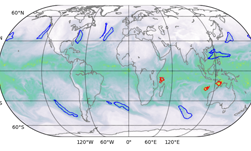

To help identify and predict weather patterns that could cause significant harm to infrastructure and human life, a team of scientists from Lawrence Berkeley National Laboratory in California, Oak Ridge National Laboratory in Tennessee, and NVIDIA has developed a deep learning system that identifies extreme weather patterns in high-resolution climate simulations. The system could one day help the public better prepare for extreme weather events.

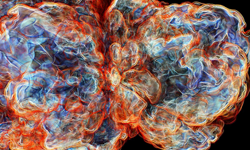

“This collaboration has produced a number of unique accomplishments,” said Prabhat, who leads the Data & Analytics Services team at Berkeley Lab’s National Energy Research Scientific Computing Center. “It is the first example of deep learning architecture that has been able to solve segmentation problems in climate science, and in the field of deep learning, it is the first example of a real application that has broken the exascale barrier.”

Using the 27,000 NVIDIA Tesla V100 Tensor Core GPUs in the newly installed Summit supercomputer at Oak Ridge National Laboratory in Tennessee, the researchers developed an exascale-class deep learning application that broke the exaop barrier. The application reached a peak performance of 1.13 exaops (1.13 × 10^18 operations per second), the fastest reported for a deep learning application to date.
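To put the headline number in perspective, the following back-of-the-envelope sketch divides the quoted peak throughput by the quoted GPU count. It assumes the peak was reached across all 27,000 GPUs and that the load was spread evenly, neither of which the article states, so the result is only an implied average. The function name `per_gpu_teraops` is made up for this illustration.

```python
# Figures quoted in the article.
PEAK_EXAOPS = 1.13   # peak throughput, in exaops
GPU_COUNT = 27_000   # GPU count as quoted (assumed to all contribute equally)

OPS_PER_EXAOP = 10**18
OPS_PER_TERAOP = 10**12


def per_gpu_teraops(peak_exaops: float, gpu_count: int) -> float:
    """Return the average throughput per GPU, in teraops."""
    total_ops_per_second = peak_exaops * OPS_PER_EXAOP
    return total_ops_per_second / gpu_count / OPS_PER_TERAOP


if __name__ == "__main__":
    average = per_gpu_teraops(PEAK_EXAOPS, GPU_COUNT)
    print(f"{average:.1f} teraops per GPU")  # prints "41.9 teraops per GPU"
```

Under those assumptions, each GPU contributed roughly 42 teraops on average at peak.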

The breakthrough has also earned the team a spot on this year’s list of finalists for the Gordon Bell Prize, an award that recognizes outstanding achievements in high-performance computing.

“We have shown that we can apply deep-learning methods for pixel-level segmentation on climate data, and potentially on other scientific domains,” said Prabhat. “More generally, our project has laid the groundwork for exascale deep learning for science, as well as commercial applications.”

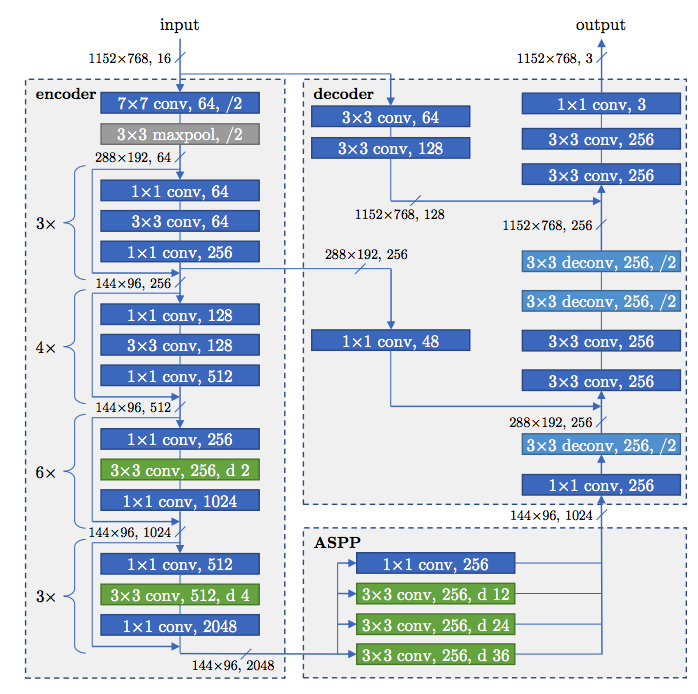

The team first evaluated two networks for the segmentation task. The first is a modification of the Tiramisu network, which is an extension of the ResNet architecture. The second is based on the DeepLabv3+ encoder-decoder architecture.
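For readers unfamiliar with pixel-level segmentation, the toy sketch below shows only the final step that any such network performs: turning per-pixel class scores into a label mask by picking the highest-scoring class at each pixel. It is not the team's code. The class names, the score values, and the helper functions `segment` and `class_fractions` are all assumptions made up for illustration.

```python
# Hypothetical class names, chosen for illustration only.
CLASSES = ["background", "tropical_cyclone", "atmospheric_river"]


def segment(scores):
    """Turn per-pixel class scores into a label mask.

    scores[row][col] is a list holding one score per class;
    the result holds the index of the best-scoring class per pixel.
    """
    return [
        [max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
        for row in scores
    ]


def class_fractions(mask, num_classes):
    """Return the fraction of pixels assigned to each class."""
    total = sum(len(row) for row in mask)
    counts = [0] * num_classes
    for row in mask:
        for label in row:
            counts[label] += 1
    return [count / total for count in counts]


# A made-up 2x2 "image" with three class scores per pixel.
toy_scores = [
    [[0.9, 0.05, 0.05], [0.2, 0.7, 0.1]],
    [[0.1, 0.1, 0.8], [0.6, 0.3, 0.1]],
]

mask = segment(toy_scores)

if __name__ == "__main__":
    for row in mask:
        print([CLASSES[label] for label in row])
    print(class_fractions(mask, len(CLASSES)))
```

In a real network the scores would come from the decoder's output layer over a full climate-simulation image rather than from a hand-written list.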

Using adaptations of the two architectures, the team trained the networks on more than 63,000 high-resolution images, running on the NVIDIA Tesla GPUs with the cuDNN-accelerated TensorFlow deep learning framework.

A paper describing the method was recently published on arXiv. The work will be presented at SC18 in Dallas, Texas, in November.

AI and the Summit GPU-Accelerated Supercomputer Helps Identify Extreme Weather Patterns

Oct 08, 2018
