NVIDIA SDKs provide an API to the hardware and libraries delivered with the NVIDIA drivers. These APIs allow developers to leverage new features and performance by building their applications on the NVIDIA platform. End users of these applications benefit from frequent updates and incremental performance boosts as drivers are optimized.

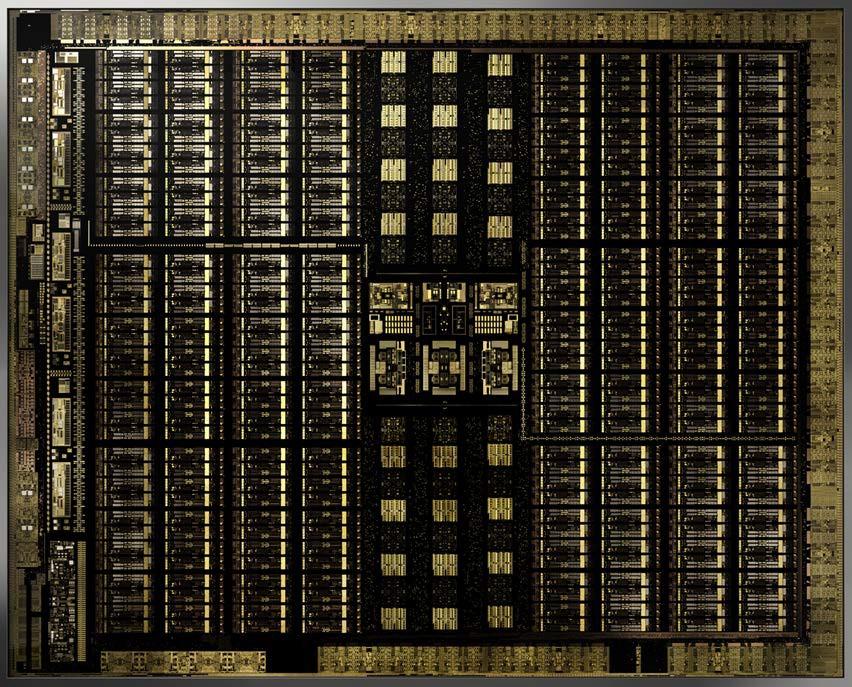

Starting today, the NVIDIA Release 418 Graphics Driver brings massive Turing-powered updates to several of NVIDIA’s flagship SDKs, including OptiX, VRWorks, Video Codec and Optical Flow. Our latest Turing architecture introduces hardware-accelerated ray tracing, AI, advanced shading and simulation with the addition of RT Cores, Tensor Cores and programmable shading technologies. With SDK functionality built into the 418 driver, developers can simplify their workflow and shorten time to market by tapping into functionality their users already have installed and ready to go.

Reduced Render Times with Turing Tensor Cores and RT Cores

Tensor Cores are dedicated to accelerating artificial intelligence calculations, providing a massive performance boost for deep learning applications such as the OptiX AI-accelerated denoiser, which is already shipping in many popular applications like Autodesk Arnold, Chaos Group V-Ray, The Foundry’s Modo and many more. By powering the AI denoiser, Tensor Cores cut down on the number of ray calculations needed to produce a clean image, significantly reducing render times.

As a render progresses, each ray cast delivers its lighting energy to the finished image. Early in the render, the rays produce a speckled image that converges to a clean one only after the results of millions or billions of rays have been added together. This time-consuming process can be interrupted early through the use of a trained Deep Neural Network (DNN) that can interpret and smooth out the speckled result at a very early stage, massively cutting down on render time.
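The convergence described above can be illustrated with a toy Monte Carlo estimate. This is a minimal sketch, not the OptiX denoiser or any renderer's code: it assumes a single pixel whose true brightness is 0.5, models each ray as a uniform random sample, and uses hypothetical function names. It shows why waiting for convergence is expensive: noise falls only with the square root of the ray count, so halving the speckle takes roughly four times as many rays.

```python
import math
import random


def estimate_pixel(n_rays, rng):
    """Average n_rays random samples; each sample stands in for one ray's contribution."""
    return sum(rng.random() for _ in range(n_rays)) / n_rays


def noise_level(n_rays, trials=400, seed=0):
    """RMS error of the pixel estimate over repeated renders (assumed true value: 0.5)."""
    rng = random.Random(seed)
    squared_errors = [(estimate_pixel(n_rays, rng) - 0.5) ** 2 for _ in range(trials)]
    return math.sqrt(sum(squared_errors) / trials)


if __name__ == "__main__":
    # Each step uses 4x more rays; the noise should drop by roughly half each time.
    for n_rays in (16, 64, 256, 1024):
        print(f"{n_rays:5d} rays per pixel -> RMS noise {noise_level(n_rays):.4f}")
```

A denoiser sidesteps this slow curve by cleaning up the image while the ray count is still low.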

Now that we have reduced the number of rays required to obtain a clean image, we can accelerate the processing of these rays. To do so, we can leverage RT Cores, which accelerate Bounding Volume Hierarchy (BVH) traversal and ray/triangle intersection testing (ray casting) functions. When rays are cast through a scene (think of them as laser beams), the brunt of the work is figuring out whether they hit something or not. And if they do, what did they hit, and how does its surface affect the light's energy? Is it dark and absorbing the light, or is it bright and deflecting? Is it rough and diffusing the light, or super smooth and reflective? The RT Cores are specifically designed to quickly calculate these ray intersections with the scene, further reducing render time by cutting down on the calculation needed for the rays that remain once the AI denoiser has reduced their number.
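The hit-or-miss question above can be sketched in software with the well-known Möller-Trumbore ray/triangle test. This illustrates the kind of test that RT Cores accelerate; it is not a description of how the hardware implements it, and the function names are hypothetical.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle_hit(origin, direction, v0, v1, v2, eps=1e-9):
    """Return the distance t along the ray to the triangle, or None on a miss."""
    edge1 = sub(v1, v0)
    edge2 = sub(v2, v0)
    pvec = cross(direction, edge2)
    det = dot(edge1, pvec)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle's plane
    inv_det = 1.0 / det
    tvec = sub(origin, v0)
    u = dot(tvec, pvec) * inv_det  # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    qvec = cross(tvec, edge1)
    v = dot(direction, qvec) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(edge2, qvec) * inv_det
    return t if t > eps else None  # ignore hits behind the ray origin


# Hypothetical scene: one triangle five units in front of the camera.
triangle = ((0.0, 0.0, 5.0), (1.0, 0.0, 5.0), (0.0, 1.0, 5.0))
print(ray_triangle_hit((0.25, 0.25, 0.0), (0.0, 0.0, 1.0), *triangle))
print(ray_triangle_hit((2.0, 2.0, 0.0), (0.0, 0.0, 1.0), *triangle))
```

A renderer runs a test like this for many candidate triangles per ray. BVH traversal exists to keep that candidate list short.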

Turing Accelerated OptiX 6.0

For professional rendering applications on Windows and Linux, NVIDIA offers OptiX. The recent upgrade in OptiX 6.0 brings developers a host of new features:

- OptiX 6.0.0 fully implements RTX acceleration, including:

  - Support for RT Cores on Turing RTX GPUs

  - Separate compilation of shaders for faster startup times and updates

  - RTX acceleration on Maxwell and newer GPUs, although RT Core acceleration requires a Turing GPU

- Multi-GPU support for scaling performance across GPUs

- Support for scaling texture memory across NVLink connected GPUs

- Triangle API with motion blur and attribute programs

- rtTrace from bindless callable programs

- Turing-specific optimizations for the OptiX AI denoiser

- API to set the stack size by providing the trace depth

- API to take advantage of hardware-accelerated 8-bit mask
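The list above does not describe the 8-bit mask in detail. Assuming it is a visibility mask, where each ray and each scene object carries eight flag bits and traversal skips any object whose bits do not overlap the ray's, the idea can be sketched in software as follows. The names and bit assignments are hypothetical, and this is not the OptiX API.

```python
# Hypothetical bit assignments for two kinds of rays.
CAMERA_RAYS = 0b00000001
SHADOW_RAYS = 0b00000010


def ray_sees_object(ray_mask, object_mask):
    """True when the ray and the object share at least one of the eight mask bits."""
    return ((ray_mask & 0xFF) & (object_mask & 0xFF)) != 0


def visible_objects(ray_mask, objects):
    """Filter (name, mask) pairs down to the objects this ray should be tested against."""
    return [name for name, mask in objects if ray_sees_object(ray_mask, mask)]


scene = [
    ("floor", CAMERA_RAYS | SHADOW_RAYS),  # seen by the camera and casts shadows
    ("glass", CAMERA_RAYS),                # seen by the camera, ignored by shadow rays
]
print(visible_objects(SHADOW_RAYS, scene))
```

Doing this comparison in hardware means a masked-out object costs the ray nothing beyond the mask check itself.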

Image courtesy of Mads Drøschler

GTC 2019 attendees can learn all about the new OptiX 6.0 features at session S9768 – New Features in OptiX 6.0, and attend other OptiX sessions such as S9778 – Bringing the Arnold Renderer to the GPU, presented by our partners at Autodesk.

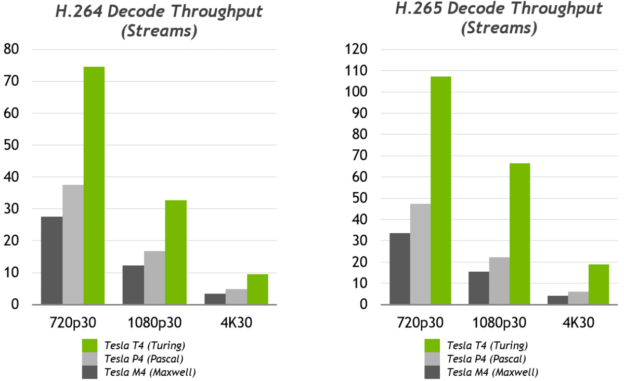

Faster Video Processing with Turing

The Turing architecture brings several enhancements to the video encode and decode accelerators (NVENC and NVDEC). The quality of H.264 and HEVC encoding is substantially improved and is now much closer to that of CPU-based encoding, but at much higher speed, and the decoder adds HEVC 4:4:4 support. Additionally, Turing brings up to a 3x improvement in video decoder throughput on NVIDIA Quadro and Tesla professional GPUs.

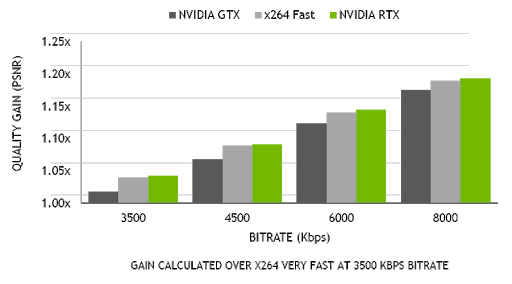

Thanks to support for HEVC B-frames and other significant optimizations in hardware and software/firmware, the new NVENC encoder in Turing provides up to 25% bitrate savings for HEVC and up to 15% bitrate savings for H.264. Turing’s new NVDEC decoder has also been updated to support decoding of HEVC YUV444 10/12-bit HDR at 30 fps, H.264 8K, and VP9 10/12-bit HDR.
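To put the bitrate figures above in concrete terms, here is a small worked calculation. It is only a sketch: the 6000 kbps baseline is a hypothetical stream, the percentages are the "up to" figures quoted above (so the results are best cases), and the text does not state which encoder the savings are measured against.

```python
# Best-case savings quoted in the text, in percent.
BEST_CASE_SAVINGS_PERCENT = {"HEVC": 25, "H.264": 15}


def reduced_bitrate_kbps(baseline_kbps, savings_percent):
    """Bitrate needed after applying a percentage saving to a baseline bitrate."""
    return baseline_kbps * (100 - savings_percent) / 100


baseline_kbps = 6000  # hypothetical stream bitrate
for codec, savings in BEST_CASE_SAVINGS_PERCENT.items():
    print(f"{codec}: {baseline_kbps} kbps -> {reduced_bitrate_kbps(baseline_kbps, savings):.0f} kbps")
```

For this hypothetical stream, the best case is 4500 kbps for HEVC and 5100 kbps for H.264.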

Turing’s video encoder exceeds the quality of the x264 software-based encoder using the fast encode settings, with dramatically lower CPU utilization. Streaming in 4K is too heavy a workload for encoding on typical CPU setups, but Turing’s encoder makes 4K streaming possible. To dig into the details of this new release, take a look at our Turing H.264 Video Encoding Speed and Quality DevBlog.

Turing-Powered Video Codec SDK 9.0

Developers leverage this dedicated hardware encode and decode through the NVIDIA Video Codec SDK, which includes a complete set of APIs, samples and documentation for hardware accelerators used to encode and decode videos on NVIDIA GPUs, for Windows and Linux.

Our friends at Open Broadcaster Software (OBS) have already integrated the key enhancements in Video Codec SDK 9 and have reported great results. Try out their beta release now!

You can get more information or download Video Codec SDK 9.0 from our developer page. If you are attending GTC 2019, come meet the developers by attending the session S9331 – NVIDIA GPU Video Technologies: Overview, Applications and Optimization Techniques.

Turing with Optical Flow SDK 1.0

The Optical Flow SDK gives developers access to the latest hardware capability of Turing GPUs dedicated to computing the relative motion of pixels between images. This new hardware uses sophisticated algorithms to yield highly accurate flow vectors that are robust to frame-to-frame intensity variations, and it tracks true object motion faster and more accurately. Most importantly, the Optical Flow SDK provides flow vectors comparable in accuracy to some of the best-known DL-based methods with 10x-20x higher performance.
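To make "relative motion of pixels between images" concrete, here is a minimal block-matching sketch. It is a classic textbook technique chosen for illustration, not the algorithm used by the Turing hardware or by the Optical Flow SDK, and all names are hypothetical. For one small block of the previous frame, it searches nearby positions in the current frame and reports the shift with the smallest sum of absolute differences.

```python
def sum_abs_diff(prev, curr, y, x, dy, dx, block):
    """Sum of absolute differences between a block in prev and its shifted copy in curr."""
    total = 0
    for j in range(block):
        for i in range(block):
            total += abs(prev[y + j][x + i] - curr[y + dy + j][x + dx + i])
    return total


def block_flow(prev, curr, y, x, block=2, search=2):
    """Return the (dy, dx) shift within +/-search pixels that best matches the block at (y, x)."""
    height, width = len(curr), len(curr[0])
    best = None  # (cost, dy, dx)
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            # Skip shifts that would move the block outside the frame.
            if y + dy < 0 or y + dy + block > height:
                continue
            if x + dx < 0 or x + dx + block > width:
                continue
            cost = sum_abs_diff(prev, curr, y, x, dy, dx, block)
            if best is None or cost < best[0]:
                best = (cost, dy, dx)
    return best[1], best[2]


def frame_with_patch(top, left, size=8):
    """Hypothetical test frame: black except for a small textured 2x2 patch."""
    frame = [[0] * size for _ in range(size)]
    patch = [[10, 20], [30, 40]]
    for j in range(2):
        for i in range(2):
            frame[top + j][left + i] = patch[j][i]
    return frame


previous_frame = frame_with_patch(top=2, left=2)
current_frame = frame_with_patch(top=3, left=4)
print("flow (dy, dx):", block_flow(previous_frame, current_frame, y=2, x=2))
```

Here the patch moves one row down and two columns right, so the reported flow vector is (1, 2). Repeating this search for every block of a high-resolution frame is costly in software, which is the motivation for dedicated hardware.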

Some of the most important applications of optical flow include:

- Object tracking within video frames

- Interpolating and extrapolating video frames

- Motion prediction and action recognition

The Optical Flow SDK, which is available now, requires an NVIDIA Turing GPU and will be covered in detail at GTC 2019. Check out the session on NVIDIA GPU Video Technologies: Overview, Applications and Optimization Techniques. Developers can also get a deep dive into how to integrate the SDK by reading An Introduction to the NVIDIA Optical Flow SDK.

Shading Advancements of Turing

The Turing architecture also introduces new Streaming Multiprocessor (SM) enhancements including four new advanced shading technologies: mesh shading, texture-space shading, variable rate shading and multi-view rendering.

Mesh shading advances NVIDIA’s geometry processing architecture by offering a new shader model for the vertex, tessellation, and geometry shading stages of the graphics pipeline. This supports more flexible and efficient approaches for computation of geometry. With texture-space shading, objects are shaded in a private coordinate space (a texture space) that is saved to memory, and pixel shaders sample from that space rather than evaluating results directly. Variable rate shading (VRS) allows developers to control the shading rate dynamically, shading as little as once per sixteen pixels or as often as eight times per pixel. And lastly, multi-view rendering (MVR) extends Pascal’s Single Pass Stereo (SPS) by rendering multiple views in one pass, even if the views are based on totally different origin positions or view directions. Both VRS and MVR are accessible to developers through the recently updated VRWorks Graphics SDK.
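The range of shading rates quoted above translates directly into shading work. The sketch below is plain arithmetic, not the VRWorks API: the 1920x1080 frame size is a hypothetical example, the function name is made up for illustration, and real applications typically mix rates across the frame rather than applying one rate everywhere.

```python
from fractions import Fraction

# The two extremes quoted in the text, expressed as shades per pixel.
COARSEST_RATE = Fraction(1, 16)  # one shade shared by sixteen pixels
FINEST_RATE = Fraction(8, 1)     # eight shades for every pixel


def shading_invocations(width, height, shades_per_pixel):
    """Number of shader evaluations needed to cover a frame at a uniform shading rate."""
    return int(width * height * shades_per_pixel)


width, height = 1920, 1080  # hypothetical frame size
for label, rate in (("coarsest", COARSEST_RATE), ("1:1", Fraction(1)), ("finest", FINEST_RATE)):
    print(f"{label:>8}: {shading_invocations(width, height, rate):,} shader evaluations")
```

For this frame the two extremes differ by a factor of 128: 129,600 evaluations at the coarsest rate versus 16,588,800 at the finest.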

VRWorks Graphics 3.1

For professional enterprise ISV application developers and game developers, the latest VRWorks Graphics features bring a new level of visual fidelity, performance and responsiveness to virtual reality. This new release brings enhancements in OpenGL and Vulkan to help developers get the most out of their Turing GPUs, as well as extended documentation and a few stability-specific enhancements. VRWorks Graphics 3.1 is available through the NVIDIA developer program.

If you are attending GTC 2019, be sure to see the session on Advances in Real-Time Automotive Visualisation. Chris O’Connor from ZeroLight will provide an overview of new techniques developed by ZeroLight and discuss the challenges of achieving state-of-the-art graphical quality in virtual reality. He will also explain how the team combined eye tracking with a technique called foveated rendering, which can be used to craft more lifelike VR experiences. The Vive Pro Eye takes advantage of foveated rendering through NVIDIA’s VRWorks Variable Rate Shading (VRS), tailoring the quality of a rendered image to where the user’s eyes are focused. For more information, check out our blog on how NVIDIA RTX and ZeroLight Push State of the Art in VR.
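The idea behind foveated rendering can be sketched as a rule that picks a coarser shading rate the farther a screen region is from the gaze point. This is an illustrative sketch only, not ZeroLight's technique or the VRWorks API: the function names, the normalized screen coordinates, and the two radius thresholds are all assumptions.

```python
import math


def pixels_per_shade(region_center, gaze, inner_radius=0.1, outer_radius=0.3):
    """Pick how many pixels share one shade, based on distance from the gaze point.

    Coordinates are normalized to the 0..1 range; the radii are hypothetical thresholds.
    """
    distance = math.hypot(region_center[0] - gaze[0], region_center[1] - gaze[1])
    if distance <= inner_radius:
        return 1   # full detail where the eye is focused
    if distance <= outer_radius:
        return 4   # one shade per 2x2 pixels in the near periphery
    return 16      # one shade per 4x4 pixels in the far periphery


def relative_shading_cost(region_centers, gaze):
    """Shading work relative to shading every region at full detail (1.0 = no savings)."""
    costs = [1 / pixels_per_shade(center, gaze) for center in region_centers]
    return sum(costs) / len(costs)


gaze_point = (0.5, 0.5)
regions = [(0.5, 0.5), (0.7, 0.5), (0.9, 0.9)]  # at, near, and far from the gaze point
print(relative_shading_cost(regions, gaze_point))
```

With eye tracking, the gaze point is updated every frame, so the full-detail region follows the user's eyes.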

Learn more about these new technologies at GTC 2019, where there are hundreds of sessions that will help you and your customers get the most out of the new NVIDIA Turing architecture.