The recent unveiling of Quake 2 RTX was a watershed moment for real-time computer graphics: it is the first fully path-traced game. Path tracing is an advanced rendering technique that uses ray tracing to simulate the physics of light in a scene. Quake 2 RTX shows off path tracing’s benefits, with complex lighting from hundreds of lights bringing breathtaking beauty to the twenty-one-year-old classic Quake 2. This article describes recent inventions by a team of NVIDIA researchers that aim to stretch the capabilities of real-time path tracing to highly complex scenes with thousands of dynamic light sources.

Path tracing is used ubiquitously for computer-generated movies and visual effects, and it delivers the photorealism that viewers have come to expect. Its advantage over other approaches: it’s a single unified algorithm that accurately simulates the behavior of light in almost all circumstances. In contrast, earlier lighting techniques are specialized to individual effects (e.g., “shadows cast by smoke”).

One example of its generality is that path tracing supports a wide variety of types of light sources—from the sun and skylight, to spotlights, to volumetric lights such as fire, to broad diffuse area light sources. All of these have very different visual appearances, but are all handled in the same way when path tracing is used. Furthermore, path tracing supports a wide variety of types of reflection from surfaces—from shiny glossy surfaces like metal, to diffuse surfaces like matte paint, to specular surfaces like water and glass. This flexibility means that path tracing scales gracefully to highly complex content.

Path tracing also allows artists and content creators to light their CG scenes using the same expectations of how lighting works in the real world, supporting scenes with thousands to millions of individual light sources. In contrast, most current games only support shadows from a small number of dynamic light sources; additional lights must either be static or cast no shadows. Quake 2 RTX’s path tracing pushes that number up to a few hundred lights. By removing such limits, path tracing reduces the artist and engineering time (and therefore cost) required to create high-quality content.

And now we have NVIDIA’s Turing RTX GPUs, the first GPUs with ray tracing hardware acceleration, ushering in the era of ray and path tracing in games and of hardware-accelerated offline path tracing. With their launch, path tracing is available to real-time graphics. What’s left to do?

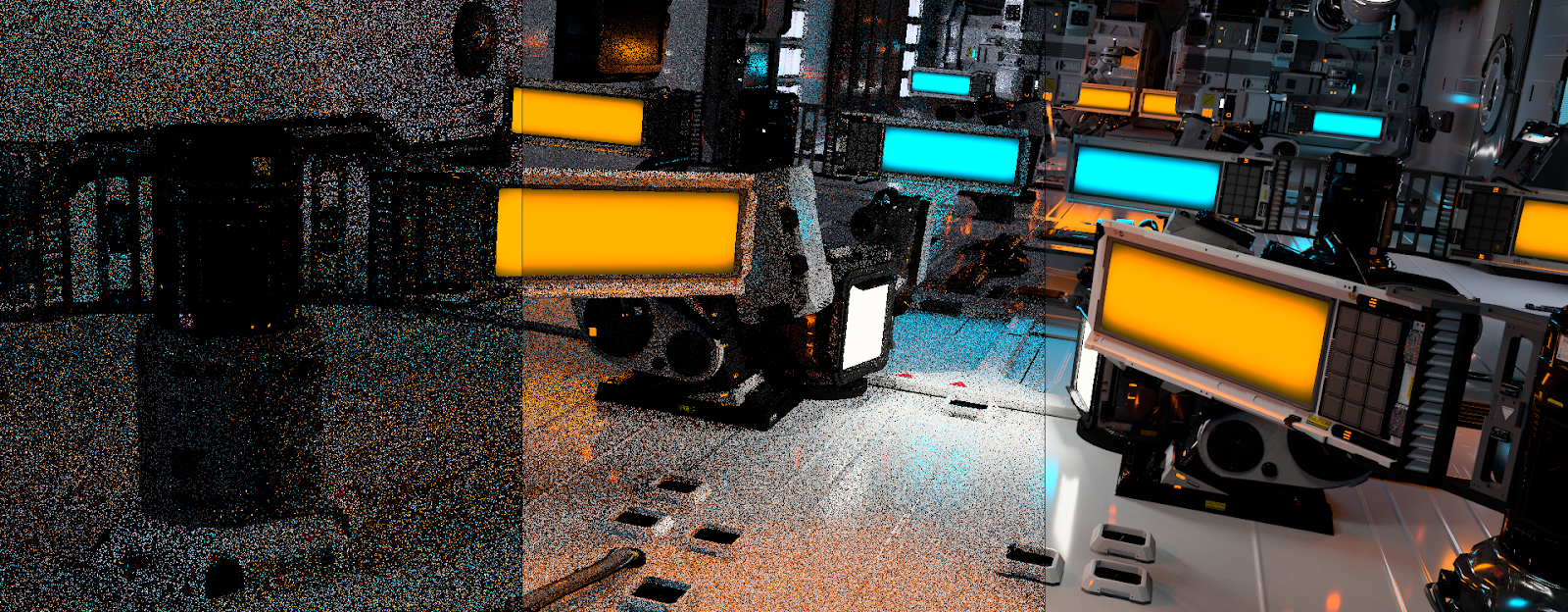

One problem: real-time renderers can’t afford to trace nearly as many rays as offline film-quality renderers, and path tracing typically requires many rays per pixel. RTX games shoot a handful of rays per pixel in the milliseconds available per frame; movies take minutes or hours per frame to shoot thousands of rays per pixel. With low ray counts, path tracing gives characteristically grainy images. Essentially, each pixel has a different slice of information about the scene’s lighting. Ray-traced games use sophisticated denoisers to remove this graininess, but path tracing complex dynamic content with a small number of rays presents additional denoising challenges.
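
To get a feel for why low ray counts look grainy, here is a minimal sketch in Python. It is a toy model, not a renderer: the 30% "ray reaches a light" probability and the function names are assumptions made for illustration. Each pixel averages a few random lighting samples, and the spread of the result across many identical pixels stands in for visible noise.

```python
import random
import statistics

TRUE_VALUE = 0.3  # toy assumption: 30% of rays from this pixel reach a light


def estimate_pixel(num_rays, rng):
    """Average `num_rays` random samples; each is 1.0 (lit) or 0.0 (blocked)."""
    lit = sum(1 for _ in range(num_rays) if rng.random() < TRUE_VALUE)
    return lit / num_rays


def pixel_noise(num_rays, num_pixels=2000, seed=1):
    """Standard deviation of the estimate across many identical pixels."""
    rng = random.Random(seed)
    estimates = [estimate_pixel(num_rays, rng) for _ in range(num_pixels)]
    return statistics.pstdev(estimates)


if __name__ == "__main__":
    for rays in (4, 64, 1024):
        print(rays, "rays per pixel -> noise", round(pixel_noise(rays), 4))
```

The noise shrinks only with the square root of the ray count, so halving the grain costs roughly four times as many rays. That is why a film renderer's budget of thousands of rays per pixel cannot simply be scaled down to a handful without extra help.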

Two key challenges to scaling real-time path tracing to more complex content are:

- Tracing the right rays: it’s important to choose each ray carefully, so that it gives as much new information as possible about the scene lighting.

- Denoising the image: even with well-chosen rays, the resulting image will be noisy. The renderer must also reuse lighting information from previous frames and apply image processing algorithms to eliminate the remaining noise. For this project, the researchers set a goal of using a single, unified denoising algorithm.
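
As a rough illustration of what reusing lighting information from previous frames can look like, the sketch below blends each new noisy frame into a running history with an exponential moving average. This is a common building block in real-time denoising generally, not a description of the researchers' denoiser; the function name `accumulate` and the blend factor `alpha` are assumptions for this example.

```python
import random


def accumulate(history, current, alpha=0.2):
    """Blend the new noisy frame into the running history, pixel by pixel.

    `alpha` is the weight of the new frame: small values give smoother
    results but react more slowly when the lighting changes.
    """
    if history is None:  # first frame: nothing to reuse yet
        return list(current)
    return [(1.0 - alpha) * h + alpha * c for h, c in zip(history, current)]


if __name__ == "__main__":
    rng = random.Random(7)
    history = None
    for frame in range(30):
        # Hypothetical 4-pixel image whose true value is 0.5 everywhere.
        noisy = [0.5 + rng.uniform(-0.5, 0.5) for _ in range(4)]
        history = accumulate(history, noisy)
    print([round(v, 3) for v in history])
```

The trade-off sits in `alpha`: the more history is kept, the smoother the image, but the longer stale lighting lingers when lights or geometry move quickly.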

With path tracing, rays need to find their way to lights in the scene in order to model their illumination. It’s hard to choose the right rays for lighting in anything but the simplest scenes. Choosing the right rays is really hard in a scene with thousands of light sources. It’s really, really hard in a scene with thousands of moving light sources.

Therefore, the researchers decided to tackle a scene with thousands of moving light sources.
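
To make "choosing the right rays" concrete, here is a small Python sketch of the underlying idea, importance sampling: pick one light at random, then divide its contribution by the probability of having picked it. The scene, the unshadowed `power / distance²` contribution model, and all function names are assumptions for illustration. Both strategies below give the correct answer on average; they differ in how noisy a single sample is.

```python
import random


def contributions(lights, point):
    """Unshadowed contribution of each light: power / squared distance.

    Assumes no light sits exactly at `point`.
    """
    result = []
    for position, power in lights:
        d2 = sum((a - b) ** 2 for a, b in zip(position, point))
        result.append(power / d2)
    return result


def one_sample_estimate(contrib, probs, rng):
    """Pick light i with probability probs[i]; weight it by 1 / probs[i]."""
    i = rng.choices(range(len(contrib)), weights=probs)[0]
    return contrib[i] / probs[i]


def exact_mean_and_variance(contrib, probs):
    """Mean and variance of the one-sample estimator, by enumeration."""
    mean = sum(p * (c / p) for c, p in zip(contrib, probs))
    second_moment = sum(p * (c / p) ** 2 for c, p in zip(contrib, probs))
    return mean, second_moment - mean ** 2


# Hypothetical scene: three dim lights and one bright light.
LIGHTS = [((1.0, 0.0, 0.0), 1.0), ((2.0, 0.0, 0.0), 1.0),
          ((4.0, 0.0, 0.0), 1.0), ((0.0, 2.0, 0.0), 100.0)]
POINT = (0.0, 0.0, 0.0)

contrib = contributions(LIGHTS, POINT)
uniform = [1.0 / len(LIGHTS)] * len(LIGHTS)            # every light equally likely
total_power = sum(power for _, power in LIGHTS)
by_power = [power / total_power for _, power in LIGHTS]  # favor bright lights

if __name__ == "__main__":
    print("uniform :", exact_mean_and_variance(contrib, uniform))
    print("by power:", exact_mean_and_variance(contrib, by_power))
```

In this toy scene, favoring the bright light gives a much lower variance than picking uniformly, which is the sense in which a well-chosen ray carries more information. With thousands of moving lights, computing good probabilities quickly becomes the hard part.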

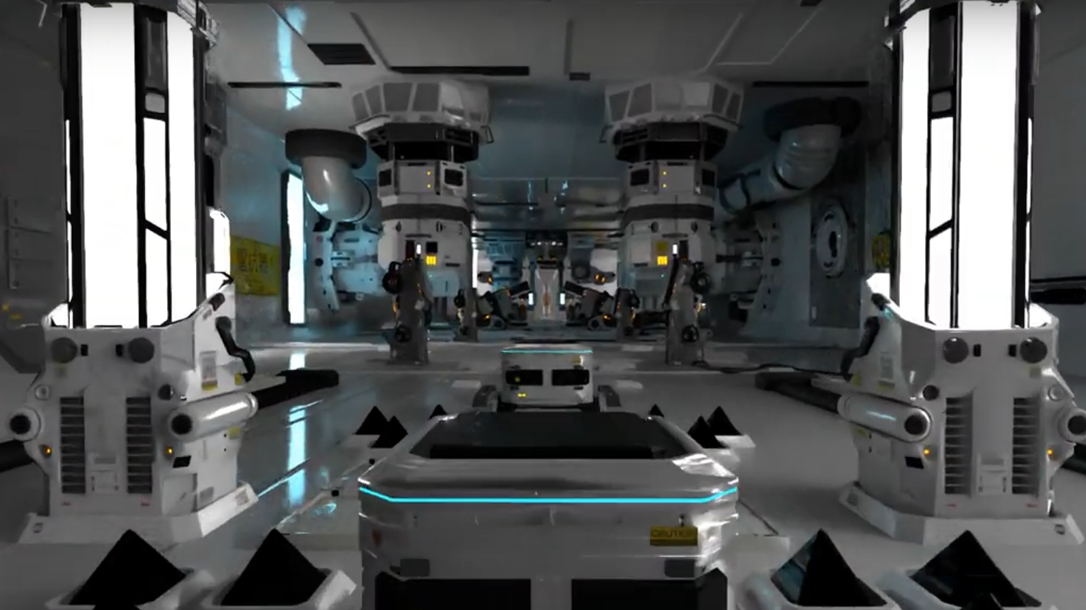

They set out to render scenes from a short film that had previously only ever been rendered using offline renderers. The short film, Zero Day, shown above, was created by artist Mike Winkelmann. It holds many challenges for real-time physically based rendering. The scenes in Zero Day are lit by 7,203 to 10,361 moving emissive triangles; there is a lot of fast-moving geometry (so the lighting changes a lot from frame to frame, which makes it hard to reuse information from previous frames); and there is a wide variety of material types.

This scene would be unimaginable to render accurately using rasterization-based lighting—rasterization just isn’t up to the task of computing visibility from so many moving lights.

The team published a paper based on their initial discoveries—retargeting an offline light importance sampling rendering solution to fit real-time rendering constraints. They showed that building a 2-level bounding volume hierarchy (BVH) over the lights (a “light BVH”) was an effective way to choose light sources to trace rays to. This paper was published earlier this month at the High Performance Graphics 2019 conference in Strasbourg, France.
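
The general idea of a hierarchy over lights can be sketched in a few lines of Python. This is a simplified, single-level illustration under stated assumptions, not the paper's two-level structure: the median split, the power-weighted node centers, and the `power / distance²` importance heuristic are all choices made for this example. Instead of evaluating every light, the sampler walks down a binary tree and picks a child at each node in proportion to its estimated importance.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    center: tuple                    # representative position of the lights below
    power: float                     # total emitted power below this node
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    light_id: Optional[int] = None   # set on leaves only


def build(lights):
    """Build a tree from (light_id, position, power) tuples; powers must be > 0."""
    if len(lights) == 1:
        light_id, position, power = lights[0]
        return Node(position, power, light_id=light_id)
    lights = sorted(lights, key=lambda light: light[1][0])  # median split on x
    mid = len(lights) // 2
    left, right = build(lights[:mid]), build(lights[mid:])
    power = left.power + right.power
    center = tuple((left.power * a + right.power * b) / power
                   for a, b in zip(left.center, right.center))
    return Node(center, power, left, right)


def importance(node, point):
    """Assumed heuristic: total power over squared distance to the node center."""
    d2 = sum((a - b) ** 2 for a, b in zip(node.center, point))
    return node.power / max(d2, 1e-4)


def sample_light(root, point, rng):
    """Walk from the root to one leaf; return (light_id, selection probability)."""
    node, prob = root, 1.0
    while node.light_id is None:
        w_left = importance(node.left, point)
        w_right = importance(node.right, point)
        p_left = w_left / (w_left + w_right)
        if rng.random() < p_left:
            node, prob = node.left, prob * p_left
        else:
            node, prob = node.right, prob * (1.0 - p_left)
    return node.light_id, prob
```

Each selection costs a number of steps proportional to the tree depth rather than to the number of lights, and the returned probability is what the light's contribution is divided by to keep the estimate unbiased.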

This light sampling algorithm was the first step, but the team found they needed further sampling improvements, and denoising proved challenging. The combination of shadows and reflections from thousands of fast-moving lights with shiny materials exceeded the capabilities of current real-time denoising algorithms. The team dug in and reinvented ray sampling algorithms and deep-learning image denoisers. Many ideas were tried; some worked, and some did not. In the end, they made breakthroughs in both ray sampling and denoising.

NVIDIA researchers revealed a new demo in the Open Problems in Real-Time Rendering SIGGRAPH course on Tuesday, July 30, 2019, in Los Angeles, rendering two scenes from the short film interactively with both direct lighting (4 paths per pixel, 9 rays per pixel) and 1-bounce path tracing (4 paths per pixel, 17 rays per pixel). The demo sets a new standard for interactive path tracing, and further inventions are rapidly improving performance and quality.

To the best of our knowledge, this is the first time these scenes have been rendered interactively, and the researchers invite others to join them in reinventing physically based lighting for real-time rendering. To that end, they will soon release these two scenes in a real-time-compatible file format.