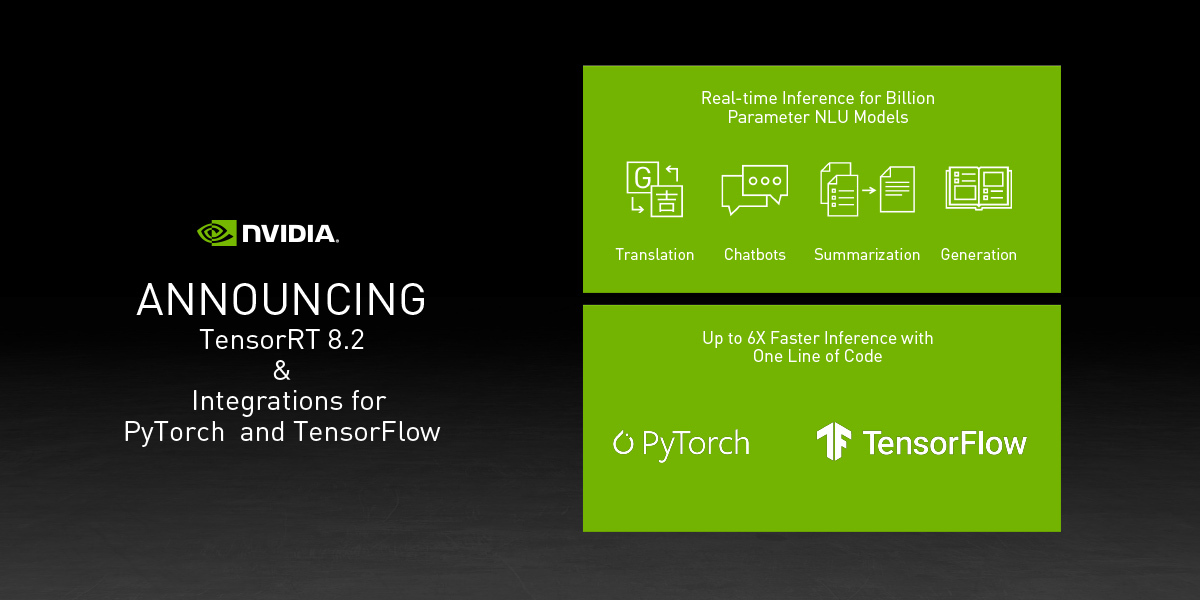

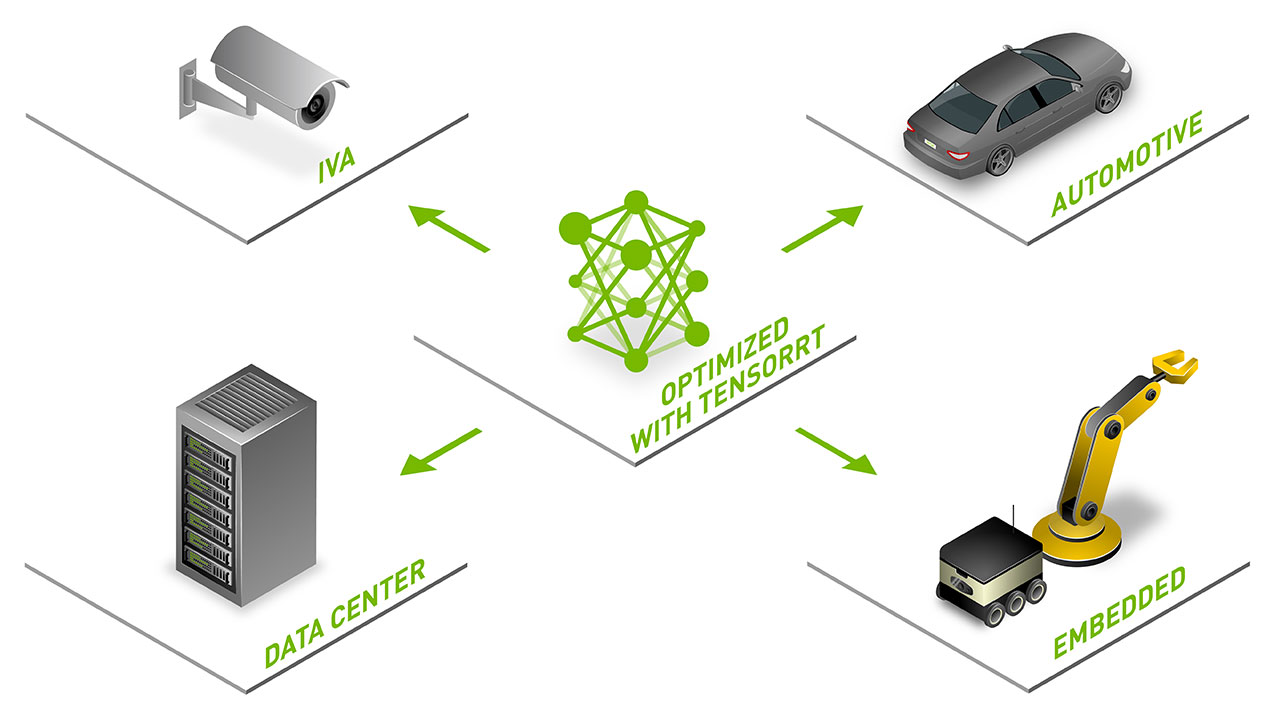

AT GTC Japan, NVIDIA announced the latest version of the TensorRT’s high-performance deep learning inference optimizer and runtime. Today we are releasing the TensorRT 5 Release Candidate. TensorRT 5 supports the new Turing architecture, provides new optimizations, and INT8 APIs achieving up to 40x faster inference over CPU-only platforms. This latest version also dramatically speeds up inference of recommenders, neural machine translation, speech, and natural language processing apps.

TensorRT 5 Highlights:

- Speeds up inference by 40x over CPUs for models such as translation using mixed precision on Turing Tensor Cores

- Optimizes inference models with new INT8 APIs

- Supports Xavier-based NVIDIA Drive platforms and the NVIDIA DLA accelerator for FP16

TensorRT 5 RC is available now to all members of the NVIDIA Developer Program.

Learn more>