By Abhinav Ayalur, Isaac Wilcove, Lynn Dang, Ricky Avina

The alarm is ringing. You smell smoke and see people running for the exit, but you don't follow them. Why? Because you're the fire marshal. As the fire spreads, it is your responsibility to make sure everyone gets out safely before you save yourself.

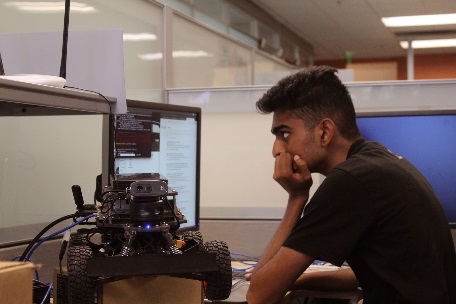

Recently, four high school interns on NVIDIA's Jetson team used neural networks to build two high-speed RACECARs that can do the job of a fire marshal. The RACECARs run several tasks at once, including steering, human detection, and navigation, at rates upwards of 5 Hz. Using Jetson TX1 and TX2 modules, the robots process these tasks in parallel. At speeds of up to 30 mph, the RACECARs can analyze their surroundings faster and more safely than a human can.

Multiple Networks. Two Cars. One Goal.

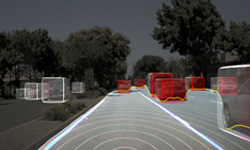

The RACECARs, named RaceX (left) and Epoch (right), have several sensors including an inertial measurement unit (IMU), a camera, and a LiDAR. The interns were able to use these sensors to fuel a custom multi-neural-network system that steers the car and detects people. These networks are brought together under a navigation structure that tells the car where to go and how to get there. All together, the whole system is autonomous.

The networks were trained on a Tesla P40 GPU and Quadro P5000 GPUs and deployed on a Jetson TX1 (Epoch) and a Jetson TX2 (RaceX). On Jetson, the networks run more than 30 times faster than on a CPU. Because Jetson's GPU processes work in parallel, the team was also able to run all four networks simultaneously: one large person-detection network and three smaller steering networks that work in tandem.
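
The following is a minimal Python sketch of running person detection and steering side by side in each control cycle. It is not the team's code: `detect_people` and `predict_turn` are hypothetical stand-ins for the real networks, and the positive-means-right steering convention is an assumption. Each cycle submits both calls to a thread pool, waits for both results, and then sleeps off whatever remains of the cycle budget (200 ms at 5 Hz). With pure-Python stand-ins the threads merely interleave because of the GIL; with real GPU inference, native libraries can release the GIL while the device works, which is where genuine overlap would come from.

```python
import concurrent.futures
import time

# Hypothetical stand-ins for the real networks; on the car these would be
# TensorRT / TensorFlow inference calls running on the Jetson GPU.
def detect_people(image):
    """Pretend person detector: returns a list of bounding boxes."""
    return [(10, 20, 50, 80)] if "person" in image else []

def predict_turn(lidar_scan, image):
    """Pretend steering stack: returns a turn value in [-1, 1] (positive = right, assumed)."""
    left, right = lidar_scan[0], lidar_scan[-1]
    # Steer toward the side with more free space.
    return max(-1.0, min(1.0, (right - left) / max(left + right, 1e-6)))

def run_tick(executor, lidar_scan, image):
    """Submit detection and steering together and wait for both results."""
    people_future = executor.submit(detect_people, image)
    turn_future = executor.submit(predict_turn, lidar_scan, image)
    return people_future.result(), turn_future.result()

def control_loop(frames, rate_hz=5.0):
    """Process (lidar_scan, image) frames at roughly rate_hz cycles per second."""
    period = 1.0 / rate_hz
    results = []
    with concurrent.futures.ThreadPoolExecutor(max_workers=2) as executor:
        for lidar_scan, image in frames:
            start = time.monotonic()
            results.append(run_tick(executor, lidar_scan, image))
            # Sleep for the rest of the cycle so the loop holds a steady rate.
            elapsed = time.monotonic() - start
            if elapsed < period:
                time.sleep(period - elapsed)
    return results
```

Calling `control_loop(frames, rate_hz=5.0)` returns one `(boxes, turn)` tuple per frame, so a downstream navigation layer can react to both outputs from the same cycle.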

The person-detection network was trained with the DetectNet framework in Caffe and deployed with TensorRT and cuDNN optimizations. The training data, more than 500 images of people, legs, and clothing, was processed through NVIDIA's DIGITS platform.

The steering networks were written in Keras and trained with a TensorFlow backend. To collect data, the team drove the car manually around buildings and logged the sensor readings together with the controller input. The sensor readings served as the training inputs and the controller inputs as the labels, so the trained model, given live sensor data, predicts the controller input it considers best for the current situation.
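
The core of this labeling step is pairing each logged sensor reading with the controller command that was active at that moment. The sketch below assumes both logs are lists of `(timestamp, value)` tuples sorted by time; the function name `pair_samples` and the `max_lag` cutoff are hypothetical and not taken from the team's repository.

```python
import bisect

def pair_samples(sensor_log, controller_log, max_lag=0.1):
    """Label each sensor reading with the latest controller command.

    sensor_log and controller_log are lists of (timestamp_seconds, value),
    each sorted by timestamp. Readings with no command issued within
    max_lag seconds before them are dropped.
    """
    command_times = [t for t, _ in controller_log]
    pairs = []
    for t, reading in sensor_log:
        # Index of the last command issued at or before this reading.
        i = bisect.bisect_right(command_times, t) - 1
        if i < 0:
            continue  # the driver had not issued any command yet
        cmd_time, command = controller_log[i]
        if t - cmd_time <= max_lag:
            pairs.append((reading, command))  # (input, label)
    return pairs
```

Binary search keeps the pairing at O(n log m) even for long driving sessions, and dropping stale pairs avoids labeling a reading with a command from well before it.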

The idea behind the steering networks is to combine inputs of different shapes into a single output: a turn value for steering. A small network processes LiDAR scans, a medium-sized network processes camera images, and a small concatenation network combines the outputs of those two networks into a single input. The output of this final concatenation network is the turn value sent to the motors, which lets the car drive autonomously. Jetson lets the team allocate enough GPU memory to run these networks nearly in parallel at upwards of 5 Hz.
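
The three-part structure can be illustrated with a small pure-Python forward pass. This is a toy sketch with untrained, randomly initialized weights and made-up layer sizes (4 LiDAR features, 8 camera features); the real networks were built in Keras and are much larger. It shows only how two branches with different input shapes are concatenated and reduced to one turn value, with `tanh` used here, as an assumption, to keep the output in [-1, 1].

```python
import math
import random

def dense(inputs, weights, biases, activation):
    """Fully connected layer: activation(W x + b) for each output unit."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def relu(x):
    return max(0.0, x)

def init_layer(rng, n_in, n_out):
    """Small random weights and zero biases (untrained placeholder values)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

class FusionSteering:
    """Two input branches feeding a concatenation head with one turn output."""

    def __init__(self, lidar_size, image_size, seed=0):
        rng = random.Random(seed)
        self.lidar_layer = init_layer(rng, lidar_size, 4)  # small LiDAR branch
        self.image_layer = init_layer(rng, image_size, 8)  # larger camera branch
        self.head_hidden = init_layer(rng, 4 + 8, 4)       # concatenation network
        self.head_out = init_layer(rng, 4, 1)

    def predict(self, lidar_scan, image_pixels):
        lidar_features = dense(lidar_scan, *self.lidar_layer, relu)
        image_features = dense(image_pixels, *self.image_layer, relu)
        fused = lidar_features + image_features  # list concatenation joins the features
        hidden = dense(fused, *self.head_hidden, relu)
        return dense(hidden, *self.head_out, math.tanh)[0]
```

In Keras, the same topology would typically be written with the functional API, joining the two branches with a `Concatenate` layer before the final dense layers.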

The four networks work together by reading from shared sensor buffers, which collect and format real-time scans directly from the sensors. This shared structure lets the robot make decisions and alert users if anything goes wrong.
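
One common way to build such a shared buffer is a lock-protected "latest value" slot per sensor: a driver thread overwrites it with every new scan, and each network reads the newest snapshot whenever it is ready. The sketch below is an assumption about how this could look, not the team's implementation; the `formatter` hook is a hypothetical stand-in for whatever per-sensor formatting the real buffers apply, and the sequence number lets a reader tell whether it has already processed a reading.

```python
import threading
import time

class SensorBuffer:
    """Holds the most recent reading from one sensor for many readers."""

    def __init__(self, formatter=None):
        self._format = formatter or (lambda reading: reading)
        self._lock = threading.Lock()
        self._reading = None
        self._stamp = None
        self._seq = 0

    def write(self, reading, stamp=None):
        """Called by the sensor driver thread for every new scan."""
        formatted = self._format(reading)  # format outside the lock
        with self._lock:
            self._reading = formatted
            self._stamp = time.monotonic() if stamp is None else stamp
            self._seq += 1

    def latest(self):
        """Return (sequence_number, timestamp, reading); seq 0 means no data yet."""
        with self._lock:
            return self._seq, self._stamp, self._reading
```

For example, a LiDAR buffer could clip ranges on write with `SensorBuffer(formatter=lambda scan: [min(r, 10.0) for r in scan])`. Readers never wait for a new scan; they always get the freshest one, which suits networks that run at different rates.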

Speed is the Name, Efficiency is the Game

Looking at the big picture, the platform's expandable design makes it future-proof and easy to use. Because the car is fast and can survey its surroundings, collecting data and training networks for new locations is simple. With custom auto-labeling tools, anyone can generate thousands of data samples in less than half an hour by driving the car around a building. Additional custom scripts then turn the collected data into a model, handling the sorting, formatting, and training automatically.
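
One step that conversion scripts like these usually include is shuffling the labeled samples and holding some out for validation. The helper below is a hypothetical example of that step, not part of the team's tooling; it uses a fixed seed so the split is reproducible, and the 20% validation fraction is an arbitrary default.

```python
import random

def split_dataset(samples, val_fraction=0.2, seed=42):
    """Shuffle labeled samples and split them into (train, validation) lists."""
    if not 0.0 <= val_fraction < 1.0:
        raise ValueError("val_fraction must be in [0, 1)")
    shuffled = list(samples)  # copy so the caller's list is left untouched
    random.Random(seed).shuffle(shuffled)
    n_val = int(len(shuffled) * val_fraction)
    return shuffled[n_val:], shuffled[:n_val]
```

Shuffling before splitting matters for driving logs, since consecutive samples are nearly identical; an unshuffled split would put whole stretches of one hallway into validation only.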

“Our principal task is optimization of time in terms of the evacuation of office personnel. We’ve made substantial headway in honing our network architecture for proper concurrent processing, which increases the efficiency of processing data,” says the team.

The Process: Next Steps

Development of the robot isn't finished yet. Before it is ready for real-world deployment, the code must be audited for bugs, the car must be extremely reliable, and the UI/UX for emergency responders needs a major overhaul.

By open-sourcing their code, the team hopes to get community feedback to fix lingering bugs, along with suggestions for improving their architecture. Ultimately, the purpose of this project is to save lives, and the team recognizes that this means fixing even the smallest deficiencies.

Visit the GitHub repo here.

Feel free to download the source code, explore the resources, and contribute to the Jetson community!