SIGGRAPH 2019 gets off to a great start next Sunday (July 28th), as NVIDIA hosts a series of talks about deep learning for content creation and real-time rendering.

The three-hour series will be packed with all-new insights and information. It’s a great opportunity to connect with and learn from leading engineers in the deep learning space. We hope you can join us at the talks; details are below.

NVIDIA Deep Learning Sessions at SIGGRAPH 2019

Deep Learning for Content Creation and Real-Time Rendering

Sunday, July 28, 2019

Room 501AB

2:00pm – 5:15pm

Introduction

Speaker: Don Brittain

Deep learning continues to gather momentum as a critical tool in content creation for both real-time and offline applications. Join NVIDIA’s research team to learn about some of the latest applications of deep learning to the creation of realistic environments and lifelike character behavior. Speakers will discuss deep learning technology and its applications to pipelines for film, games, and simulation.

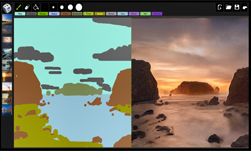

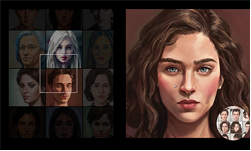

A Style-Based Generator Architecture for Generative Adversarial Networks

Speaker: Ming-Yu Liu

The speaker proposes an alternative generator architecture for generative adversarial networks, borrowing from the style transfer literature. The new architecture leads to an automatically learned, unsupervised separation of high-level attributes (e.g., pose and identity when trained on human faces) and stochastic variation in the generated images (e.g., freckles, hair), and it enables intuitive, scale-specific control of the synthesis. The new generator improves on the state of the art in terms of traditional distribution quality metrics, leads to demonstrably better interpolation properties, and better disentangles the latent factors of variation. To quantify interpolation quality and disentanglement, the speaker will propose two new, automated methods that are applicable to any generator architecture. Finally, the speaker will introduce a new, highly varied and high-quality dataset of human faces.

The Adjoint Method Applied to Deep Learning

Speaker: Jos Stam

In this talk, the speaker will present the adjoint method, a general technique for computing gradients of a function or a simulation. This method has applications in many fields, such as optimization and machine learning. One example is the popular backpropagation procedure in deep learning. Both the theory behind the technique and the practical implementation details will be provided. An adjoint version of the speaker’s well-known 100-line C fluid solver will be presented, along with some examples of controlling rigid body simulations. Novel applications of the continuous adjoint method in deep learning will also be mentioned.

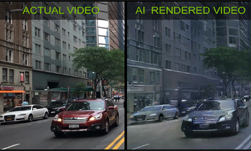

Deep Learning – Practical Considerations on the Workstation

Speaker: Chris Hebert

You may already use NVIDIA’s cuDNN library to accelerate your deep neural network inference, but are you getting the most out of it to truly unleash the tremendous performance of NVIDIA’s newest GPU architectures, Volta and Turing? In this talk, the speaker will discuss how to avoid the most common pitfalls in porting your CPU-based inference to the GPU and demonstrate best practices in a step-by-step optimization of an example network, including how to perform graph surgery to minimize computation and maximize memory throughput. Learn how to deploy your deep neural network inference in both the fastest and most memory-efficient way, using cuDNN and Tensor Cores, NVIDIA’s revolutionary technology that delivers groundbreaking performance in FP16, INT8 and INT4 inference on Volta and Turing. The speaker will also examine methods for optimization within a streamlined workflow when going directly from traditional frameworks such as TensorFlow to WinML via ONNX.

Deep Learning for Animation

Speaker: Simon Yuen

The speaker will dive into how NVIDIA began using deep learning to synthesize animation for human motion. The speaker will then describe what he has learned, the pros and cons of different techniques, and where he believes this technology might be heading in the future.

For the complete NVIDIA at SIGGRAPH schedule and the most recent updates, please refer to our SIGGRAPH 2019 schedule page.