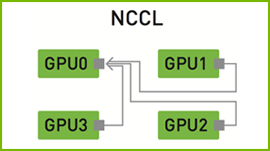

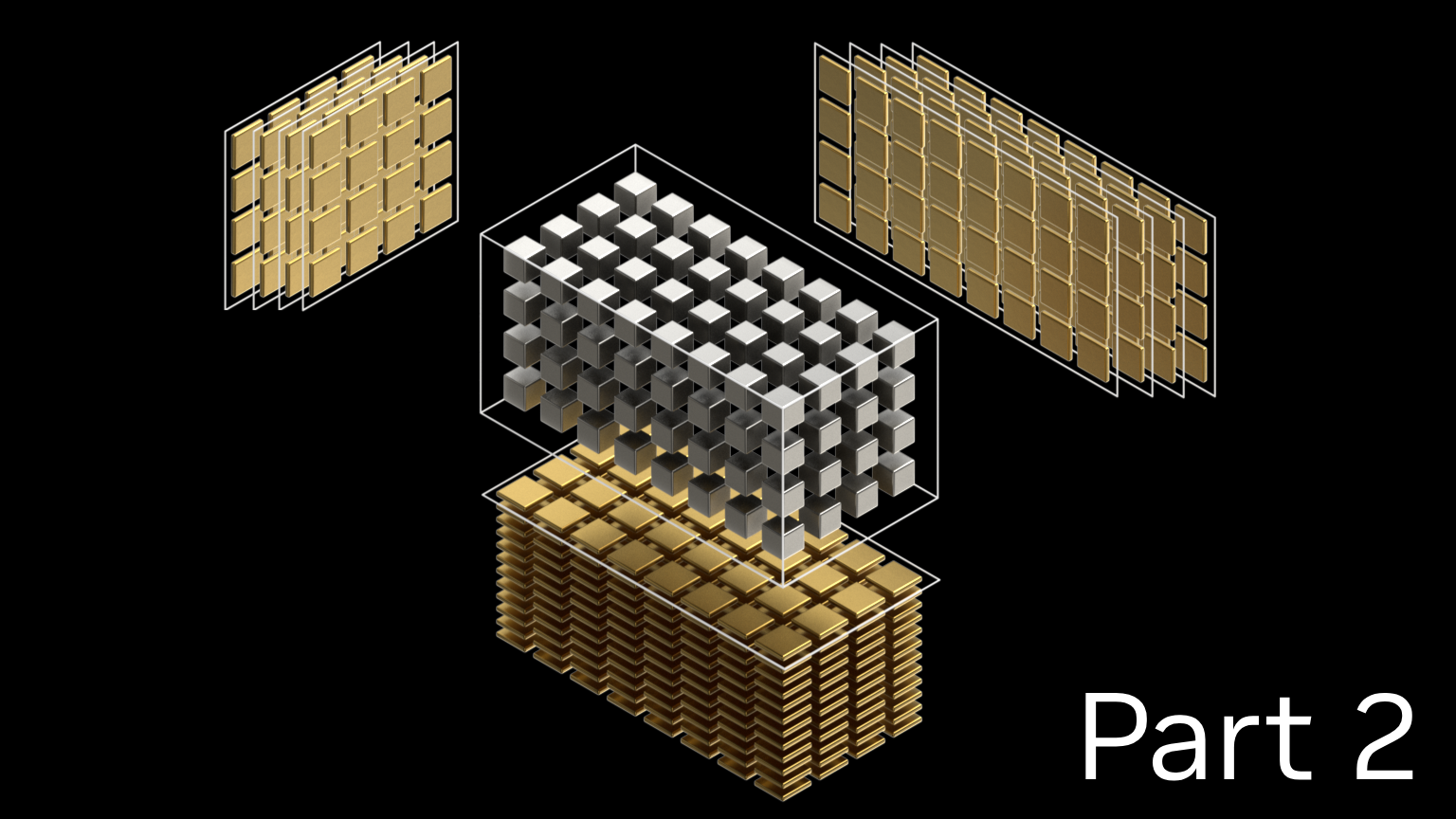

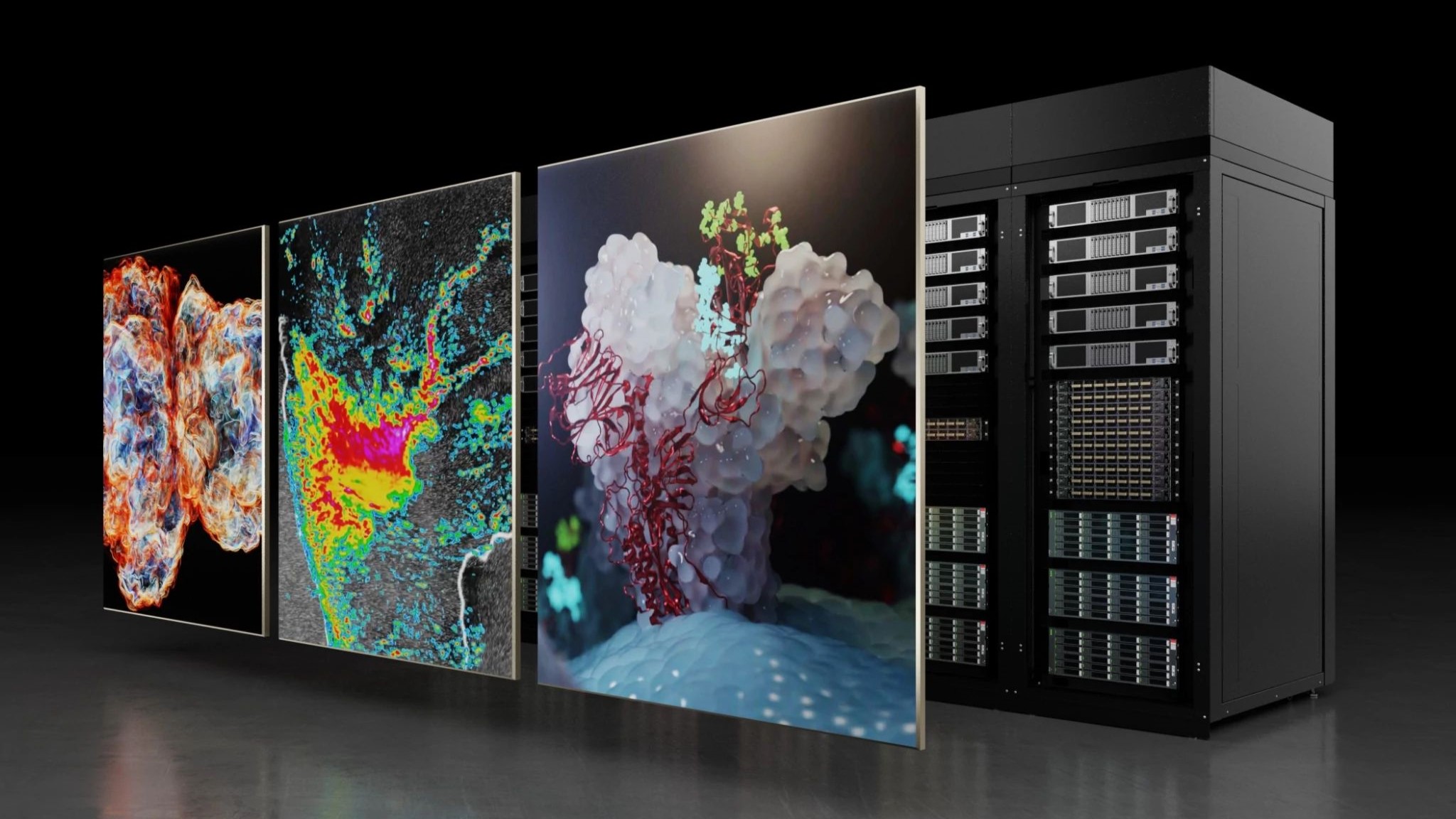

IBM Research unveiled a “Distributed Deep Learning” (DDL) library that enables cuDNN-accelerated deep learning frameworks like TensorFlow, Caffe, Torch and Chainer to scale to tens of IBM servers leveraging hundreds of GPUs.

“With the DDL library, it took us just 7 hours to train ImageNet-22K using ResNet-101 on 64 IBM Power Systems servers that have a total of 256 NVIDIA P100 GPU accelerators in them,” said Sumit Gupta, VP, HPC, AI & Machine Learning at IBM Cognitive Systems. “16 days down to 7 hours changes the workflow of data scientists. That’s a 58x speedup!”

According to the researchers’ paper, the team set records for both image recognition accuracy and training time when using the new library on 256 GPUs.

A technical preview of DDL is available in version 4 of IBM’s PowerAI enterprise deep learning software, which makes this cluster scaling feature available to any organization using deep learning for training their AI models.


Scaling TensorFlow and Caffe to 256 GPUs

Aug 08, 2017

