AI has been trained to drive cars, recognize faces, operate heavy machinery, and even detect disease, but can it act like a dog?

Researchers at the University of Washington, in partnership with the Allen Institute for AI, developed a deep learning system that learns to act and plan its movements like a dog.

Training a machine to act as a visually intelligent agent, in this case a dog, is an extremely challenging problem and a hard task to define, the researchers said.

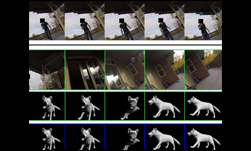

For the training, the researchers used an Alaskan Malamute named Kelp. They fitted her with a GoPro camera and six inertial measurement sensors on the legs, tail, and trunk. The researchers recorded hours of activities such as walking, following, fetching, interacting with other dogs, and tracking objects, in more than 50 different locations.

Using NVIDIA GeForce GTX 1080 GPUs, TITAN X GPUs, and the cuDNN-accelerated PyTorch deep learning framework, the researchers trained a neural network on hours of visual and sensor data recorded from Kelp.

“Dogs have a much simpler action space than, say, a human, making the task more tractable. However, they clearly demonstrate visual intelligence, recognizing food, obstacles, other humans and animals, and reacting to those inputs. Yet their goals and motivations are often unknown,” the researchers stated in their research paper.

The team said their system was able to predict a dog's future movements and, at the same time, plan movements similar to the dog's.

“Our evaluations show interesting and promising results. Our models can predict how the dog moves in various scenarios (act like a dog) and how she decides to move from one state to another (plan like a dog),” the researchers said.
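To make the prediction setup concrete, here is a minimal sketch of how a recording might be cut into training examples, where past camera frames are the input and the upcoming sensor readings are the movements to predict. It is an illustration only, not the researchers' pipeline: the `make_examples` function, the `Step` record layout, the window sizes, and the toy sensor values are all assumptions.

```python
from typing import List, Sequence, Tuple

# Hypothetical record: one video frame id paired with six sensor readings
# (the article mentions six inertial sensors; the values here are made up).
Step = Tuple[int, Tuple[float, ...]]


def make_examples(
    steps: Sequence[Step], past: int, future: int
) -> List[Tuple[List[int], List[Tuple[float, ...]]]]:
    """Slide a window over a recording.

    Each example pairs `past` consecutive frame ids (the input) with the
    sensor readings of the next `future` steps (the movements to predict).
    """
    examples = []
    for start in range(len(steps) - past - future + 1):
        frames = [frame for frame, _ in steps[start:start + past]]
        targets = [imu for _, imu in steps[start + past:start + past + future]]
        examples.append((frames, targets))
    return examples


# Toy recording: 6 time steps, each with 6 placeholder sensor values.
recording = [(t, tuple(float(t) for _ in range(6))) for t in range(6)]
examples = make_examples(recording, past=3, future=2)
```

In this sketch, a model would be trained to map each list of frames to the sensor readings that follow it. A recording shorter than `past + future` steps simply yields no examples.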

For future work, the team says they plan to collect more data from multiple dogs and to find ways to capture sound, touch, and smell.
