By Igor Tryndin, Xiaolin Lin, Eric Yuan, HaeJong Seo

As autonomous driving technology continues to advance, performance and functionality enhancements via software-defined AI are key to implementing the latest capabilities for safer, more efficient operation.

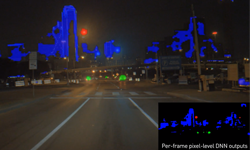

In the latest DRIVE Labs episode, we show how software-defined AI techniques can be used to significantly improve performance and functionality of our light source perception deep neural network (DNN) in a matter of weeks.

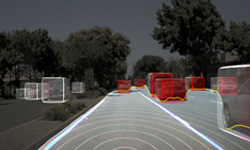

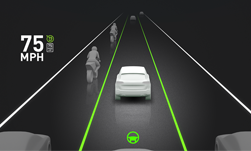

The first version of our light source perception DNN was designed to differentiate between vehicles with active lighting (e.g., headlights and taillights on) and vehicles with inactive lighting, and to use these results as an input signal for automatic low/high beam switching.

Thus, the first generation of this DNN provided functionality that is similar to commercial fixed-function automatic high beam control modules designed specifically for this one task.

However, a key difference between the fixed-function design approach and the software-defined design of our light source perception DNN is that we are able to rapidly increase and improve the functionality of our features through software updates, with no changes needed to the underlying hardware and platform.

New and Improved DNN

In the latest version of our light source perception DNN, we used software-defined AI techniques to notably improve and expand the DNN’s capabilities. These latest capabilities include:

- Headlight and taillight detection with increased range: detecting the light sources (i.e., headlights and taillights) of vehicles such as cars, trucks, buses, lorries, and motorcycles. The detection range for vehicle lights has also been increased to above 700 m to align with the operation of laser-based headlights.

- Street light detection: detecting illumination from street lights as a separate light source class.

- Other lights: detecting illumination that does not come from street lights or vehicle lights as a separate “other lights” class. This class covers illumination from building lights, billboard lights, and other similar light sources.

These AI-based software-defined capabilities can significantly improve the performance and expand the functionality of in-car perception modules. For example, knowing that the scene contains illumination from several street lights could signal to the system that the road ahead is lit by street lamps and the ego car should switch its headlights to low beam mode. In some parts of the world, such behavior might even be required by regulations.
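As a rough illustration of how per-class detections could feed a beam decision, here is a minimal Python sketch. The class names, the `Detection` type, the `choose_beam` function, and the threshold of three street lights are all assumptions made for this example; they are not the logic used in the DRIVE software.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Detection:
    # Hypothetical label names: "vehicle_light", "street_light", "other_light"
    label: str
    distance_m: float


def choose_beam(detections, min_street_lights=3):
    """Return "low" or "high" from a list of light source detections.

    The rules and the min_street_lights threshold are illustrative
    assumptions, not values taken from the article.
    """
    counts = Counter(d.label for d in detections)
    # Any active vehicle light ahead: dip the beams to avoid glare.
    if counts["vehicle_light"] > 0:
        return "low"
    # Several street lights suggest the road ahead is already lit.
    if counts["street_light"] >= min_street_lights:
        return "low"
    # Building or billboard lights alone do not force low beam here.
    return "high"
```

Keeping street lights and other lights as separate classes is what makes the second rule possible: a fixed-function module that only sees "some light ahead" could not tell a lit road from a distant billboard.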

AI-Powered Infrastructure

A key aspect that enables rapid software-defined AI improvements is creating a comprehensive labeled data training set that can scale to future use cases. The labeling guidelines for the dataset are designed to be future-proof by capturing more information than is needed for just a single fixed-function feature. By rearranging and reprocessing data labels, an improved training dataset can be produced to expand and improve the DNN’s capabilities without costly relabeling efforts.
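The idea of reprocessing labels instead of relabeling can be sketched in a few lines of Python. The fine-grained label names, the two mapping tables, and the `remap_labels` helper below are hypothetical; they only show how one richly labeled dataset could be projected onto different class taxonomies.

```python
# Hypothetical mapping for a first-generation, two-class dataset.
V1_MAP = {
    "headlight_on": "active_vehicle",
    "taillight_on": "active_vehicle",
    "vehicle_lights_off": "inactive_vehicle",
}

# Hypothetical mapping for an expanded, three-class dataset.
V2_MAP = {
    "headlight_on": "vehicle_light",
    "taillight_on": "vehicle_light",
    "street_light": "street_light",
    "building_light": "other_light",
    "billboard_light": "other_light",
}


def remap_labels(annotations, mapping):
    """Project fine-grained annotations onto a target class set.

    Annotations whose label has no entry in the mapping are dropped,
    so the same raw labels can serve several DNN versions.
    """
    remapped = []
    for ann in annotations:
        target = mapping.get(ann["label"])
        if target is None:
            continue
        remapped.append({**ann, "label": target})
    return remapped


raw = [
    {"id": 1, "label": "headlight_on"},
    {"id": 2, "label": "street_light"},
    {"id": 3, "label": "vehicle_lights_off"},
]
v1_dataset = remap_labels(raw, V1_MAP)
v2_dataset = remap_labels(raw, V2_MAP)
```

Because the raw annotations already distinguish street lights from vehicle lights, moving from the first taxonomy to the second is a change to a mapping table rather than a new labeling campaign.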

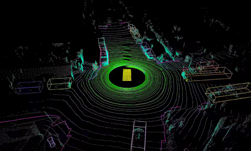

The DRIVE infrastructure enables fast, efficient development and iteration cycles: reshaping a DNN training dataset, redesigning the DNN model and loss functions, and finding optimal hyperparameters.

With this AI-powered infrastructure, many DNN training experiments or extensive hyperparameter searches can be launched efficiently in parallel. The high parallelism is achieved with multiple GPU nodes that support high data throughput, as well as tools for efficient management of large datasets.
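To make the parallel search pattern concrete, the following Python sketch runs a small grid search with a thread pool. It is only a stand-in: `run_experiment` returns a made-up loss instead of training a DNN, the grid values are arbitrary, and a local thread pool takes the place of the multi-node GPU scheduling described above.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def run_experiment(config):
    """Placeholder for one training run; returns a made-up validation loss.

    A real job would train the DNN on a GPU node and report a metric.
    """
    lr, batch = config["lr"], config["batch_size"]
    return (lr - 0.01) ** 2 * 1e4 + abs(batch - 64) / 64


def grid_search(grid, max_workers=4):
    """Evaluate every combination in the grid concurrently."""
    keys = list(grid)
    configs = [dict(zip(keys, values)) for values in product(*grid.values())]
    # Each configuration is independent, so the runs can proceed in parallel.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        losses = list(pool.map(run_experiment, configs))
    best_loss, best_config = min(zip(losses, configs), key=lambda pair: pair[0])
    return best_config, best_loss


best_config, best_loss = grid_search(
    {"lr": [0.001, 0.01, 0.1], "batch_size": [32, 64, 128]}
)
```

The property that matters is that experiments do not depend on one another, so throughput scales with the number of workers available, whether those are threads in this toy example or GPU nodes in a training cluster.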

The rapid development and iteration cycle of our DNN-based light source perception capabilities demonstrates the advantage of the software-defined, AI-enabled approach. In just a few weeks, we made significant advancements in the functionality and performance of our light source perception DNN, including more light source perception classes and a 4x increase in detection range. In a production system, these software-defined improvements could be quickly deployed to a consumer vehicle through an over-the-air software update.

Future improvements in our light source perception work will include features such as detection of flashing lights on emergency and service vehicles, and detection of specialized lighting, such as illumination used in construction zones.