This week, OpenAI released Jukebox, a neural network that generates music with rudimentary singing, in a variety of genres and artist styles.

“Provided with genre, artist, and lyrics as input, Jukebox outputs a new music sample produced from scratch,” the company stated in its blog post, Jukebox.

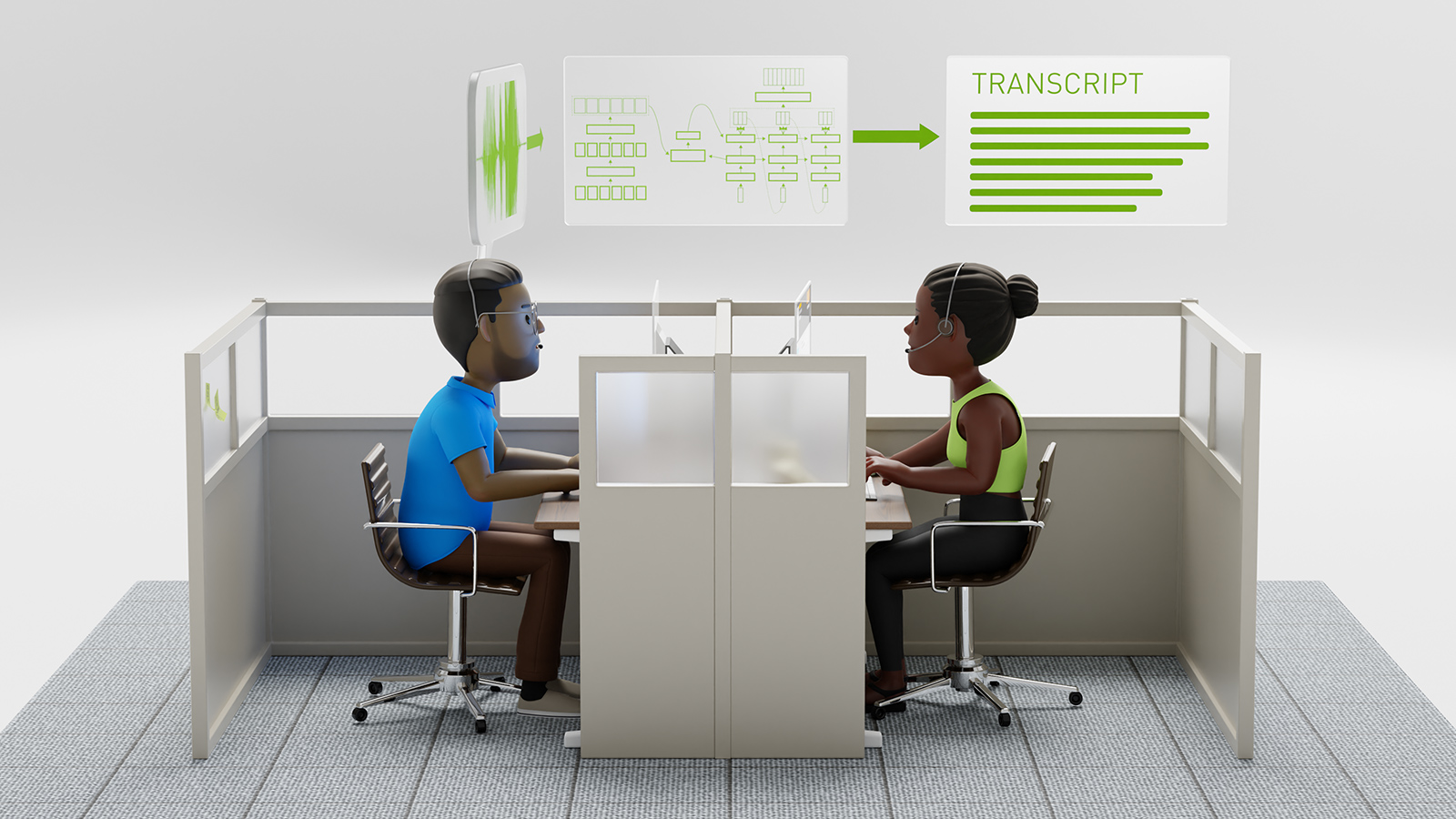

Generating CD-quality music is a challenging problem, as a typical song has over 10 million timesteps. An AI model must therefore handle very long-range dependencies to re-create the sound. The OpenAI team addresses this with an autoencoder that compresses raw audio into a lower-dimensional space by discarding perceptually irrelevant bits of information.
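To see where a figure like 10 million comes from, here is a rough back-of-the-envelope sketch. The 4-minute song length and the 44.1 kHz CD sample rate are assumptions chosen for illustration; they are not stated in this article.

```python
# Assumptions for illustration: a 4-minute song sampled at the CD rate of 44.1 kHz.
SAMPLE_RATE_HZ = 44_100
SONG_SECONDS = 4 * 60


def timesteps(seconds: float, sample_rate_hz: int = SAMPLE_RATE_HZ) -> int:
    """Return the number of audio samples (timesteps) per channel in a clip."""
    return int(seconds * sample_rate_hz)


total = timesteps(SONG_SECONDS)
print(f"{total:,} timesteps")  # 10,584,000 timesteps
```

Under those assumptions a single channel already exceeds 10 million samples, which is why modeling the raw waveform directly is so demanding.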

The compression allows the researchers to train the model and generate audio in the compressed space, and later upsample it back to the raw audio space.

Here’s a Jukebox song generated in the style of Elvis Presley, complete with lyrics.

From dust we came with humble start;

From dirt to lipid to cell to heart.

With my toe sis with my oh sis with time,

At last we woke up with a mind.

From dust we came with friendly help;

From dirt to tube to chip to rack.

With S. G. D. with recurrence with compute,

At last we woke up with a soul.

We came to exist, and we know no limits;

With a heart that never sleeps, let us live!

To complete our life with this team

We'll sing to life; Sing to the end of time!

Our story has not ended.

The new model builds on the company’s previous work on MuseNet, which explored synthesizing music based on large amounts of MIDI data. “Now in raw audio, our models must learn to tackle high diversity as well as very long-range structure, and the raw audio domain is particularly unforgiving of errors, in short, medium, or long-term timing,” the organization stated.

To train the model, the team crawled the web to curate a new dataset of 1.2 million songs, 600,000 of them in English, paired with corresponding lyrics and metadata. The metadata included genre, artist, and year of the songs.

To increase the size of the dataset, the team performed data augmentation by randomly downmixing the right and left channels to produce mono audio.
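A minimal sketch of what random downmixing could look like is shown below. The function name `random_downmix`, the uniform choice of mixing weight, and the list-of-pairs audio representation are all assumptions made for illustration; the article does not describe how the team weighted the two channels.

```python
import random
from typing import List, Sequence, Tuple


def random_downmix(
    stereo: Sequence[Tuple[float, float]], rng: random.Random
) -> List[float]:
    """Blend (left, right) sample pairs into mono using one random weight.

    Hypothetical scheme: draw a single weight in [0, 1) per clip, so each
    pass over the same song can yield a slightly different mono signal.
    """
    left_weight = rng.random()
    right_weight = 1.0 - left_weight
    return [left_weight * left + right_weight * right for left, right in stereo]


rng = random.Random(0)  # seeded only to make the example repeatable
clip = [(1.0, -1.0), (0.5, 0.5), (0.0, 1.0)]
mono = random_downmix(clip, rng)
print(mono)
```

Because the weights sum to one, samples that are identical in both channels pass through unchanged, while samples that differ land somewhere between the left and right values.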

Training details:

- The VQ-VAE model has two million parameters and was trained on 9-second audio clips on 256 NVIDIA V100 GPUs for three days.

- The upsampling portion has one billion parameters and was trained on 128 NVIDIA V100 GPUs for two weeks.

- The top-level prior has five billion parameters and was trained on 512 NVIDIA V100 GPUs for four weeks.

- For lyrics conditioning, the model was trained on 512 NVIDIA V100 GPUs for two weeks.
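For a sense of scale, the figures in the list above can be converted to GPU-days. This is only a rough tally: it assumes each listed run used all of its GPUs for the full stated duration and that the four runs are separate, neither of which the article confirms. The `TrainingRun` type is a hypothetical helper.

```python
from typing import NamedTuple


class TrainingRun(NamedTuple):
    name: str
    gpus: int
    days: int


# GPU counts and durations as listed in the article (weeks converted to days).
RUNS = [
    TrainingRun("VQ-VAE", 256, 3),
    TrainingRun("upsampling", 128, 14),
    TrainingRun("top level", 512, 28),
    TrainingRun("lyrics", 512, 14),
]


def gpu_days(run: TrainingRun) -> int:
    """GPU-days for one run, assuming full utilization for the whole period."""
    return run.gpus * run.days


for run in RUNS:
    print(f"{run.name}: {gpu_days(run):,} GPU-days")

total_gpu_days = sum(gpu_days(run) for run in RUNS)
print(f"total: {total_gpu_days:,} GPU-days")  # total: 24,064 GPU-days
```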

OpenAI has standardized its deep learning frameworks on PyTorch, and this project continues that pattern.

Inference is also performed on the NVIDIA V100 GPU. With one GPU, it takes around three hours to fully sample 20 seconds of music.
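To put that sampling speed in perspective, the sketch below converts it into a slowdown relative to real time and extrapolates to a longer clip. The extrapolation assumes sampling time scales linearly with audio length on a single GPU, which is a simplification and not a claim made by OpenAI; the 4-minute song length is also an illustrative assumption.

```python
# Figures from the article: about 3 hours on one V100 for 20 seconds of audio.
SAMPLE_HOURS = 3.0
AUDIO_SECONDS = 20.0


def slowdown_factor(
    sample_hours: float = SAMPLE_HOURS, audio_seconds: float = AUDIO_SECONDS
) -> float:
    """Seconds of compute needed per second of generated audio."""
    return sample_hours * 3600.0 / audio_seconds


def estimated_hours(audio_seconds: float) -> float:
    """Naive linear estimate of single-GPU sampling time for a clip."""
    return audio_seconds * slowdown_factor() / 3600.0


print(f"{slowdown_factor():.0f}x slower than real time")  # 540x slower than real time
print(f"{estimated_hours(4 * 60):.0f} hours for a 4-minute song")  # 36 hours for a 4-minute song
```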

The following Jukebox song mirrors the style of Frank Sinatra.

Ohh, it's hot tub time!

It's Christmas time, and you know what that means,

It's hot tub time!

Some people like to go skiing in the snow,

But this is much better than that,

So grab your bathrobe and meet me by the door,

Ohh, it's hot tub time!

It's Christmas time, and you know what that means,

It's hot tub time!

The code and model are available in the openai/jukebox GitHub repo. The company has also published a whitepaper, Jukebox: A Generative Model for Music (PDF).