This week at the annual Computer Vision and Pattern Recognition Conference in Salt Lake City, Utah, NVIDIA researchers won several awards and competitions for their work in furthering AI technologies.

The research teams, led by Jan Kautz, Bryan Catanzaro, and John Zedlewski, were recognized in the following competitions:

Robust Vision Challenge – Optical Flow Category: 1st Place

The focus of this challenge is on fostering vision systems that are robust and consequently perform well on a variety of datasets, the organizers said.

The team, comprising Deqing Sun, Xiaodong Yang, Ming-Yu Liu, and Jan Kautz, secured the top spot for its work on PWC-Net, a compact yet effective CNN model for optical flow.

“PWC-Net has been designed according to simple and well-established principles: pyramidal processing, warping, and the use of a cost volume,” the team said. “Cast in a learnable feature pyramid, PWC-Net uses the current optical flow estimate to warp the CNN features of the second image. It then uses the warped features and features of the first image to construct the cost volume, which is processed by a CNN to estimate the optical flow.”

The team says PWC-Net is 17 times smaller and easier to train than the recent FlowNet2 model.
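The warping and cost-volume steps in the team's description can be illustrated with a minimal NumPy sketch. This is not the PWC-Net implementation: the function names `warp_nearest` and `cost_volume`, the nearest-neighbor sampling, the search range `max_disp`, and the random toy feature maps are all assumptions made for illustration, whereas the actual model works on learned CNN features at several pyramid levels.

```python
import numpy as np

def warp_nearest(feat2, flow):
    """Warp second-image features (H, W, C) toward the first image.

    flow has shape (H, W, 2) holding (dx, dy) per pixel. Sampling is
    nearest-neighbor with border clamping (an illustrative simplification).
    """
    h, w, _ = feat2.shape
    ys, xs = np.mgrid[0:h, 0:w]
    src_x = np.clip(np.rint(xs + flow[..., 0]).astype(int), 0, w - 1)
    src_y = np.clip(np.rint(ys + flow[..., 1]).astype(int), 0, h - 1)
    return feat2[src_y, src_x]

def cost_volume(feat1, feat2_warped, max_disp=1):
    """Correlate each feat1 pixel with warped feat2 pixels within +/- max_disp.

    Returns an array of shape (H, W, (2 * max_disp + 1) ** 2); each channel
    is the mean dot product of feature vectors for one displacement.
    """
    h, w, _ = feat1.shape
    d = max_disp
    padded = np.pad(feat2_warped, ((d, d), (d, d), (0, 0)))  # zero padding
    costs = []
    for dy in range(-d, d + 1):
        for dx in range(-d, d + 1):
            shifted = padded[d + dy:d + dy + h, d + dx:d + dx + w]
            costs.append((feat1 * shifted).mean(axis=-1))
    return np.stack(costs, axis=-1)

# Toy data: the second "image" is the first one moved one pixel to the right.
rng = np.random.default_rng(0)
feat1 = rng.standard_normal((4, 6, 8))
feat2 = np.roll(feat1, 1, axis=1)
flow = np.zeros((4, 6, 2))
flow[..., 0] = 1.0  # current flow estimate: dx = +1 everywhere

warped = warp_nearest(feat2, flow)
cv = cost_volume(feat1, warped, max_disp=1)
print(cv.shape)  # (4, 6, 9)
```

In this toy case the flow estimate is exact, so away from the right border the warped features equal the first image's features, and the zero-displacement channel (index 4) holds each pixel's mean squared feature value.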

Workshop on Autonomous Driving (WAD) – Task 3: Domain Adaptation of Semantic Segmentation: 1st Place

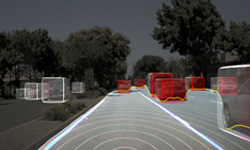

Domain adaptation concerns adapting a visual recognizer trained in one domain to a different domain. The two domains can differ in weather conditions (sunny vs. rainy), time of day, and geographic location (e.g., US vs. EU), which makes it challenging for models trained in one domain to work well in another. Because a self-driving car is expected to operate across many domains, domain adaptation is considered an important topic.

The NVIDIA team, comprising Aysegul Dundar, Zhiding Yu, Ming-Yu Liu, Ting-Chun Wang, John Zedlewski, and Jan Kautz, sought to adapt a semantic segmentation model trained on images captured in California to segment images captured in Beijing. The team combined techniques ranging from image-to-image translation to iterative self-training to achieve good domain adaptation performance.
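To give a rough idea of what one round of self-training can involve, the sketch below turns a model's per-pixel class probabilities on unlabeled target-domain images into pseudo-labels, keeping only confident pixels. This is a generic illustration, not the team's method: the function name `pseudo_labels`, the `IGNORE` value of 255, and the 0.9 confidence threshold are all hypothetical choices.

```python
import numpy as np

IGNORE = 255  # hypothetical label for pixels excluded from retraining

def pseudo_labels(probs, threshold=0.9):
    """Convert per-pixel class probabilities (H, W, num_classes) into labels.

    Pixels whose top probability is below the threshold are marked IGNORE,
    so that only confident predictions are reused as training targets.
    """
    labels = probs.argmax(axis=-1)
    confidence = probs.max(axis=-1)
    return np.where(confidence >= threshold, labels, IGNORE)

# One row of two pixels, two classes: the first is confident, the second is not.
probs = np.array([[[0.95, 0.05], [0.60, 0.40]]])
out = pseudo_labels(probs)
print(out.tolist())  # [[0, 255]]
```

In an iterative scheme, the model would be retrained on these pseudo-labels and the step repeated, typically on the assumption that confident predictions are more often correct.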

Workshop on Autonomous Driving (WAD) – Task 4: Instance-level Video Segmentation: 3rd Place

The goal of instance segmentation is to label each entity in a scene separately. The output is a labeling for every pixel in the video that describes which instance that pixel belongs to, differentiating not just between types of objects (cars, trees, buildings), but also between individual objects. This task has many applications in computer vision, but remains challenging, partially because of a lack of finely detailed ground truth labels with which to train models. The WAD challenge contains videos with many labeled instances per frame.

The NVIDIA entry, from Matthieu Le, Fitsum Reda, Karan Sapra, and Andrew Tao, used a Mask R-CNN-based neural network fine-tuned on the WAD instance-level dataset and trained on NVIDIA’s SaturnV cluster, which is built from Tesla V100 GPUs.
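The difference between labeling object types and labeling individual objects, as described for this task, can be seen in a tiny example. The arrays and label values below are made up for illustration and do not reflect the WAD dataset's actual encoding.

```python
import numpy as np

# Semantic map: one label per object type (0 = background, 1 = car).
semantic = np.array([[1, 1, 0, 1, 1],
                     [1, 1, 0, 1, 1]])

# Instance map: one label per individual object (0 = background).
instance = np.array([[1, 1, 0, 2, 2],
                     [1, 1, 0, 2, 2]])

n_classes = len(np.unique(semantic[semantic > 0]))
n_instances = len(np.unique(instance[instance > 0]))
print(n_classes, n_instances)  # 1 2
```

Both cars share the same semantic label, but the instance map tells them apart, and for video the same distinction is made for every pixel of every frame.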

CVPR 2018 “Best Paper Honorable Mention Award”

Yesterday, an NVIDIA team won the “Best Paper Honorable Mention Award” for their research paper “SPLATNet: Sparse Lattice Networks for Point Cloud Processing.”

The research describes a new deep learning-based architecture that processes point clouds without any pre-processing. A point cloud is a set of points, each defined by X, Y, and Z coordinates, that together represent a 3D object or scene. Point clouds are generally created by remote sensing or photogrammetry, the science of taking measurements from photographs.

The CVPR conference continues through Friday, June 22 at the Salt Palace Convention Center in Salt Lake City, Utah.
