NVIDIA DRIVE Software 10.0 Delivers New Intelligent Perception for Autonomous Vehicle Development

DRIVE Software 10.0 brings advanced perception capabilities and new sensor functionalities to the NVIDIA autonomous vehicle software stack.

DRIVE Software is open, allowing developers to integrate their own applications, and includes an array of redundant and diverse deep neural networks (DNNs) that power surround perception, localization, path planning and more.

With this latest release, developers have more DNNs, sensor plugins and applications at their fingertips for Level 2+ driving and beyond.

DRIVE Software 10.0 is now available for developers to download.

Meet the Latest DNN Capabilities

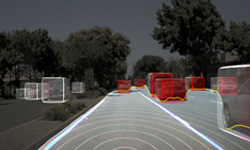

The DNNs in DRIVE Software cover everything from reading signs to identifying intersections to detecting driving paths, achieving comprehensive 360-degree perception.

DRIVE Software 10.0 includes enhanced intersection detection. The WaitNet DNN perceives intersections based on context. Starting with this release, WaitNet can perceive multiple intersections at a time. Plus, it supports traffic light and traffic sign detection and classification.

In addition to perceiving other cars and pedestrians on the road, DriveNet now includes temporal modeling. This allows the neural net to predict time to collision with objects on the road to help determine when to safely brake or accelerate.
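To make the idea of time to collision concrete, here is a minimal sketch based on a constant-velocity approximation. It is not how DriveNet produces its estimate (the release describes that as the result of temporal modeling inside the DNN); the function names and the 2-second braking threshold are hypothetical and chosen only for illustration.

```python
import math


def time_to_collision(distance_m: float, closing_speed_mps: float) -> float:
    """Constant-velocity time to collision in seconds.

    closing_speed_mps is the rate at which the gap shrinks (positive when
    approaching). Returns math.inf when the gap is not closing.
    """
    if distance_m < 0:
        raise ValueError("distance_m must be non-negative")
    if closing_speed_mps <= 0:
        return math.inf
    return distance_m / closing_speed_mps


def should_brake(distance_m: float, closing_speed_mps: float,
                 threshold_s: float = 2.0) -> bool:
    """Hypothetical policy: brake when TTC drops below threshold_s."""
    return time_to_collision(distance_m, closing_speed_mps) < threshold_s
```

For example, an object 30 m ahead with a closing speed of 10 m/s gives a time to collision of 3 s, which is above the assumed 2 s threshold; at 15 m the same closing speed gives 1.5 s and would trigger braking under this toy policy.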

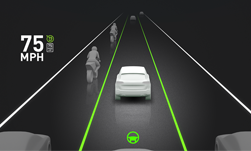

Finally, other DNNs have added features for even greater highway driving capability. PathNet now extends to highway on-ramps and off-ramps, while MapNet supports detection of lane edges for more robust lane centering. The new AutoHighBeamNet generates control signals for the vehicle’s high beam lights to increase nighttime visibility while avoiding glare for other road users.

Making Sense of Vehicle Sensors

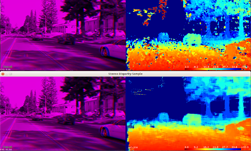

DRIVE Software 10.0 makes it easier to integrate new sensors into the DriveWorks sensor abstraction layer (SAL), with plugin support for additional sensor types.

The DriveWorks sensor plugin framework enables the incorporation of new AV sensors into the SAL, minimizing the development effort for features such as sensor lifecycle management, timestamp synchronization and sensor data replay. The framework now supports radar, GNSS and CAN sensors in addition to the existing lidar, IMU and GMSL camera plugins.
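As a rough illustration of what a plugin layer of this kind handles, the sketch below models lifecycle management (start and stop), timestamped packets and replay of recorded data. It does not reproduce the actual DriveWorks plugin interface; every name here (`Packet`, `SensorPlugin`, `ReplayPlugin`, `drain`) is hypothetical.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class Packet:
    """A timestamped chunk of sensor data (hypothetical type)."""
    timestamp_us: int
    payload: bytes


class SensorPlugin(ABC):
    """Hypothetical plugin contract: a lifecycle plus a read call."""

    @abstractmethod
    def start(self) -> None: ...

    @abstractmethod
    def stop(self) -> None: ...

    @abstractmethod
    def read(self) -> Optional[Packet]: ...


class ReplayPlugin(SensorPlugin):
    """Replays recorded packets in timestamp order."""

    def __init__(self, recorded: Iterable[Packet]) -> None:
        self._recorded = sorted(recorded, key=lambda p: p.timestamp_us)
        self._cursor = 0
        self._running = False

    def start(self) -> None:
        self._cursor = 0
        self._running = True

    def stop(self) -> None:
        self._running = False

    def read(self) -> Optional[Packet]:
        # Return None when stopped or when the recording is exhausted.
        if not self._running or self._cursor >= len(self._recorded):
            return None
        packet = self._recorded[self._cursor]
        self._cursor += 1
        return packet


def drain(plugin: SensorPlugin) -> List[Packet]:
    """Start the plugin, read until it is exhausted, and always stop it."""
    plugin.start()
    try:
        packets = []
        while (packet := plugin.read()) is not None:
            packets.append(packet)
        return packets
    finally:
        plugin.stop()
```

Because the consumer (`drain`) only depends on the abstract contract, a live radar or CAN source could in principle be swapped for the replay source without changing downstream code, which is the kind of decoupling a sensor abstraction layer is meant to provide.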

The DriveWorks SDK also includes an ever-expanding collection of accelerated algorithmic modules for computer vision that are compatible with the SAL. These modules are designed to be open and easy to integrate, abstracting away the details of the underlying DRIVE AGX hardware engines and sensor specifics.

This release includes new image processing modules for scaling and pose estimation as well as hardware acceleration support for pyramid filtering and stereo modules to develop robust camera pipelines.
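For readers unfamiliar with image pyramids, the following sketch builds one in plain Python by repeatedly halving the resolution. It is a simplified stand-in, not the DriveWorks module: it averages 2x2 blocks (a box filter) where production pipelines typically use a Gaussian filter, it runs on the CPU, and the names `downsample_2x` and `build_pyramid` are hypothetical.

```python
from typing import List

Image = List[List[float]]  # row-major grayscale image


def downsample_2x(image: Image) -> Image:
    """Halve width and height by averaging each 2x2 block."""
    rows, cols = len(image) // 2, len(image[0]) // 2
    return [
        [
            (image[2 * r][2 * c] + image[2 * r][2 * c + 1]
             + image[2 * r + 1][2 * c] + image[2 * r + 1][2 * c + 1]) / 4.0
            for c in range(cols)
        ]
        for r in range(rows)
    ]


def build_pyramid(image: Image, levels: int) -> List[Image]:
    """Return up to `levels` images, full resolution first."""
    pyramid = [image]
    for _ in range(levels - 1):
        current = pyramid[-1]
        # Stop early once the image can no longer be halved.
        if len(current) < 2 or len(current[0]) < 2:
            break
        pyramid.append(downsample_2x(current))
    return pyramid
```

Coarser levels let algorithms such as feature tracking or stereo matching search over large displacements cheaply before refining at full resolution.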

Look at the World From Every Angle

With DRIVE Software 10.0, autonomous vehicles can gain an even greater understanding of their surrounding world, as well as their exact location in it.

DRIVE Software 10.0 is the first release to open up our Localization API. Using high-definition maps and road landmarks, localization makes it possible for autonomous vehicles to pinpoint their location within centimeters. This precision enables cars to detect when a lane is forking or merging, plan lane changes and determine lane paths even when markings aren’t clear.

These are just a few of the latest features arriving with DRIVE Software 10.0, which is available now for developers to download.