At the GPU Technology Conference, NVIDIA announced new updates and software available to download for members of the NVIDIA Developer Program.

CUDA Toolkit

CUDA 9.2 includes updates to libraries, a new library for accelerating custom linear-algebra algorithms, and lower kernel launch latency. With CUDA 9.2, you can:

- Speed up RNNs and CNNs through cuBLAS optimizations

- Speed up FFTs of prime-size matrices through Bluestein kernels in cuFFT

- Accelerate custom linear algebra algorithms with CUTLASS 1.0

- Launch CUDA kernels faster than with CUDA 9, thanks to new optimizations in the CUDA runtime

Additionally, CUDA 9.2 includes bug fixes and supports new operating systems and popular development tools.
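To make the prime-size FFT item concrete, here is a minimal NumPy sketch of Bluestein's algorithm, the technique the cuFFT kernels are named after. It is not cuFFT's implementation; the function name `bluestein_dft` is hypothetical. The idea is to rewrite a length-n DFT as a convolution with a "chirp" sequence, which can then be evaluated with power-of-two FFTs even when n is prime.

```python
import numpy as np

def bluestein_dft(x):
    """DFT of arbitrary length n via Bluestein's chirp identity (illustrative only)."""
    x = np.asarray(x, dtype=complex)
    n = x.size
    k = np.arange(n)
    # Chirp w[k] = exp(-i*pi*k^2/n); k^2 is reduced mod 2n to keep the phase accurate.
    chirp = np.exp(-1j * np.pi * ((k * k) % (2 * n)) / n)
    # Pad to a power of two >= 2n-1 so circular convolution equals linear convolution.
    m = 1 << (2 * n - 2).bit_length()
    a = np.zeros(m, dtype=complex)
    a[:n] = x * chirp
    b = np.zeros(m, dtype=complex)
    b[:n] = np.conj(chirp)
    b[m - n + 1:] = np.conj(chirp[1:])[::-1]  # negative lags wrap to the end
    # Convolution theorem: multiply the power-of-two FFTs, then invert.
    conv = np.fft.ifft(np.fft.fft(a) * np.fft.fft(b))
    return chirp * conv[:n]

# Prime length 13: the result should agree with NumPy's own FFT.
signal = np.random.default_rng(0).standard_normal(13)
spectrum = bluestein_dft(signal)
```

The cost is three FFTs of a padded power-of-two length instead of one FFT of an awkward prime length, which is usually a good trade on hardware tuned for power-of-two sizes.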

Learn more >

NVIDIA Deep Learning SDK

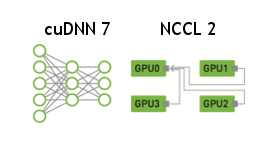

cuDNN

Deep learning frameworks using cuDNN 7 and later can leverage the new features and performance of the Volta architecture to deliver up to 3x faster training performance compared to Pascal GPUs.

cuDNN 7.1 highlights include:

- Automatically select the best RNN implementation with RNN search API

- Perform grouped convolutions of any supported convolution algorithm for models such as ResNeXt and Xception

- Train speech recognition and language models with projection layer for bi-directional RNNs

- Better error reporting with logging support for all APIs
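For readers unfamiliar with grouped convolutions, the following NumPy sketch shows what the operation computes. It does not use the cuDNN API; the helper names `conv2d` and `grouped_conv2d` are hypothetical, and the loops are written for clarity rather than speed. Input and output channels are split into groups, and each group of filters sees only its own slice of the input channels.

```python
import numpy as np

def conv2d(x, w):
    """Valid cross-correlation. x: (C_in, H, W), w: (C_out, C_in, kH, kW)."""
    c_out, _, kh, kw = w.shape
    _, h, wd = x.shape
    out = np.zeros((c_out, h - kh + 1, wd - kw + 1))
    for i in range(out.shape[1]):
        for j in range(out.shape[2]):
            patch = x[:, i:i + kh, j:j + kw]
            # Contract (C_in, kH, kW) of every filter against the patch.
            out[:, i, j] = np.tensordot(w, patch, axes=3)
    return out

def grouped_conv2d(x, w, groups):
    """Grouped convolution. w: (C_out, C_in // groups, kH, kW)."""
    assert x.shape[0] % groups == 0 and w.shape[0] % groups == 0
    x_groups = np.split(x, groups, axis=0)   # slices of input channels
    w_groups = np.split(w, groups, axis=0)   # matching slices of filters
    outputs = [conv2d(xg, wg) for xg, wg in zip(x_groups, w_groups)]
    return np.concatenate(outputs, axis=0)

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 5, 5))       # 4 input channels
w = rng.standard_normal((6, 2, 3, 3))    # 6 filters, each sees 4 // 2 = 2 channels
y = grouped_conv2d(x, w, groups=2)       # shape (6, 3, 3)
```

With two groups the layer holds half the weights of an ungrouped convolution with the same channel counts, which is the saving that architectures such as ResNeXt and Xception rely on.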

NCCL

NVIDIA Collective Communications Library (NCCL) offers multi-GPU and multi-node collective communication primitives for HPC and deep learning applications. It is used by leading deep learning frameworks such as Caffe2, Microsoft Cognitive Toolkit, MXNet, PyTorch and TensorFlow.

NCCL 2.2 delivers faster multi-GPU training of deep neural networks such as ResNet50 and other larger networks, with aggregated inter-GPU reduction operations. NCCL 2.2 will be available in May.
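As a rough illustration of why aggregating reductions helps, the sketch below simulates an all-reduce across "ranks" in plain NumPy and batches several small tensors into a single reduction. This is a conceptual model under stated assumptions, not NCCL's API or its actual aggregation mechanism; `all_reduce_sum` and `aggregated_all_reduce` are hypothetical names.

```python
import numpy as np

def all_reduce_sum(per_rank):
    """Simulated all-reduce: every rank ends up with the elementwise sum."""
    total = np.sum(per_rank, axis=0)
    return [total.copy() for _ in per_rank]

def aggregated_all_reduce(per_rank_tensors):
    """per_rank_tensors[r] is the list of arrays held by rank r.

    All tensors are packed into one flat buffer per rank, reduced with a
    single collective, then unpacked back into their original shapes.
    """
    shapes = [t.shape for t in per_rank_tensors[0]]
    sizes = [t.size for t in per_rank_tensors[0]]
    flat = [np.concatenate([t.ravel() for t in tensors])
            for tensors in per_rank_tensors]
    reduced = all_reduce_sum(flat)           # one collective instead of many
    offsets = np.cumsum(sizes)[:-1]
    return [[part.reshape(shape)
             for part, shape in zip(np.split(buf, offsets), shapes)]
            for buf in reduced]

# Two simulated ranks, each holding two gradient tensors.
rank0 = [np.ones((2, 2)), np.arange(3.0)]
rank1 = [2 * np.ones((2, 2)), np.arange(3.0)]
result = aggregated_all_reduce([rank0, rank1])
```

Issuing one collective for many small tensors amortizes the fixed per-call overhead, which matters most for networks with many small layers.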

Learn more >

TensorRT

Today we announced TensorRT 4 with capabilities for accelerating popular inference applications such as speech, recommendation systems, natural language processing (NLP) and neural machine translation.

With TensorRT 4, you also get an easy import path for popular deep learning frameworks such as Caffe2, MXNet, CNTK, PyTorch and Chainer through the ONNX format.

- 45x higher throughput vs. CPU with new layers for Multilayer Perceptrons (MLP) and Recurrent Neural Networks (RNN)

- 50x faster inference performance on V100 vs. CPU-only for ONNX models imported with ONNX parser in TensorRT

- Support for NVIDIA DRIVE Xavier – AI Computer for Autonomous Vehicles

- 3x inference speedup for FP16 custom layers with APIs for running on Volta Tensor Cores

Frameworks

TensorFlow

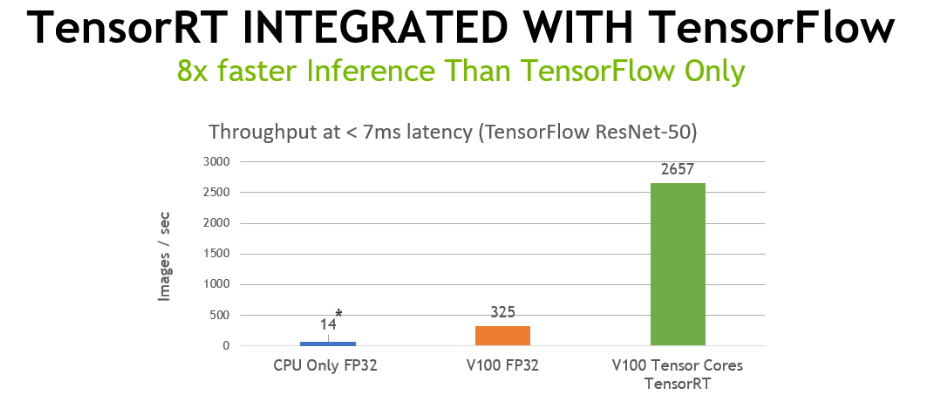

TensorFlow announced integration with NVIDIA’s TensorRT programmable inference accelerator. With TensorFlow 1.7, TensorFlow developers get the full power of NVIDIA GPUs, while TensorRT developers get an easy way to use TensorRT within TensorFlow. TensorFlow integrated with TensorRT performs deep learning inference 8x faster, with latency under 7 ms, compared to TensorFlow-only inference on GPUs.

Read more on the NVIDIA Developer Blog >

MATLAB

MathWorks, the maker of MATLAB, announced MATLAB and TensorRT integration through GPU Coder Toolbox. This helps engineers and scientists automatically generate CUDA code with TensorRT integration from MATLAB code. MATLAB users can now automatically deploy high-performance inference applications to Jetson, DRIVE and Tesla platforms.

Data Center Software

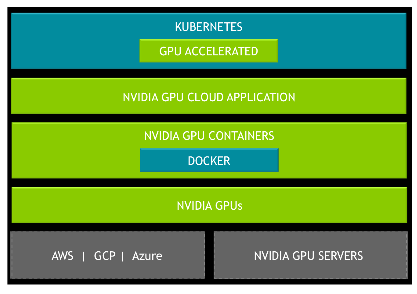

Kubernetes on NVIDIA GPUs

Kubernetes on NVIDIA GPUs enables enterprises to scale up training and inference deployment to multi-cloud GPU clusters seamlessly.

- Enable GPU support in Kubernetes using the NVIDIA device plugin

- Deploy applications to a heterogeneous GPU cluster in the cloud or datacenter

- Maximize GPU utilization using GPU sharing

- Monitor health and metrics from GPUs in Kubernetes using datacenter management tools such as NVML and DCGM
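As a sketch of what requesting a GPU through the device plugin looks like, the snippet below builds a pod manifest in Python and prints it as JSON, which `kubectl` accepts alongside YAML. The resource name `nvidia.com/gpu` is the one the NVIDIA device plugin advertises; the pod name, image name, and helper `gpu_pod_manifest` are hypothetical placeholders.

```python
import json

def gpu_pod_manifest(name, image, gpus=1):
    """Build a minimal pod spec that asks the scheduler for `gpus` NVIDIA GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "OnFailure",
            "containers": [{
                "name": name,
                "image": image,  # hypothetical image, replace with your own
                # GPUs are requested as an extended resource under `limits`.
                "resources": {"limits": {"nvidia.com/gpu": gpus}},
            }],
        },
    }

manifest = gpu_pod_manifest("cuda-job", "example.com/cuda-app:latest", gpus=1)
print(json.dumps(manifest, indent=2))
```

The scheduler then places the pod only on a node where the device plugin reports enough free GPUs.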

Kubernetes on NVIDIA GPUs will be available later this year. Please sign up on the product page to be notified when it becomes available.

Learn more >

Robotics

Isaac Robotics SDK

Unveiled at the GPU Technology Conference, the Isaac SDK is a collection of libraries, drivers, APIs and other tools. The SDK will save manufacturers, researchers, startups and developers hundreds of hours by making it easy to add AI into next-generation robots for perception, navigation and manipulation.

- The SDK also provides a framework to manage communications and transfer data within the robot architecture, and it makes it easy to add sensors, manage sensor data and control actuators in real time.

- Part of the SDK is Isaac Sim, a simulation environment for developing, testing and training autonomous machines in the virtual world. The algorithms trained in simulation are then deployed to NVIDIA Jetson for AI computing at the edge, bringing robots to life.

- NVIDIA also showed off the first reference design based on the Isaac SDK, dubbed Carter — for its handy ability to cart around objects. The delivery robot is on-site this week at GTC in the NVIDIA booth.

Developers can register for early access on the Isaac SDK product page.

Learn more >