Among the many knotty problems that AI can help solve, speech and natural language processing (NLP) represent areas poised for significant growth in the coming years.

Recently, a new language representation model called BERT (Bidirectional Encoder Representations from Transformers) was described by Google Research. According to the paper’s authors, “BERT is designed to pre-train deep bidirectional representations by jointly conditioning on both left and right context in all layers.”
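
The sketch below makes "conditioning on both left and right context" concrete. It contrasts the attention mask of a left-to-right language model, where each token sees only earlier tokens, with BERT-style bidirectional attention, where every token sees the whole sequence. The `attention_mask` function is a hypothetical helper for illustration, not code from the BERT repository. BERT also pairs this setup with a masked-language-model pretraining objective, which is not shown here.

```python
from typing import List


def attention_mask(seq_len: int, bidirectional: bool) -> List[List[int]]:
    """Hypothetical helper: mask[i][j] == 1 means token i may attend to token j."""
    return [
        # Left-to-right models only allow j <= i; bidirectional models allow every j.
        [1 if bidirectional or j <= i else 0 for j in range(seq_len)]
        for i in range(seq_len)
    ]


if __name__ == "__main__":
    print("Left-to-right (causal) mask:")
    for row in attention_mask(4, bidirectional=False):
        print(row)
    print("Bidirectional (BERT-style) mask:")
    for row in attention_mask(4, bidirectional=True):
        print(row)
```

The causal mask is lower-triangular. The bidirectional mask is all ones, so every layer can condition on context from both directions.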

This design lets the pretrained BERT representation serve as a backbone for a variety of NLP tasks, such as question answering and sentence classification, where it delivers state-of-the-art results after relatively lightweight fine-tuning.
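
Much of what makes fine-tuning "lightweight" is that a classification task in the BERT paper adds only one new output layer on top of the final hidden state of the special [CLS] token. The pretrained weights are then fine-tuned together with that layer. The plain-Python sketch below shows the forward pass of such a head. The function name, argument names, and toy sizes are illustrative assumptions, not code from the BERT repository.

```python
import math
from typing import List


def classification_head(cls_hidden: List[float],
                        weights: List[List[float]],
                        bias: List[float]) -> List[float]:
    """Return softmax(W h + b): class probabilities from the [CLS] hidden state.

    cls_hidden: final-layer hidden vector of the [CLS] token (length H)
    weights:    K rows of length H, one per output class (new, task-specific)
    bias:       K entries, one per output class (new, task-specific)
    """
    logits = [sum(w * h for w, h in zip(row, cls_hidden)) + b
              for row, b in zip(weights, bias)]
    peak = max(logits)  # subtract the max logit for a numerically stable softmax
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    # Toy sizes (H = 2, K = 2); real BERT hidden vectors are much larger.
    probs = classification_head([1.0, -1.0], [[0.5, 0.0], [0.0, 0.5]], [0.0, 0.0])
    print(probs)
```

Only `weights` and `bias` are new parameters, so the task-specific part of the model is tiny compared with the pretrained backbone.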

Using NVIDIA V100 Tensor Core GPUs, we’ve achieved a roughly 4x speedup over the baseline implementation. The speedup comes from a combination of optimizations: mixed-precision math on Tensor Cores, an optimized layer normalization, XLA kernel fusion, and larger batch sizes made possible by the V100’s 32GB of high-bandwidth memory.
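
The overall figure is consistent with the individual gains reported in this post: 2.3x from mixed precision, then an additional 9%, 18%, and 34%. This holds if each gain is measured relative to the previous step, so that the gains compound multiplicatively. That interpretation is an assumption. The short script below, built around a hypothetical `cumulative_speedups` helper, checks the arithmetic.

```python
from typing import List, Tuple

# Per-step speedups quoted in this post, in the order they were applied.
STEPS: List[Tuple[str, float]] = [
    ("Mixed precision on Tensor Cores", 2.30),
    ("Optimized layer norm (+9%)", 1.09),
    ("Batch size 8 -> 16 (+18%)", 1.18),
    ("XLA pointwise fusion (+34%)", 1.34),
]


def cumulative_speedups(steps: List[Tuple[str, float]]) -> List[Tuple[str, float]]:
    """Assume each factor is relative to the previous step, so the factors multiply."""
    total = 1.0
    result = []
    for name, factor in steps:
        total *= factor
        result.append((name, total))
    return result


if __name__ == "__main__":
    for name, total in cumulative_speedups(STEPS):
        print(f"{name:32s} {total:.2f}x cumulative")
```

Under that assumption, the final product is about 3.96x, which matches the roughly 4x headline number.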

The BERT network, as its full name suggests, builds on Google’s Transformer, an open-source neural network architecture based on a self-attention mechanism. NVIDIA 18.11 containers include optimizations for Transformer models running in PyTorch.
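
The self-attention mechanism at the core of the Transformer is scaled dot-product attention, softmax(QKᵀ/√d_k)V. Below is a minimal single-head sketch in plain Python. It omits the learned Q/K/V projections, multiple heads, masking, and dropout. The helper names are illustrative, and this is not the optimized implementation discussed in this post. The `softmax_rows` step corresponds to the attention softmax that appears later among the operations XLA fuses.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]


def softmax_rows(a: Matrix) -> Matrix:
    """Row-wise softmax, shifted by each row's max for numerical stability."""
    result = []
    for row in a:
        peak = max(row)
        exps = [math.exp(x - peak) for x in row]
        total = sum(exps)
        result.append([e / total for e in exps])
    return result


def self_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Single-head scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # (seq_len x seq_len) similarity scores
    scaled = [[s / math.sqrt(d_k) for s in row] for row in scores]
    weights = softmax_rows(scaled)    # every row of weights sums to 1
    return matmul(weights, v)


if __name__ == "__main__":
    # Self-attention: queries, keys, and values all come from the same tokens
    # (used directly here, without learned projections).
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    print(self_attention(tokens, tokens, tokens))
```

Each output row is a weighted average of the value rows, with weights determined by how strongly that token's query matches every key.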

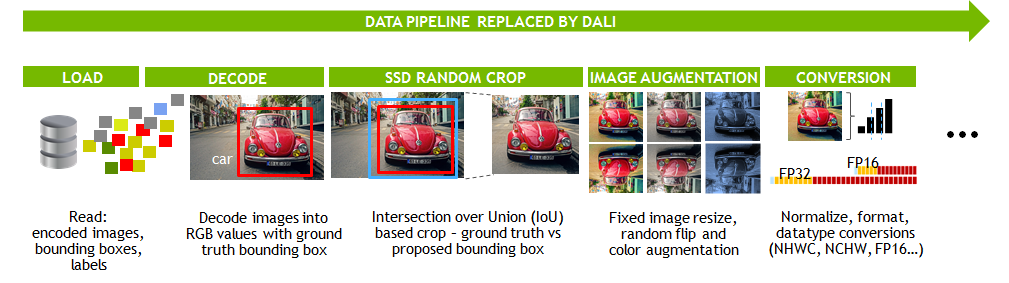

Figure 1 shows all the optimizations done to accelerate BERT.

System config: Intel Xeon E5-2698 v4 CPU with 256GB of system RAM and a single 32GB V100 Tensor Core GPU. Tests were run using the NVIDIA 18.11 TensorFlow container.

Next, I step through each of these optimizations and the improvements that they enabled.

The BERT GitHub repository started with an FP32 (single-precision) model, which is a good starting point for converging networks to a specified accuracy level. Converting the model to mixed precision on V100 Tensor Cores, which compute with FP16 inputs and accumulate in FP32, delivered the first speedup of 2.3x.
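
The benefit of accumulating in FP32 can be seen numerically. The sketch below emulates, in plain Python, a dot product whose inputs are rounded to FP16 and whose running sum is rounded to either FP16 or FP32 after every addition. It models only the rounding behavior, not how Tensor Cores are actually programmed, and the function names are hypothetical. With 10,000 small products, the FP16 accumulator stops growing once each product is too small relative to the running sum to change it. The FP32 accumulator lands close to 1.0.

```python
import struct
from typing import Callable, Sequence


def to_fp16(x: float) -> float:
    """Round to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_fp32(x: float) -> float:
    """Round to the nearest IEEE 754 single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def mixed_precision_dot(a: Sequence[float], b: Sequence[float],
                        accumulate: Callable[[float], float] = to_fp32) -> float:
    """Dot product with FP16 inputs whose running sum is rounded by `accumulate`."""
    acc = 0.0
    for x, y in zip(a, b):
        # An FP16 x FP16 product has at most 22 significant bits, so it is exact here.
        product = to_fp16(x) * to_fp16(y)
        acc = accumulate(acc + product)  # round the running sum after every add
    return acc


if __name__ == "__main__":
    a = b = [0.01] * 10_000  # exact dot product of the FP16-rounded inputs is ~1.0
    print("FP16 accumulation:", mixed_precision_dot(a, b, accumulate=to_fp16))
    print("FP32 accumulation:", mixed_precision_dot(a, b, accumulate=to_fp32))
```

Both runs use identical FP16 inputs. Only the precision of the accumulator differs, and that alone decides whether the result stalls well short of 1.0.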

Mixed precision on Tensor Cores gives developers the best of both worlds: the execution speed of lower precision, which yields significant speedups, and enough numerical precision to train networks to the same accuracy as higher-precision training. For more information, see Programming Tensor Cores in CUDA 9.

The next optimization added an optimized layer normalization (layer norm) operation. It builds on the existing cuDNN batch normalization primitive and netted an additional 9% speedup.
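
Layer norm normalizes each token's hidden vector across its features, whereas batch normalization normalizes each feature across the examples in a batch. Both end with a learned per-feature scale and shift. The plain-Python sketch below shows the layer norm computation for a single token. It illustrates the math only and is not the cuDNN-based kernel described above. The `eps` default is an assumed small constant.

```python
import math
from typing import List


def layer_norm(x: List[float], gamma: List[float], beta: List[float],
               eps: float = 1e-5) -> List[float]:
    """Layer norm over the features of a single token's hidden vector."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n  # population (biased) variance
    inv_std = 1.0 / math.sqrt(var + eps)
    # Normalize, then apply the learned per-feature scale (gamma) and shift (beta).
    return [g * (v - mean) * inv_std + b for v, g, b in zip(x, gamma, beta)]


if __name__ == "__main__":
    print(layer_norm([1.0, 2.0, 3.0], [1.0, 1.0, 1.0], [0.0, 0.0, 0.0]))
```

The final scale-and-shift line is a purely pointwise operation, the same kind of work that XLA later fuses.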

Next, doubling the batch size from its initial size of 8 to 16 increased throughput by another 18%.
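
Doubling the batch size does not double throughput, because each training step also takes longer. Assume throughput is measured in sequences per second, and let $T_B$ be throughput and $t_B$ step time at batch size $B$. The reported 18% gain then implies the following step-time ratio:

$$
\frac{T_{16}}{T_{8}} = \frac{16 / t_{16}}{8 / t_{8}} = 2\,\frac{t_{8}}{t_{16}} = 1.18
\quad\Longrightarrow\quad
\frac{t_{16}}{t_{8}} = \frac{2}{1.18} \approx 1.69
$$

In other words, under that assumption each step at batch size 16 takes roughly 1.69x as long as a step at batch size 8 but processes twice as many sequences.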

Finally, the team used XLA, TensorFlow’s deep learning compiler, to optimize the TensorFlow computations. XLA fused pointwise operations, generating optimized kernels that each replace several slower kernels. Some of the specific operations that saw speedups include the following:

- GELU activation function

- Scale and shift operation in layer norm

- Adam weight update

- Attention softmax

- Attention dropout

This optimization brought an additional 34% speedup. For more information about how to get the most out of XLA running on GPUs, see Pushing the limits of GPU performance with XLA.
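
The sketch below illustrates what fusing pointwise operations means by writing the GELU activation both ways in plain Python. It assumes the common tanh approximation of GELU. The unfused version mimics a chain of separate kernels, each of which materializes an intermediate tensor. The fused version does all of the arithmetic in a single pass, which is the kind of kernel XLA generates. This is a conceptual illustration, not XLA output. Python itself gains nothing from it; on a GPU the benefit comes from eliminating kernel launches and round trips through memory.

```python
import math
from typing import List

SQRT_2_OVER_PI = math.sqrt(2.0 / math.pi)


def gelu_unfused(xs: List[float]) -> List[float]:
    # Each line stands in for a separate kernel: it reads a full tensor and
    # writes a full intermediate result back to memory.
    cubed = [x ** 3 for x in xs]
    inner = [SQRT_2_OVER_PI * (x + 0.044715 * c) for x, c in zip(xs, cubed)]
    squashed = [math.tanh(i) for i in inner]
    return [0.5 * x * (1.0 + t) for x, t in zip(xs, squashed)]


def gelu_fused(xs: List[float]) -> List[float]:
    # One pass: each input is read once, all pointwise math stays local,
    # and only the final value is written out.
    out = []
    for x in xs:
        inner = SQRT_2_OVER_PI * (x + 0.044715 * x ** 3)
        out.append(0.5 * x * (1.0 + math.tanh(inner)))
    return out


if __name__ == "__main__":
    xs = [-2.0, -0.5, 0.0, 0.5, 2.0]
    print("unfused:", gelu_unfused(xs))
    print("fused:  ", gelu_fused(xs))
```

Both functions perform the same floating-point operations in the same order, so they return identical results. Only the number of passes over the data differs.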

A PyTorch implementation of BERT is also available on GitHub. Be sure to check out the recently released NVIDIA 18.11 container for TensorFlow. The work described here can be previewed in this public pull request to the BERT GitHub repository, and updates to the PyTorch implementation can be previewed in this public pull request.