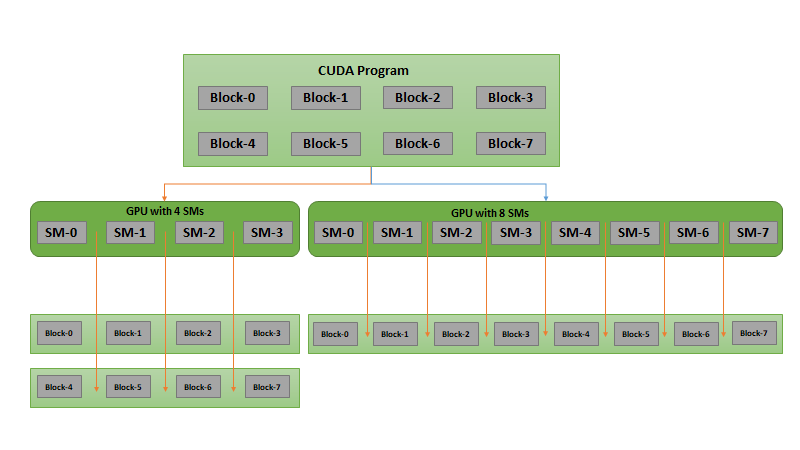

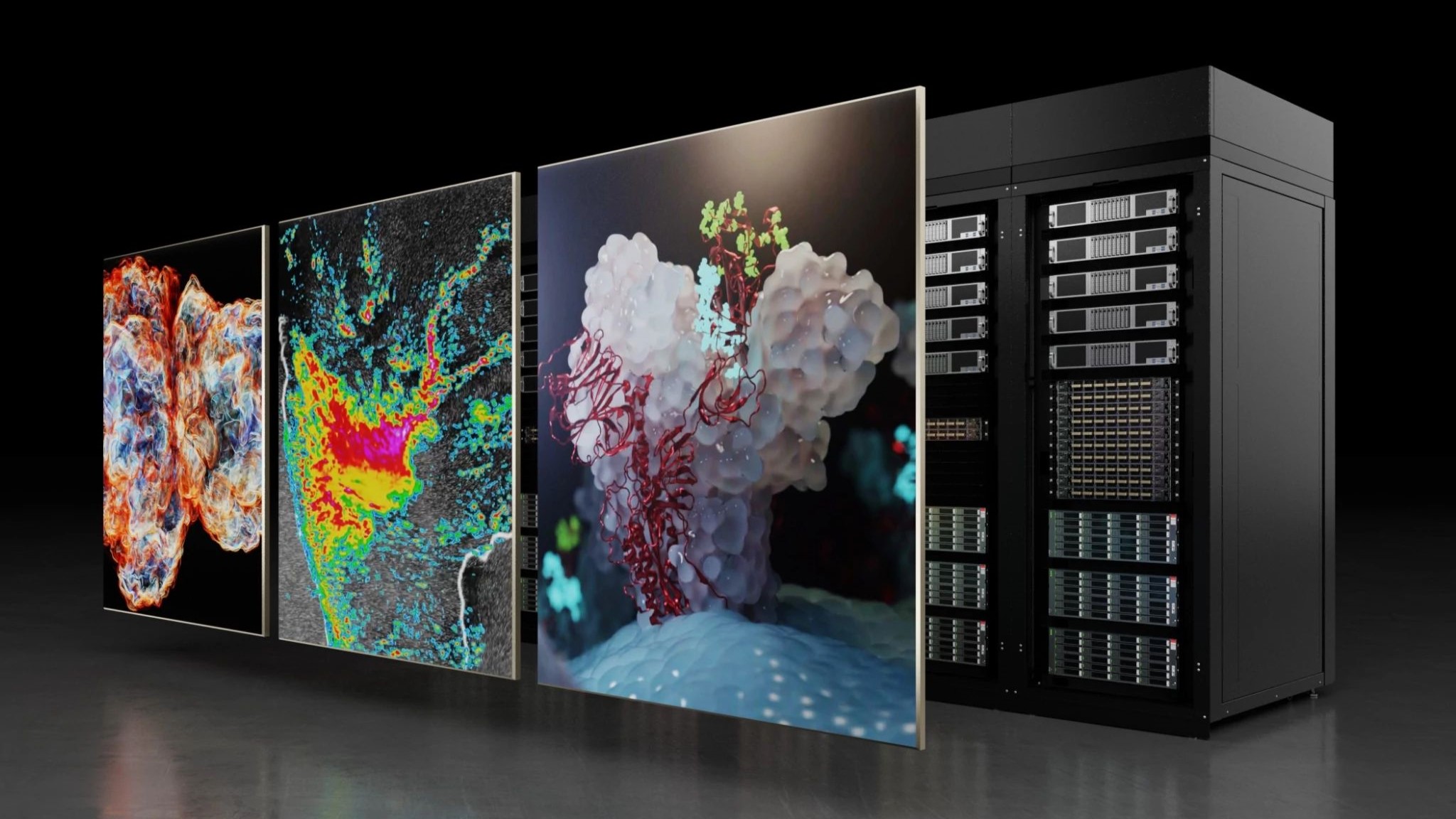

Computationally intensive CUDA C++ applications in high performance computing, data science, bioinformatics, and deep learning can be accelerated by using multiple GPUs, which can increase throughput and/or decrease total runtime.

When multiple GPUs are combined with the concurrent overlap of computation and memory transfers, computation can be scaled across the GPUs without increasing the cost of memory transfers.

For organizations and developers using multi-GPU servers, whether in the cloud or on NVIDIA DGX systems, these techniques enable you to achieve peak performance from GPU-accelerated applications.

The NVIDIA Deep Learning Institute (DLI) is offering instructor-led, hands-on training on how to write CUDA C++ applications that efficiently and correctly use all available GPUs in a single node. These techniques can dramatically improve the performance of your applications and make the most cost-effective use of systems with multiple GPUs.

By participating in this workshop, you’ll learn how to:

- Use concurrent CUDA Streams to overlap memory transfers with GPU computation.

- Scale workloads across all available GPUs on a single node.

- Combine the use of copy/compute overlap with multiple GPUs.

- Use the NVIDIA® Nsight™ Systems Visual Profiler timeline to identify improvement opportunities and observe the impact of the techniques covered in the workshop.
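To make the first three items concrete, here is a minimal sketch of copy/compute overlap combined with multiple GPUs. It is an illustration only, not workshop material: the kernel `scale`, the helper `run`, and the chunk and stream counts are hypothetical choices made for this example. Each GPU receives its own slice of a pinned host buffer, and each slice is split across several streams so that one chunk's transfer can overlap with another chunk's kernel.

```cuda
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>

// On failure, report the error and return 1 from the enclosing function.
#define CUDA_CHECK(call)                                              \
    do {                                                              \
        cudaError_t err_ = (call);                                    \
        if (err_ != cudaSuccess) {                                    \
            std::fprintf(stderr, "CUDA error: %s (line %d)\n",        \
                         cudaGetErrorString(err_), __LINE__);         \
            return 1;                                                 \
        }                                                             \
    } while (0)

// Hypothetical example kernel: multiply each element by a constant.
__global__ void scale(float* data, size_t n, float factor) {
    size_t i = blockIdx.x * static_cast<size_t>(blockDim.x) + threadIdx.x;
    if (i < n) data[i] *= factor;
}

int run() {
    int numGpus = 0;
    CUDA_CHECK(cudaGetDeviceCount(&numGpus));

    const int streamsPerGpu = 4;                 // assumed value for illustration
    const size_t chunk = 1 << 20;                // elements handled by one stream
    const size_t perGpu = chunk * streamsPerGpu; // elements handled by one GPU
    const size_t total = perGpu * numGpus;

    // Pinned (page-locked) host memory is required for truly asynchronous copies.
    float* host = nullptr;
    CUDA_CHECK(cudaHostAlloc(reinterpret_cast<void**>(&host),
                             total * sizeof(float), cudaHostAllocPortable));
    for (size_t i = 0; i < total; ++i) host[i] = 1.0f;

    std::vector<float*> dev(numGpus, nullptr);
    std::vector<cudaStream_t> streams(numGpus * streamsPerGpu);
    for (int g = 0; g < numGpus; ++g) {
        CUDA_CHECK(cudaSetDevice(g));            // allocations and streams belong to GPU g
        CUDA_CHECK(cudaMalloc(&dev[g], perGpu * sizeof(float)));
        for (int s = 0; s < streamsPerGpu; ++s)
            CUDA_CHECK(cudaStreamCreate(&streams[g * streamsPerGpu + s]));
    }

    // Enqueue copy-in, kernel, and copy-out for every chunk without blocking the host.
    const unsigned int threads = 256;
    const unsigned int blocks = static_cast<unsigned int>((chunk + threads - 1) / threads);
    for (int g = 0; g < numGpus; ++g) {
        CUDA_CHECK(cudaSetDevice(g));
        for (int s = 0; s < streamsPerGpu; ++s) {
            cudaStream_t st = streams[g * streamsPerGpu + s];
            float* d = dev[g] + s * chunk;
            float* h = host + g * perGpu + s * chunk;
            CUDA_CHECK(cudaMemcpyAsync(d, h, chunk * sizeof(float),
                                       cudaMemcpyHostToDevice, st));
            scale<<<blocks, threads, 0, st>>>(d, chunk, 2.0f);
            CUDA_CHECK(cudaGetLastError());
            CUDA_CHECK(cudaMemcpyAsync(h, d, chunk * sizeof(float),
                                       cudaMemcpyDeviceToHost, st));
        }
    }

    // Wait for all work on every GPU, then release per-GPU resources.
    for (int g = 0; g < numGpus; ++g) {
        CUDA_CHECK(cudaSetDevice(g));
        CUDA_CHECK(cudaDeviceSynchronize());
        for (int s = 0; s < streamsPerGpu; ++s)
            CUDA_CHECK(cudaStreamDestroy(streams[g * streamsPerGpu + s]));
        CUDA_CHECK(cudaFree(dev[g]));
    }

    // Every element started at 1.0f and was doubled exactly once.
    bool ok = true;
    for (size_t i = 0; i < total; ++i)
        if (host[i] != 2.0f) { ok = false; break; }
    CUDA_CHECK(cudaFreeHost(host));

    std::printf("%zu elements scaled on %d GPU(s): %s\n",
                total, numGpus, ok ? "OK" : "MISMATCH");
    return ok ? 0 : 1;
}

int main() { return run(); }
```

Assuming the file is saved as `multi_gpu.cu` (a hypothetical name), it can be built with `nvcc -o multi_gpu multi_gpu.cu` and profiled with `nsys profile ./multi_gpu` to inspect on the timeline how much overlap actually occurs. For brevity, the sketch does not free resources on the error paths.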

Classes are taught by DLI-certified instructors who are experts in their fields, delivering industry-leading technical knowledge to drive breakthrough results for organizations. You’ll have access to a GPU-accelerated server in the cloud and can earn an NVIDIA DLI certificate to demonstrate subject matter competency and accelerate your career growth.

Learn more and request this workshop for your organization.

Visit the NVIDIA DLI homepage for a full list of online self-paced and instructor-led training.