The NVIDIA Deep Learning Institute (DLI) has launched two new self-paced courses that give accelerated computing enthusiasts hands-on training in scaling workloads across multiple GPUs with CUDA C++ and in accelerating CUDA C++ applications with concurrent streams.

Each course gives you access to a GPU-accelerated server in the cloud and the opportunity to earn an NVIDIA DLI certificate that demonstrates subject-matter competency and supports your career growth.

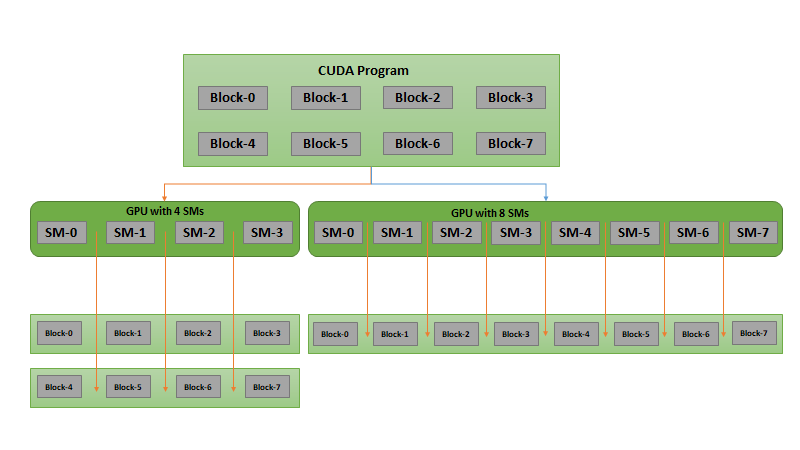

In the course Scaling Workloads Across Multiple GPUs with CUDA C++, you’ll learn to use multiple GPUs on a single node by:

- Launching kernels on multiple GPUs, each working on a subsection of the required work

- Using concurrent CUDA Streams to overlap memory copy with computation on multiple GPUs

Upon completion of this course, you will be able to build robust and efficient CUDA C++ applications that can leverage all available GPUs on a single node.
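
As a rough illustration of the two techniques listed above (this is not taken from the course material), the sketch below splits an array into one contiguous chunk per visible GPU and queues the copy in, the kernel, and the copy out on a per-GPU stream. The kernel `scale` and the helper `scale_on_all_gpus` are hypothetical names chosen for this example, and error checking on individual CUDA calls is left out for brevity.

```cuda
#include <cuda_runtime.h>
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical kernel for illustration: multiplies each element by `factor`.
__global__ void scale(float* data, size_t n, float factor) {
    size_t i = blockIdx.x * static_cast<size_t>(blockDim.x) + threadIdx.x;
    if (i < n) data[i] *= factor;
}

// Scales `host[0..n)` in place, giving each visible GPU one contiguous chunk.
// Returns the number of GPUs used, or 0 if no CUDA device is available.
// Assumption: per-call error checking is omitted to keep the sketch short.
int scale_on_all_gpus(float* host, size_t n, float factor) {
    int num_gpus = 0;
    if (cudaGetDeviceCount(&num_gpus) != cudaSuccess || num_gpus < 1) return 0;

    // Ceiling division so every element lands in exactly one chunk.
    size_t chunk = (n + num_gpus - 1) / num_gpus;
    std::vector<float*> dev(num_gpus, nullptr);
    std::vector<cudaStream_t> streams(num_gpus);

    // Phase 1: queue work on every GPU without waiting for any of them.
    for (int g = 0; g < num_gpus; ++g) {
        cudaSetDevice(g);                // later calls target GPU g
        cudaStreamCreate(&streams[g]);   // stream belongs to GPU g
        size_t lo = std::min(static_cast<size_t>(g) * chunk, n);
        size_t len = std::min(chunk, n - lo);
        if (len == 0) continue;          // more GPUs than elements
        size_t bytes = len * sizeof(float);
        cudaMalloc(&dev[g], bytes);
        cudaMemcpyAsync(dev[g], host + lo, bytes, cudaMemcpyHostToDevice, streams[g]);
        unsigned blocks = static_cast<unsigned>((len + 255) / 256);
        scale<<<blocks, 256, 0, streams[g]>>>(dev[g], len, factor);
        cudaMemcpyAsync(host + lo, dev[g], bytes, cudaMemcpyDeviceToHost, streams[g]);
    }

    // Phase 2: wait for each GPU to finish, then release its resources.
    for (int g = 0; g < num_gpus; ++g) {
        cudaSetDevice(g);
        cudaStreamSynchronize(streams[g]);
        cudaFree(dev[g]);                // freeing nullptr is a no-op
        cudaStreamDestroy(streams[g]);
    }
    return num_gpus;
}
```

Calling `scale_on_all_gpus(v.data(), v.size(), 2.0f)` on a `std::vector<float> v` should double every element regardless of how many GPUs the node has. For the copies to actually overlap with computation, the host buffer would need to be pinned (for example, allocated with `cudaMallocHost`); with ordinary pageable memory the result is still correct, but the transfers may not run asynchronously.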

In the course Accelerating CUDA C++ Applications with Concurrent Streams, you’ll learn to use CUDA Streams to perform copy/compute overlap in CUDA C++ applications by:

- Learning the rules and syntax governing the use of concurrent CUDA Streams

- Refactoring and optimizing an existing CUDA C++ application to use CUDA Streams and perform copy/compute overlap

- Using the NVIDIA® Nsight™ Systems Visual Profiler timeline to observe improvement opportunities and the impact of the techniques covered in this training

Upon completion, you will be able to build robust and efficient CUDA C++ applications that can leverage copy/compute overlap for significant performance gains.
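
To make copy/compute overlap concrete, here is a minimal sketch (again, not the course's own code) that divides one array among several streams on a single GPU, so that one chunk's transfer can proceed while another chunk's kernel runs. The kernel `square` and the helper `square_with_streams` are hypothetical names, and the sketch assumes the host buffer is pinned memory obtained from `cudaMallocHost`.

```cuda
#include <cuda_runtime.h>
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical kernel for illustration: squares each element in place.
__global__ void square(float* data, size_t n) {
    size_t i = blockIdx.x * static_cast<size_t>(blockDim.x) + threadIdx.x;
    if (i < n) data[i] *= data[i];
}

// Squares `pinned[0..n)` in place using `num_streams` non-default streams.
// Assumption: `pinned` was allocated with cudaMallocHost so that
// cudaMemcpyAsync can run asynchronously with respect to the host.
bool square_with_streams(float* pinned, size_t n, int num_streams) {
    if (num_streams < 1) return false;
    if (n == 0) return true;

    float* dev = nullptr;
    if (cudaMalloc(&dev, n * sizeof(float)) != cudaSuccess) return false;

    std::vector<cudaStream_t> streams(num_streams);
    for (auto& s : streams) cudaStreamCreate(&s);

    // Ceiling division: each stream handles one contiguous chunk.
    size_t chunk = (n + num_streams - 1) / num_streams;
    for (int s = 0; s < num_streams; ++s) {
        size_t lo = std::min(static_cast<size_t>(s) * chunk, n);
        size_t len = std::min(chunk, n - lo);
        if (len == 0) continue;
        size_t bytes = len * sizeof(float);
        // Operations within one stream run in order; different streams
        // are free to overlap with each other.
        cudaMemcpyAsync(dev + lo, pinned + lo, bytes, cudaMemcpyHostToDevice, streams[s]);
        unsigned blocks = static_cast<unsigned>((len + 255) / 256);
        square<<<blocks, 256, 0, streams[s]>>>(dev + lo, len);
        cudaMemcpyAsync(pinned + lo, dev + lo, bytes, cudaMemcpyDeviceToHost, streams[s]);
    }

    // Wait for all streams, then report whether anything failed.
    cudaError_t err = cudaDeviceSynchronize();
    for (auto& s : streams) cudaStreamDestroy(s);
    cudaFree(dev);
    return err == cudaSuccess;
}
```

Whether the chunks actually overlap depends on the hardware and the chunk sizes, so a profiler timeline such as the one in Nsight Systems is the practical way to check: overlapping chunks appear as copy and kernel bars that run side by side rather than end to end.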

During the Supercomputing 2020 conference, DLI is offering a free enrollment code to the first 200 accelerated computing enthusiasts who want to take these new courses. Fill out this form to get your unique enrollment code. Codes will be emailed the week of November 23, 2020.

Visit the NVIDIA DLI homepage for a full list of online self-paced and instructor-led training.