From powering personal assistant applications to helping people with disabilities speak, voice and speech recognition is one of the most researched areas in AI. Last week, researchers from MIT announced a breakthrough: a deep learning-based wearable device that can transcribe words people internally verbalize but do not actually speak out loud.

“Our idea was: Could we have a computing platform that’s more internal, that melds human and machine in some ways and that feels like an internal extension of our own cognition?” Arnav Kapur, the MIT student who led the development of the new system, told MIT News.

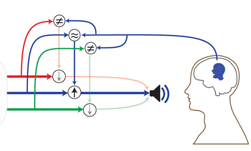

The system, called AlterEgo, uses a wearable Bluetooth device that can be paired with a computer or phone to access the deep learning algorithm.

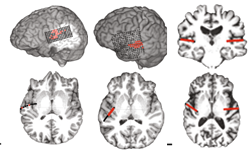

Using NVIDIA TITAN X GPUs and the cuDNN-accelerated TensorFlow deep learning framework, the researchers trained their model on 31 hours of silently spoken text. The system was designed to identify subvocalized words from neuromuscular signals.

The neural network was tested on 15 people and achieved 92% accuracy, on par with state-of-the-art speech recognition systems which require people to lip-sync their words, the researchers said.

“This allows the user to communicate to their computing devices in natural language without any observable action at all and without explicitly saying anything,” the researchers wrote in their research paper. “Users can silently communicate in natural language and receive aural output, thereby enabling a discreet, bi-directional interface with a computing device, and providing a seamless form of intelligence augmentation.”

The team is collecting more data in the hope of building an application with a much more expansive vocabulary.
