Imagine a robot that can efficiently model clay, push ice cream onto a cone, or mold the rice for your sushi roll. MIT researchers have developed a deep learning-based algorithm that improves a robot’s ability to mold materials into shapes and enables it to interact with liquids and solid objects.

The work draws inspiration from how humans interact with different objects.

“Humans have an intuitive physics model in our heads, where we can imagine how an object will behave if we push or squeeze it. Based on this intuitive model, humans can accomplish amazing manipulation tasks that are far beyond the reach of current robots,” said Yunzhu Li, a graduate student at MIT. “We want to build this type of intuitive model for robots to enable them to do what humans can do.”

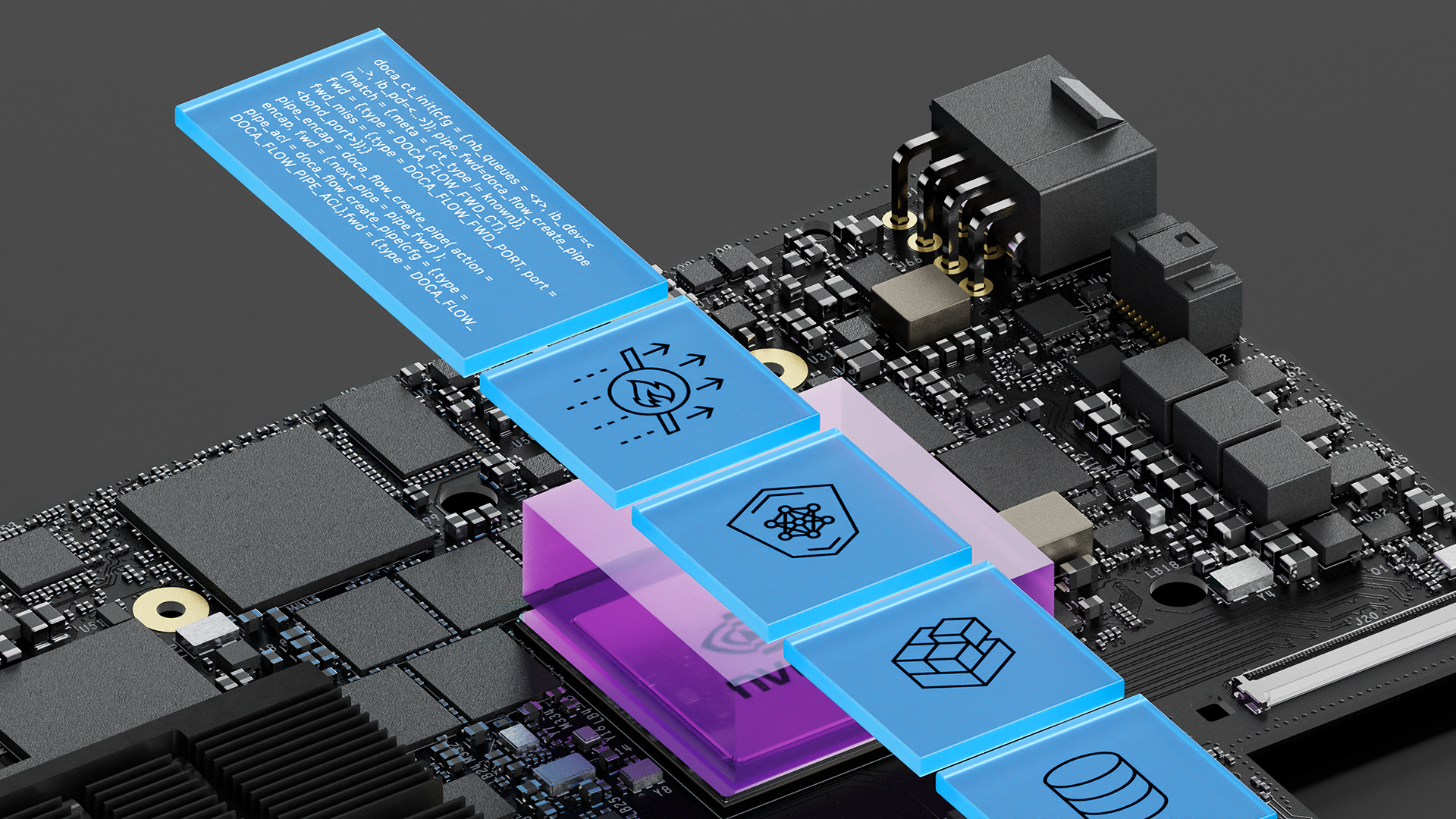

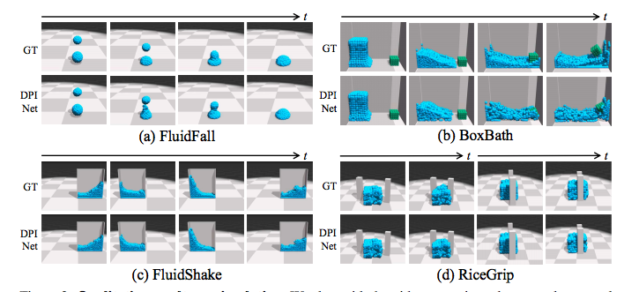

At the crux of the work is a particle-based model called dynamic particle interaction networks (DPI-Nets). This network learns how small portions of different materials interact. The system then creates dynamic interaction graphs, which capture how interactions pass from one particle to another. In the simulations, the particles are represented as small spheres, combined to make up a liquid or deformable object.
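To make the idea of a dynamic interaction graph more concrete, here is a minimal sketch in plain Python. It assumes that two particles interact when they lie within a fixed distance of each other, and that the graph is rebuilt whenever the particles move. The function name `build_interaction_graph`, the `radius` parameter, and the sample coordinates are hypothetical and chosen for illustration; this is not the researchers' implementation.

```python
from itertools import combinations
from math import dist


def build_interaction_graph(positions, radius):
    """Return directed (sender, receiver) edges for every pair of
    particles closer than `radius`. The cutoff rule is an assumption
    made for this sketch, not a detail taken from the article."""
    edges = []
    for i, j in combinations(range(len(positions)), 2):
        if dist(positions[i], positions[j]) < radius:
            # Interactions go both ways, so add an edge in each direction.
            edges.append((i, j))
            edges.append((j, i))
    return edges


# Three particles at one time step: only particles 0 and 1 are close.
step_a = [(0.0, 0.0, 0.0), (0.05, 0.0, 0.0), (1.0, 1.0, 1.0)]
edges_a = build_interaction_graph(step_a, radius=0.1)

# After particle 2 moves next to particle 1, the graph changes.
step_b = [(0.0, 0.0, 0.0), (0.05, 0.0, 0.0), (0.12, 0.0, 0.0)]
edges_b = build_interaction_graph(step_b, radius=0.1)
```

In this sketch the graph is "dynamic" only in the sense that it is recomputed from the current particle positions at each step, so the set of neighbors can change as a material flows or deforms.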

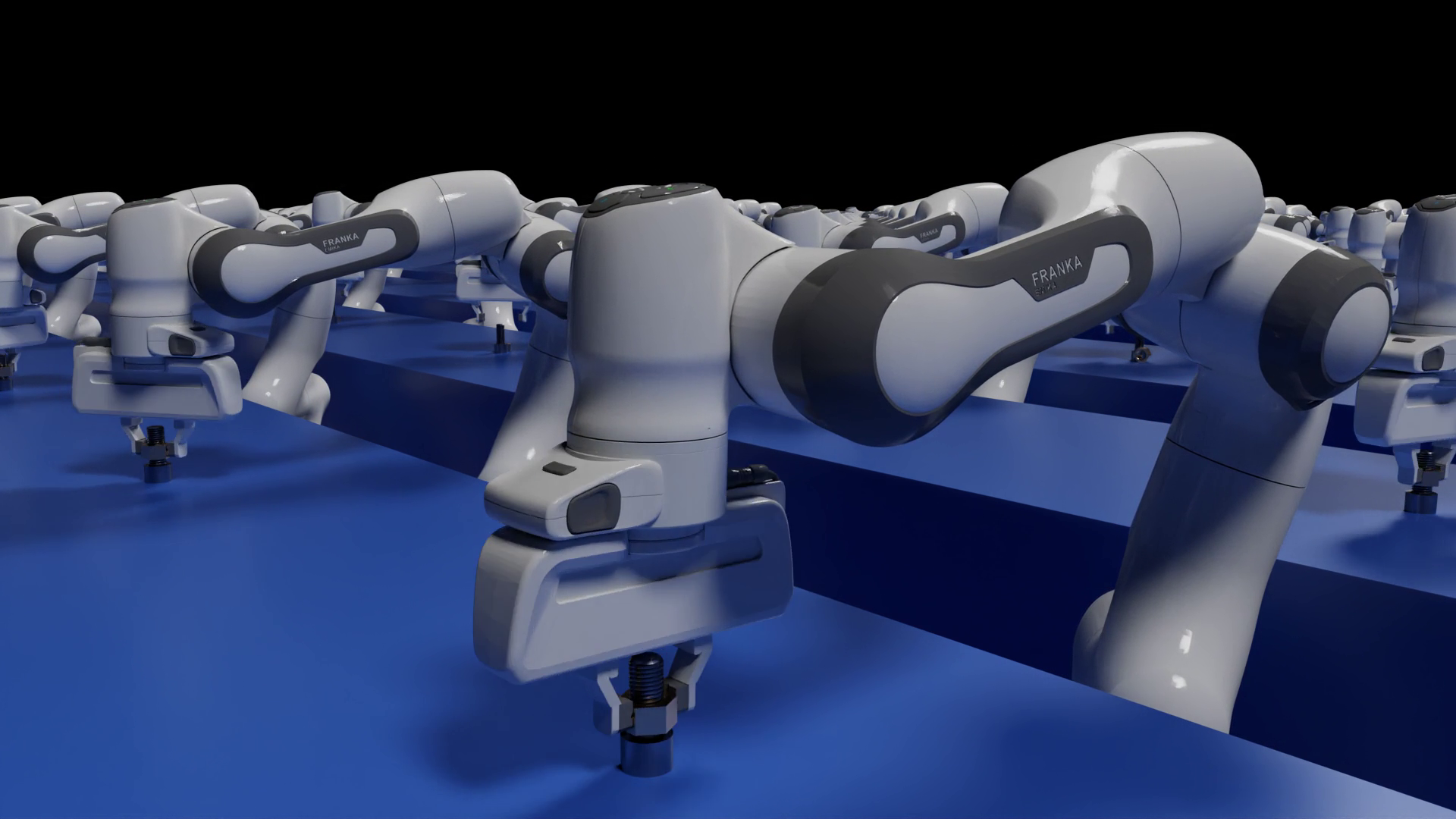

In the video above, a robot named RiceGrip clamps different objects made out of deformable foam. Because the robot was trained to interact with such objects, the gripper knows how much force it should use. If the simulation particles don’t align with the real-world position of the particles, the system sends an error signal to the model, helping it learn how to better handle the material.
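The mismatch between simulated and real particle positions can be pictured as a single number that grows as the two drift apart. The sketch below assumes a mean squared distance over matched particles; the function name `position_error`, the choice of metric, and the sample coordinates are hypothetical, and the researchers' actual error signal may be defined differently.

```python
def position_error(predicted, observed):
    """Mean squared distance between matched predicted and observed
    particle positions. The metric is an assumption for illustration."""
    if len(predicted) != len(observed):
        raise ValueError("predicted and observed must have the same length")
    if not predicted:
        raise ValueError("at least one particle is required")
    total = 0.0
    for p, o in zip(predicted, observed):
        # Squared Euclidean distance for one particle.
        total += sum((pc - oc) ** 2 for pc, oc in zip(p, o))
    return total / len(predicted)


# The first particle matches; the second is off by 2 units along z.
predicted = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
observed = [(0.0, 0.0, 0.0), (1.0, 0.0, 2.0)]
error = position_error(predicted, observed)
```

A value of zero would mean the simulated particles line up exactly with the observed ones; larger values indicate a larger correction for the model to learn from.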

“With learned DPI-Nets, our robots have achieved success in manipulation tasks that involve deformable objects of complex physical properties, such as molding plasticine to a target shape,” the researchers stated.

The simulator was built using NVIDIA FleX, a particle-based simulation system.

Using an NVIDIA TITAN Xp GPU and the cuDNN-accelerated PyTorch deep learning framework, the team trained the algorithm on thousands of rollouts from four environments, each containing different objects and interactions. Once trained, the algorithm relies on the GPU for inference.

“We have demonstrated that a learned particle dynamics model can approximate the interaction of diverse objects, and can help to solve complex manipulation tasks of deformable objects,” the researchers said. “Our system requires standard open-source robotics and deep learning toolkits, and can be potentially deployed in household and manufacturing environment.”