Research efforts in 3D computer vision and AI have been rising side-by-side like two skyscrapers. But the trip between these formidable towers has involved clambering up and down dozens of stairwells.

To bridge that divide, NVIDIA recently released Kaolin, which in a few steps moves 3D models into the realm of neural networks.

Implemented as a PyTorch library, Kaolin can slash the job of preparing a 3D model for deep learning from 300 lines of code down to just five.

Complex 3D datasets can be loaded into machine-learning frameworks regardless of how they’re represented or will be rendered.

A tool like this can benefit researchers in fields as diverse as robotics, self-driving cars, medical imaging, and virtual reality.

Interest in 3D models is booming, and Kaolin can make a significant impact. Online repositories already hold many 3D datasets, thanks in part to an estimated 30 million depth cameras that capture 3D images and are now in use worldwide, everywhere from labs to living rooms.

To date, researchers have lacked a good utility to make those models ready for use with deep learning tools, which also are evolving at a rapid pace. Instead, they were forced to spend significant time writing from scratch what should be boilerplate code.

For the broader audience, Kaolin is a software library that supports all kinds of 3D applications. Just as an example, imagine a fun Kaolin-inspired app that takes your picture and turns it into a 3D model.

An Interface to Accelerate Research

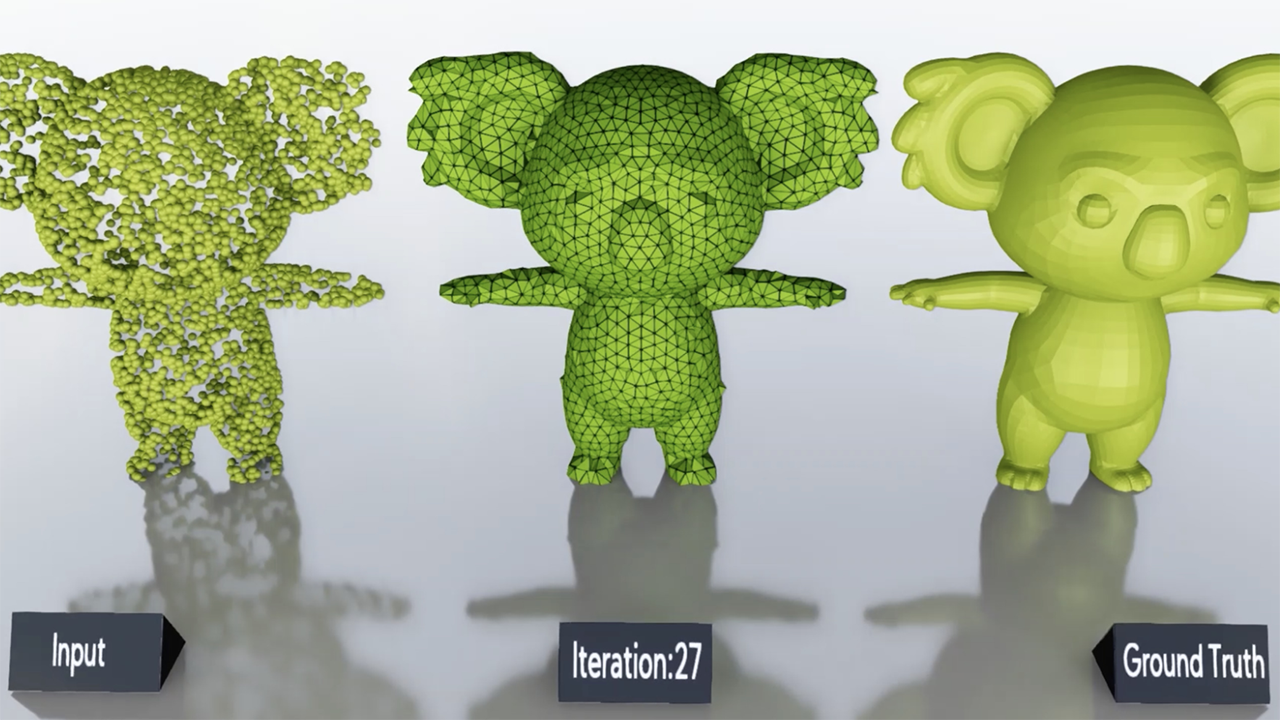

At its core, Kaolin consists of an efficient suite of geometric functions that allow manipulation of 3D content. It can wrap into PyTorch tensors 3D datasets implemented as polygon meshes, point clouds, signed distance functions or voxel grids.
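To make the idea of 3D data as tensors concrete, here is a minimal sketch in plain PyTorch. It does not use Kaolin's API; the helpers `sample_points` and `voxelize` are hypothetical names written for this illustration. It stores a small mesh as two tensors, samples a point cloud from its surface, and converts that point cloud into a voxel grid.

```python
import torch

# A tetrahedron stored as a polygon mesh: vertex positions plus triangle indices.
vertices = torch.tensor([[0.0, 0.0, 0.0],
                         [1.0, 0.0, 0.0],
                         [0.0, 1.0, 0.0],
                         [0.0, 0.0, 1.0]])
faces = torch.tensor([[0, 2, 1], [0, 1, 3], [0, 3, 2], [1, 2, 3]])


def sample_points(vertices, faces, num_samples):
    """Hypothetical helper: area-weighted random sampling of a mesh surface."""
    triangles = vertices[faces]  # (num_faces, 3, 3)
    a, b, c = triangles[:, 0], triangles[:, 1], triangles[:, 2]
    areas = 0.5 * torch.linalg.norm(torch.cross(b - a, c - a, dim=1), dim=1)
    # Pick triangles in proportion to their area so coverage is uniform.
    face_idx = torch.multinomial(areas, num_samples, replacement=True)
    u = torch.rand(num_samples, 1)
    v = torch.rand(num_samples, 1)
    # Reflect samples that fall outside the triangle back inside it.
    outside = (u + v) > 1.0
    u, v = torch.where(outside, 1.0 - u, u), torch.where(outside, 1.0 - v, v)
    a, b, c = a[face_idx], b[face_idx], c[face_idx]
    return a + u * (b - a) + v * (c - a)  # (num_samples, 3)


def voxelize(points, resolution):
    """Hypothetical helper: boolean occupancy grid over the points' bounding box."""
    lo = points.min(dim=0).values
    hi = points.max(dim=0).values
    scale = (hi - lo).clamp(min=1e-8)
    idx = ((points - lo) / scale * resolution).long().clamp(max=resolution - 1)
    grid = torch.zeros(resolution, resolution, resolution, dtype=torch.bool)
    grid[idx[:, 0], idx[:, 1], idx[:, 2]] = True
    return grid


points = sample_points(vertices, faces, 2048)  # point cloud, shape (2048, 3)
grid = voxelize(points, 16)                    # voxel grid, shape (16, 16, 16)
print(points.shape, grid.shape, int(grid.sum()))
```

Once every representation is an ordinary tensor, it can be batched, moved to a GPU and fed to a network like any other input.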

With their 3D dataset ready for deep learning, researchers can choose a neural network model from a curated collection that Kaolin supplies. The interface provides a rich repository of models, both baseline and state of the art, for classification, segmentation, 3D reconstruction, super-resolution and more.

Some examples of real-world applications are:

Classification, which identifies items in a 3D scene, is often the first step of the more complex processes described below.

3D part segmentation automatically identifies the different parts of a 3D model, making it easier to rig a character for animation or to customize the model to generate variants of an object.

Image to 3D constructs a 3D model from an image of a product recognized by the trained neural network. The 3D model, in turn, could be used to search a 3D model database for the best fit, from a vendor catalog for instance.

In addition to source code, we’re releasing pre-trained models for these tasks on popular benchmarks. We hope they serve as baselines for future research, easing the job of comparing models.
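As a rough idea of what a baseline classifier for point clouds can look like, here is a minimal PointNet-style sketch in plain PyTorch. It is not one of the models shipped with Kaolin; the class name `TinyPointNet` and the layer sizes are assumptions chosen to keep the example short.

```python
import torch
import torch.nn as nn


class TinyPointNet(nn.Module):
    """Hypothetical minimal PointNet-style classifier (not a Kaolin model)."""

    def __init__(self, num_classes):
        super().__init__()
        # Shared per-point MLP, written as 1x1 convolutions over the point axis.
        self.point_mlp = nn.Sequential(
            nn.Conv1d(3, 64, 1), nn.ReLU(),
            nn.Conv1d(64, 128, 1), nn.ReLU(),
        )
        self.head = nn.Sequential(
            nn.Linear(128, 64), nn.ReLU(),
            nn.Linear(64, num_classes),
        )

    def forward(self, points):
        # points: (batch, num_points, 3)
        features = self.point_mlp(points.transpose(1, 2))  # (batch, 128, num_points)
        # Max pooling over points makes the output independent of point order.
        pooled = features.max(dim=2).values                 # (batch, 128)
        return self.head(pooled)                            # (batch, num_classes)


model = TinyPointNet(num_classes=10)
clouds = torch.rand(4, 1024, 3)   # a batch of four random point clouds
logits = model(clouds)
print(logits.shape)
```

The max pooling step is the key design choice: a point cloud has no natural ordering, so the network's prediction should not change when the points are shuffled.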

Kaolin’s modular approach eases users into differentiable rendering, a hot new technique in 3D deep learning. Rather than write an entire renderer from scratch, users can simply modify the components the interface supplies.
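For readers new to the idea, the toy sketch below shows what "differentiable" means here: the rendered image is a smooth function of the 3D geometry, so an image-space loss can be backpropagated to the geometry itself. This is plain PyTorch, not Kaolin's renderer; `soft_render`, the Gaussian splat model and all parameter values are assumptions made for illustration.

```python
import torch


def soft_render(points, size=16, sigma=0.3):
    """Hypothetical toy renderer: orthographic projection plus Gaussian splats."""
    axis = torch.linspace(-1.0, 1.0, size)
    ys = axis[:, None].expand(size, size)
    xs = axis[None, :].expand(size, size)
    pixels = torch.stack([xs, ys], dim=-1).reshape(-1, 2)  # (size*size, 2)
    # Orthographic camera looking down the z axis: only x and y are used.
    dist2 = ((pixels[:, None, :] - points[None, :, :2]) ** 2).sum(-1)
    image = torch.exp(-dist2 / (2 * sigma ** 2)).sum(dim=1)
    return image.reshape(size, size)


# Target image rendered from the "true" point positions.
target = soft_render(torch.tensor([[0.5, 0.5, 0.0], [-0.5, -0.25, 0.0]]))

# Start from a wrong guess and recover the positions by gradient descent.
points = torch.tensor([[0.3, 0.3, 0.0], [-0.3, -0.1, 0.0]], requires_grad=True)
optimizer = torch.optim.Adam([points], lr=0.02)
losses = []
for step in range(200):
    optimizer.zero_grad()
    loss = ((soft_render(points) - target) ** 2).mean()
    loss.backward()  # gradients flow from pixels back to 3D positions
    optimizer.step()
    losses.append(loss.item())

print(losses[0], losses[-1])
```

A production renderer handles meshes, lighting, occlusion and perspective, but the principle is the same: every stage has to pass gradients through.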

Putting AI On Stage With 3D

We do a lot of 3D-related research at NVIDIA. We’d sometimes spend days scraping through open-source code others wrote to find what was best and pull it all into a single library for our internal use.

After writing boilerplate code for a couple of our projects, one of our interns suggested we create something more comprehensive for PyTorch. Researchers have had such utilities for 2D images for a while. One for 3D could enable a wider community.

We named Kaolin after kaolinite, a clay mineral often used to sculpt 3D models that are then digitized. We hope it helps many current and new 3D researchers build amazing things using AI.

Researchers can download the library from GitHub.

Learn More about Kaolin by Attending this On-Demand Webinar

Get started with 3D Deep Learning using the Kaolin PyTorch Library.

In this webinar, learn how to curate state-of-the-art 3D deep learning architectures for research.

You’ll learn:

- How researchers use Kaolin to accelerate 3D deep learning research

- An overview of 3D deep learning tasks, such as 3D classification, 3D segmentation, single-image 3D reconstruction, and differentiable rendering

- How to train a neural network for a custom task, like avoiding collisions with JetBot

- Applications and challenges of using Kaolin to curate state-of-the-art 3D renderings