In this edition of our posts about the Jetson Community, we're featuring two projects, and the developers behind them, that recently won the NVIDIA Jetson Project of the Month award.

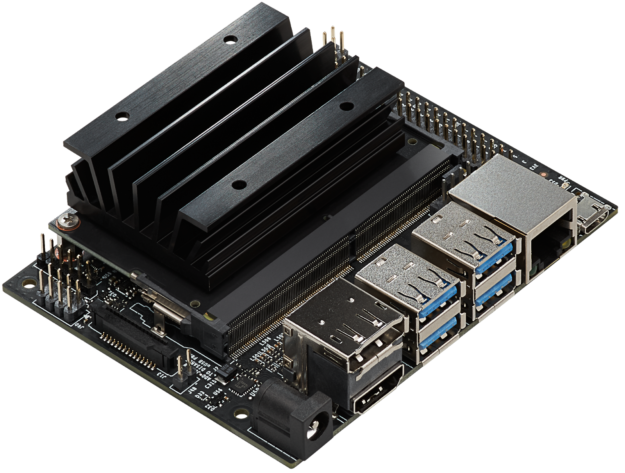

Tomasz Lewicki's open-source AI fever screening thermometer won the Jetson Project of the Month award for June, and Maggie Xu's Self-Navigating Robot for Search and Rescue won it for July. Together, these projects showcase Jetson Nano in video and sensor data analysis and in robotics. Let's walk through each project in detail.

AI Fever Screening Thermometer

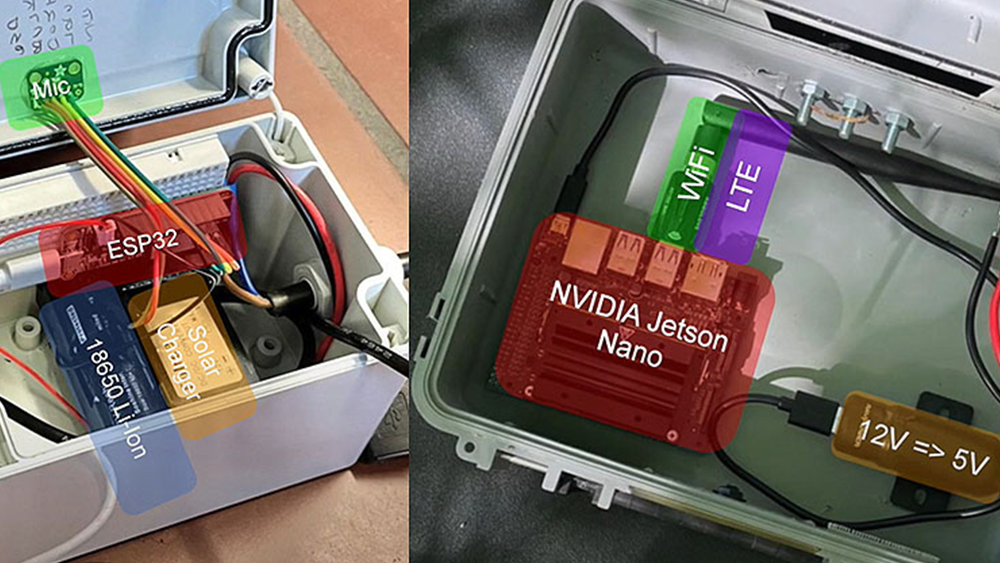

Tomasz's AI thermometer, powered by Jetson Nano, can detect people in its field of view and measure their skin temperature in real time. Tomasz notes that, placed at the entrance of a shared building, the thermometer can efficiently screen for fever, a common symptom of COVID-19 and other viral illnesses.

The thermometer uses an RGB camera to detect people and a thermal (infrared) camera to measure their temperature. People are detected with the SSD object detection algorithm, trained on the 91-class COCO dataset and running on Jetson Nano at 9 FPS. The thermometer's output is a side-by-side view of the two camera streams: an RGB view and a heat map view. In the RGB view, the thermometer labels each detected object and displays its temperature in both Fahrenheit and Celsius.
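To make the two-camera idea concrete, here is a minimal sketch of how a bounding box from the RGB detector could be used to read a temperature from the thermal frame. It is not Tomasz's implementation: it assumes the two cameras are aligned and share the same field of view, that the thermal frame is already calibrated to degrees Celsius, and the helper names `map_bbox`, `box_temperature_c` and `c_to_f` are hypothetical.

```python
# Hypothetical sketch: read a person's temperature from a thermal frame using a
# bounding box detected in the RGB frame. Assumes both cameras are aligned and
# cover the same field of view, and that thermal values are already in Celsius.
from typing import List, Tuple

BBox = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max), max is exclusive


def map_bbox(bbox: BBox, rgb_size: Tuple[int, int],
             thermal_size: Tuple[int, int]) -> BBox:
    """Scale an RGB-pixel bounding box to thermal-pixel coordinates."""
    rgb_w, rgb_h = rgb_size
    th_w, th_h = thermal_size
    x0, y0, x1, y1 = bbox
    # Integer arithmetic avoids floating-point rounding at pixel boundaries.
    tx0, ty0 = x0 * th_w // rgb_w, y0 * th_h // rgb_h
    # Keep at least one thermal pixel so a tiny RGB box never maps to nothing.
    tx1 = max(x1 * th_w // rgb_w, tx0 + 1)
    ty1 = max(y1 * th_h // rgb_h, ty0 + 1)
    return (tx0, ty0, tx1, ty1)


def box_temperature_c(thermal: List[List[float]], box: BBox) -> float:
    """Return the hottest reading inside the box (exposed skin is usually warmest)."""
    x0, y0, x1, y1 = box
    return max(thermal[y][x] for y in range(y0, y1) for x in range(x0, x1))


def c_to_f(celsius: float) -> float:
    """Convert degrees Celsius to degrees Fahrenheit."""
    return celsius * 9 / 5 + 32


if __name__ == "__main__":
    # Synthetic 4x4 thermal frame (Celsius) paired with a 640x480 RGB frame.
    thermal = [
        [22.0, 22.5, 23.0, 22.0],
        [22.0, 36.8, 37.4, 22.5],
        [22.0, 36.5, 36.9, 22.0],
        [21.5, 22.0, 22.0, 21.5],
    ]
    person = (160, 120, 480, 360)  # box reported by the RGB detector
    t_c = box_temperature_c(thermal, map_bbox(person, (640, 480), (4, 4)))
    print(f"{t_c:.1f} C / {c_to_f(t_c):.1f} F")  # prints "37.4 C / 99.3 F"
```

Taking the maximum inside the box is the simplest choice but is sensitive to a single hot pixel; a high percentile of the box's readings would be a more robust alternative under the same assumptions.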

For developers and users who want to build their own AI thermometer, Tomasz has open-sourced the thermometer's enclosure design and the source code here.

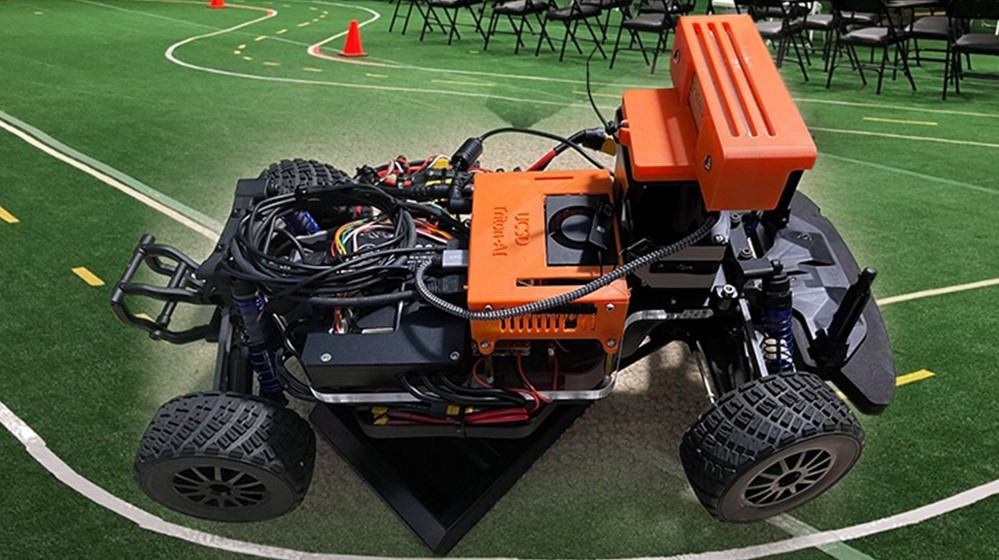

Self-Navigating Robot for Search and Rescue

Maggie's self-navigating robot can autonomously navigate a specified search area, find an object in it, and retrieve that object. The project is built with ROS Melodic running on Jetson Nano, and it uses a USB camera and a LiDAR sensor for scanning and object detection.

The key robot tasks are navigation, object detection and tracking, and grabbing the object. The ROS gmapping and move_base packages handle mapping and navigation, respectively. OpenCV is used to detect the object, and a robotic arm with an Arduino control board grabs it.
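The "tracking" step between detecting an object and grabbing it can be illustrated with a simple proportional controller: turn so the detected object stays centered in the camera image, slow down while the heading error is large, and stop once the object appears large enough to be within reach of the arm. This is a hypothetical sketch, not code from Maggie's project; the `track_object` function, the gains, and the `grab_fraction` threshold are illustrative assumptions, and the object's center and box width are assumed to come from an OpenCV detection step.

```python
# Hypothetical sketch of a "track and approach" step for a wheeled robot.
# Gains and thresholds are illustrative assumptions, not project values.
from dataclasses import dataclass


@dataclass
class Command:
    linear: float   # forward speed, m/s
    angular: float  # turn rate, rad/s (positive = turn left, as in ROS)
    grab: bool      # True when the robot should stop and trigger the arm


def track_object(cx: float, box_width: float, image_width: float,
                 k_turn: float = 1.0, cruise: float = 0.15,
                 grab_fraction: float = 0.4) -> Command:
    """Proportional steering on the object's horizontal offset from center."""
    if box_width / image_width >= grab_fraction:
        # The object fills enough of the frame: stop and hand over to the arm.
        return Command(0.0, 0.0, True)
    # Normalized error in [-1, 1]; positive means the object is right of center.
    half = image_width / 2
    error = (cx - half) / half
    # Object on the right -> negative angular velocity -> turn right toward it.
    angular = -k_turn * error
    # Drive forward more slowly while the robot is still far off heading.
    linear = cruise * (1.0 - min(abs(error), 1.0))
    return Command(linear, angular, False)


if __name__ == "__main__":
    print(track_object(cx=480, box_width=60, image_width=640))   # steer right, slow
    print(track_object(cx=330, box_width=300, image_width=640))  # close enough: grab
```

In a ROS node, `linear` and `angular` would typically be copied into the `linear.x` and `angular.z` fields of a `geometry_msgs/Twist` message and published on the robot's velocity command topic (often `cmd_vel`, though the name depends on the robot's configuration).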

This is a great project for ROS beginners looking for an overview of key robot tasks. For the code and project details, go here.