Starting on October 5, this fall’s GPU Technology Conference (GTC) will run continuously for five days, across seven time zones. The conference will showcase the latest breakthroughs in AI, as well as many other areas of GPU technology.

Here’s a preview of some of the AI sessions at GTC.

Recommendation Systems

A Deep Dive into the Merlin Recommendation Framework: Recommender systems are driving the growth of online giants. Now, with the NVIDIA Merlin application framework and GPU acceleration, they’re becoming accessible to everyone. Tune in to learn more about Merlin, a GPU-accelerated recommendation framework that scales to datasets and user/item combinations of arbitrary size.

Winning the 2020 RecSys Challenge with Kaggle Grandmasters: Tune in to learn about NVIDIA’s first-place solution in the RecSys Challenge 2020, which focused on predicting Twitter user behavior. The 200-million-tweet dataset required significant computation for preprocessing, feature engineering, and training. In this session, the team will share how they accelerated the training pipeline entirely on GPUs.

NGC

Accelerating AI Workflows with NGC: AI is going mainstream and is quickly becoming pervasive in every industry. To learn how NGC helps overcome the challenges of developing and deploying AI applications, join us for our session at GTC.

Building NLP Solutions with NGC Models and Containers on Google Cloud AI Platform: This session will cover a variety of topics, including: leveraging GPU-optimized NVIDIA NGC containers and pre-trained AI models for a variety of use cases; using the custom container feature on Google Cloud AI Platform; fine-tuning a pre-trained BERT model with custom data using automatic mixed precision on NVIDIA Tensor Cores; optimizing inference with NVIDIA TensorRT; and deploying inference at scale with NVIDIA Triton Inference Server.

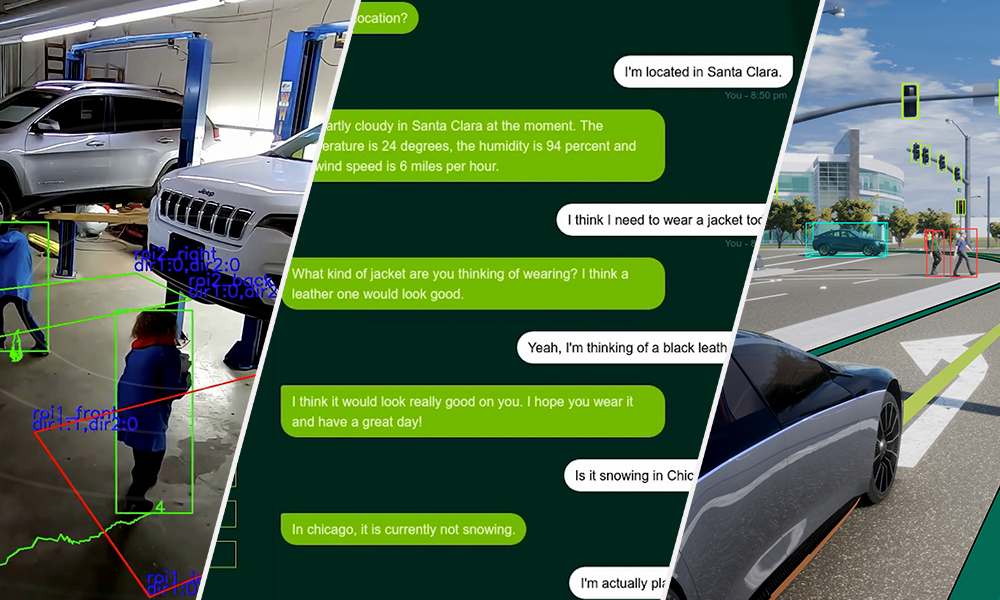

Conversational AI

How the Revolution of Natural Language Processing Is Changing How Companies Understand Text: Text is central to both individual lives and companies. Natural language processing has had its limits, from keyword-based to linguistic approaches. But recently, NLP has made more progress than any other field in machine learning. Thanks to better compute, large open text datasets, and the transformer architecture, BERT and similarly architected models have elevated the state of the art. The emergence of libraries like Transformers and Tokenizers by Hugging Face has empowered companies to adopt these new models faster than ever, making these libraries some of the fastest-growing open-source products to date.

Learning from Language and Interaction with Human Players in Minecraft: CraftAssist is a research program aimed at building an assistant that can collaborate with human players in the sandbox construction game, Minecraft. Its scientific meta-goal is to facilitate the study of agents that can complete tasks specified through dialogue and learn from interactions with players. We’ll talk about the tools and platform that allow players to interact with agents and record those interactions, as well as the data we’ve collected. We’ll also describe an initial agent from which users can iterate. The platform is intended to support the study of agents that are fun to interact with and useful for tasks specified and evaluated by human participants. To encourage the AI research community to use the CraftAssist platform for their own experiments, we’ve open-sourced the framework, baseline assistant, tools, and data we used to build it.

GPT-3: Language Models are Few-Shot Learners: Humans can generally perform a new language task from just a few examples or simple instructions, something that NLP systems still largely struggle to do. We’ll present and discuss GPT-3, an autoregressive language model with 175 billion parameters, which is 10x more than any previous non-sparse language model, and show that it demonstrates strong few-shot learning, sometimes even becoming competitive with prior state-of-the-art fine-tuning approaches. We’ll explore GPT-3’s range of abilities on tasks such as translation, question answering, and arithmetic, and show examples of GPT-3’s often surprising successes and failures.
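
To make "few-shot" concrete, here is a minimal Python sketch of how a task can be specified with an instruction and a handful of worked examples placed directly in the prompt text, with no fine-tuning. The function name `build_few_shot_prompt`, the `Q:`/`A:` layout, and the sample translation pairs are illustrative assumptions, not the format or API used in the session, and the sketch does not call any model.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a plain-text prompt: instruction, worked examples, then the open query.

    Hypothetical helper for illustration only; the Q:/A: layout is an assumption.
    """
    lines = [instruction, ""]
    for source, target in examples:
        # Each worked example shows the model one input and its expected output.
        lines.append(f"Q: {source}")
        lines.append(f"A: {target}")
        lines.append("")
    # The final query is left unanswered for the language model to complete.
    lines.append(f"Q: {query}")
    lines.append("A:")
    return "\n".join(lines)


# Two demonstrations ("two-shot") followed by the item to translate.
examples = [("cheese", "fromage"), ("sea otter", "loutre de mer")]
prompt = build_few_shot_prompt("Translate English to French.", examples, "peppermint")
print(prompt)
```

An autoregressive model would be asked to continue this text after the final `A:`; changing the task means changing only the instruction and examples, not the model’s weights.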