How GPUDirect Storage helps data scientists bypass the CPU I/O bottleneck

In the world of data scientists, there’s usually a lot of waiting. That’s because they’re often working with extraordinary amounts of data — petabyte-sized datasets are not uncommon — and it can take a lot of time to move it.

Data scientists have recently discovered that using GPUs for computing can dramatically speed up their workloads. However, there’s a “traffic jam” in their way. In some cases, a data scientist may have to wait literally days for their data to be transferred between storage and compute before they can harness the massive compute power of GPUs.

NVIDIA software engineers have found a way to solve this problem.

Today, NVIDIA is introducing GPUDirect Storage, a way to eliminate CPU I/O bottlenecks. Offering higher bandwidth and lower latency, it brings data and compute together, accelerating modern, data-hungry enterprise applications.

With this technology, developers can explore new ways to unleash more compute power on their data science and high performance computing applications and services.

Eliminating the “Pit Stop” Between Storage and Compute

GPUDirect Storage revolutionizes computing by rearchitecting how data moves between storage and compute.

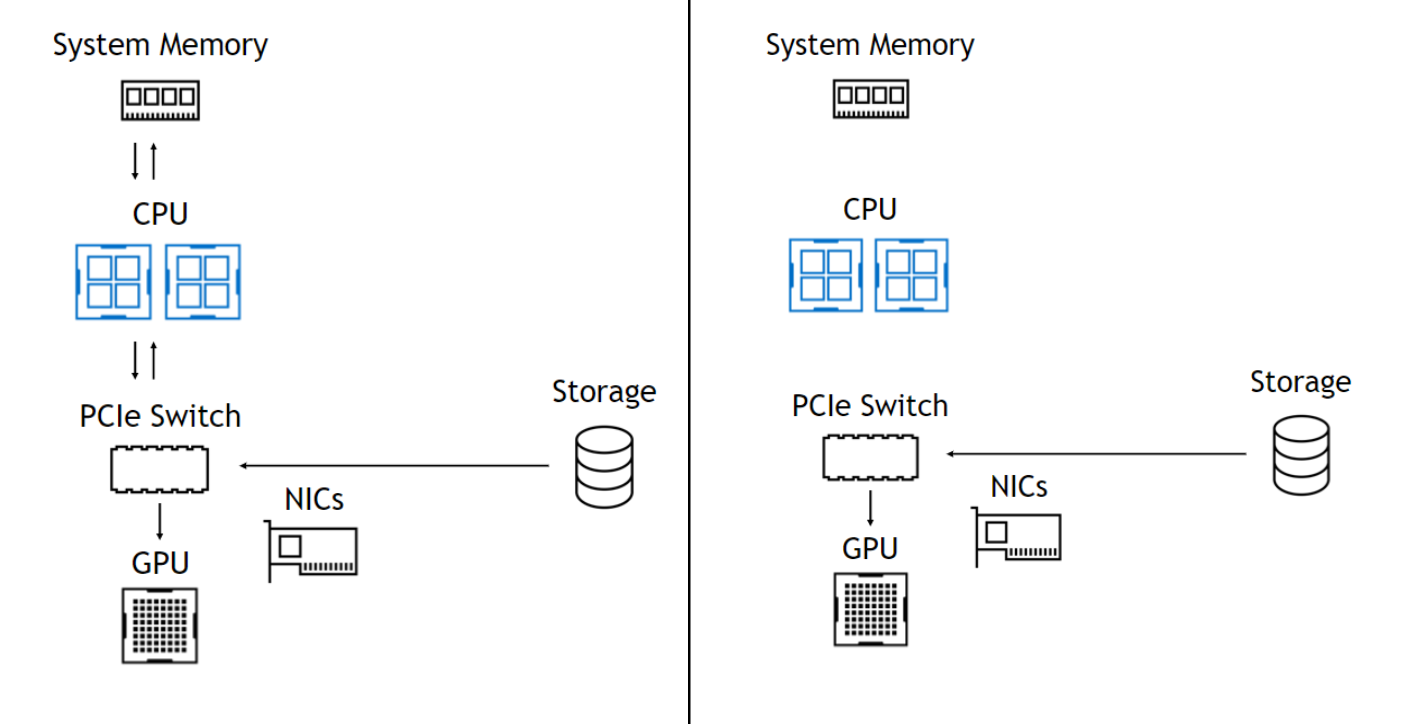

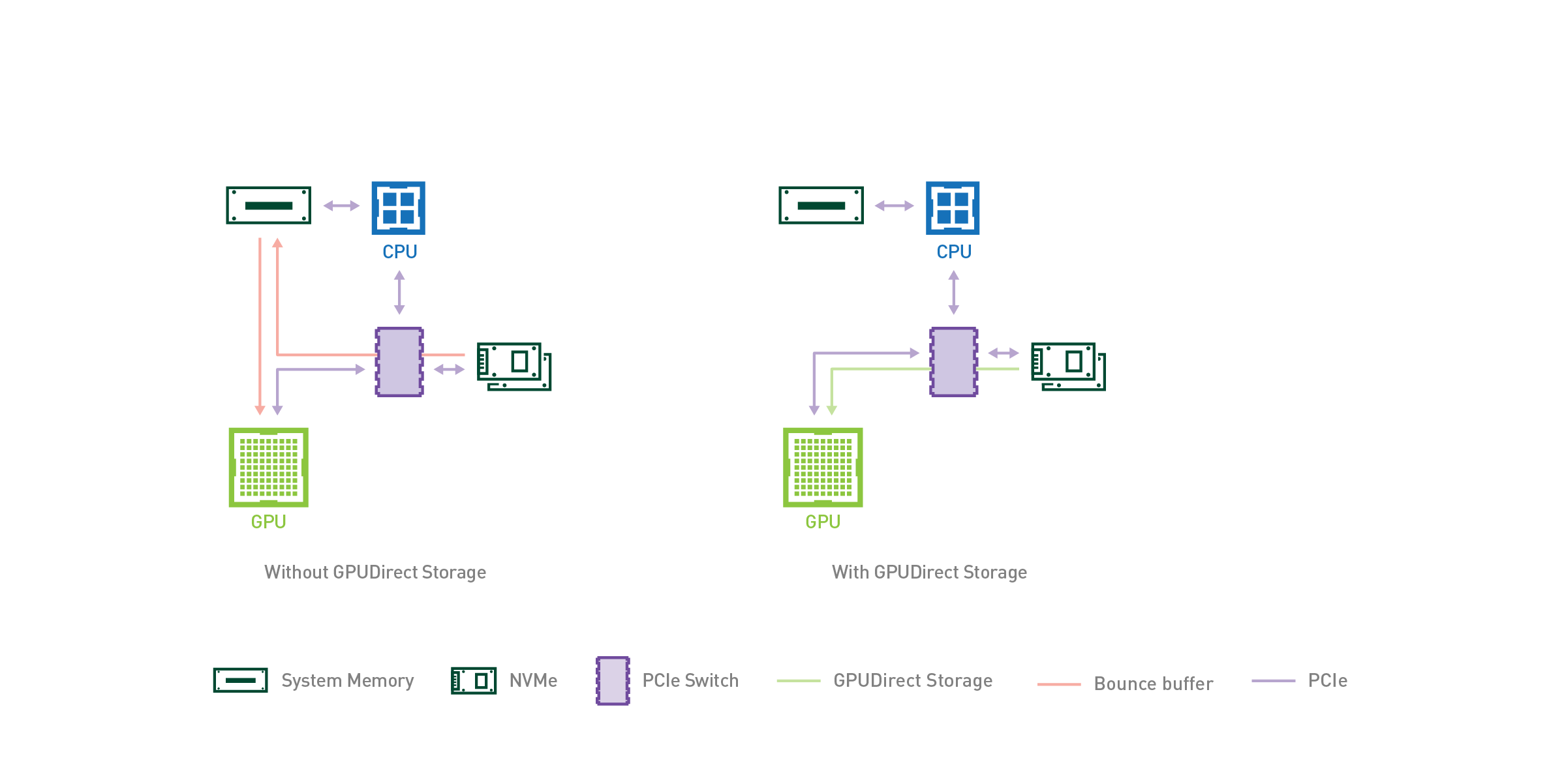

Legacy architectures place a “bounce buffer” in the CPU’s system memory between storage and GPU computing, where copies of data are staged to keep applications on GPUs fed. With GPUDirect Storage, once all of the computation happens on the GPU, the GPU becomes not only the fastest computing element but also the one with the highest I/O bandwidth.

Figure 1: The direct data path skips the extra copies through the bounce buffer in CPU system memory and moves data directly between storage and GPU memory.
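To make the difference between the two data paths concrete, here is a minimal sketch in plain Python. It is only a toy model and does not use the GPUDirect Storage API: "storage" is a bytes object, "GPU memory" is an ordinary bytearray, and the names `TransferStats`, `read_via_bounce_buffer`, and `read_direct` are hypothetical helpers invented for this illustration. It simply counts how many copies each path performs and how many bytes pass through host memory.

```python
from dataclasses import dataclass


@dataclass
class TransferStats:
    """Counters for the toy model (hypothetical, for illustration only)."""
    copies: int = 0               # number of chunk copies performed
    bytes_through_host: int = 0   # bytes staged in CPU system memory


def read_via_bounce_buffer(storage: bytes, device: bytearray,
                           chunk: int, stats: TransferStats) -> None:
    """Legacy path: storage -> bounce buffer in host memory -> device."""
    bounce = bytearray(chunk)  # stands in for the CPU-side bounce buffer
    for off in range(0, len(storage), chunk):
        n = min(chunk, len(storage) - off)
        # Copy 1: storage into the host bounce buffer.
        bounce[:n] = storage[off:off + n]
        stats.copies += 1
        stats.bytes_through_host += n
        # Copy 2: bounce buffer into (simulated) GPU memory.
        device[off:off + n] = bounce[:n]
        stats.copies += 1


def read_direct(storage: bytes, device: bytearray,
                chunk: int, stats: TransferStats) -> None:
    """Direct path: storage -> device, with no staging in host memory."""
    for off in range(0, len(storage), chunk):
        n = min(chunk, len(storage) - off)
        # Single copy: storage straight into (simulated) GPU memory.
        device[off:off + n] = storage[off:off + n]
        stats.copies += 1


if __name__ == "__main__":
    data = bytes(range(256)) * 16  # 4 KiB of sample "storage" contents
    for name, reader in (("bounce buffer", read_via_bounce_buffer),
                         ("direct", read_direct)):
        gpu_mem = bytearray(len(data))
        stats = TransferStats()
        reader(data, gpu_mem, 1024, stats)
        print(name, stats, gpu_mem == data)
```

In this model both paths deliver identical data, but the bounce-buffer path performs twice as many copies and routes every byte through host memory, while the direct path routes none. Real transfers involve DMA engines, PCIe topology, and drivers that this sketch does not attempt to represent.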

Put Data Science Productivity in the Fast Lane

Data science productivity is fueled by performance. The faster the performance, the more rapid the iteration, which in turn can speed up business for AI enterprises.

GPUDirect Storage bypasses the speed bumps in data pipelines, eliminating time and distance between information and answers.

NVIDIA RAPIDS, a suite of open-source software libraries, running with GPUDirect Storage on NVIDIA DGX-2, delivers a direct data path between storage and GPU memory that’s 8x faster than an unoptimized version that doesn’t use GPUDirect Storage. GPUDirect Storage puts your data pipeline in the fast lane for getting record-breaking results from your accelerated computing infrastructure and NVIDIA GPU-accelerated libraries.

Learn how you can redesign and rebalance your system for a more direct path to storage here. And if you’re attending Flash Memory Summit in Santa Clara, California, this week, don’t miss a keynote session by NVIDIA’s Chris Lamb, VP Compute Software, on Wednesday, August 7th at 1:00 PM, where he will talk more about GPUDirect Storage.