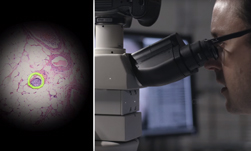

Researchers at Google announced this week that they developed a deep learning-based model that works with an augmented reality microscope to help physicians diagnose cancer. The researchers say existing light microscopes found in hospitals and clinics around the world can be easily retrofitted with readily available components to mirror this prototype.

The AR system consists of a modified light microscope fitted with a camera that captures the field of view. A computer running a deep learning model then analyzes the image and projects the results back into the microscope in real time via an AR display.

“This digital projection is visually superimposed on the original (analog) image of the specimen to assist the viewer in localizing or quantifying features of interest. Importantly, the computation and visual feedback updates quickly,” the Google researchers wrote in their blog post.
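The capture, analyze, and project loop described above can be illustrated with a minimal Python sketch. It is not Google's implementation: `fake_model` is a hypothetical stand-in for the trained network, `make_overlay` is an illustrative helper name, and the threshold and highlight color are assumed values. The sketch only builds the overlay layer that an AR display would project onto the specimen view.

```python
import numpy as np


def fake_model(frame):
    """Hypothetical stand-in for the trained network.

    Returns a per-pixel score in [0, 1]; here it is just normalized
    brightness, so the sketch runs without any trained weights.
    """
    return frame.astype(np.float32).mean(axis=-1) / 255.0


def make_overlay(prob_map, threshold=0.5, color=(0, 255, 0)):
    """Build an RGB overlay layer from a per-pixel score map.

    Pixels scoring at or above `threshold` (an assumed value) are painted
    with `color`; all other pixels stay black, so that only the flagged
    regions would be visible once the layer is projected over the
    analog image.
    """
    mask = prob_map >= threshold                      # H x W boolean mask
    overlay = np.zeros(prob_map.shape + (3,), dtype=np.uint8)
    overlay[mask] = color                             # paint flagged pixels
    return overlay


# One pass of the loop on a synthetic 4x4 "camera frame"
frame = np.zeros((4, 4, 3), dtype=np.uint8)
frame[1:3, 1:3] = 255                                 # bright region to flag
overlay = make_overlay(fake_model(frame))
```

In a real system this pass would repeat for every captured frame, which is why the researchers stress that the computation and visual feedback must update quickly.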

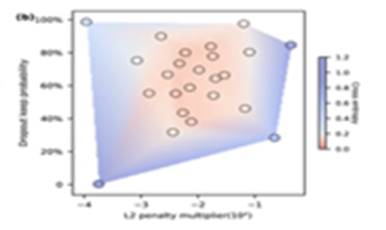

Using an NVIDIA TITAN Xp GPU and the cuDNN-accelerated TensorFlow deep learning framework, the researchers trained their model on hundreds of images obtained from the Cancer Metastases in Lymph Nodes challenge data set. The system was trained to detect breast and prostate cancer.

The Google researchers state that the central potential of the AR microscope lies in bringing AI to users who are not likely to adopt a digital workflow in the near future.

“In principle, the ARM can provide a wide variety of visual feedback, including text, arrows, contours, heatmaps, or animations, and is capable of running many types of machine learning algorithms aimed at solving different problems such as object detection, quantification, or classification,” the Google team wrote.

The proof of concept was presented at the Annual Meeting of the American Association for Cancer Research.

Google Develops an AR Microscope To Detect Cancer in Real-Time

Apr 17, 2018
