Researchers from the Max Planck Institute for Intelligent Systems, a member of NVIDIA’s NVAIL program, developed an end-to-end deep learning algorithm that takes any speech signal as input and realistically animates a wide range of adult faces.

“There is an extensive literature on estimating 3D face shape, facial expressions, and facial motion from images and videos. Less attention has been paid to estimating 3D properties of faces from sound,” the researchers stated in their paper. “Understanding the correlation between speech and facial motion thus provides additional valuable information for analyzing humans, particularly if visual data are noisy, missing, or ambiguous.”

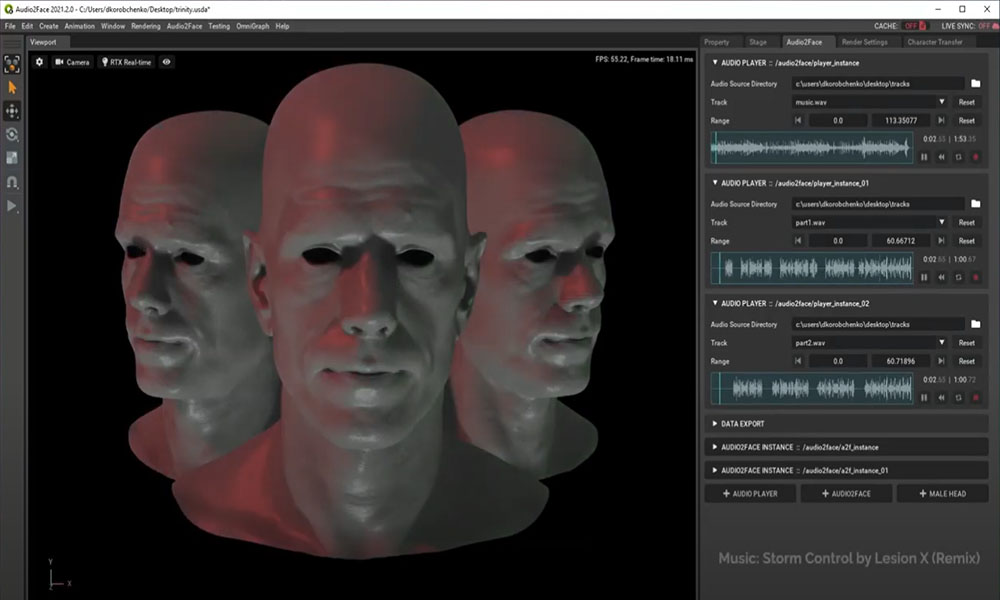

The team first collected a new dataset of 4D face scans paired with speech. The dataset comprises 480 sequences, each about 3-4 seconds long, captured from 12 subjects. The team then trained a deep neural network model, called Voice Operated Character Animation (VOCA), on NVIDIA Tesla GPUs using the cuDNN-accelerated TensorFlow deep learning framework.

“Our goal for VOCA is to generalize well to arbitrary subjects not seen during training,” the researchers stated. “Generalization across subjects involves both (i) generalization across different speakers in terms of the audio (variations in accent, speed, audio source, noise, environment, etc.) and (ii) generalization across different facial shapes and motion.”

VOCA receives a subject-specific template and the raw audio signal, from which speech features are extracted using Mozilla’s DeepSpeech, an open source speech-to-text engine that relies on CUDA and NVIDIA GPUs for quick inference.

The desired output of the model is a target 3D mesh.

During testing, the researchers animated a wide range of adult faces, using subject labels to synthesize different speaker styles. The algorithm also generalizes well across languages, 3D face templates, and speech sources not seen during training.
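The data flow described above (audio features and a subject label in, an animated 3D mesh out) can be illustrated with a toy sketch. This is not the VOCA network: a single random linear map stands in for the trained model, and the function names, array shapes, one-hot encoding of the subject label, and the idea of adding predicted per-vertex offsets to the template are all assumptions made for illustration.

```python
import numpy as np


def one_hot(index, size):
    """Encode a subject label as a one-hot vector (assumed conditioning scheme)."""
    vec = np.zeros(size)
    vec[index] = 1.0
    return vec


def animate(template, audio_features, subject_id, n_subjects, weights):
    """Toy stand-in for a speech-driven face animation model.

    template:       (V, 3) vertices of the subject-specific neutral mesh
    audio_features: (T, F) one feature vector per output frame
    weights:        (F + n_subjects, V * 3) hypothetical linear "decoder"
    Returns an array of shape (T, V, 3): one mesh per frame.
    """
    n_frames = audio_features.shape[0]
    # Repeat the speaker-style label for every frame.
    style = np.tile(one_hot(subject_id, n_subjects), (n_frames, 1))
    inputs = np.concatenate([audio_features, style], axis=1)
    # Predict per-vertex offsets and add them to the template.
    offsets = (inputs @ weights).reshape(n_frames, *template.shape)
    return template[None, :, :] + offsets


# Toy data: a 5-vertex "mesh", 4 frames of 6-dimensional audio features.
rng = np.random.default_rng(0)
template = rng.normal(size=(5, 3))
audio_features = rng.normal(size=(4, 6))
weights = rng.normal(scale=0.01, size=(6 + 3, 5 * 3))

meshes = animate(template, audio_features, subject_id=1, n_subjects=3, weights=weights)
print(meshes.shape)
```

Changing `subject_id` while keeping the same audio features alters the predicted offsets, which is the sense in which a subject label can select a speaker style in this sketch.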

The work was presented this month at the Computer Vision and Pattern Recognition (CVPR) conference in Long Beach, California.

The dataset and trained model are available for researchers on GitHub.