By: Mehmet Kocamaz, Senior Computer Vision and Machine Learning Engineer at NVIDIA

Editor’s note: This is the latest post in our NVIDIA DRIVE Labs series, which takes an engineering-focused look at individual autonomous vehicle challenges and how NVIDIA DRIVE addresses them. Catch up on all of our automotive posts here.

Many cars on the road today are equipped with advanced driver assistance systems that rely on front and rear cameras to perform adaptive cruise control (ACC) and lane keep assist. Full autonomous driving, however, requires complete 360-degree surround camera vision.

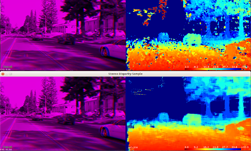

Camera object tracking is an essential component of an autonomous vehicle's surround camera vision (i.e., perception) pipeline. The software tracks detected objects across consecutive camera images by assigning each one a unique identification (ID) number.
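As a minimal illustration of what carrying an ID across consecutive frames means, the sketch below greedily matches each new detection to the previous frame's box with the highest intersection-over-union (IoU) and hands out fresh IDs to anything unmatched. IoU matching is a swapped-in technique chosen only for brevity; it is not the DriveWorks tracker, which follows feature points inside each object. The `IdAssigner` class, the `(x1, y1, x2, y2)` box format, and the 0.3 IoU threshold are all hypothetical.

```python
from itertools import count
from typing import Dict, List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


class IdAssigner:
    """Hypothetical helper: carries track IDs forward by greedy IoU matching."""

    def __init__(self, min_iou: float = 0.3) -> None:
        self.min_iou = min_iou
        self.tracks: Dict[int, Box] = {}  # track ID -> last matched box
        self._ids = count(1)  # source of fresh, never-reused IDs

    def update(self, detections: List[Box]) -> List[int]:
        # Score every (track, detection) pair and visit the best overlaps first.
        pairs = sorted(
            ((iou(box, det), tid, j)
             for tid, box in self.tracks.items()
             for j, det in enumerate(detections)),
            reverse=True,
        )
        ids: List[Optional[int]] = [None] * len(detections)
        used = set()
        for score, tid, j in pairs:
            if score < self.min_iou:
                break  # all remaining pairs overlap even less
            if tid in used or ids[j] is not None:
                continue  # each track and each detection is matched at most once
            ids[j] = tid
            used.add(tid)
        # Detections left unmatched start new tracks with fresh IDs.
        for j, assigned in enumerate(ids):
            if assigned is None:
                ids[j] = next(self._ids)
        # Simplification: tracks with no match this frame are dropped immediately.
        self.tracks = {tid: detections[j] for j, tid in enumerate(ids)}
        return ids
```

For example, calling `update([(0, 0, 10, 10), (50, 50, 60, 60)])` and then `update([(52, 51, 62, 61), (1, 1, 11, 11)])` returns `[1, 2]` and then `[2, 1]`: each shifted box inherits the ID of the box it overlaps. Dropping unmatched tracks at once is the weak point of this sketch, since a single missed detection would break the ID.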

Accurate object tracking is critical for robust distance-to-object and object velocity estimates. Tracking also mitigates missed and false-positive object detections and keeps them from propagating into the self-driving car's planning and control functions, which decide when to stop and accelerate based on these inputs.

Our surround camera object tracking software currently uses a six-camera, 360-degree surround perception setup with no blind spots around the car. The software tracks objects in all six camera images and associates their image-space locations with unique ID numbers and time-to-collision (TTC) estimates.

The appearance model of each tracked object is built from feature points selected inside the object. These feature points, which are invariant to rotation and translation, are detected with the Harris corner detector [1]. Small templates centered on the corner points are tracked with the Lucas-Kanade template tracking algorithm [2] at multiple image pyramid scales. From these tracked feature points, the surround camera object tracker estimates the object's scale change, translation, and TTC.
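The step from tracked feature points to scale change and TTC can be sketched as follows, assuming a pinhole camera, a roughly constant closing speed, and point correspondences already produced by a tracker such as Lucas-Kanade. Taking the median of pairwise distance ratios is one common robust choice that we picked for illustration; the post does not say how DriveWorks fits scale and translation, and the function names are hypothetical. The TTC formula follows from image size being proportional to 1/Z: the scale ratio is s = Z_prev / Z_curr with Z_prev = Z_curr + v·dt, so TTC = Z_curr / v = dt / (s − 1).

```python
import math
from itertools import combinations
from statistics import median
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) image coordinates in pixels


def scale_and_translation(prev: List[Point], curr: List[Point]) -> Tuple[float, Point]:
    """Estimate an object's scale change and translation between two frames
    from the same feature points tracked in both (prev[i] <-> curr[i])."""
    if len(prev) != len(curr) or len(prev) < 2:
        raise ValueError("need at least two matched point pairs")
    # Scale: median ratio of pairwise distances, robust to a few bad tracks.
    ratios = []
    for i, j in combinations(range(len(prev)), 2):
        d_prev = math.dist(prev[i], prev[j])
        if d_prev > 0:  # skip duplicated points
            ratios.append(math.dist(curr[i], curr[j]) / d_prev)
    if not ratios:
        raise ValueError("all previous points coincide")
    scale = median(ratios)
    # Translation: shift of the per-axis median point.
    tx = median([p[0] for p in curr]) - median([p[0] for p in prev])
    ty = median([p[1] for p in curr]) - median([p[1] for p in prev])
    return scale, (tx, ty)


def time_to_collision(scale: float, dt: float) -> float:
    """TTC in seconds from the frame-to-frame scale ratio, via dt / (scale - 1)."""
    if scale <= 1.0:
        return math.inf  # object is not growing in the image, so not closing in
    return dt / (scale - 1.0)
```

For instance, a patch of points that grows by 10% between frames captured 0.1 s apart gives `time_to_collision(1.1, 0.1)` of about 1 s. The median keeps a few drifting or mismatched points from skewing the estimate.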

Track initialization latency and ghost track removal

Recognizing an object early and confirming it as a true-positive track is crucial for reducing delay in control functions. Our low track initialization latency, currently around 90 milliseconds, lets the car respond properly to sudden stops, even at high speeds. At the same time, the tracker achieves higher precision and recall than the object detector alone for objects in the ego vehicle's path or nearby in the left and right lanes.
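One common way to trade initialization latency against false positives is an M-of-N confirmation rule, sketched below. The post does not describe how DriveWorks confirms tracks; `TrackConfirmer`, `confirmation_latency_ms`, the `m`/`n` defaults, and the 30 fps frame rate in the usage note are assumptions, and the numbers are not meant to reproduce the 90 ms figure.

```python
from collections import deque


class TrackConfirmer:
    """Hypothetical M-of-N rule: a tentative track is confirmed once it has
    been matched to a detection in at least m of its last n frames."""

    def __init__(self, m: int = 3, n: int = 4) -> None:
        self.m = m
        self.history = deque(maxlen=n)  # True = matched in that frame
        self.confirmed = False

    def update(self, matched: bool) -> bool:
        self.history.append(matched)
        if not self.confirmed and sum(self.history) >= self.m:
            self.confirmed = True  # in this sketch, confirmation is permanent
        return self.confirmed


def confirmation_latency_ms(frames_to_confirm: int, fps: float) -> float:
    """Delay from the first detection (frame 0) to confirmation."""
    return (frames_to_confirm - 1) * 1000.0 / fps
```

A track matched in frames 0, 2, and 3 is confirmed at frame 3, which at an assumed 30 fps is `confirmation_latency_ms(4, 30.0)`, or 100 ms after its first detection. Lowering `m` shortens that delay but lets more spurious detections become tracks.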

Ghost tracks, which linger after their objects have moved out of the scene, are the main cause of false-positive braking. Removing them quickly without dropping any valid object tracks is challenging. Our tracker removes ghost tracks within 30 to 150 milliseconds without causing missed object tracks.
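A simple removal policy that reflects this trade-off is sketched here: delete a track at once when its predicted box has mostly left the image, and otherwise only after several consecutive missed detections. This is an illustrative assumption, not the DriveWorks logic; `TrackState`, `should_remove`, and both thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class TrackState:
    box: Tuple[float, float, float, float]  # predicted (x1, y1, x2, y2), pixels
    misses: int = 0  # consecutive frames without a supporting detection


def visible_fraction(box, image_w: float, image_h: float) -> float:
    """Fraction of the box area that lies inside the image."""
    x1, y1, x2, y2 = box
    area = max(0.0, x2 - x1) * max(0.0, y2 - y1)
    if area == 0:
        return 0.0
    vis_w = max(0.0, min(x2, image_w) - max(x1, 0.0))
    vis_h = max(0.0, min(y2, image_h) - max(y1, 0.0))
    return vis_w * vis_h / area


def should_remove(track: TrackState, image_w: float, image_h: float,
                  max_misses: int = 3, min_visible: float = 0.2) -> bool:
    # Object has (mostly) left the field of view: drop it right away.
    if visible_fraction(track.box, image_w, image_h) < min_visible:
        return True
    # Otherwise tolerate a few missed detections, so a briefly occluded or
    # undetected object keeps its track instead of being dropped.
    return track.misses >= max_misses
```

The two branches map onto the two failure modes: the visibility test removes ghosts of objects leaving the scene with little delay, while the miss counter protects real objects from being dropped after a single missed detection. At an assumed 30 fps, `max_misses=3` corresponds to roughly 100 ms of extra life for a ghost still inside the image.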

Object tracker accuracy and robustness under reduced object detection frequency

Running object detection on every frame of all six camera feeds can increase overall system latency. Depending on the application, developers may choose to reduce the detection frequency, for example by running the object detector once every three frames. This frees up resources for other computations but makes object tracking more challenging. In our experiments, the object tracker still achieved high precision, recall, and track ID stability even at reduced detection frequency.
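The reduced-frequency setup can be sketched as a per-frame plan of which cameras go through the detector, with the tracker assumed to run on every camera in every frame. The post does not say whether detection is staggered across cameras; staggering is our assumption, included because it keeps the detector load flat (two cameras per frame here) instead of bursting all six every third frame. `detection_schedule` is a hypothetical name.

```python
from typing import List


def detection_schedule(num_frames: int, num_cameras: int = 6,
                       detect_every: int = 3, stagger: bool = True) -> List[List[int]]:
    """For each frame, list the cameras whose images go through the detector.

    Each camera is detected once every `detect_every` frames. With `stagger`,
    camera c is offset by c frames so the detector load is spread evenly."""
    schedule = []
    for f in range(num_frames):
        cams = [c for c in range(num_cameras)
                if (f + (c if stagger else 0)) % detect_every == 0]
        schedule.append(cams)
    return schedule
```

For example, `detection_schedule(3)` returns `[[0, 3], [2, 5], [1, 4]]`, while `detection_schedule(3, stagger=False)` returns `[[0, 1, 2, 3, 4, 5], [], []]`. Both run the detector a third as often as the every-frame baseline; in the frames in between, each camera's tracks are carried forward by the tracker alone.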

The surround camera object tracking system has been tested over more than 20,000 miles with the ego car driving in autonomous mode, across a variety of seasons, routes, times of day, and lighting conditions, on both highways and urban roads. No disengagements due to object tracking failures have been observed or reported.

The NVIDIA DriveWorks SDK includes the object tracking module. It provides both CPU and CUDA implementations, so the tracker can be scheduled on either the CPU or the GPU. Offering both versions gives developers flexibility in scheduling resources across the overall software pipeline. In our measurements, the GPU implementation is seven times faster than the CPU implementation. We have also seen overall latency improvements in our AV software stack from splitting the work between CPU and GPU: for example, running the object tracker for one camera on the CPU and for the other five cameras on the GPU.
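Why moving one camera to the CPU can help even though the GPU path is seven times faster is easiest to see with a toy latency model. Everything here is an assumption: the 7 ms CPU cost per camera, strictly sequential processing on each device, the two devices running in parallel, and `gpu_busy_ms`, a stand-in for other GPU work such as DNN inference queued ahead of the tracker. The function names are hypothetical.

```python
def frame_latency(n_cpu: int, cam_count: int = 6, cpu_ms: float = 7.0,
                  gpu_speedup: float = 7.0, gpu_busy_ms: float = 0.0) -> float:
    """Time until all cameras are tracked when n_cpu cameras run on the CPU
    and the rest on the GPU (toy model, hypothetical numbers)."""
    gpu_ms = cpu_ms / gpu_speedup  # per-camera tracker cost on the GPU
    cpu_time = n_cpu * cpu_ms
    gpu_time = gpu_busy_ms + (cam_count - n_cpu) * gpu_ms
    return max(cpu_time, gpu_time)  # devices run in parallel


def best_split(cam_count: int = 6, cpu_ms: float = 7.0,
               gpu_speedup: float = 7.0, gpu_busy_ms: float = 0.0) -> int:
    """Number of cameras to place on the CPU that minimizes frame_latency."""
    return min(range(cam_count + 1),
               key=lambda n: frame_latency(n, cam_count, cpu_ms,
                                           gpu_speedup, gpu_busy_ms))
```

With an idle GPU (`gpu_busy_ms=0.0`), `best_split` returns 0 and all six cameras belong on the GPU. Once 3 ms of other work is queued on the GPU, it returns 1, matching the one-CPU, five-GPU split described above: the CPU takes 7 ms for its camera while the GPU finishes at 3 + 5 × 1 = 8 ms, instead of 9 ms for all six on the GPU.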

References

- [1] Chris Harris and Mike Stephens. 1988. “A Combined Corner and Edge Detector.” Alvey Vision Conference, 15.

- [2] Simon Baker and Iain Matthews. 2004. “Lucas-Kanade 20 Years On: A Unifying Framework.” International Journal of Computer Vision 56, 3 (February 2004), 221-255.