Tuned math libraries are an easy and dependable way to extract the ultimate performance from your HPC system. However, for long-lived applications or those that need to run on a variety of platforms, adapting library calls for each vendor or library version can be a maintenance nightmare.

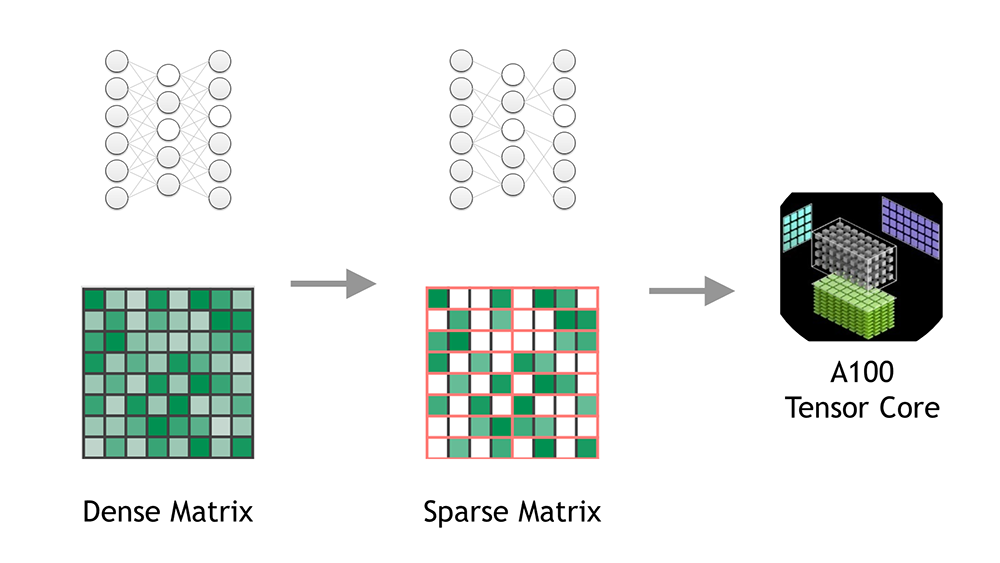

A compiler that can automatically generate calls to tuned math libraries gives you the best of both worlds: easy portability and ultimate performance. In this post, I show how you can seamlessly accelerate many standard Fortran array intrinsics and language constructs on GPUs. The nvfortran compiler enables this acceleration automatically by mapping Fortran statements to the functions available in the NVIDIA cuTENSOR library, a first-of-its-kind, GPU-accelerated, tensor linear algebra library providing tensor contraction, reduction, and element-wise operations.

An easy on-ramp to NVIDIA GPUs

Here’s how standard Fortran array intrinsic functions can map to GPU-accelerated math libraries. At the simplest level, only two Fortran statements are required to take advantage of the outstanding performance provided by the cuTENSOR library:

use cutensorex ... c = matmul(a,b)

The first statement using the cutensorex predefined module includes interfaces to the cuTENSOR library in the form of overloaded Fortran intrinsic procedures, array expressions, and overloaded assignments. The interfaces are written to map only arrays located in GPU device memory. Later in this post, I discuss what that means from the OpenACC and CUDA Fortran programmer point-of-view. With these interfaces defined, the second statement containing the matmul() intrinsic call is automatically mapped to a cuTENSOR function call.

The interfaces implement deferred execution by recognizing and matching several commonly used patterns that can map to a single cuTENSOR kernel invocation. In all cases, multiple cuTENSOR functions are called to set up the handle, descriptor data structures, and work buffers that cuTENSOR requires.

However, only one kernel is launched onto the GPU. It is important for performance reasons to map the entire statement, including the assignment to the left-side array. You don’t want the compiler creating temporary arrays, as is common in Fortran, for either the inputs or results (intermediate or final) of the right-side operation.

Supported standard Fortran operations

The cuTENSOR library contains general permutation and contraction operations. The result of the permutation can optionally be operated on by an elemental function, and optionally scaled.

The nvfortran compiler can recognize and map a variety of Fortran transformational intrinsics and elemental intrinsic functions used in combination with general array syntax to cuTENSOR functionality. A few of the more straightforward translations include the following:

d = transpose(a) d = func(transpose(a)) d = alpha * func(transpose(a) d = reshape(a,shape=[...]) d = reshape(a,shape=[...],order=[...]) d = func(reshape(a,...)) d = alpha * func(reshape(a,...)) d = spread(a,dim=k,ncopies=n) d = func(spread(a,dim=k,ncopies=n)) d = alpha * func(spread(a,dim=k,ncopies=n))

The inputs to matmul() can also be permuted in cuTENSOR, and the result can be scaled and accumulated. That leads to several possible combinations, such as the following statements:

c = matmul(a,b) c = c + matmul(a,b) c = c - matmul(a,b) c = c + alpha * matmul(a,b) d = alpha * matmul(a,b) + beta * c c = matmul(transpose(a),b) c = matmul(reshape(a,shape=[...],order=[...]),b) c = matmul(a,transpose(b)) c = matmul(a,reshape(b,shape=[...],order=[...]))

Using NVIDIA Tensor Cores from standard Fortran

Taking advantage of cuTENSOR and NVIDIA Tensor Cores can be as easy as the following code example when you use features for random number generation that are included in the cutensorex module:

program main use cutensorex integer, parameter :: ni=5120, nj=5120, nk=5120, ntimes=10 real(8), allocatable, dimension(:,:) :: a, b, d allocate(a(ni,nk),b(nk,nj),d(ni,nj)) call random_number(a) call random_number(b) d = 0.0d0 print *,"cutensor" call cpu_time(t1) do nt = 1, ntimes d = d + matmul(a,b) end do call cpu_time(t2) flops = 2.0*ni*nj*nk flops = flops*ntimes print *,"times",t2,t1,t2-t1 print *,"GFlops",flops/(t2-t1)/1.e9 end program

The matmul() intrinsic call is mapped to cuTENSOR calls that seamlessly use Tensor Cores wherever possible. I show some performance results later in this post.

Compiling the program with nvfortran

You may ask how this program uses cuTENSOR, when I stated earlier that the cutensorex interfaces only map operations on GPU device arrays to cuTENSOR calls. The answer lies in how the program is compiled:

% nvfortran -acc -gpu=managed -cuda -cudalib main.f90

Here, I am compiling the program as an OpenACC program and taking advantage of the OpenACC managed memory mode in which all allocatable arrays are allocated in CUDA Unified Memory. With the addition of -cuda that enables CUDA Fortran extensions as well, the arrays are essentially CUDA Fortran–managed arrays. One rule of CUDA Fortran generic interface matching is to prefer the device interface for managed actual arguments, when both host and device interfaces exist.

The nvfortran compiler provides some shortcuts when the declarations, allocations, and use are in the same program unit. In general, it’s better to use OpenACC directives to instruct the compiler to pass device addresses, as in the following code example:

!$acc host_data use_device(a, b, d) do nt = 1, ntimes d = d + matmul(a,b) end do !$acc end host_data

In this case, the -cuda compiler option is not required.

Using cuTENSOR from CUDA Fortran

For CUDA Fortran users, the cutensorex module and Fortran transformational intrinsics become a fast path to high performance and fully portable code. Use the !@cuf sentinel to add lines of code that are interpreted and compiled by the nvfortran CUDA Fortran compiler, or ignored as a comment by a standard Fortran compiler:

program main !@cuf use cutensorex !@cuf use cudafor integer, parameter :: ni=5120, nj=5120, nk=5120, ntimes=10 real(8), allocatable, dimension(:,:) :: a, b, d !@cuf attributes(device) :: a, b, d allocate(a(ni,nk),b(nk,nj),d(ni,nj)) call random_number(a) call random_number(b) d = 0.0d0 print *,"cutensor" call cpu_time(t1) do nt = 1, ntimes d = d + matmul(a,b) end do call cpu_time(t2) flops = 2.0*ni*nj*nk flops = flops*ntimes print *,"times",t2,t1,t2-t1 print *,"GFlops",flops/(t2-t1)/1.e9 end program

On line 6, I declared the arrays with the device attribute, which places them in GPU device memory. However, they could be declared with the managed attribute as well. This program can be compiled and linked with the following command:

% nvfortran -Mcudalib main.cuf

Measured performance on real(8) data

Here’s a look at performance, starting with real(8) (double precision) data as used in the earlier examples. You can measure matrix multiply performance in several ways:

- Single-threaded CPU implementation

- Multi-threaded or multicore CPU implementation

- Naively coded matrix multiply offloaded using directives

- The

matmul()intrinsic mapped to cuTENSOR

To get the best threaded-CPU performance, use the basic linear algebra subprogram (BLAS) library routine DGEMM. The equivalent DGEMM call to the earlier operation is the following command:

call dgemm('n','n',ni,nj,nk,1.0d0,a,ni,b,nk,1.0d0,d,ni)

To get an understanding of what tuned libraries can provide over a naive implementation, use the following OpenACC loop structure to run on the GPU. The loop structure uses no special tiling or hardware instructions.

!$acc kernels do j = 1, nj do i = 1, ni do k = 1, nk d(i,j) = d(i,j) + a(i,k) * b(k,j) end do end do end do !$acc end kernels

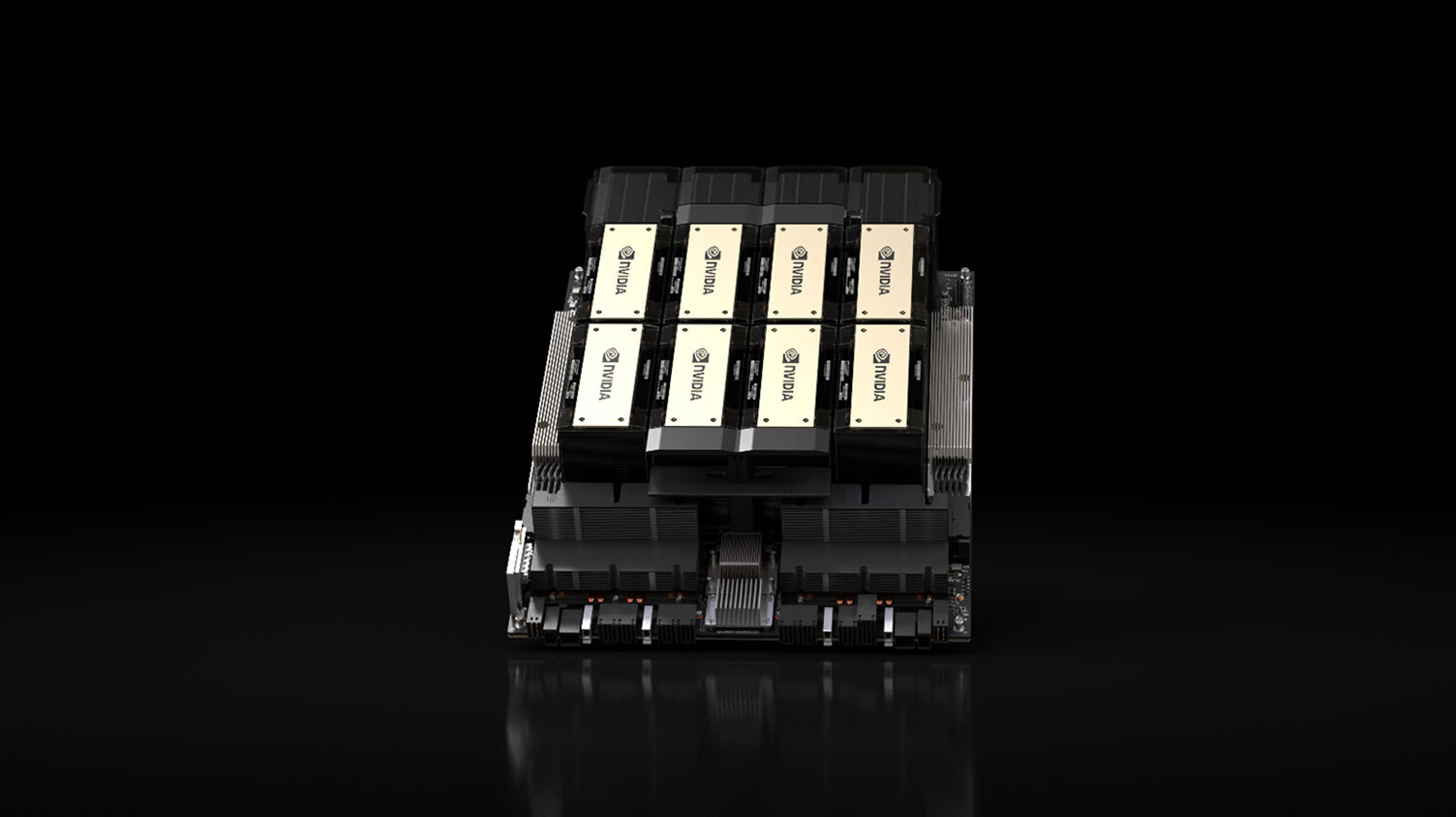

Table 1 shows the real(8) performance attained on one NUMA node of a dual-socket AMD EPYC 7742 Rome CPU-based server, a single NVIDIA V100, and a single NVIDIA A100 GPU.

| Implementation / Processor | TFLOPs |

| nvfortran matmul on a single CPU core | 0.010 |

| MKL DGEMM on 64 CPU cores | 1.674 |

| Naive OpenACC on V100 | 0.235 |

| Naive OpenACC on A100 | 0.447 |

| nvfortran matmul on V100 | 6.866 |

| nvfortran matmul on A100 | 17.660 |

Not only do you get automatic GPU acceleration on V100 and A100 GPUs using the matmul() intrinsic, but on the A100 the mapping of matmul() to cuTENSOR calls gets you automatic use of FP64 Tensor Cores.

Measured performance on real(4) and real(2) data

You can perform the same set of runs using real(4) (single precision) data and calling SGEMM rather than DGEMM. In addition, the CUDA 11.0 cuTENSOR Fortran wrappers can take advantage of the A100 TF32 data type and Tensor Cores. Table 2 shows the performance for those runs.

| Implementation / Processor | TFLOPs |

| nvfortran matmul on a single CPU core | 0.025 |

| MKL SGEMM on 64 CPU cores | 3.017 |

| Naive OpenACC on V100 | 0.460 |

| Naive OpenACC on A100 | 0.946 |

| nvfortran matmul on V100 | 10.706 |

| nvfortran matmul on A100 | 14.621 |

| nvfortran matmul on A100 using TF32 | 60.358 |

Why stop there? The nvfortran compiler supports 16-bit floating-point format (FP16) using the real(2) data type. You can change the types of the arrays in the earlier tests and run timings on half-precision as well.

Tensor Core operations were introduced on V100 for half-precision data, then extended on the A100 GPU to support TF32 and full double-precision DP64 Tensor Cores. While nvfortran supports real(2) and Tensor Cores on V100 and A100, it doesn’t support full and optimized real(2) on CPUs yet and neither do the standard BLAS libraries. In this case, it only makes sense to compare the performance of the GPU-accelerated versions (Table 3).

| Implementation / Processor | TFLOPs |

| Naive OpenACC on V100 | 0.490 |

| Naive OpenACC on A100 | 2.058 |

| nvfortran matmul on V100 | 68.242 |

| nvfortran matmul on A100 | 92.810 |

While the A100 performance is impressive and the code is fully portable, it is significantly below peak for TF32 and FP16. There is fixed overhead: at each call, you create and destroy the cuTENSOR tensor descriptors and create the contraction plan. You must also query and manage the workspace requirements used in the contraction, which may ultimately invoke cudaMalloc and cudaFree. If the overhead is 5–10% for FP64, that becomes closer to 25% for TF32 and around 35% for FP16, for this size of problem.

For developers who need ultimate performance, nvfortran does directly support Fortran interfaces to the C cuTENSOR API in the Fortran cutensor module, also provided within the HPC SDK. You can manage the tensor descriptors, plans, and workspace yourself.

Conclusion

In this post, I’ve shown some simple programs and the types of Fortran intrinsics calls and code patterns that can be automatically accelerated on GPUs. They can even automatically take advantage of Tensor Cores through cuTENSOR. With programs that are almost completely standard Fortran and fully portable to other compilers and systems, you can achieve near-peak performance on NVIDIA GPUs on matrix multiplication, matrix transpose, elemental array intrinsics, and multiple combinations of array syntax.

It’s impossible to predict what you might do or achieve with these new features. I look forward to seeing your feedback and results. NVIDIA continues to add more features that allow you to program NVIDIA GPUs with maximum performance using standard Fortran constructs.