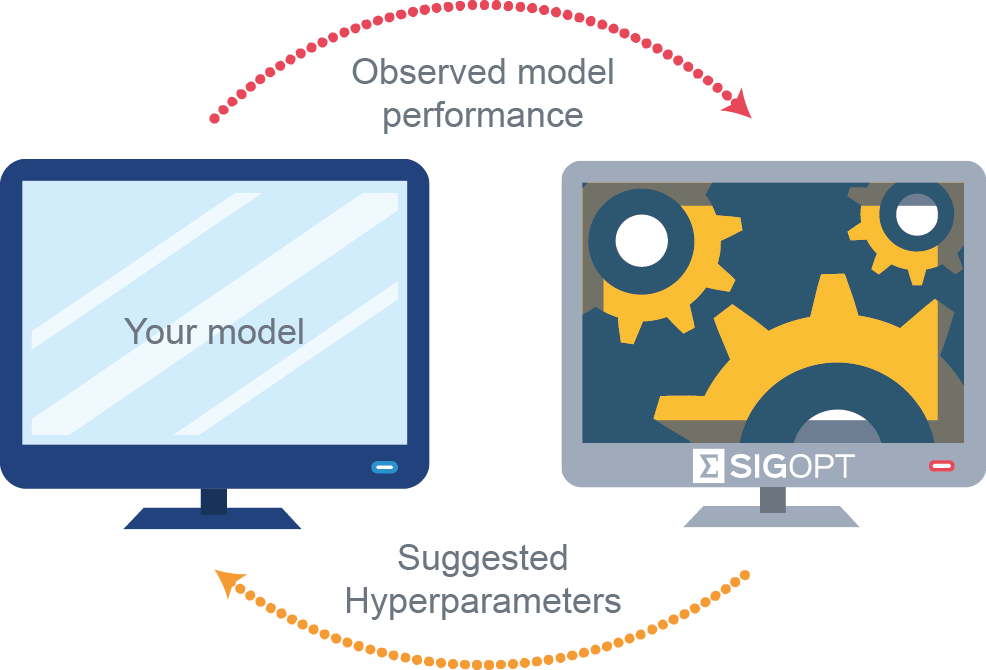

Learn how to use SigOpt’s Bayesian optimization platform to jointly optimize competing objectives in deep learning pipelines on NVIDIA GPUs more than ten times faster than traditional approaches like random search.

Measuring how well a model performs may not come down to a single factor. Often there are multiple, sometimes competing, ways to measure model performance. For example, in algorithmic trading an effective trading strategy is one with high portfolio returns and low drawdown. In the credit card industry, an effective fraud detection system needs to identify fraudulent transactions accurately and quickly (for example, in under 50 ms). In advertising, an optimal campaign should deliver both higher click-through rates and higher conversion rates.

Optimizing for multiple metrics is referred to as multicriteria or multimetric optimization. Multimetric optimization is more expensive and time-consuming than traditional single-metric optimization because it typically requires more evaluations of the underlying system to find configurations that balance the competing metrics.
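When metrics compete, there is usually no single best configuration. The goal is instead the set of configurations for which no other candidate is at least as good on every metric and strictly better on at least one (the Pareto frontier). The minimal sketch below filters a list of results down to that set. It assumes two hypothetical metrics, accuracy (higher is better) and inference latency in milliseconds (lower is better), and is a brute-force illustration, not SigOpt's implementation.

```python
from typing import List, Tuple


def pareto_frontier(points: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Return the points not dominated by any other point.

    Each point is a hypothetical (accuracy, latency_ms) pair:
    higher accuracy is better, lower latency is better.
    """
    frontier = []
    for i, (acc_i, lat_i) in enumerate(points):
        dominated = False
        for j, (acc_j, lat_j) in enumerate(points):
            if j == i:
                continue
            # Point j dominates point i if it is at least as good on both
            # metrics and strictly better on at least one of them.
            if acc_j >= acc_i and lat_j <= lat_i and (acc_j > acc_i or lat_j < lat_i):
                dominated = True
                break
        if not dominated:
            frontier.append((acc_i, lat_i))
    return frontier


# Hypothetical (accuracy, latency in ms) results for four configurations.
results = [(0.92, 40.0), (0.95, 70.0), (0.90, 55.0), (0.97, 120.0)]
print(pareto_frontier(results))
# prints [(0.92, 40.0), (0.95, 70.0), (0.97, 120.0)]
```

Here (0.90, 55.0) is dropped because (0.92, 40.0) is both more accurate and faster; the remaining three are genuine trade-offs, and choosing among them is a deployment decision. The pairwise check is quadratic in the number of results, which is acceptable for the few hundred evaluations a typical tuning run produces.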

A new NVIDIA Developer Blog post explores two classification use cases, one using biological sequence data and the other using natural language processing (NLP). Both examples tune stochastic gradient descent (SGD) hyperparameters as well as neural network architecture parameters, using both MXNet and TensorFlow. The post compares SigOpt with random search in terms of the time and computational cost of the search and the quality of the resulting models.
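Random search, the baseline in that comparison, draws each configuration independently from the search space. The following minimal sketch assumes a hypothetical search space that mixes SGD hyperparameters (learning rate, momentum, batch size) with architecture parameters (layer count, hidden units). The names and ranges are illustrative, not those used in the post, and training and scoring each configuration is left out.

```python
import random
from typing import Dict, List, Union

Value = Union[float, int]


def sample_config(rng: random.Random) -> Dict[str, Value]:
    """Draw one configuration at random from a hypothetical search space."""
    return {
        # SGD hyperparameters. The learning rate is sampled log-uniformly
        # between 1e-4 and 1e-1 because plausible values span orders of magnitude.
        "learning_rate": 10 ** rng.uniform(-4.0, -1.0),
        "momentum": rng.uniform(0.5, 0.99),
        "batch_size": rng.choice([32, 64, 128, 256]),
        # Architecture parameters (hypothetical names and ranges).
        "num_layers": rng.randint(1, 4),
        "hidden_units": rng.randint(32, 512),
    }


def random_search(num_trials: int, seed: int = 0) -> List[Dict[str, Value]]:
    """Generate num_trials independent configurations to train and score."""
    rng = random.Random(seed)
    return [sample_config(rng) for _ in range(num_trials)]


for config in random_search(3):
    print(config)
```

Because each sample ignores the results of earlier trials, random search typically needs many full training runs to land near good trade-offs. Bayesian optimization instead uses the results observed so far to choose the next configuration to evaluate.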

These examples demonstrate how SigOpt can find robust, high-performing configurations to deploy into production 10x faster than traditional methods.

Deep Learning Hyperparameter Optimization with Competing Objectives

Aug 07, 2017

Related resources

- DLI course: Deep Learning for Industrial Inspection

- GTC session: Advances in Optimization AI

- NGC Containers: NVIDIA MLPerf Inference