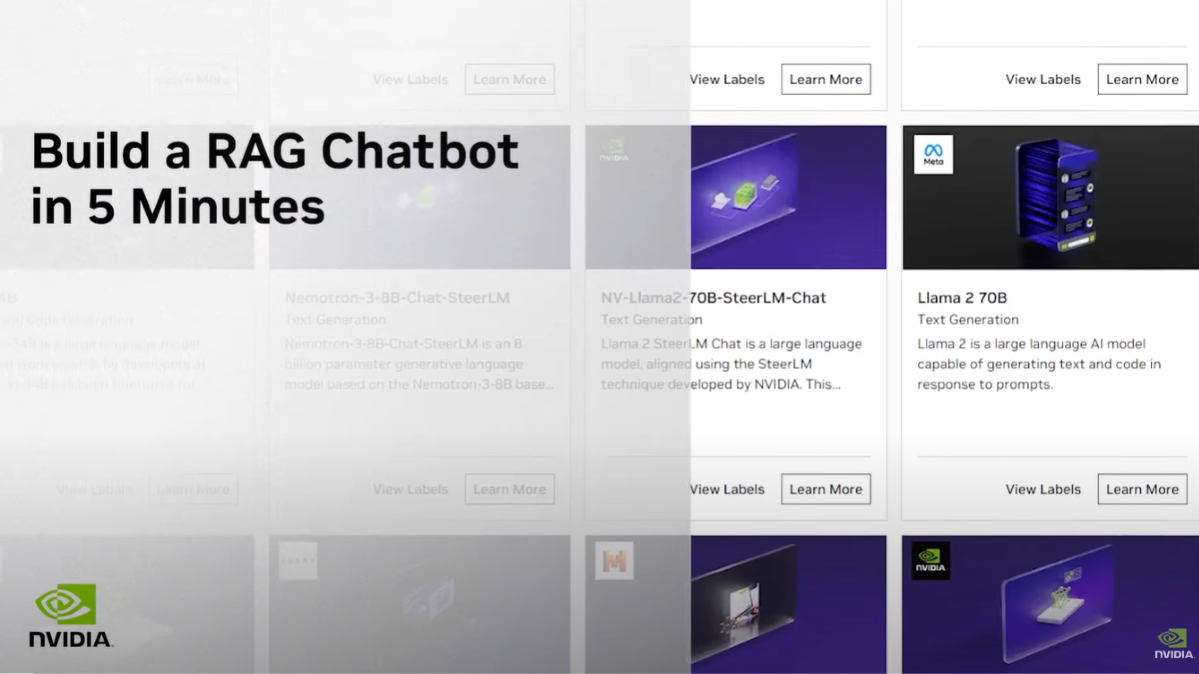

NGC, NVIDIA’s GPU-optimized hub for AI, HPC, and data analytics software, accelerates end-to-end workflows. The 100+ models and industry-specific SDKs on NGC help data scientists and developers build solutions, gather insights, and deliver business value faster. NGC software can be deployed on premises, in the cloud, or at the edge.

We have recently added new containers, models and resources to the NGC catalog. Here are a few highlights:

Containers

Deep Learning

- The 20.06 deep learning framework container releases for PyTorch, TensorFlow, and MXNet are the first to support the latest NVIDIA A100 GPUs and the latest CUDA 11 and cuDNN 8 libraries. TF32, a new precision, is available by default in the containers and provides up to 6X faster deep learning training out of the box compared to FP32 on V100.

- NVIDIA TensorFlow 1.15, with support for A100 GPUs, CUDA 11, and cuDNN 8, is now available on GitHub and as pip wheels.

- Starting with 20.06, the PyTorch containers support torch.cuda.amp, the mixed-precision functionality available in PyTorch core as the AMP package. Compared to apex.amp, torch.cuda.amp is more flexible and intuitive. For more details, read the recent blog post from PyTorch.
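As a rough illustration of the torch.cuda.amp workflow mentioned above, here is a minimal sketch of a mixed-precision training loop. The toy model, random data, and hyperparameters are hypothetical and chosen only for illustration, and the CPU fallback is an assumption added so the snippet runs without a GPU. Recent PyTorch releases may emit deprecation warnings for the `torch.cuda.amp` entry points.

```python
import torch
from torch import nn

# Fall back to plain FP32 on CPU so the sketch still runs without a GPU.
use_amp = torch.cuda.is_available()
device = torch.device("cuda" if use_amp else "cpu")

# Hypothetical toy model and random data, for illustration only.
model = nn.Linear(16, 4).to(device)
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)
loss_fn = nn.MSELoss()
inputs = torch.randn(8, 16, device=device)
targets = torch.randn(8, 4, device=device)

# GradScaler scales the loss to reduce the risk of FP16 gradient underflow.
scaler = torch.cuda.amp.GradScaler(enabled=use_amp)

losses = []
for step in range(3):
    optimizer.zero_grad()
    # Inside autocast, eligible ops run in reduced precision on the GPU.
    with torch.cuda.amp.autocast(enabled=use_amp):
        outputs = model(inputs)
        loss = loss_fn(outputs, targets)
    scaler.scale(loss).backward()  # backward pass on the scaled loss
    scaler.step(optimizer)         # unscales gradients; skips the step on inf/NaN
    scaler.update()                # adjusts the scale factor for the next iteration
    losses.append(loss.item())
```

Passing `enabled=False` to both `autocast` and `GradScaler` turns them into no-ops, so the same loop can serve as an FP32 baseline when comparing results.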

NVIDIA Linux4Tegra (L4T)

- The NVIDIA L4T package provides the bootloader, kernel, necessary firmware, NVIDIA drivers, flashing utilities, and a sample filesystem for use on Jetson systems. The software packages contained in L4T provide the functionality necessary to run Linux on Jetson devices (Xavier, TX2, and Nano).

HPC

- NAMD 3.0 Alpha: NAMD, a popular HPC application, is a parallel molecular dynamics code designed for high-performance simulation of large biomolecular systems. The latest alpha version of NAMD shows a 9X speedup on A100 GPUs. You can now download the NAMD/VMD container from NGC.

- You can also download some of our newly updated HPC containers, such as GROMACS, AutoDock 4.2, Chroma, LAMMPS, RELION, and ParaView, from NGC.

Additional Resources

- Learn more about how you can use the containers and models used in MLPerf to accelerate your AI application.

- Natural language processing (NLP) is one of the most challenging tasks for AI because a model needs to understand context, phonics, and accent to convert human speech into text. Learn how you can use a pre-trained BERT-Large model from NGC and deploy it using TensorRT.

Upcoming Webinars

Join our live webinars to learn more about how NGC can help accelerate your AI and HPC workflows: