By Mokshith Voodarla, Josh Hejna, Anish Singhani, Rahul Amara

As robots take on more everyday tasks around the world, such as delivering mail and food or giving directions, they need to navigate indoor environments reliably. Over the summer, four high school NVIDIA interns, Team CCCC (ForeSee), developed a robust, low-cost solution to this challenge.

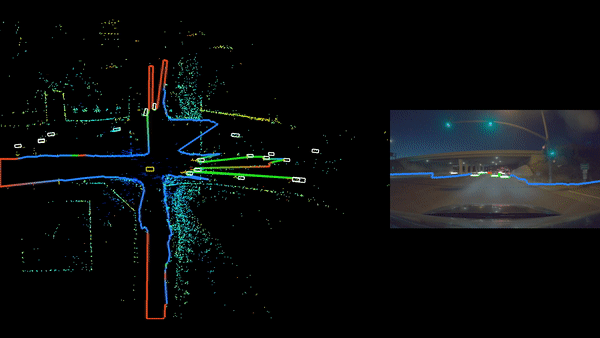

Indoor environments include many hazards that low-cost distance cameras or 2D LiDARs cannot detect, such as mesh-like railings, stairs, and glass walls. Traditionally, this problem has been solved with 3D LiDAR, which uses laser distance scans to detect obstacles and gaps in the floor at any height. However, even low-end products start at $8,000, which limits their use in most consumer applications.

Rather than adding more specialized sensors, as has traditionally been done, the team found that advances in deep learning make hazard avoidance possible with a single general-purpose sensor: the camera.

Essentially, the camera had to detect the traversable plane in front of it in order to mark safe and unsafe zones. Unsafe zones range from simple obstacles such as chairs or walls, which a laser range finder can detect, to complex ones such as stairs, railings, and glass walls.

To detect complex obstacles using a single camera, the team trained the DeepLab V3 neural network architecture, with ResNet-50 v2 as a feature extractor, to segment free space (space not occupied by obstacles) on the ground in front of the robot. This lets the robot travel through hazardous environments that it could not previously handle with a 2D LiDAR alone. The detected hazardous areas are then fed into an autonomous navigation system that directs the robot as it moves.
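To make the idea of feeding segmented free space into navigation more concrete, here is a minimal sketch of one common way to post-process such a mask. It is not taken from the team's code: the function name `free_space_boundary` and the assumption that the network output has already been thresholded into a binary mask (`True` = free floor) are both hypothetical. For each image column, it scans upward from the bottom of the frame (the area closest to the robot) and reports the first pixel that is not free, which roughly marks where the traversable floor ends in that direction.

```python
import numpy as np


def free_space_boundary(free_mask: np.ndarray) -> np.ndarray:
    """Return, per image column, the row of the nearest non-free pixel.

    free_mask is a 2D array of shape (height, width) where a truthy
    value means "free floor". Scanning starts at the bottom row, which
    is assumed to be closest to the robot. Columns that are free all
    the way to the top of the image are reported as -1.
    """
    height, _ = free_mask.shape
    blocked = ~free_mask.astype(bool)

    # Flip vertically so that index 0 is the bottom row of the image.
    blocked_from_bottom = blocked[::-1, :]

    # argmax returns the first True along the axis (0 if there is none).
    first_blocked = blocked_from_bottom.argmax(axis=0)
    has_obstacle = blocked_from_bottom.any(axis=0)

    # Convert back to row indices in the original (unflipped) image.
    rows = (height - 1) - first_blocked
    return np.where(has_obstacle, rows, -1)


if __name__ == "__main__":
    # Hypothetical 4x3 mask: 1 = free floor, 0 = hazard or non-floor.
    mask = np.array([
        [0, 0, 1],
        [1, 0, 1],
        [1, 1, 1],
        [1, 0, 1],
    ])
    print(free_space_boundary(mask))
```

A per-column boundary like this could then be projected into the robot's frame and merged with the 2D LiDAR data, although the projection step depends on camera calibration that is outside the scope of this sketch.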

Google’s TensorFlow visualization tool, TensorBoard, was used to visualize the model architecture and monitor network training on NVIDIA Tesla V100 GPUs. TensorBoard can display every node in the TensorFlow graph and plot model metrics; in this case it was used to track model loss.
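As an illustration of the kind of scalar that gets plotted during training, the sketch below computes a pixel-wise binary cross-entropy between predicted free-space probabilities and a ground-truth mask. This is an assumption for illustration only: the post does not state which loss the team used, and the function name `pixelwise_bce` is hypothetical. Cross-entropy is simply a common choice for segmentation networks.

```python
import numpy as np


def pixelwise_bce(prob_free: np.ndarray,
                  target_free: np.ndarray,
                  eps: float = 1e-7) -> float:
    """Mean binary cross-entropy over all pixels.

    prob_free   -- predicted probability that each pixel is free floor
    target_free -- ground-truth mask (1 = free, 0 = not free)
    eps         -- clipping value that keeps log() finite
    """
    p = np.clip(prob_free.astype(np.float64), eps, 1.0 - eps)
    t = target_free.astype(np.float64)
    per_pixel = -(t * np.log(p) + (1.0 - t) * np.log(1.0 - p))
    return float(per_pixel.mean())


if __name__ == "__main__":
    target = np.array([[1, 0], [1, 1]])
    uncertain = np.full((2, 2), 0.5)                  # no information
    confident = np.array([[0.9, 0.1], [0.9, 0.9]])    # mostly correct
    print(pixelwise_bce(uncertain, target))
    print(pixelwise_bce(confident, target))
```

A value like this, logged once per training step, produces the falling loss curve that a tool such as TensorBoard displays.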

The interns’ final robot integrates an affordable robot development platform, a low-cost 2D LiDAR, an ordinary webcam, and an NVIDIA Jetson TX2 supercomputer on a module, with the Robot Operating System (ROS) controlling it all. The TensorFlow deep neural network was tuned and trained on an NVIDIA Tesla V100, with CUDA and cuDNN accelerating both training and execution. These high-performance tools allow for rapid iteration in model selection and hyperparameter tuning.

“Our work highlights how deep learning is critical to robotics development and also becoming more accessible to anyone who wants to take their inspiration and run,” the interns said.

Their code can be found on GitHub.