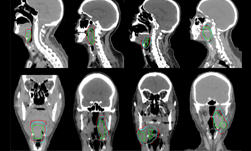

Measuring how tumors react to cancer treatment plays a major role in determining a patient’s outcome. The process, normally performed by trained radiologists, is labor-intensive, subjective and prone to inconsistency. To help alleviate the problem, researchers at the National Institutes of Health, the Ping An Insurance company, and a researcher presently at NVIDIA developed a deep learning-based method that can automatically annotate tumors in cancer patients.

“Measuring tumor diameters requires a great deal of professional knowledge and is time-consuming. Consequently, it is difficult and expensive to manually annotate large-scale datasets,” the researchers stated in their paper.

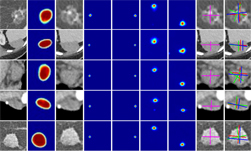

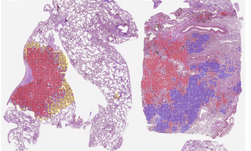

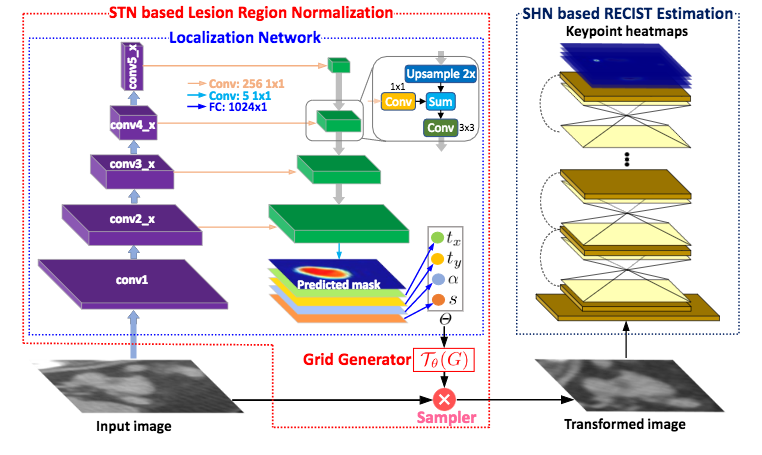

Using NVIDIA TITAN Xp GPUs and the cuDNN-accelerated PyTorch deep learning framework, the team trained their convolutional neural networks to annotate tumors according to RECIST (Response Evaluation Criteria In Solid Tumors), a set of published rules that define when cancer patients improve. The neural network was trained on thousands of images from the DeepLesion dataset, which consists of 32,735 images measured via RECIST.
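To make the idea of a RECIST mark concrete, here is a minimal Python sketch of how a lesion's diameters might be derived from such an annotation. It assumes a mark is stored as two line segments in pixel coordinates, one for the longest diameter and one for the roughly perpendicular short diameter. The function names, the coordinate values, and the pixel spacing are hypothetical illustrations, not taken from the researchers' paper or code.

```python
import math

def recist_diameters(long_axis, short_axis, pixel_spacing_mm=1.0):
    """Return (long_mm, short_mm) for two segments given as ((x1, y1), (x2, y2)).

    pixel_spacing_mm is an assumed isotropic in-plane spacing of the CT slice.
    """
    (x1, y1), (x2, y2) = long_axis
    (x3, y3), (x4, y4) = short_axis
    long_mm = math.hypot(x2 - x1, y2 - y1) * pixel_spacing_mm
    short_mm = math.hypot(x4 - x3, y4 - y3) * pixel_spacing_mm
    return long_mm, short_mm

def axes_are_perpendicular(long_axis, short_axis, tol_deg=5.0):
    """Check that the two segments are within tol_deg of a right angle."""
    (x1, y1), (x2, y2) = long_axis
    (x3, y3), (x4, y4) = short_axis
    ax, ay = x2 - x1, y2 - y1
    bx, by = x4 - x3, y4 - y3
    norm = math.hypot(ax, ay) * math.hypot(bx, by)
    if norm == 0:
        return False  # a degenerate (zero-length) segment has no direction
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_angle = max(-1.0, min(1.0, (ax * bx + ay * by) / norm))
    angle_deg = math.degrees(math.acos(cos_angle))
    return abs(angle_deg - 90.0) <= tol_deg

# Hypothetical annotation in pixel coordinates with 0.8 mm pixels.
long_axis = ((10, 10), (40, 50))
short_axis = ((33, 24), (17, 36))
long_mm, short_mm = recist_diameters(long_axis, short_axis, pixel_spacing_mm=0.8)
print(f"long diameter: {long_mm:.1f} mm, short diameter: {short_mm:.1f} mm")
print("perpendicular:", axes_are_perpendicular(long_axis, short_axis))
```

A check like `axes_are_perpendicular` is one simple way to sanity-test annotations, whether they come from a radiologist or from a model's predicted endpoints.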

“Today, the majority of clinical trials evaluating cancer treatments use RECIST as an objective response measurement. Therefore, the quality of RECIST annotations will directly affect the assessment result and therapeutic plan,” the researchers said. “We are the first to automatically generate RECIST marks in a roughly labeled lesion region.”

The team says their neural network is highly effective, producing annotations with less variability than those of human radiologists.

The paper has been accepted and will be presented at the 21st International Conference on Medical Image Computing & Computer Assisted Intervention (MICCAI) in Granada, Spain, later this year.


Related resources

- DLI course: Modeling Time-Series Data with Recurrent Neural Networks in Keras

- DLI course: Image Classification with TensorFlow: Radiomics - 1p19q Chromosome Status Classification

- GTC session: How Artificial Intelligence is Powering the Future of Biomedicine

- GTC session: Creating AI-Powered Hardware Solutions for Medical Imaging Applications

- GTC session: Early Diagnosis of Cancer Cachexia Using Body Composition Index as the Radiographic Biomarker

- NGC Containers: MATLAB