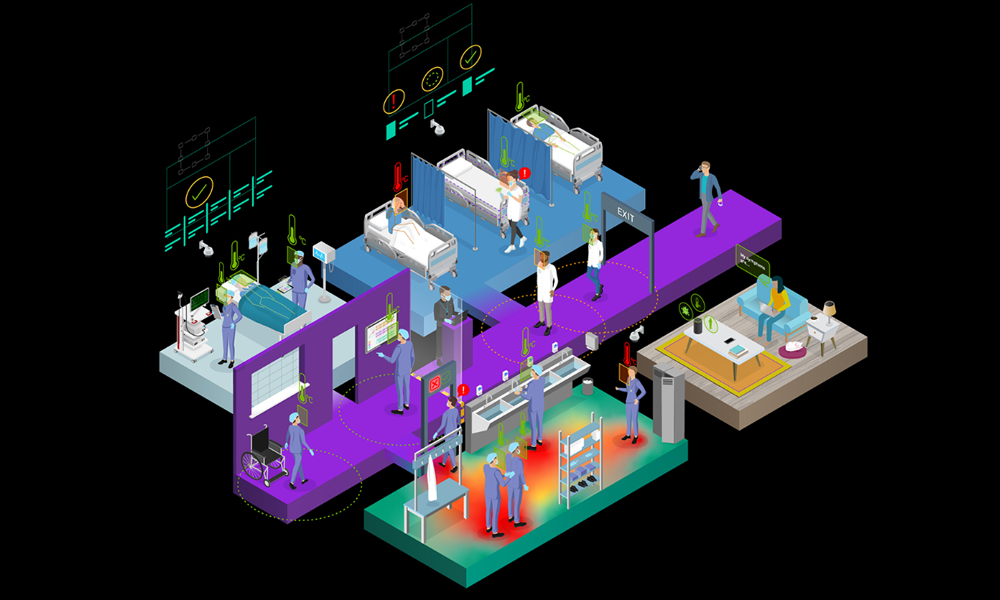

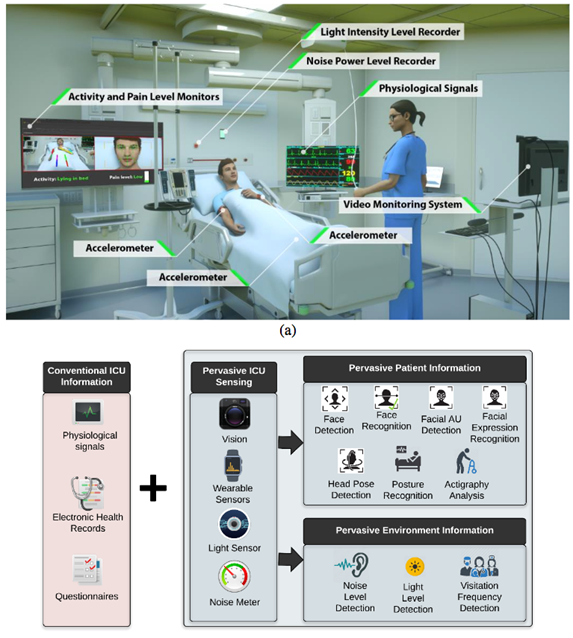

Researchers at the University of Florida developed a deep learning system that analyzes patient movement and expressions, as well as environmental factors, to better serve patients in the intensive care unit (ICU). The study is the first to use an autonomous system for patient monitoring in the ICU.

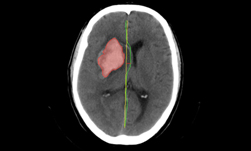

The system can detect elements such as faces, expressions, head pose, posture, visitation frequency, room sound pressure level, light intensity, sleep characteristics and actigraphy in real time.
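The brief does not say how the system computes room sound pressure level. For reference, SPL is conventionally reported in decibels relative to 20 µPa: SPL = 20·log10(p_rms / 20 µPa), where p_rms is the root-mean-square of the pressure samples in a window. The sketch below is a minimal illustration under stated assumptions, not the researchers' code: it assumes microphone samples have already been calibrated to pascals with no DC offset, and the function name `sound_pressure_level_db` is hypothetical.

```python
import math
from typing import Sequence

# Conventional reference pressure for sound in air: 20 micropascals.
P_REF_PA = 20e-6


def sound_pressure_level_db(pressure_pa: Sequence[float]) -> float:
    """Return the SPL (dB re 20 uPa) of one window of pressure samples.

    Hypothetical helper. Assumes samples are calibrated to pascals
    (microphone sensitivity already applied) and have no DC offset.
    """
    if len(pressure_pa) == 0:
        raise ValueError("need at least one sample")
    # Root-mean-square pressure over the window.
    rms = math.sqrt(sum(p * p for p in pressure_pa) / len(pressure_pa))
    if rms == 0.0:
        # Silence: the logarithm diverges, so report negative infinity.
        return float("-inf")
    return 20.0 * math.log10(rms / P_REF_PA)


if __name__ == "__main__":
    # One second of a 1 kHz tone with 1 Pa peak amplitude, sampled at 48 kHz.
    fs = 48_000
    tone = [math.sin(2 * math.pi * 1000 * n / fs) for n in range(fs)]
    print(f"{sound_pressure_level_db(tone):.1f} dB")  # about 91.0 dB
```

A signal with an RMS pressure of 1 Pa corresponds to roughly 94 dB; a continuous monitor would apply a function like this to successive windows (for example, one per second) to produce a time series of room noise levels.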

“It has been shown that self-report and manual observations can suffer from subjectivity, poor recall, a limited number of administrations per day, and high staff workload,” the researchers wrote in their research paper. “This lack of granular and continuous monitoring can prevent timely intervention strategies.”

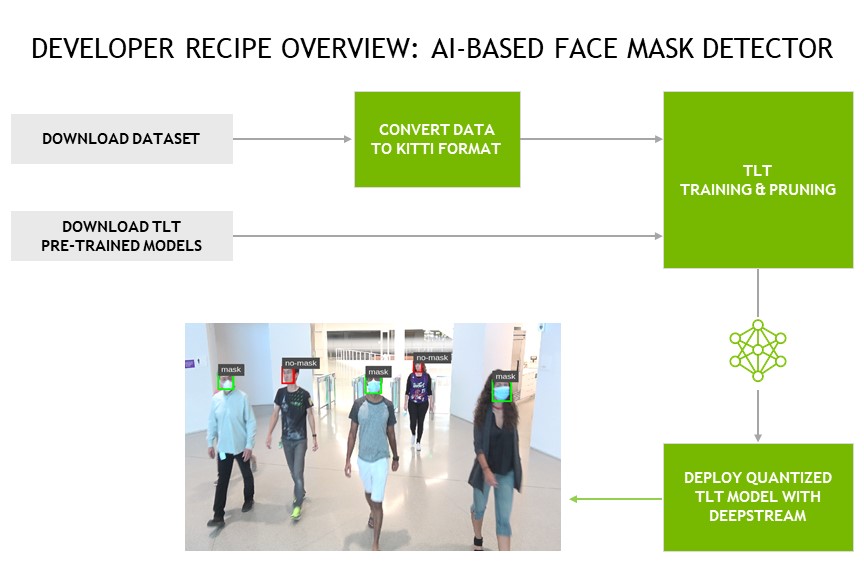

Using NVIDIA TITAN Xp GPUs and the cuDNN-accelerated TensorFlow and Caffe deep learning frameworks, the team trained their system on a dataset of more than 53 million video frames, 1,000 hours of accelerometer data, 768 hours of sound pressure data, and 456 hours of light intensity data.

“AI technology can assist in administering repetitive patient assessments in real time, thus potentially enabling more timely interventions,” the researchers said. “AI in critical care settings can also reduce nurses’ workload, allowing them to spend time on more critical tasks.”

For inference, the team used the same GPUs they used for training.

The system could be used to detect delirium, as well as other medical issues that require a quick response. The researchers say that, using off-the-shelf products, the system can be installed for as little as $300 per ICU room.

AI Assists Doctors Monitor ICU Patients

May 02, 2018
