Manually colorizing black and white video is labor intensive and a tedious process. But now, a new deep learning based algorithm developed by NVIDIA researchers promises to make the process a lot easier — the new framework allows visual artists to simply colorize one frame in a scene and the AI goes to work by colorizing the rest of the scene in real time.

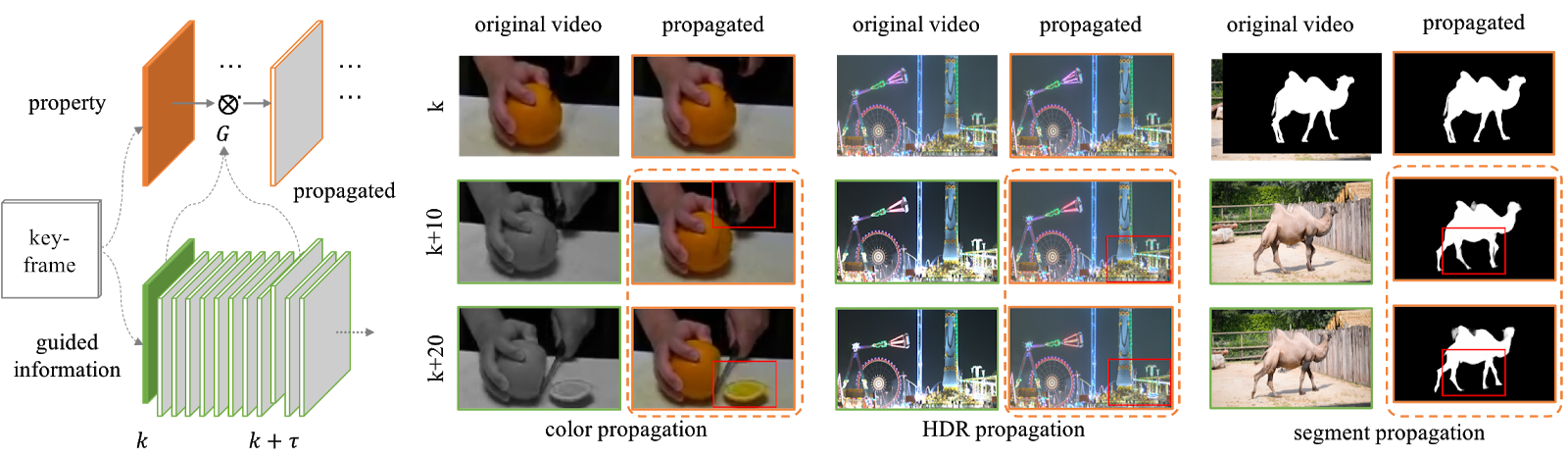

“Videos contain highly redundant information between frames. Such redundancy has been extensively studied in video compression and encoding, but is less explored for more advanced video processing such as colorizing a video,” said Sifei Liu, Researcher at NVIDIA and the author of this paper. “Now, colorizing a full video can be easily achieved by annotating at sparse locations in only a few key-frames,” Liu stated.

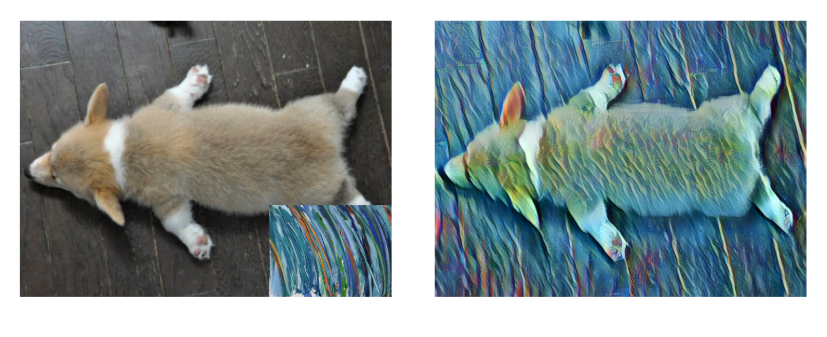

The convolutional neural network infers what the colors should be from just one colorized frame and fills in the color in the remaining frames. What makes this work unique is that the consequent colorization can be achieved via an interactive method in which the user annotates a part of the image, resulting in the finished product.

Using NVIDIA TITAN XP GPUs, Liu and her colleagues trained this hybrid network on hundreds of videos from multiple datasets for color, HDR, and mask propagation. Taking the example of color and mask propagation, she pre-trained the model on synthesized frame pairs generated from the MS-COCO dataset and then fine-tuned the network on the ACT dataset which contains 7260 video sequences with about 600,000 frames.

Liu says the framework is fast and can achieve real time results. The method also produces better quantitative results than several previous state-of-the-art methods, as explained in the work.

“The images have fewer artifacts and the colors are more vibrant,” Liu said. “The STPN provides a general method for propagating information over time in videos. In the future, we will explore how to incorporate mid level and high-level vision cues, such as detection, tracking, semantic/instance segmentation, for temporal propagation.” Liu and the team stated in the paper.

The work will be presented at the European Conference on Computer Vision (ECCV) in Munich, Germany taking place on September 8-14.

You can learn more about NVIDIA’s research at www.nvidia.com/research

Read more>