We sat down with Epic Games to discuss what Unreal Engine 4.22 brings to the table for developers.

- Nick Penwarden, Director of Engineering, Unreal Engine, Epic Games

- Marcus Wassmer, Director of Engineering, Rendering, Epic Games

- Juan Cañada, Lead Ray Tracing Engineer, Epic Games

How will ray tracing change a 3D artist’s content creation workflow?

Juan: Ray tracing not only delivers higher quality, it is also easier to use, more predictable, and generates fewer visual artifacts than traditional raster techniques. When using ray tracing, artists can focus more on the artistic side of things and less on understanding the internals of the algorithms.

Nick: Ray tracing allows artists to light their scenes using area lights and area shadows, the same as you would in the real world. Doing so lets them leverage the decades of experience we have lighting sets for film and photography. It also frees them from having to worry about some of the more technical details when building content. For instance, when using ray tracing for global illumination there is no need to meticulously author lightmap UVs to achieve high-quality results and avoid color bleeding, as is necessary for offline solutions.

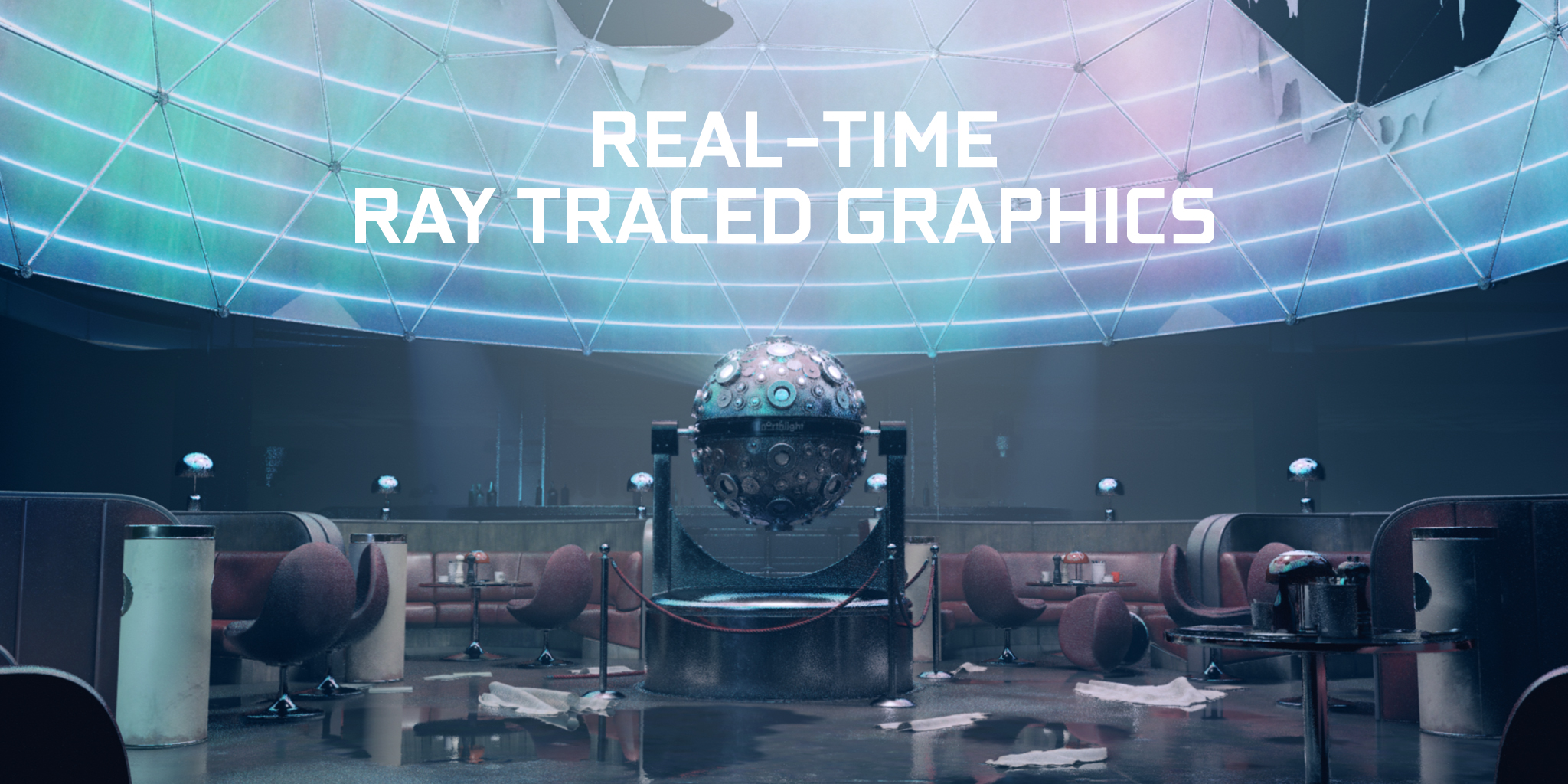

What does real-time ray tracing mean for virtual production in film and broadcast TV pipelines?

Juan: Ray tracing is a standard in film; pretty much all the movies made in the last decade use ray tracing and path tracing to generate images because of the superior quality, predictability, and ease of use. However, these techniques have been slow, requiring a tremendous amount of rendering time, sometimes even hundreds of hours per frame. That has made it impossible to use ray tracing in TV or film productions that can’t afford the cost of massive render farms. Real-time ray tracing will dramatically alleviate this problem, bringing ray tracing to fields where its cost in time or money was prohibitive until now.

What are the first things a developer should do to get started with ray tracing?

Juan: Developers will need an RTX card and Windows 10 RS5 or later to get started. Download Unreal Engine 4.22 from GitHub, or use one of the latest preview builds available through the Epic launcher, to see ray tracing code in action. We are delighted that developers use Unreal Engine to learn, explore and go beyond what has been possible previously.

What pitfalls might a developer face when they add ray tracing to their pipeline, and how can they avoid them?

Juan: Implementing a low-level DXR layer on top of which you can write ray-tracing algorithms requires knowledge of nontrivial topics such as DX12. This could be a pitfall for developers who know ray tracing but not modern real-time APIs. The way to avoid that is simple: use Unreal Engine 4.22, which does all the hard work under the hood and allows you to start writing ray-tracing code from day one.

Can you provide some guidance on how to get the best results from denoisers?

Juan: Denoisers are a hot topic, and they are changing so quickly that it is difficult to give tips that stay valid for more than a few months. Therefore, we have worked to encapsulate the complexity of the denoisers so that the user does not need to worry about what is going on under the hood.

Nick: UE4 also features a plugin API for adding new denoisers. This will allow researchers to experiment with new algorithms directly in Unreal. It also means that as new denoisers are developed, users can quickly add them to UE4 and try them out with their own content!

Of the many rendering updates that have been added to Unreal Engine 4.22, which do you think will be most broadly used, and why?

Marcus: So far, it looks like the biggest bang for buck is with ray-traced area light shadows. There’s no good solution for these types of shadows in rasterization, and shadow rays are some of the cheapest rays to use in DXR due to ray coherence and most objects not requiring material evaluation. This feature opens the door to artists lighting scenes as they do on real film sets. Ray-traced global illumination is also very promising, but quite expensive at the moment.
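To illustrate why shadow rays are cheap, here is a minimal Python sketch of area light shadows computed with shadow rays. It is not Unreal Engine or DXR code: the function names, the rectangular light lying in a horizontal plane, and the single spherical occluder are all assumptions made for illustration. Each shadow ray only answers a yes/no question ("is anything between this point and the light?"), so no material is evaluated on a hit.

```python
import math
import random


def segment_hits_sphere(origin, target, center, radius):
    """Return True if the segment origin -> target intersects the sphere."""
    d = [t - o for o, t in zip(origin, target)]   # segment direction
    m = [o - c for o, c in zip(origin, center)]   # origin relative to sphere
    a = sum(x * x for x in d)
    b = 2.0 * sum(x * y for x, y in zip(d, m))
    c = sum(x * x for x in m) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return False                              # the line misses the sphere
    root = math.sqrt(disc)
    t0 = (-b - root) / (2.0 * a)
    t1 = (-b + root) / (2.0 * a)
    # Only hits strictly between the shaded point and the light count.
    return 0.0 < t0 < 1.0 or 0.0 < t1 < 1.0


def area_light_visibility(point, light_center, half_width, half_depth,
                          occluder_center, occluder_radius,
                          samples=256, rng=None):
    """Estimate the fraction of a rectangular area light visible from point.

    The light is assumed to lie in a horizontal plane (constant y).
    Returns 0.0 in full shadow, 1.0 when fully lit, and values in
    between inside the penumbra.
    """
    rng = rng or random.Random(0)                 # seeded for repeatability
    unoccluded = 0
    for _ in range(samples):
        # Pick a random point on the light's surface.
        light_point = (
            light_center[0] + rng.uniform(-half_width, half_width),
            light_center[1],
            light_center[2] + rng.uniform(-half_depth, half_depth),
        )
        # Shadow ray: a pure visibility test, no shading on hit.
        if not segment_hits_sphere(point, light_point,
                                   occluder_center, occluder_radius):
            unoccluded += 1
    return unoccluded / samples


if __name__ == "__main__":
    light = (0.0, 10.0, 0.0)
    sphere_center, sphere_radius = (0.0, 5.0, 0.0), 1.0
    for x in (0.0, 2.0, 10.0):
        v = area_light_visibility((x, 0.0, 0.0), light, 1.0, 1.0,
                                  sphere_center, sphere_radius)
        print(f"x = {x:4.1f}  visible fraction of light = {v:.2f}")
```

Points directly under the sphere see none of the light, distant points see all of it, and points in between see only part of it, which is what produces the soft penumbra of an area shadow. A production renderer would trace far fewer rays per pixel and rely on a denoiser to clean up the result.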

Epic has always been an early adopter of cutting-edge technology going back to programmable shading. As a developer, what are the advantages of being an early adopter?

Juan: Being an early adopter helps you acquire knowledge of state-of-the-art techniques that usually become mainstream a few years later. That gives you a competitive advantage in your professional life. Also, it is fun!

Marcus: Early adoption also lets us have a close relationship with people working on the hardware and writing the spec. There are often non-obvious issues that crop up only when a group starts using a new technology in earnest. Good collaboration at the start helps us correct problems early, sometimes avoiding problems that could last for years otherwise.

Let’s talk a bit about the “Troll” demo. What were you aiming to demonstrate with this work?

Juan: In 2018 we showed two ray-tracing demos that were considered watershed moments in the history of rendering. Real-time ray tracing with cinematographic quality was considered impossible only a few years ago. However, these demos were inaccessible to standard UE4 users because they used expensive hardware not available to the general public. Also, these demos used prototype code that was not part of the UE4 public code base.

Our goal this year was to make a demo using the code available in 4.22 and a single GPU that people can buy at a reasonable price. That is the key idea of Troll. This demo is also a big leap for UE4 in aspects beyond rendering, because it has helped us significantly improve areas that are key for real-time cinematography, such as UE4’s Alembic pipeline, geometry cache plugin, and Sequencer cinematic editor.

What technical hurdles did you face in the creation of “Troll,” and how did you overcome them?

Juan: Our previous ray-tracing demos looked great but were simpler scenarios: just a few objects and two or three lights with a much more limited set of materials. Troll is a short movie that takes place in a far more complex environment, with an order of magnitude more geometry, virtual humans, vegetation, effects, clothes, skin, and hair, all with real-time ray tracing.

There were many challenges that we solved case by case thanks to the extraordinary talent and dedication of the team. Troll has been instrumental in implementing a more mature ray-tracing technology in Unreal Engine 4.22 that is capable of producing cinematographic results in real time.

In what instances should ray tracing replace rasterization, and in which cases should a developer stick with a raster solution?

Juan: It all depends on the nature of the project. Raster will keep being used for primary visibility in the majority of cases for a long time. Games that need high frame rates will probably continue to use raster techniques for quite a long time, and will only add ray tracing for things such as shadows, which add a great quality boost at very good speed. Other ray-tracing effects, such as reflections and global illumination, are more expensive, so for some time we will see people using raster or hybrid techniques with sophisticated heuristics that fall back to ray tracing or raster as appropriate in each scenario. Fields that do not need such a high number of frames per second, like film and TV, will quickly adopt real-time ray tracing as the de facto solution.