Five teams of developers gathered at the Silicon Valley Virtual Reality (SVVR) headquarters in California last month to learn about new features of IBM Watson’s Visual Recognition service. These features include the ability to train and retrain custom classes on top of the stock API, and combined with the Watson Unity SDK they open up new and interesting use cases in VR. Staff from IBM, NVIDIA and SVVR were on-site to help developers get the most out of the event.

IBM Watson offers modern AI techniques as a set of cloud-based services with simple REST APIs. The Watson Developer Cloud Unity SDK makes it easy for developers to call these APIs from the Unity development environment. The SDK is available on GitHub, so anyone can take the code and improve upon it.

For many of the developers, this was their first experience building training sets for image recognition, but the Watson API made the process relatively simple, and teams were able to get started within a few minutes.

After two days of hacking, the winning team was Watson and Waffles, which built an intriguing adventure game that required the player to sketch game objects using the Vive controller. Watson identified the sketched objects, and the game then manifested them for the player to use.

The game was innovative, combining VR adventuring with room-scale sketching using motion controllers. The winning team received an NVIDIA TITAN X GPU and an HTC Vive VR setup.

“It was fantastic to experiment with the new AI image recognition technologies in all new ways in VR, and now my mind is running wild with ideas of how AI could become an essential part of a game developer’s toolbox — allowing us to rapid prototype things that would otherwise require hours of entangled webs of logic,” said Michael Jones, developer on the Watson and Waffles team.

The runner-up teams built a game based on recognizing playing cards with the Vive’s front-facing camera, and a VR panoramic photo-viewing application that allowed users to take a virtual journey through their memories.

“I really wanted to do a Watson VR hackathon focusing on a single service — in this case visual recognition — to see what creative and interesting new use cases developers could come up with, and thanks to the high quality of participants and the support of great partners like NVIDIA, HTC and SVVR, we were blown away by the results,” said Michael Ludden, Product Manager, Developer Relations at IBM Watson. Ludden added that the team plans to host similar hackathons in the future.

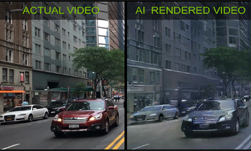

Watson uses GPU acceleration to power components of the image recognition API. Image recognition can be used in a variety of virtual and augmented reality applications, and beyond image recognition, Watson offers services for interactive speech, natural language processing, translation, speech-to-text, text-to-speech and data analytics, among others.

Download the Watson Developer Cloud Unity SDK and find more information on how to start building with Watson APIs.

Using Virtual Reality at the IBM Watson Image Recognition Hackathon

Sep 13, 2016

