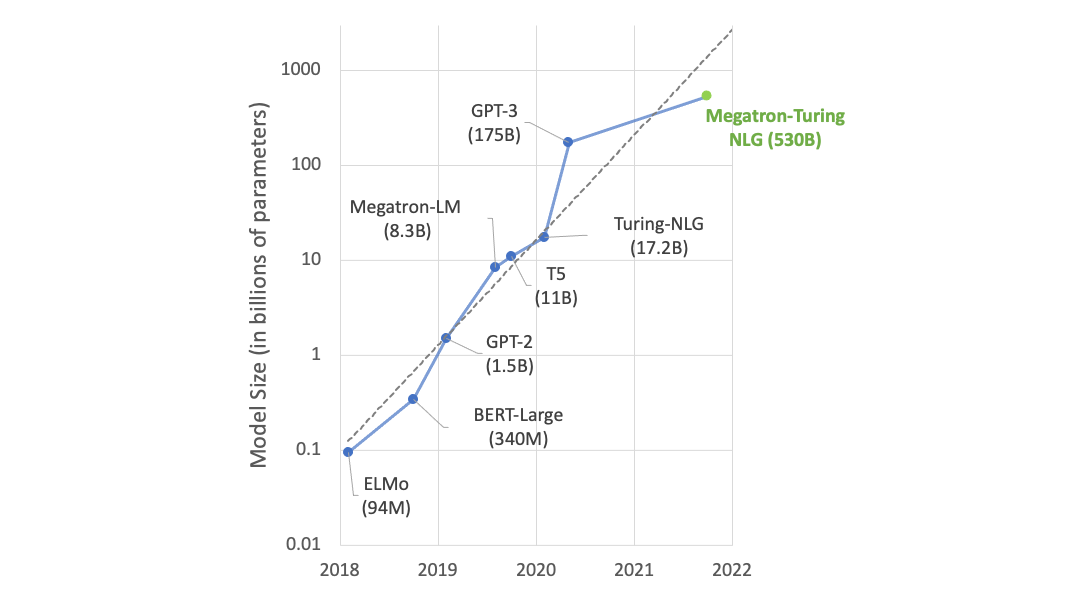

Microsoft today announced a breakthrough in conversational AI: using NVIDIA DGX-2 systems, it trained the largest transformer-based language generation model to date, with 17 billion parameters. Also today, Microsoft open-sourced DeepSpeed, a deep learning optimization library that helps developers train large models across many GPUs more efficiently.

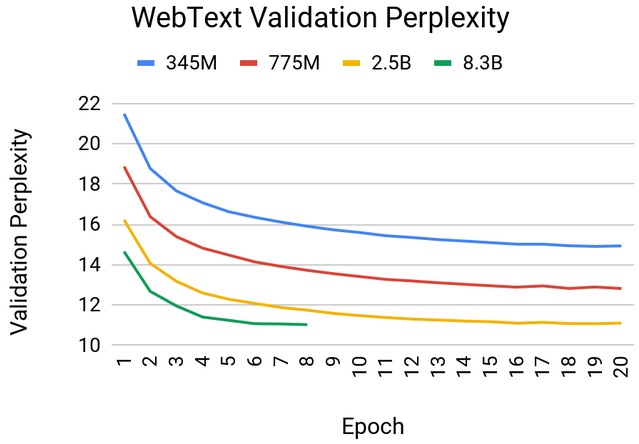

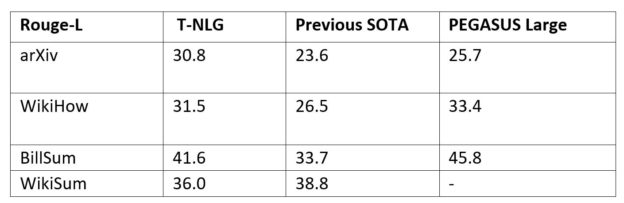

Named Turing Natural Language Generation (T-NLG), it is the largest transformer model available and achieves state-of-the-art results on a range of natural language processing tasks.

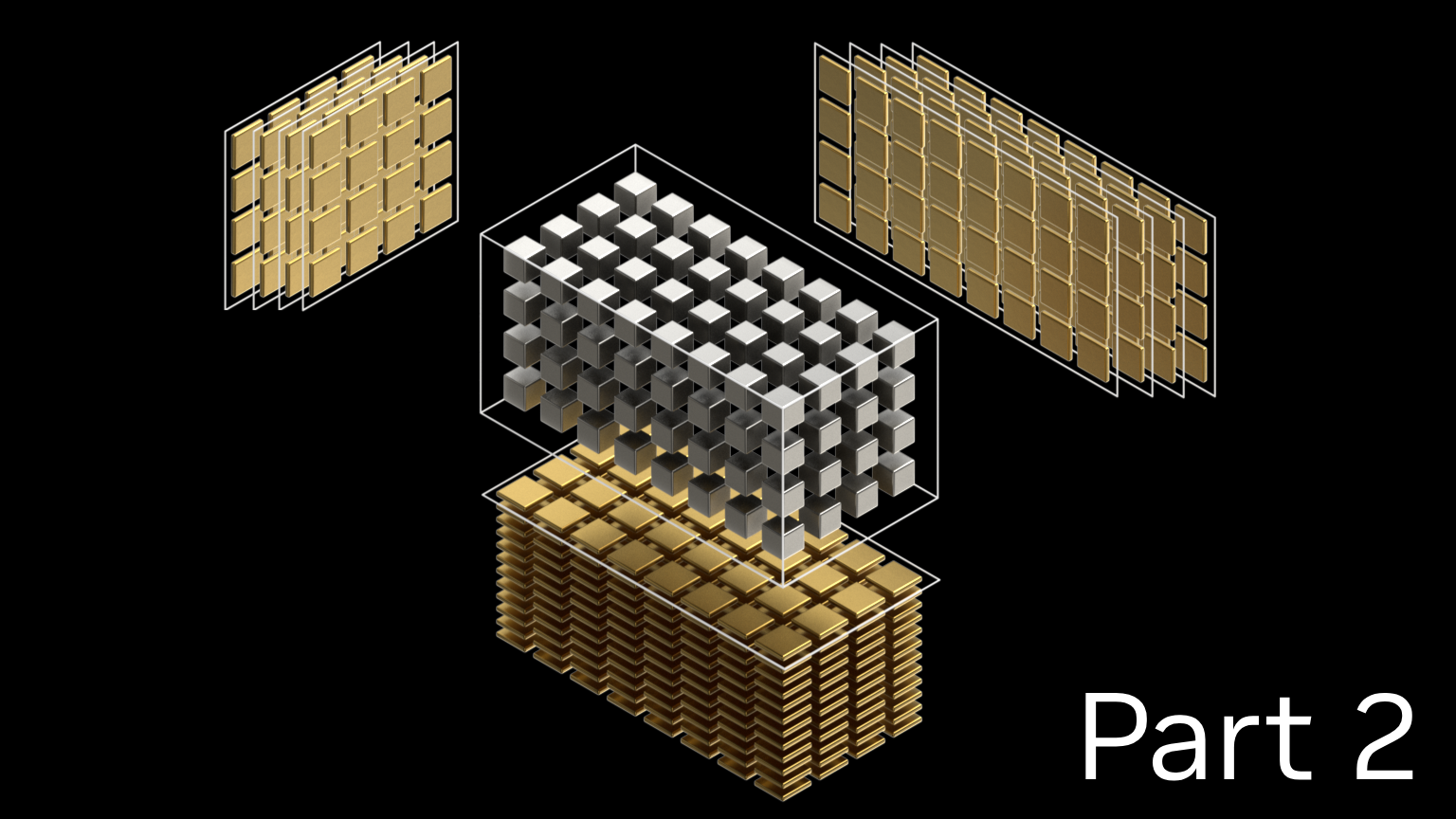

To do this, the team combined Microsoft’s DeepSpeed distributed optimizer with NVIDIA’s Megatron parallel language models and trained T-NLG on 16 NVIDIA DGX-2 systems, each with 16 NVIDIA V100 Tensor Core GPUs, interconnected via Mellanox InfiniBand.
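As a rough illustration of the scale involved, the short Python sketch below works out the total GPU count from the figures above and estimates the memory needed just to hold the model weights. The system count, GPUs per system, and parameter count come from the article; the 2 bytes per parameter (half-precision weights) and 32 GB of memory per V100 are assumptions made for this estimate, not details stated here.

```python
# Back-of-the-envelope sizing for the training setup described above.
NUM_SYSTEMS = 16        # DGX-2 systems (from the article)
GPUS_PER_SYSTEM = 16    # V100 GPUs per DGX-2 (from the article)
NUM_PARAMETERS = 17e9   # T-NLG parameter count (from the article)

BYTES_PER_PARAM = 2     # assumption: weights stored in half precision (fp16)
GPU_MEMORY_GB = 32      # assumption: 32 GB of memory per V100

total_gpus = NUM_SYSTEMS * GPUS_PER_SYSTEM
weights_gb = NUM_PARAMETERS * BYTES_PER_PARAM / 1e9

print(f"Total GPUs: {total_gpus}")
print(f"fp16 weights alone: {weights_gb:.0f} GB (one GPU: {GPU_MEMORY_GB} GB)")
print(f"Fits on a single GPU: {weights_gb <= GPU_MEMORY_GB}")
```

Under these assumptions the weights alone would exceed a single GPU's memory, before counting gradients, optimizer state, or activations, which suggests why the model has to be split across many GPUs during training.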

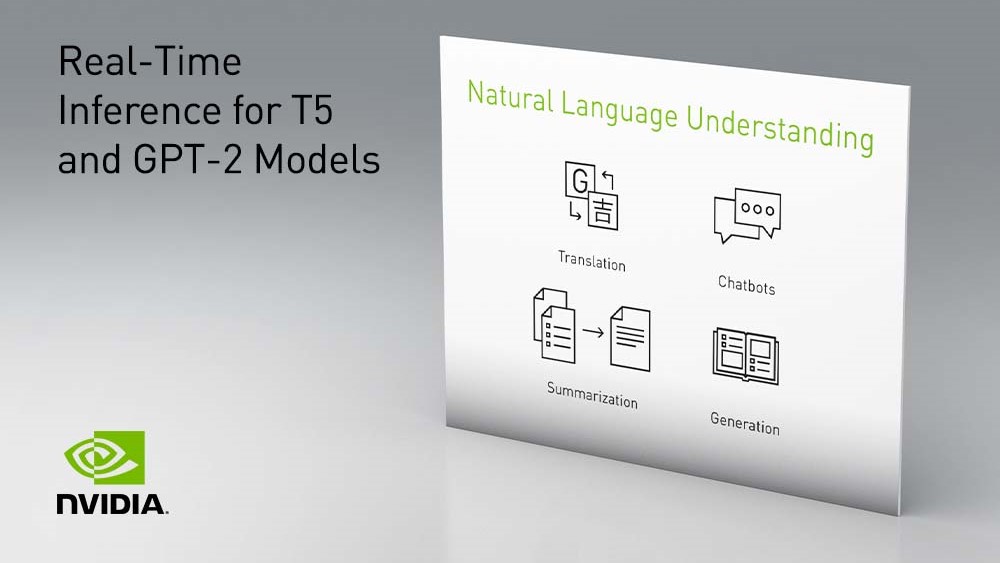

The model is designed to assist natural language processing (NLP) systems with question answering, conversational agents, and document understanding.

“Better natural language generation can be transformational for a variety of applications, such as assisting authors with composing their content, saving one time by summarizing a long piece of text or improving customer experience with digital assistants.

“Generative models like T-NLG are important for NLP tasks since our goal is to respond as directly, accurately, and fluently as humans can in any situation,” the Microsoft researchers stated in a blog post, Turing-NLG: A 17-billion-parameter language model by Microsoft.

“Previously, systems for question answering and summarization relied on extracting existing content from documents that could serve as a stand-in answer or summary, but they often appear unnatural or incoherent. With T-NLG we can naturally summarize or answer questions about a personal document or email thread.”

For more details, read the Megatron blog post and two blog posts published by Microsoft: Turing-NLG: A 17-billion-parameter language model by Microsoft, and ZeRO & DeepSpeed: New system optimizations enable training models with over 100 billion parameters. Also, see Microsoft’s GitHub repository.