To speed up simulations of phenomena such as subatomic particle interactions or the effect of haze on climate, scientists from Stanford University and the University of Oxford developed a deep learning-based method that can accelerate simulations by billions of times.

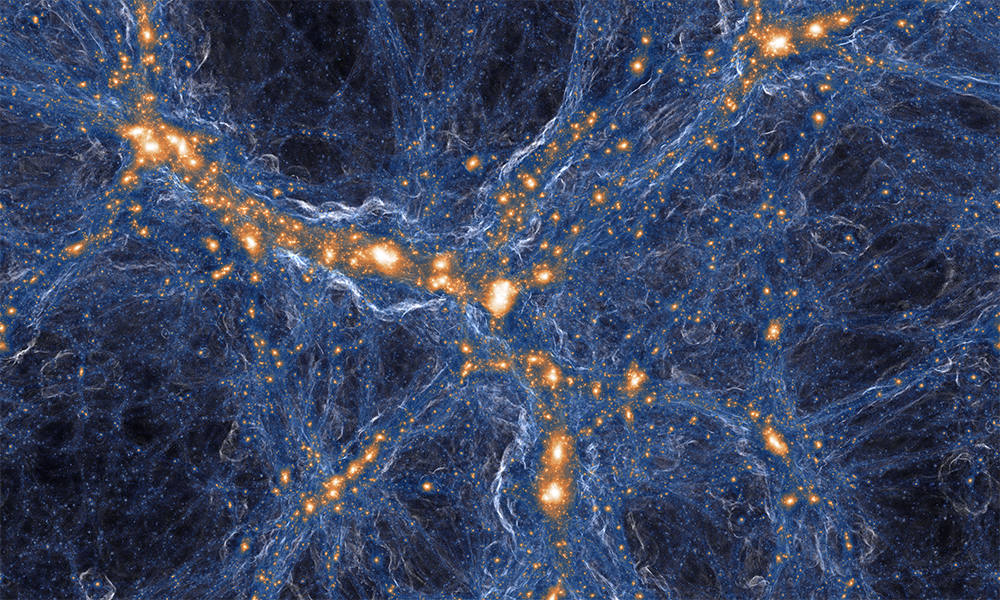

A typical computer simulation calculates how physical forces affect atoms, clouds, galaxies, or whatever else is being modeled. This approach often requires hours on the world’s fastest supercomputers.

Instead of running full physics-based simulations, AI-powered emulators quickly approximate their results. This shortcut could help scientists make the most of their time at experimental facilities.
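The general emulator idea can be illustrated with a toy example: run the costly simulation a limited number of times, fit a cheap model to those input/output pairs, then query the cheap model instead. This is a minimal sketch, not DENSE itself. `expensive_simulation` is a hypothetical stand-in for a real physics code, and a least-squares Chebyshev polynomial fit is swapped in for the neural network that DENSE would search for.

```python
import numpy as np

def expensive_simulation(x: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for a costly physics simulation.

    A real simulation might need hours per run; a cheap analytic
    function plays that role here so the example runs instantly.
    """
    return np.sin(3.0 * x) + 0.5 * x**2

# 1. Run the "expensive" simulation a limited number of times to collect
#    input/output training pairs.
rng = np.random.default_rng(seed=0)
x_train = rng.uniform(-2.0, 2.0, size=200)
y_train = expensive_simulation(x_train)

# 2. Fit a cheap surrogate. A least-squares Chebyshev polynomial fit stands in
#    for the neural network that an approach like DENSE would search for.
emulator = np.polynomial.Chebyshev.fit(x_train, y_train, deg=15, domain=[-2.0, 2.0])

# 3. Query the emulator on unseen inputs and compare it with the "real" simulation.
x_test = np.linspace(-2.0, 2.0, 50)
max_err = float(np.max(np.abs(emulator(x_test) - expensive_simulation(x_test))))
print(f"max abs error on test inputs: {max_err:.2e}")
```

In a real setting the costly part is step 1, since every training pair requires a full simulation run. That is why the number of training examples an emulator needs matters so much.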

“This is a big deal,” Donald Lucas stated in a *Science* article about the work, *From models of galaxies to atoms, simple AI shortcuts speed up simulations by billions of times*.

Lucas runs climate simulations at the Lawrence Livermore National Laboratory and was not involved in the work. However, he says if the new research holds up to peer review, “it would change things in a big way.”

The new emulator is based on a technique called Deep Emulator Network Search (DENSE), which relies on a general neural architecture search co-developed by Melody Guan, a computer scientist at Stanford, and Muhammad Kasim, a physicist at the University of Oxford.

“The method successfully accelerates simulations by up to 2 billion times in 10 scientific cases including astrophysics, climate science, biogeochemistry, high energy density physics, fusion energy, and seismology, using the same super-architecture, algorithm, and hyperparameters,” the researchers stated in their paper, *Up to two billion times acceleration of scientific simulations with deep neural architecture search*.

“Our approach also inherently provides emulator uncertainty estimation, adding further confidence in their use,” the researchers added.

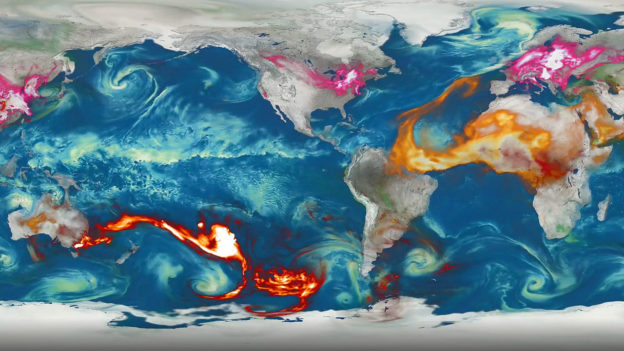

For example, the team ran a global aerosol-climate simulation based on a general circulation model (GCM) on CPUs only, which took around 1,150 CPU-hours. The DENSE emulator, running on an NVIDIA TITAN X GPU, accelerated this simulation by over two billion times.
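To give a feel for the scale of a two-billion-fold speedup, the reported 1,150 CPU-hours can be converted to seconds and divided by the speedup factor. This is back-of-the-envelope arithmetic only: it assumes the speedup is measured directly against the quoted CPU-hours, which glosses over the difference between summed CPU time and GPU wall-clock time.

```python
# Back-of-the-envelope estimate: what a ~2-billion-times speedup implies for a
# simulation that takes about 1,150 CPU-hours. Assumes the speedup applies
# directly to the quoted CPU-hours (a simplification).
cpu_hours = 1150   # reported cost of the CPU-only simulation
speedup = 2e9      # reported acceleration factor ("over two billion")

cpu_seconds = cpu_hours * 3600        # 4,140,000 seconds
emulator_seconds = cpu_seconds / speedup

print(f"CPU-only: {cpu_seconds:,.0f} s")
print(f"Emulator: {emulator_seconds * 1e3:.2f} ms")
```

Under that assumption, a computation costing roughly 48 days of single-core time shrinks to about 2 milliseconds.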

In one astronomy comparison, the emulator’s results were more than 99.9% identical to those of the full supercomputer-based simulation.

Kasim, the lead author of the study, says that he initially thought DENSE would need tens of thousands of training examples per simulation to reach these levels of accuracy. Instead, it reached them with just a few thousand examples, and in the aerosol case with only a few dozen.

The researchers are hopeful that DENSE will allow other scientists to interpret data on the fly.

“We anticipate this work will accelerate research involving expensive simulations, allow more extensive parameter exploration, and enable new, previously unfeasible computational discovery,” the researchers stated.