Many robots rely entirely on geometry to plan a collision-free path. However, purely geometric reasoning can lead an autonomous machine to conclude that it cannot drive through tall grass or over bumpy terrain to reach its goal.

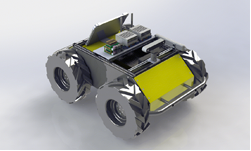

To help solve this problem, UC Berkeley researchers Greg Kahn, Pieter Abbeel, and Sergey Levine developed the Berkeley Autonomous Driving Ground Robot (BADGR). The system is an end-to-end autonomous machine that can be trained with self-supervised data gathered in real-world environments, without any simulation or human supervision.

In contrast to the geometry-only approach, BADGR learns from experience.

“Our approach can learn to navigate in real-world environments with geometrically distracting obstacles, such as tall grass, and can readily incorporate terrain preferences, such as avoiding bumpy terrain, using only 42 hours of data,” the authors stated in their paper, BADGR: An Autonomous Self-Supervised Learning-Based Navigation System, posted to the arXiv preprint server.

At the core of the system is a neural network that takes as input the current camera observations and a sequence of planned future actions. From these inputs, the network predicts whether the robot will encounter obstacles, collide, or drive over bumpy terrain.
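To make the idea concrete, here is a minimal Python sketch of how predictions like these could be used to choose among candidate action sequences. It is an illustration under stated assumptions, not the authors' implementation: `toy_predictor` is a hand-written stand-in for the trained network, and the action format, cost weights, horizon, and random-sampling search are all hypothetical choices made for this example.

```python
import random
from typing import Callable, List, Sequence, Tuple

# Hypothetical action format: (speed, steering change) per time step.
Action = Tuple[float, float]
# Hypothetical prediction format: (collision probability, bumpiness probability).
Prediction = Tuple[float, float]
Predictor = Callable[[Sequence[float], Sequence[Action]], List[Prediction]]


def toy_predictor(observation: Sequence[float],
                  actions: Sequence[Action]) -> List[Prediction]:
    """Stand-in for a learned model (assumption, not the real network).

    It ignores the observation and pretends an obstacle lies to the left
    (positive heading) and rough ground lies to the right (negative heading).
    """
    predictions = []
    heading = 0.0
    for _speed, steer in actions:
        heading += steer
        p_collision = min(1.0, max(0.0, heading))
        p_bumpy = min(1.0, max(0.0, -heading))
        predictions.append((p_collision, p_bumpy))
    return predictions


def sequence_cost(predictions: Sequence[Prediction],
                  collision_weight: float = 10.0,
                  bumpy_weight: float = 1.0) -> float:
    """Weighted sum of predicted events; the weights encode preferences."""
    return sum(collision_weight * p_col + bumpy_weight * p_bump
               for p_col, p_bump in predictions)


def choose_actions(observation: Sequence[float],
                   predictor: Predictor,
                   horizon: int = 5,
                   num_candidates: int = 64,
                   seed: int = 0) -> Tuple[List[Action], float]:
    """Sample random action sequences and keep the lowest-cost one."""
    rng = random.Random(seed)
    best_actions: List[Action] = []
    best_cost = float("inf")
    for _ in range(num_candidates):
        candidate = [(1.0, rng.uniform(-0.3, 0.3)) for _ in range(horizon)]
        cost = sequence_cost(predictor(observation, candidate))
        if cost < best_cost:
            best_actions, best_cost = candidate, cost
    return best_actions, best_cost


if __name__ == "__main__":
    plan, cost = choose_actions([0.0], toy_predictor)
    print("chosen steering changes:", [round(steer, 2) for _, steer in plan])
    print("predicted cost:", round(cost, 3))
```

In this sketch, raising `bumpy_weight` relative to `collision_weight` is one way a terrain preference could be expressed: the same predictions are reused, and only the scoring changes.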

Inside the system is an NVIDIA Jetson TX2, which is “ideal for running deep learning applications,” the researchers said.

The Jetson TX2 processes information from the onboard camera, a six-degree-of-freedom inertial measurement unit (IMU), GPS, and a 2D lidar sensor.

“The key insight behind BADGR is that by autonomously learning from experience directly in the real world, BADGR can learn about navigational affordances, improve as it gathers more data, and generalize to unseen environments,” Greg Kahn, the project’s lead researcher, stated in the Berkeley Artificial Intelligence Research post, BADGR: The Berkeley Autonomous Driving Ground Robot.

Although the team believes BADGR is a promising step towards a fully automated, self-improving navigation system, there are still problems that need to be solved, including how the robot safely gathers data in new environments, and how it can cope with humans walking around it.

“We believe that solving these and other challenges is crucial for enabling robot learning platforms to learn and act in the real world,” Kahn said.

The researchers have published their implementation, built with Anaconda, ROS, and TensorFlow, in the badgr GitHub repo.