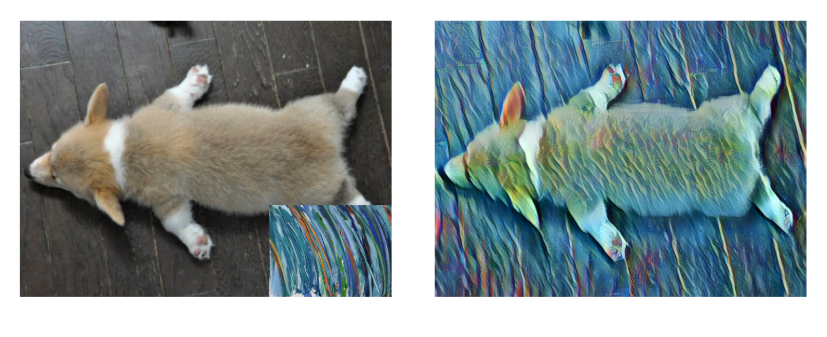

Researchers from the University of Washington and Facebook recently released a paper describing a deep learning-based system that can transform still images and paintings into animations. The algorithm, called Photo Wake-Up, uses a convolutional neural network to animate a person or character in 3D from a single still image.

“Our method works with a large variety of whole-body, fairly frontal photos, ranging from sports photos to art, and posters,” the researchers stated in their paper. “In addition, the user is given the ability to edit the human in the image, view the reconstruction in 3D, and explore it in AR.”

To demonstrate the power of the algorithm, the team used images of graffiti, cartoon characters, NBA star Stephen Curry, and Picasso paintings.

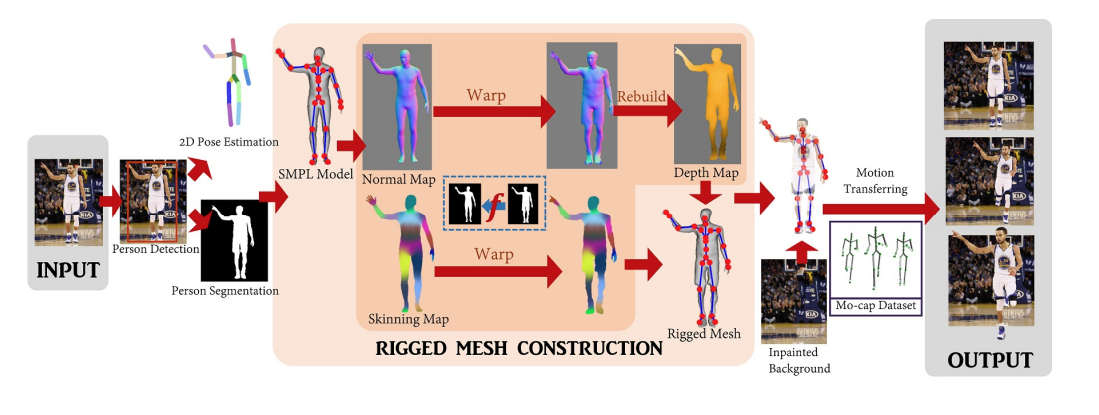

At the crux of the work is a unique approach that allows the researchers to more closely warp a 2D cutout of a person in a still image, enabling the algorithm to produce a realistic 3D animated mesh that matches the character in the image.

Using NVIDIA TITAN GPUs and the cuDNN-accelerated PyTorch deep learning framework, the researchers based their software on a pre-trained model called SMPL, which was first developed by a team at Microsoft and the Max Planck Institute for Intelligent Systems in Germany.

As shown in the graphic above, the software first segments the human body from the image and superimposes a 3D mesh onto the shape. The mesh can then be animated to bring the photo or painting to life.
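To give a rough sense of how a fitted mesh can be animated, here is a minimal sketch of linear blend skinning, the general technique that skinned body models such as SMPL build on. It is a 2D toy and not the researchers' code: the function names, the three-vertex "arm", and the weights are all hypothetical, and each bone rotates around a fixed pivot rather than following a full joint hierarchy.

```python
import math


def rotate_about(point, pivot, angle):
    """Rotate a 2D point around a pivot by `angle` radians."""
    px, py = point[0] - pivot[0], point[1] - pivot[1]
    c, s = math.cos(angle), math.sin(angle)
    return (pivot[0] + c * px - s * py, pivot[1] + s * px + c * py)


def skin_vertices(vertices, weights, pivots, angles):
    """Linear blend skinning: blend each vertex across per-bone rotations.

    vertices: list of (x, y) rest-pose positions
    weights:  per-vertex tuple of bone weights (each tuple sums to 1)
    pivots:   per-bone rotation centers (hypothetical joint positions)
    angles:   per-bone rotation angles in radians
    """
    posed = []
    for vertex, vertex_weights in zip(vertices, weights):
        x = y = 0.0
        for w, pivot, angle in zip(vertex_weights, pivots, angles):
            rx, ry = rotate_about(vertex, pivot, angle)
            x += w * rx
            y += w * ry
        posed.append((x, y))
    return posed


# Toy "arm" lying on the x-axis: shoulder bone pivots at x=0, elbow bone at x=1.
vertices = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
weights = [(1.0, 0.0), (0.5, 0.5), (0.0, 1.0)]
pivots = [(0.0, 0.0), (1.0, 0.0)]
angles = [0.0, math.pi / 2]  # keep the shoulder still, bend the elbow 90 degrees

posed = skin_vertices(vertices, weights, pivots, angles)
```

Changing `angles` from frame to frame moves the vertices smoothly, which is the basic idea behind driving a mesh with a skeleton; a full system would work in 3D and chain the bone transforms along the skeleton.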

“We believe the method not only enables new ways for people to enjoy and interact with photos but also suggests a pathway to reconstructing a virtual avatar from a single image while providing insight into the state of the art of human modeling from a single photo,” the researchers said.

The work was recently published on arXiv and is also available on the team’s website.


Transforming Paintings and Photos Into Animations With AI

Jan 08, 2019
