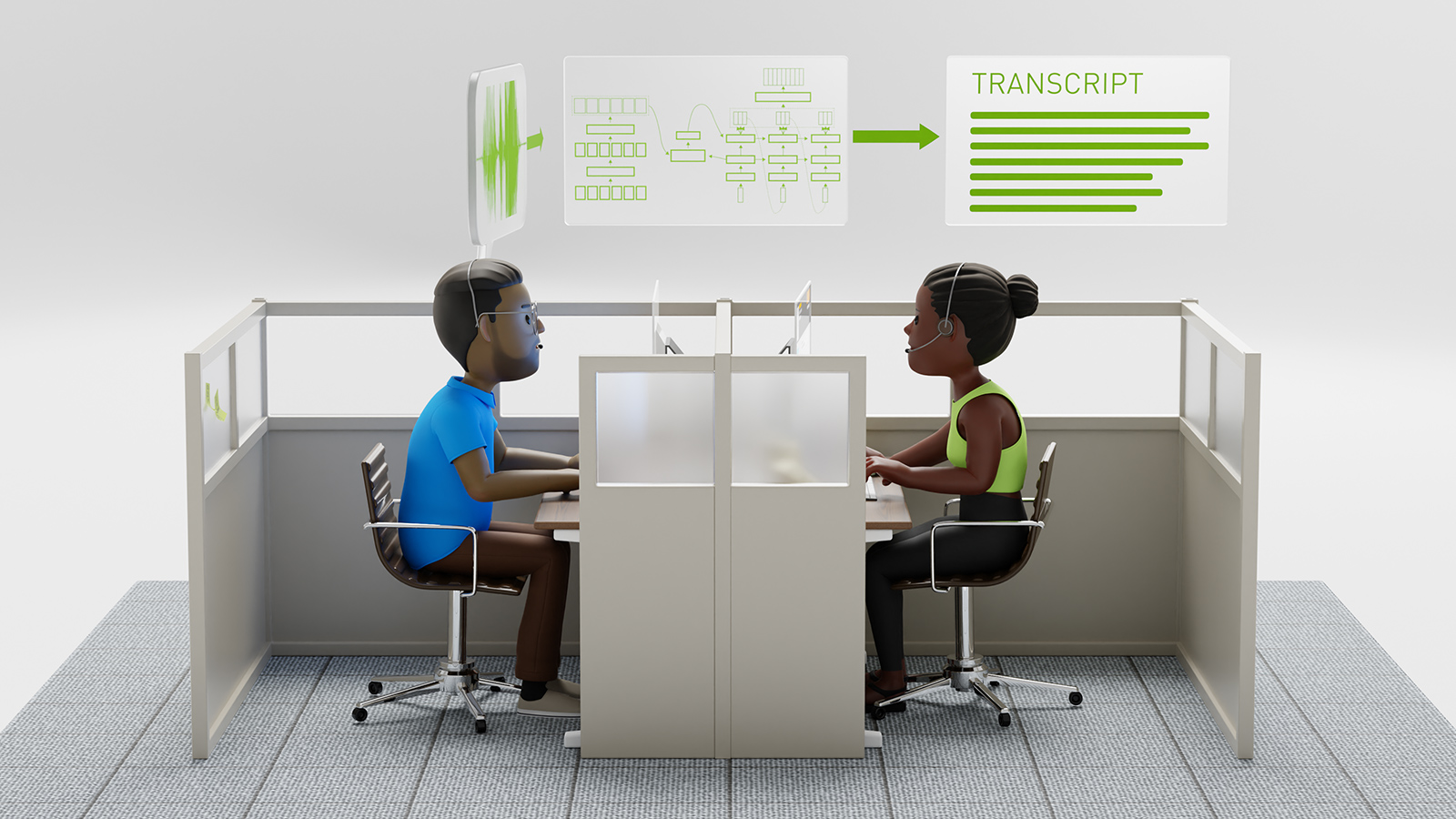

AI-enabled services such as speech recognition and natural language processing are increasing in demand. To help developers manage growing datasets, latency requirements, customer requirements, and more complex neural networks, we are highlighting a few AI speech applications that rely on NVIDIA’s inference platform to solve common AI speech challenges.

From Amazon’s Alexa Research group enhancing emotion detection, Microsoft’s MT-DNN shattering records in the top NLP benchmark, to researchers in academia using speech as the primary input for animating avatars, these are just a few examples of what’s possible.

In the video below, we highlight five of these inference use cases.

5 – Amazon Improves Speech Emotion Detection with Adversarial Training

Developers from Amazon’s Alexa Research group have just published a developer blog and published a paper describing how they are using adversarial training to recognize and improve emotion detection.

“A person’s tone of voice can tell you a lot about how they’re feeling. Not surprisingly, emotion recognition is an increasingly popular conversational-AI research topic,” said Viktor Rozgic, a senior applied scientist in the Alexa Speech group stated.

The work was completed in collaboration with Srinivas Parthasarathy, a doctoral student in the Electrical Engineering Department at The University of Texas at Dallas who previously interned at Amazon.

4 – AI Model Can Generate Images from Natural Language Descriptions

To potentially improve natural language queries, including the retrieval of images from speech, Researchers from IBM and the University of Virginia developed a deep learning model that can generate objects and their attributes from natural language descriptions. Unlike other recent methods, this approach does not use GANs.

“We show that under minor modifications, the proposed framework can handle the generation of different forms of scene representations, including cartoon-like scenes, object layouts corresponding to real images, and synthetic images,” the researchers stated in their paper.

Named Text2Scene, the model can interpret visually descriptive language to generate scene representations.

3 – DeepZen Uses AI to Generate Speech for Audiobooks

Almost 1,000,000 books are published every year in the United States, however, only around 40,000 of them are converted into audiobooks, primarily due to costs and production time.

To help with the process, DeepZen, a London-based company, and a member of NVIDIA’s Inception program, developed a deep learning-based system that can generate complete audio recordings of books and other voice related applications that are human-like and filled with emotion.

“The traditional process is taking too long and costing too much money, said Taylan Kamis, the company’s Co-Founder and CEO. “If you think about it, we need to find a narrator, arrange a studio, and do a lot of recordings with that person. It’s quite a lengthy, taking anywhere from three weeks to months, and can cost up to $5,000 per book. We are hoping to give people more options.”

2 – Microsoft Announces New Breakthroughs in AI Speech Tasks

Microsoft AI Research just announced a new breakthrough in the field of conversational AI that achieves new records in seven of nine natural language processing tasks from the General Language Understanding Evaluation (GLUE) benchmark.

Microsoft’s natural language processing algorithm called Multi-Task DNN, first released in January and updated this month, incorporates Google’s BERT NLP model to achieve groundbreaking results.

“For each task, we train an ensemble of different MT-DNNs (teacher) that outperforms any single model, and then train a single MT-DNN (student) via multi-task learning to distill knowledge from these ensemble teachers,” reads a summary of the paper “Improving Multi-Task Deep Neural Networks via Knowledge Distillation for Natural Language Understanding.”

1 – Generating Character Animations from Speech with AI

Researchers from the Max Planck Institute for Intelligent Systems, a member of NVIDIA’s NVAIL program, developed an end-to-end deep learning algorithm that can take any speech signal as input – and realistically animate it in a wide range of adult faces.

“There is an extensive literature on estimating 3D face shape, facial expressions, and facial motion from images and videos. Less attention has been paid to estimating 3D properties of faces from sound,” the researchers stated in their paper. “Understanding the correlation between speech and facial motion thus provides additional valuable information for analyzing humans, particularly if visual data are noisy, missing, or ambiguous.”