Drones are becoming an artist’s best friend, helping amateur and experienced filmmakers create smooth and aesthetically pleasing videos. However, using drones for cinematography is extremely challenging: it requires a skilled drone operator and a safe trajectory planned in advance. To address this and give filmmakers more room for creativity, researchers from Carnegie Mellon University and Yamaha Motor in Japan developed a deep learning-based system that automatically creates smooth, safe, and occlusion-free trajectories for aerial filming.

“Aerial vehicles are revolutionizing the way both professional and amateur filmmakers capture shots of actors and landscapes, increasing the flexibility of narrative elements and allowing the composition of aerial viewpoints which are not feasible using traditional devices such as hand-held cameras and dollies,” the researchers stated in their paper.

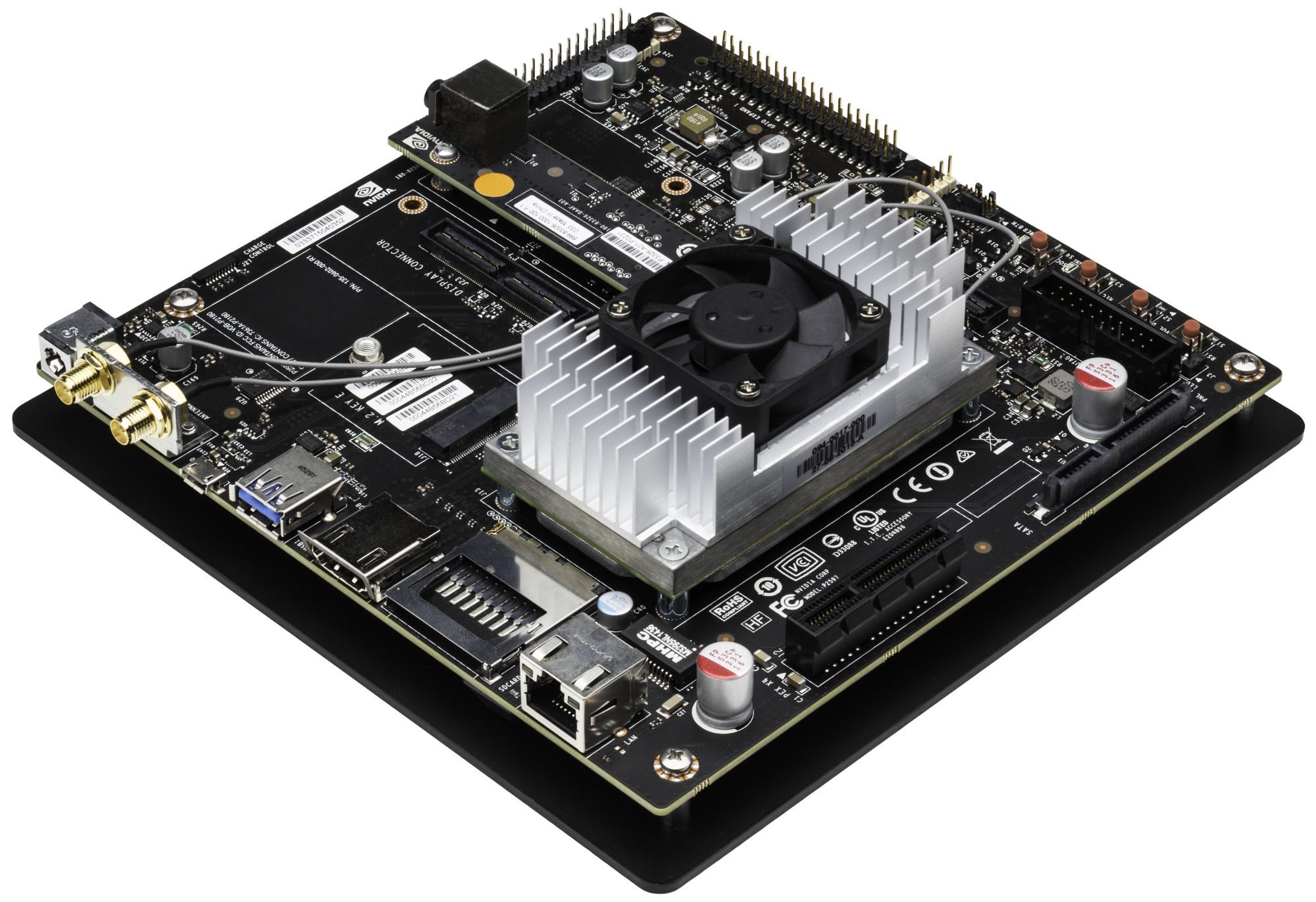

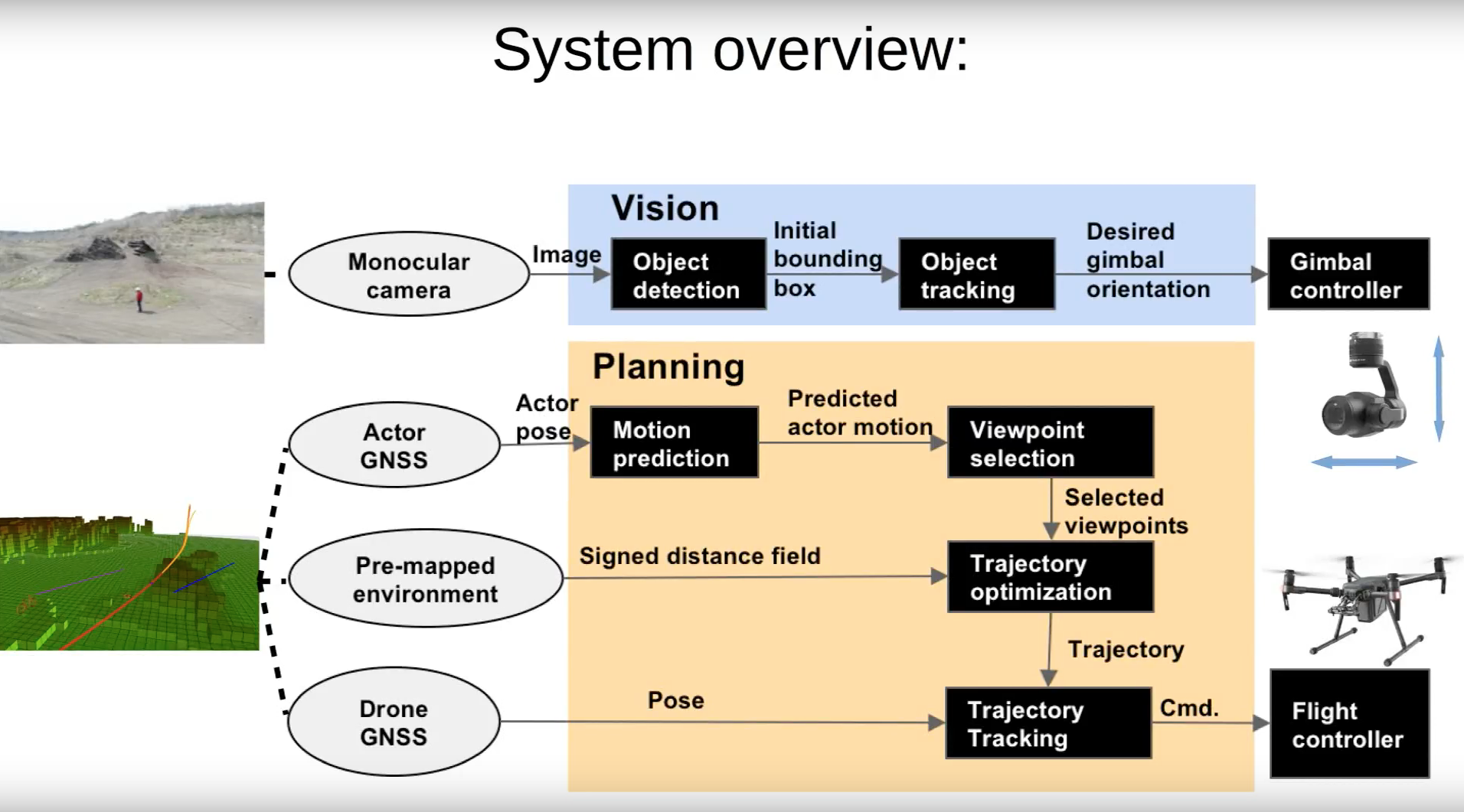

Using NVIDIA GeForce GTX 1080 GPUs with the cuDNN-accelerated PyTorch deep learning framework, the team trained a convolutional neural network on about 70,000 images from several datasets. Once the network is trained, an off-the-shelf drone equipped with an NVIDIA Jetson TX2 forecasts an actor’s motion and plans a smooth, collision-free trajectory that also avoids occlusion.
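The article does not describe how the onboard planner works, so what follows is only a minimal sketch of two ideas from the paragraph above, under simplifying assumptions. It forecasts an actor's path by assuming constant velocity (a stand-in chosen for illustration, not the team's forecasting method) and scores a candidate drone path with a smoothness cost equal to the sum of squared finite-difference accelerations, one common way optimization-based planners penalize jerky motion. The names `forecast_constant_velocity` and `smoothness_cost` are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def forecast_constant_velocity(history: List[Point], dt: float, steps: int) -> List[Point]:
    """Extrapolate an actor's future (x, y) positions assuming constant velocity.

    Assumption for illustration only: history holds at least two past
    positions sampled every dt seconds, and the actor keeps its last velocity.
    """
    if len(history) < 2:
        raise ValueError("need at least two past positions")
    (x0, y0), (x1, y1) = history[-2], history[-1]
    vx, vy = (x1 - x0) / dt, (y1 - y0) / dt
    return [(x1 + vx * dt * k, y1 + vy * dt * k) for k in range(1, steps + 1)]


def smoothness_cost(traj: List[Point], dt: float) -> float:
    """Sum of squared finite-difference accelerations along a drone path.

    A path flown at constant velocity scores 0; abrupt velocity changes raise the cost.
    """
    cost = 0.0
    for (xa, ya), (xb, yb), (xc, yc) in zip(traj, traj[1:], traj[2:]):
        ax = (xc - 2 * xb + xa) / dt ** 2
        ay = (yc - 2 * yb + ya) / dt ** 2
        cost += ax * ax + ay * ay
    return cost * dt
```

For example, `forecast_constant_velocity([(0, 0), (1, 0)], 1.0, 3)` returns `[(2.0, 0.0), (3.0, 0.0), (4.0, 0.0)]`. In a real planner, a smoothness term like this would presumably be weighed against safety and occlusion costs rather than minimized on its own.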

In the video above, the team demonstrates the robustness of the drone and neural network in real-world conditions, with different types of shots and shot transitions, actor motions, and obstacle shapes. The drone reasons about artistic guidelines such as the rule of thirds, scale, and relative angles, and it tracks objects or people.
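To make the rule of thirds and scale concrete, here is a hedged sketch (not the researchers' code) that computes the four rule-of-thirds intersection points of a frame, picks the one closest to where the actor currently appears, and uses the pinhole-camera relation $Z = f \cdot H / h$ to estimate how far the camera should be for an actor of height $H$ to span $h$ pixels. The focal length in pixels, the actor height, and all function names are illustrative assumptions.

```python
from typing import List, Tuple

Pixel = Tuple[float, float]


def thirds_points(width: int, height: int) -> List[Pixel]:
    """The four intersections of the rule-of-thirds grid, in pixel coordinates."""
    return [(width * i / 3, height * j / 3) for i in (1, 2) for j in (1, 2)]


def nearest_thirds_target(actor_px: Pixel, width: int, height: int) -> Pixel:
    """Pick the thirds intersection closest to the actor's current image position."""
    ax, ay = actor_px
    return min(thirds_points(width, height),
               key=lambda p: (p[0] - ax) ** 2 + (p[1] - ay) ** 2)


def distance_for_scale(focal_px: float, actor_height_m: float,
                       frame_fraction: float, image_height_px: int) -> float:
    """Camera-to-actor distance (meters) so the actor fills frame_fraction of the frame height.

    Assumes an ideal pinhole camera: pixel_height = focal_px * actor_height_m / distance.
    """
    pixel_height = frame_fraction * image_height_px
    return focal_px * actor_height_m / pixel_height
```

For a 1920x1080 frame, `nearest_thirds_target((1000, 300), 1920, 1080)` returns `(1280.0, 360.0)`, and with an assumed 1000-pixel focal length, a 1.8 m actor filling 30% of the frame height implies a distance of about 5.6 m.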

“Unlike previous works that operate either with high-accuracy indoor motion capture systems or precision RTK GPS outdoors, we use only conventional GPS, resulting in high noise for both drone localization and actor motion prediction. Therefore, we decided to decouple the motion of the drone and the camera,” the researchers said. “The camera is mounted on a 3-axis independent gimbal and can place the actor on the correct screen position by visual detection, despite errors in the drone’s position.”
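Decoupling the camera from the drone means the gimbal can correct framing from the image alone. A minimal sketch, assuming an ideal pinhole camera with known intrinsics (`fx`, `fy`, `cx`, `cy`) and treating pan and tilt independently (an approximation that is most accurate near the image center), converts the pixel offset between the detected actor and the desired screen position into pan and tilt corrections. Because it uses only the detection, errors in the drone's GPS position do not enter this calculation. The function name and sign conventions (positive pan turns right, positive tilt turns down) are assumptions, not details from the paper.

```python
import math
from typing import Tuple


def gimbal_correction(actor_px: Tuple[float, float], target_px: Tuple[float, float],
                      fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float]:
    """Return (pan, tilt) in radians that move the actor from actor_px to target_px.

    Assumed sign convention: positive pan rotates the camera to the right,
    positive tilt rotates it downward (image v grows downward).
    Pan and tilt are computed independently, a small-angle style approximation.
    """
    (u, v), (ut, vt) = actor_px, target_px
    # Angle of the actor's ray minus angle of the desired ray, per axis.
    pan = math.atan((u - cx) / fx) - math.atan((ut - cx) / fx)
    tilt = math.atan((v - cy) / fy) - math.atan((vt - cy) / fy)
    return pan, tilt
```

For example, with `fx = fy = 1000`, `cx = 960`, `cy = 540`, an actor detected at `(1960, 540)` who should appear at the image center `(960, 540)` yields a pan of π/4 radians (45 degrees to the right) and no tilt.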

The work will be presented at the International Symposium on Experimental Robotics (ISER) 2018 in Buenos Aires, Argentina.


This Drone Uses AI to Automatically Create the Perfect Cinematic Shots

Sep 05, 2018
