NVIDIA OptiX is an API for optimal ray tracing performance on the GPU that is used for film and video production as well as many other professional graphics applications.

OptiX SDK 7.2 is the latest update to the new OptiX 7 API. This version introduces API changes to the OptiX denoiser to support layered AOV denoising, a new library for demand loading sparse textures, and some very useful compilation and safety features, such as Validation Mode and launch parameter Specialization. Last but not least, there is a major convenience change to the OptiX API when using either instancing or motion blur: OptiX now automatically computes your instance and motion bounding boxes, so you no longer have to.

Layered AOV Denoising

The OptiX AI denoiser is a neural-network-based denoiser designed to remove Monte Carlo noise from rendered images. It can be especially important for interactive previews, where it gives artists a good sense of what their final images might look like.

What are AOV layers?

AOV stands for Arbitrary Output Variable, meaning any arbitrary image data layers that are output by your renderer, in addition to the color image (or as it’s known in the film industry, the “beauty pass”). Most professional film & game renderers can output these extra layers for use in compositing or special effects; for example, Unity, Blender, V-Ray, Arnold, and RenderMan all support AOVs, among many others. Examples of AOV layers might include separate diffuse and specular layers, a layer that contains surface normals at every pixel, or a layer for only materials that emit light.

OptiX 7.2 has added the ability to denoise multiple AOV layers simultaneously along with the color image in a single denoising pass. The benefit of denoising all the layers together is that the denoiser will filter all the image layers in the exact same way, ensuring that a composited set of layers still looks correct. This is especially important when denoising layers that have a lot of black background in them, because this kind of imagery can get badly blurred and look wrong when you denoise each layer separately.

Layered AOV denoising can also be used seamlessly with the tiled denoising features that shipped with OptiX 7.1; nothing special is needed to combine the two.

Performance-wise, each extra AOV layer typically adds on the order of 10 to 20 percent of the time it takes to denoise the beauty image (about 7 to 17 percent in the measurements below), so adding extra layers is relatively inexpensive. Here are some example timings for AOV denoising of a 1080p frame on different GPU generations.

| GPU | Beauty | Per AOV | AOV / beauty |
| --- | --- | --- | --- |
| Ampere | 5.48 ms | 0.52 ms | 9.5% |
| RTX6000 | 8.44 ms | 1.46 ms | 17.3% |
| GV100 | 9.18 ms | 0.90 ms | 9.8% |
| P6000 | 34.00 ms | 2.40 ms | 7.1% |

Note: beauty denoising without AOVs is ~10-20% faster.
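
To make the arithmetic behind the table concrete, here is a small C++ sketch that recomputes the AOV / beauty ratio and estimates the total denoising time for a given number of layers. The struct and function names are made up for illustration, and the estimate assumes the cost grows linearly with the layer count, which the table does not confirm.

```cpp
#include <cassert>

// Timings copied from the table above (1080p frame, milliseconds).
// Struct and field names are illustrative, not part of OptiX.
struct DenoiseTiming {
    const char* gpu;
    double beauty_ms;   // time to denoise the beauty image
    double per_aov_ms;  // added time for each extra AOV layer
};

constexpr DenoiseTiming kTimings[] = {
    {"Ampere",   5.48, 0.52},
    {"RTX6000",  8.44, 1.46},
    {"GV100",    9.18, 0.90},
    {"P6000",   34.00, 2.40},
};

// Relative cost of one AOV layer, as a percentage of the beauty time.
inline double aov_overhead_percent(const DenoiseTiming& t) {
    return 100.0 * t.per_aov_ms / t.beauty_ms;
}

// Estimated total time, ASSUMING each extra layer costs the same amount.
inline double estimated_total_ms(const DenoiseTiming& t, int num_aovs) {
    assert(num_aovs >= 0);
    return t.beauty_ms + num_aovs * t.per_aov_ms;
}
```

Under that linear assumption, `estimated_total_ms(kTimings[1], 3)` gives about 12.82 ms for the RTX6000 with three AOV layers.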

Demand-loaded Sparse Textures

The new OptiX Demand Loading library allows hardware-accelerated sparse textures to be loaded on demand, which greatly reduces memory requirements, bandwidth, and disk I/O compared to preloading textures. Scenes with up to 4 TB of textures are now supported in OptiX 7.2.

The size of film-quality texture assets has been a longstanding obstacle to rendering final-frame images for visual effects shots and animated films. It’s not unusual for a hero character to have 50-100 GB of textures, and many sets and props are equally detailed (sometimes for no good reason, except that it would be more expensive to author at a more appropriate resolution).

Film models are usually painted at extremely high resolution in case of extreme closeup. A character’s face is typically divided into several regions (e.g. eye, nose, mouth), each of which is painted at 4K or 8K resolution. In addition, numerous layers are often used, including coarse and fine displacement and various material layers such as dirt, each of which is a separate collection of textures.

It’s not practical to preload entire film-quality textures into GPU memory. Besides wasting GPU memory, it also requires time and bandwidth to read the textures from disk and transfer them to GPU memory. Nor is it possible to downscale the images, since the level of detail that is required depends on the distance of the textured object from the camera. Simply clamping the maximum resolution can lead to a loss of detail that is not acceptable in a film-quality renderer.

To make matters worse, it’s not unusual for a production shot to have thousands of textured objects that are outside the camera view or completely occluded by other objects. It’s generally not possible to know a priori which textures are required without actually rendering a scene.

All of these factors make it difficult to load textures into GPU memory before rendering. Our approach is a multi-pass one: the initial passes identify missing texture data, which is loaded from disk on demand and transferred to GPU memory between passes.
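
The multi-pass idea can be sketched on the CPU in a few lines of C++. This is only a simulation of the control flow, not the Demand Loading library's API: `TileId`, `ResidentTiles`, and all function names are hypothetical, and "loading" a tile is reduced to inserting its id into a set.

```cpp
#include <cstdint>
#include <set>
#include <vector>

// Hypothetical identifier for one texture tile.
using TileId = std::uint64_t;

// Stand-in for the texture tiles currently resident in GPU memory.
struct ResidentTiles {
    std::set<TileId> loaded;
    bool contains(TileId id) const { return loaded.count(id) != 0; }
};

// One render pass: each tile a ray touches is either already resident
// or is recorded as missing. `touched` stands in for the tiles that
// rays actually reach while rendering.
inline std::set<TileId> render_pass(const std::vector<TileId>& touched,
                                    const ResidentTiles& resident) {
    std::set<TileId> missing;
    for (TileId id : touched) {
        if (!resident.contains(id)) missing.insert(id);  // request for later
    }
    return missing;
}

// Between passes: fetch the requested tiles (simulated; a real renderer
// would read them from disk and copy them to the GPU here).
inline void load_tiles(const std::set<TileId>& requests, ResidentTiles& resident) {
    resident.loaded.insert(requests.begin(), requests.end());
}

// Repeat passes until one completes with no missing tiles.
// Returns the number of passes that were needed.
inline int render_until_resident(const std::vector<TileId>& touched,
                                 ResidentTiles& resident) {
    int passes = 0;
    for (;;) {
        ++passes;
        const std::set<TileId> missing = render_pass(touched, resident);
        if (missing.empty()) return passes;
        load_tiles(missing, resident);
    }
}
```

Only tiles that rays actually touch are ever requested, so textures on occluded or off-screen objects are never loaded. In this simplified version the set of touched tiles is fixed, so at most two passes are needed; in a real renderer, newly loaded data can send rays to further tiles, which may take additional passes.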

The OptiX Programming Guide provides a detailed technical introduction to the new OptiX Demand Loading library. Although it is currently focused on texturing, much of the library is generic and can be adapted to load arbitrary data on demand, such as per-vertex data like colors or normals. It is delivered as a standalone CUDA library that is distributed as open-source code in the OptiX SDK. Although it is part of the OptiX SDK, the Demand Loading library has no OptiX dependencies, and it is technically not part of the OptiX API.

Validation Mode

OptiX 7.2 adds a much-requested validation mode that provides extra safety checks when you’re starting or debugging an OptiX application. The feature is quick and easy to enable with a single line of code, and it automatically turns on a number of extra device-side checks that look for common errors. For example, it enables debug exceptions automatically and checks the CUDA stream status for every API call. Validation mode even checks your shader binding table headers for some common problems.

Specialization

The new specialization feature in OptiX 7.2 adds a way to toggle features at run time and have your shaders compile out all the unused ones. For example, if you turn off shadows, you’d like all the code related to shadowing to be skipped completely, so that it doesn’t affect performance at all.

OptiX specialization lets a developer mark portions of their launch parameters to be compiled as if they were constant, much like the ideas behind C++ template specialization. The set of launch parameters is a block of memory that is sent from the CPU to the GPU before rendering a frame, and it is used for any global values you might need in your OptiX shaders.

A developer can use specialization to replace uses of compiler directives like #ifdef, or to replace build tricks that modify source code or PTX at run-time. One great benefit of this system is that it allows you to ship an application with a single version of PTX code, rather than multiple versions that are compiled with different features enabled.
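
The C++ template analogy can be made concrete with a small host-side sketch. This is plain C++, not OptiX code, and it does not use the OptiX specialization API; the `LaunchParams` fields and the shading functions are invented for illustration. It only shows why treating a value as a compile-time constant lets the compiler drop the code that depends on it.

```cpp
// Hypothetical launch parameters; the field names are illustrative only.
struct LaunchParams {
    bool  shadows_enabled;
    float ambient;
};

// Stand-in for an expensive shadow computation.
inline float shadow_term(float visibility) { return visibility; }

// Unspecialized: the flag is read from memory and tested at run time,
// so the shadow code must stay in the compiled function.
inline float shade_generic(const LaunchParams& p, float visibility) {
    float light = 1.0f;
    if (p.shadows_enabled) light *= shadow_term(visibility);
    return p.ambient + light;
}

// Specialized: the flag is a compile-time constant. When ShadowsEnabled
// is false the condition is known to be false, so an optimizing compiler
// can remove the shadow code from that instantiation entirely.
template <bool ShadowsEnabled>
float shade_specialized(const LaunchParams& p, float visibility) {
    float light = 1.0f;
    if (ShadowsEnabled) light *= shadow_term(visibility);
    return p.ambient + light;
}
```

Both versions return the same result for a matching flag value. The difference is that the template version requires one instantiation per configuration at build time, whereas specialization in OptiX, as described above, makes that decision from a single version of PTX.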

optixBoundValues is a new OptiX SDK sample that demonstrates specialization. This sample is based on the optixPathTracer SDK sample, and specializes the number of light samples used per frame. The sample includes interactive toggles to enable or disable specialization and to change the number of light samples. Specializing the number of light samples to 1 allows the compiler to remove the code associated with looping over the light samples, which results in the sample running about two times faster. (Keep in mind that this is a contrived example meant only to illustrate the feature.)

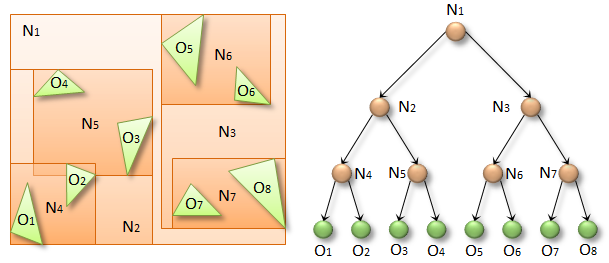

Automatic Bounding Boxes

Before OptiX 7.2, the API for instances and motion blur required the user to provide pre-computed bounds for each object, potentially bounds that encapsulated complex transforms combined with moving objects. OptiX 7.2 now computes these bounding boxes automatically, even in the difficult cases involving transforms and motion!
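
For a sense of the kind of bookkeeping this removes, here is a hedged C++ sketch of computing bounds by hand. It is not how OptiX computes them internally; the `Aabb` and `Transform3x4` types and the function names are illustrative. The motion case assumes two matrix keys whose entries are interpolated linearly, in which case the union of the bounds at both keys is conservative. Transforms with interpolated rotations need more care.

```cpp
#include <algorithm>
#include <array>

struct Aabb {
    float min[3];
    float max[3];
};

// Row-major 3x4 affine transform: 3x3 linear part plus a translation column.
using Transform3x4 = std::array<float, 12>;

// Bounds of a box after an affine transform: transform all eight corners
// and take the per-axis minimum and maximum.
inline Aabb transform_aabb(const Aabb& box, const Transform3x4& m) {
    Aabb out{};
    for (int corner = 0; corner < 8; ++corner) {
        const float p[3] = {
            (corner & 1) ? box.max[0] : box.min[0],
            (corner & 2) ? box.max[1] : box.min[1],
            (corner & 4) ? box.max[2] : box.min[2],
        };
        for (int row = 0; row < 3; ++row) {
            const float v = m[row * 4 + 0] * p[0] + m[row * 4 + 1] * p[1] +
                            m[row * 4 + 2] * p[2] + m[row * 4 + 3];
            if (corner == 0) {
                out.min[row] = out.max[row] = v;
            } else {
                out.min[row] = std::min(out.min[row], v);
                out.max[row] = std::max(out.max[row], v);
            }
        }
    }
    return out;
}

// Smallest box that contains both inputs.
inline Aabb merge(const Aabb& a, const Aabb& b) {
    Aabb out{};
    for (int i = 0; i < 3; ++i) {
        out.min[i] = std::min(a.min[i], b.min[i]);
        out.max[i] = std::max(a.max[i], b.max[i]);
    }
    return out;
}

// Conservative bounds over a motion interval with two matrix keys,
// ASSUMING matrix entries are interpolated linearly between the keys.
inline Aabb motion_bounds(const Aabb& box, const Transform3x4& key0,
                          const Transform3x4& key1) {
    return merge(transform_aabb(box, key0), transform_aabb(box, key1));
}
```

As a quick check, a unit box moving from the identity transform to a translation of 2 along x gets motion bounds from (0, 0, 0) to (3, 1, 1).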

Getting OptiX

The OptiX 7.2 SDK is free for commercial use and has already been integrated into the world’s top content creation and rendering applications. The SDK is available to developers now!