Today, NVIDIA released TensorRT 6, which includes new capabilities that dramatically accelerate conversational AI applications, speech recognition, 3D image segmentation for medical applications, and image-based applications in industrial automation.

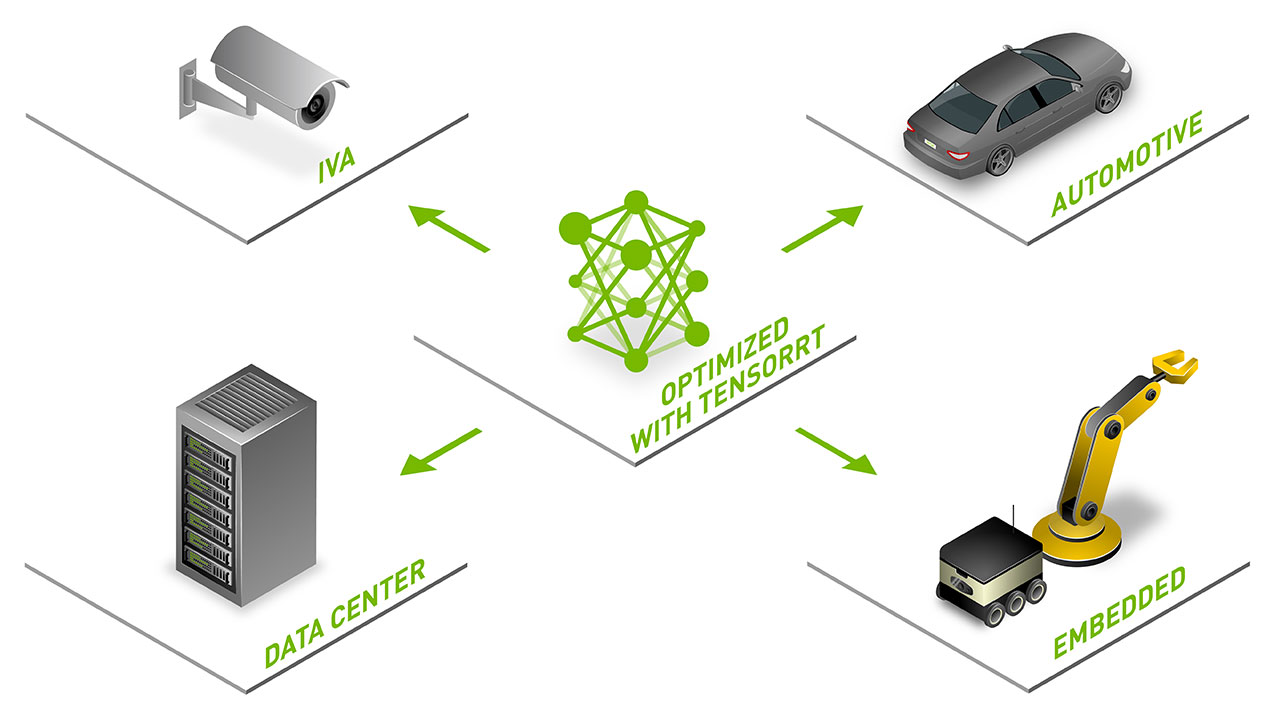

TensorRT is a high-performance deep learning inference optimizer and runtime that delivers low latency, high-throughput inference for AI applications.

With today’s release, TensorRT continues to expand its set of optimized layers, provides highly requested capabilities for conversational AI applications, and delivers tighter integrations with frameworks, giving you an easy path to deploy your applications on NVIDIA GPUs.

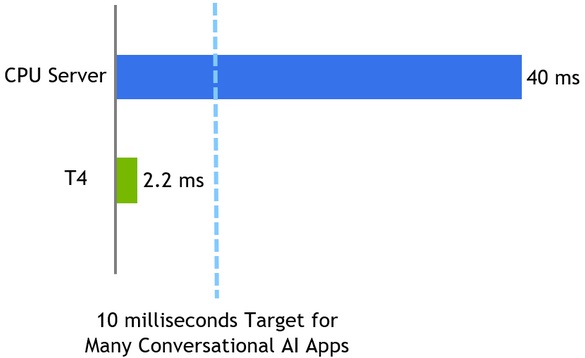

In TensorRT 6, we’re also releasing new optimizations that deliver inference for BERT-Large in only 5.8 ms on T4 GPUs, making it practical for enterprises to deploy this model in production for the first time.

Bidirectional Encoder Representations from Transformers (BERT) based solutions are being explored across the industry for language-based services because of their high accuracy and the ability to reuse weights across applications. We recently released TensorRT optimizations to perform BERT-Base inference; the new version runs inference in 2 ms. See the complete inference results on the Deep Learning performance page.

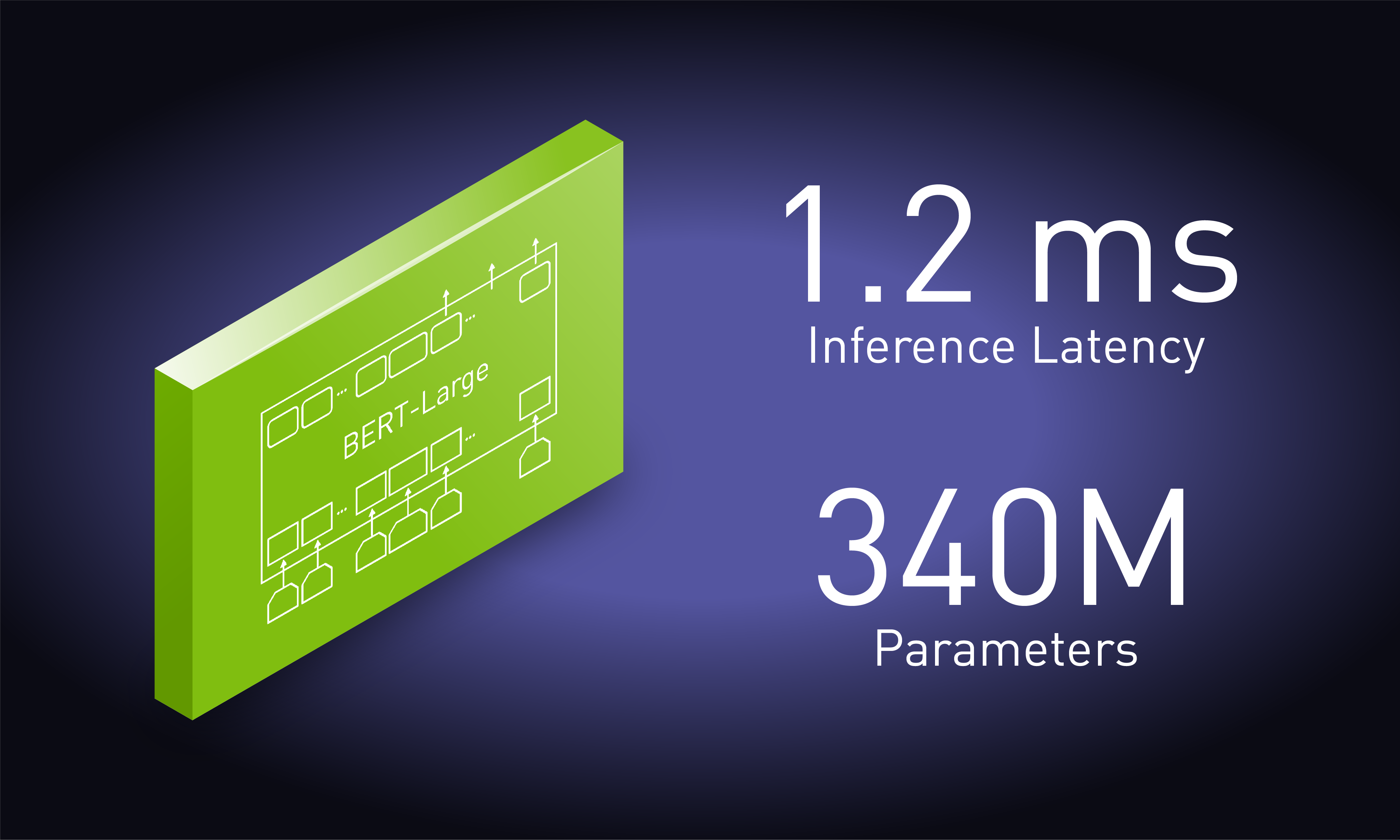

To offer an engaging experience, natural language understanding (NLU) models like BERT need to execute in less than 10 ms. BERT-Base uses 110M parameters and delivers high accuracy for several QA tasks, while BERT-Large uses 340M parameters and achieves even higher accuracy than the human baseline for certain QA tasks. Higher accuracy translates to a better user experience in language-based interactions for enterprise customers and to higher revenues for the organizations deploying these models.

We also released several new samples in the TensorRT Open Source Repo to make it easy to get started with accelerating applications based on language (OpenNMT, BERT, Jasper), images (Mask-RCNN, Faster-RCNN), and recommenders (NCF) with TensorRT. The latest version of the Nsight Systems tools can be used to further tune and optimize your deep learning applications.

TensorRT 6 Highlights:

- Achieve superhuman NLU accuracy in real-time with BERT-Large inference in just 5.8 ms on NVIDIA T4 GPUs through new optimizations

- Accelerate conversational AI, speech and image segmentation apps easily using new API and optimizations for dynamic input shapes

- Accelerate apps with fluctuating compute needs such as online services efficiently through support for dynamic input batch size

- Up to 5x faster inference vs CPU for image segmentation in medical applications through new layers for 3D convolutions

- Accelerate industrial automation apps through optimizations for 2D U-Net
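To make the dynamic input shape and dynamic batch size highlights more concrete, here is a minimal conceptual sketch in plain Python. TensorRT describes each dynamic input with a minimum, optimum, and maximum shape; the `ShapeRange` class, its field names, and the (batch, sequence length) values below are hypothetical and purely illustrative. The sketch does not call the TensorRT library; it only shows the kind of range check such a description implies.

```python
from dataclasses import dataclass
from typing import Tuple

Shape = Tuple[int, ...]


@dataclass(frozen=True)
class ShapeRange:
    """Hypothetical stand-in for one input's min/opt/max shape description."""
    min_shape: Shape
    opt_shape: Shape
    max_shape: Shape

    def __post_init__(self) -> None:
        # All three shapes must have the same number of dimensions.
        if not (len(self.min_shape) == len(self.opt_shape) == len(self.max_shape)):
            raise ValueError("min, opt, and max shapes must have the same rank")
        # Each dimension must be ordered min <= opt <= max.
        for lo, mid, hi in zip(self.min_shape, self.opt_shape, self.max_shape):
            if not (lo <= mid <= hi):
                raise ValueError("each dimension must satisfy min <= opt <= max")

    def accepts(self, shape: Shape) -> bool:
        """Return True if every dimension of `shape` lies within [min, max]."""
        if len(shape) != len(self.min_shape):
            return False
        return all(
            lo <= dim <= hi
            for lo, dim, hi in zip(self.min_shape, shape, self.max_shape)
        )


# Illustrative values only: (batch size, sequence length) for a BERT-style input.
bert_input = ShapeRange(min_shape=(1, 32), opt_shape=(8, 128), max_shape=(32, 384))

if __name__ == "__main__":
    for candidate in [(1, 32), (16, 200), (64, 128)]:
        print(candidate, bert_input.accepts(candidate))
```

An online service whose batch size fluctuates with traffic could run any request shape inside the declared range on a single engine, while a shape outside the range (such as a batch of 64 here) would need a wider range or a separate engine.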

TensorRT 6 is available for download from the TensorRT product page.