To help autonomous machines better sense transparent objects, Google researchers, in collaboration with Columbia University and Synthesis AI, developed ClearGrasp, an algorithm that can accurately estimate the 3D geometry of clear objects, such as a glass container or a plastic utensil, from standard RGB-D images.

“Enabling machines to better sense transparent surfaces would not only improve safety but could also open up a range of new interactions in unstructured applications — from robots handling kitchenware or sorting plastics for recycling, to navigating indoor environments or generating AR visualizations on glass tabletops,” the Google researchers stated in a post published on the Google AI blog, Learning to See Transparent Objects.

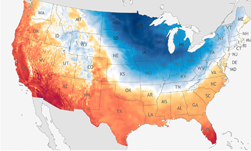

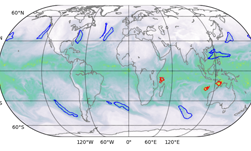

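ClearGrasp relies on three neural networks: one that estimates surface normals, one that detects occlusion boundaries, and one that segments (masks) transparent objects. The mask is used to remove every depth pixel that belongs to a transparent object so that the correct depth can be filled in, with the predicted surface normals guiding the shape of the reconstruction and the predicted occlusion boundaries keeping distinct objects separate.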
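To make the mask-then-fill step concrete, here is a minimal pure-Python sketch. It assumes the depth map, transparent-object mask, and occlusion-boundary map are plain H×W lists, and the helper names `mask_transparent_depth` and `fill_depth` are hypothetical. The fill step is a deliberately simple neighbor-averaging (diffusion) stand-in, not ClearGrasp's own optimization, which additionally uses the predicted surface normals to shape the reconstructed depth.

```python
from typing import List, Optional

Depth = List[List[Optional[float]]]  # H x W; None marks a missing reading
Flags = List[List[bool]]             # H x W boolean map


def mask_transparent_depth(depth: Depth, transparent: Flags) -> Depth:
    """Discard depth wherever the (hypothetical) mask marks a transparent pixel."""
    return [
        [None if is_clear else d for d, is_clear in zip(d_row, m_row)]
        for d_row, m_row in zip(depth, transparent)
    ]


def fill_depth(masked: Depth, boundary: Flags, iters: int = 200) -> Depth:
    """Fill missing depth by repeatedly averaging known 4-neighbours.

    Neighbours flagged as occlusion boundaries are never used as sources, so
    depth does not leak across object edges. Holes with no usable neighbour
    stay None. This is a simple diffusion stand-in, not ClearGrasp's
    normal-guided optimization.
    """
    h, w = len(masked), len(masked[0])
    out = [row[:] for row in masked]
    holes = [(r, c) for r in range(h) for c in range(w) if masked[r][c] is None]
    for _ in range(iters):
        nxt = [row[:] for row in out]
        for r, c in holes:
            vals = [
                out[rr][cc]
                for rr, cc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                if 0 <= rr < h and 0 <= cc < w
                and not boundary[rr][cc]
                and out[rr][cc] is not None
            ]
            if vals:
                nxt[r][c] = sum(vals) / len(vals)
        out = nxt
    return out


if __name__ == "__main__":
    # The centre reading is corrupted by a glass surface.
    depth = [[1.0, 1.0, 1.0], [1.0, 9.9, 1.0], [1.0, 1.0, 1.0]]
    clear = [[False, False, False], [False, True, False], [False, False, False]]
    edges = [[False] * 3 for _ in range(3)]
    print(fill_depth(mask_transparent_depth(depth, clear), edges)[1][1])  # → 1.0
```

Excluding boundary pixels as sources is the sketch's crude analogue of the role the article gives occlusion boundaries: preventing depth from one surface from bleeding into a neighboring object.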

All of the networks were trained on synthetic and real data using NVIDIA V100 GPUs on Google Cloud Platform with the cuDNN-accelerated PyTorch deep learning framework.

“We created our own large-scale dataset of transparent objects that contains more than 50,000 photorealistic renders with corresponding surface normals (representing the surface curvature), segmentation masks, edges, and depth, useful for training a variety of 2D and 3D detection tasks,” the researchers stated. “Each image contains up to five transparent objects, either on a flat ground plane or inside a tote, with various backgrounds and lighting.”
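As a rough illustration of what one training example carries, the sketch below bundles the per-pixel maps the researchers list (surface normals, segmentation mask, edges, and depth) alongside the RGB render, and checks the stated constraints: at most five transparent objects, placed either on a ground plane or inside a tote. The class `TransparentSample`, its field names, the setting labels, and the instance-id encoding of the mask are all assumptions for illustration; the released dataset's actual file layout may differ.

```python
from dataclasses import dataclass
from typing import List

MAX_OBJECTS = 5                      # "up to five transparent objects" per image
SETTINGS = {"ground_plane", "tote"}  # hypothetical labels for the two layouts

Grid = List[list]  # H rows of W per-pixel values


@dataclass
class TransparentSample:
    """One rendered example; field names are illustrative, not the released format."""
    rgb: Grid      # H x W, each pixel an (r, g, b) triple
    normals: Grid  # H x W, each pixel an (nx, ny, nz) surface normal
    mask: Grid     # H x W instance ids, 0 = not a transparent object (assumed encoding)
    edges: Grid    # H x W boundary labels
    depth: Grid    # H x W depth values
    setting: str   # one of SETTINGS

    def num_objects(self) -> int:
        """Count distinct non-zero instance ids in the mask."""
        return len({v for row in self.mask for v in row if v != 0})

    def validate(self) -> None:
        """Raise ValueError if maps disagree in size or scene constraints are violated."""
        h, w = len(self.depth), len(self.depth[0])
        for name in ("rgb", "normals", "mask", "edges", "depth"):
            grid = getattr(self, name)
            if len(grid) != h or any(len(row) != w for row in grid):
                raise ValueError(f"{name} is not {h}x{w}")
        if self.setting not in SETTINGS:
            raise ValueError(f"unknown setting {self.setting!r}")
        if self.num_objects() > MAX_OBJECTS:
            raise ValueError(f"{self.num_objects()} objects exceeds {MAX_OBJECTS}")
```

Keeping every map aligned to the same H×W grid matters because the three networks are supervised per pixel, so a size mismatch between, say, the normals and the mask would silently misalign labels.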

At first, all of the networks performed well on real-world transparent objects. However, when tested on non-transparent surfaces such as walls or fruit, the results were poor.

“This is because of the limitations of our synthetic dataset, which contains only transparent objects on a ground plane,” the team explained. “To alleviate this issue, we included some real indoor scenes from the Matterport3D and ScanNet datasets in the surface normals training loop.”

After doing this, the models performed well on all surfaces in their test set.
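Mixing real scenes into the surface-normals training loop can be sketched as a batch sampler that draws a fixed share of each batch from a real-scene pool. This is a minimal sketch under assumptions: the generator `mixed_batches`, its `real_fraction` parameter, and the per-batch mixing strategy are hypothetical, since the article does not say how the two sources were combined.

```python
import random
from typing import Iterator, List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def mixed_batches(
    synthetic: Sequence[T],
    real: Sequence[T],
    batch_size: int,
    real_fraction: float,
    seed: int = 0,
) -> Iterator[List[Tuple[str, T]]]:
    """Yield endless batches mixing synthetic renders with real indoor scenes.

    `real_fraction` is a hypothetical knob: the share of each batch drawn
    from the real (e.g. Matterport3D / ScanNet) pool. Because this is a
    generator, the range check below runs on the first next() call.
    """
    if not 0.0 <= real_fraction <= 1.0:
        raise ValueError("real_fraction must be in [0, 1]")
    rng = random.Random(seed)
    n_real = round(batch_size * real_fraction)
    n_syn = batch_size - n_real
    while True:
        batch = [("synthetic", rng.choice(synthetic)) for _ in range(n_syn)]
        batch += [("real", rng.choice(real)) for _ in range(n_real)]
        rng.shuffle(batch)  # avoid a fixed synthetic-then-real ordering
        yield batch
```

For example, with `batch_size=8` and `real_fraction=0.25`, each batch holds six synthetic and two real samples. Fixing the ratio per batch, rather than simply concatenating the datasets, keeps the mix stable regardless of how large each pool is; that is a design choice of this sketch, not something the article reports.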

“Despite being trained on only synthetic transparent objects, we find our models are able to adapt well to the real-world domain — achieving very similar quantitative reconstruction performance on known objects across domains,” the researchers noted.

When tested on a UR5 robot arm, with an NVIDIA GPU handling inference, the researchers saw significant improvements in the success rate of grasping transparent objects: from a 12% baseline to 74% with a parallel-jaw gripper, and from 64% to 86% with suction.

To help drive further research on data-driven perception models for transparent objects, the Google team released their dataset. They also shared more examples on the ClearGrasp project website and on GitHub.