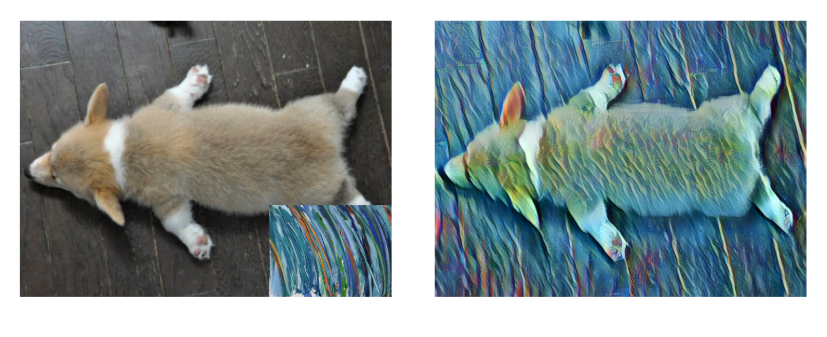

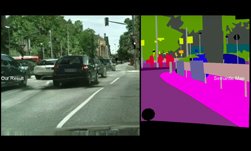

GANs have captured the world’s imagination. Their ability to dream up realistic images of landscapes, cars, cats, people, and even video games represents a significant step in artificial intelligence.

Over the years, NVIDIA researchers have contributed several breakthroughs to GANs.

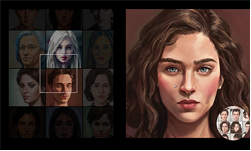

The new project, StyleGAN2, presented at CVPR 2020, uses transfer learning to generate a seemingly infinite number of portraits in an infinite variety of painting styles. The work builds on the team’s previously published StyleGAN project.

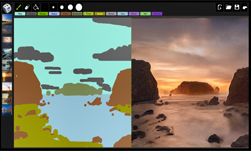

As shown in this new demo, the resulting model lets users create and fluidly explore portraits by separately controlling the content, identity, expression, and pose of the subject. Users can also modify the artistic style, color scheme, and appearance of brush strokes.
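The post does not say how the demo implements these controls. As a rough illustration only: StyleGAN-family generators take one style vector per synthesis layer, and swapping those vectors between two inputs at a chosen layer (often called style mixing) is one common way to combine coarse attributes such as pose from one sample with fine attributes such as color scheme from another. The sketch below assumes 18 layers and 512-dimensional vectors, and the names `random_styles` and `mix_styles` are hypothetical stand-ins, not part of the StyleGAN2 codebase.

```python
import random

NUM_LAYERS = 18   # assumption: one style vector per synthesis layer
LATENT_DIM = 512  # assumption: width of each style vector


def random_styles(seed, num_layers=NUM_LAYERS, dim=LATENT_DIM):
    """Hypothetical stand-in for a mapping network: one style vector per layer."""
    rng = random.Random(seed)
    w = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    # Before any mixing, every layer receives a copy of the same vector.
    return [list(w) for _ in range(num_layers)]


def mix_styles(content, style, crossover):
    """Take layers [0, crossover) from `content` and the rest from `style`."""
    if len(content) != len(style):
        raise ValueError("both inputs need the same number of layers")
    if not 0 <= crossover <= len(content):
        raise ValueError("crossover must lie within the layer range")
    coarse = [list(v) for v in content[:crossover]]
    fine = [list(v) for v in style[crossover:]]
    return coarse + fine


subject = random_styles(seed=1)   # would drive pose, identity, expression
painting = random_styles(seed=2)  # would drive color scheme, brush strokes
mixed = mix_styles(subject, painting, crossover=8)
```

In a real pipeline, `mixed` would be passed to the generator's synthesis network. Moving `crossover` toward 0 hands more of the image to the second input, while moving it toward the last layer limits the second input to fine detail.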

The model was trained on an NVIDIA DGX system comprising eight NVIDIA V100 GPUs, with the cuDNN-accelerated TensorFlow deep learning framework.

“Overall, our improved model redefines the state of the art in unconditional image modeling, both in terms of existing distribution quality metrics as well as perceived image quality,” the researchers stated in their paper, Analyzing and Improving the Image Quality of StyleGAN.

Because of its interactivity, the resulting network can be a powerful tool for artistic expression, the researchers stated in the video.

The implementation and trained models are available on the StyleGAN2 GitHub repo. If you are attending CVPR 2020, you can also watch a talk by the authors.