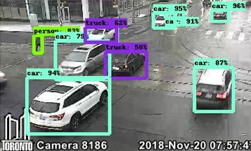

To help monitor traffic conditions, locate missing vehicles, and possibly help find lost children, University of Toronto and Northeastern University researchers developed a deep learning-based vehicle image search engine that can be used to narrow down the location of a vehicle.

“We developed the Vehicle Image Search Engine (VISE) to support the fast search of highly similar vehicles given an existing image of a vehicle in question,” the researchers stated in their paper. “Through a simple and intuitive user interface, our demonstration will let users select an image of a vehicle, and obtain a ranked list of similar vehicle images located by traffic cameras from a large search index containing more than approximately 600,000 identified vehicles per day.”

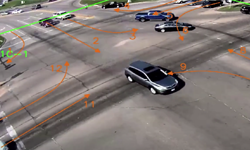

What makes this work unique is that users can refine the search result based on time, location of cameras, and the travel distance from the initial location of the vehicle, the researchers explained.

In the backend, the tool was trained using a region-based fully convolutional network (RFCN) for object detection and a ResNet-50-based CNN model to extract the vehicle’s features on multiple NVIDIA GPUs, and with the cuDNN-accelerated TensorFlow deep learning framework. To help increase retrieval precision, the classifiers were trained using XGBoost on labeled pairs of images of objects from traffic camera footage.

For the dataset, the researchers used publicly available traffic camera footage from the Toronto Open Data Portal.The models are also using GPUs for inference, the researchers stated.

“When object detection was performed on each image using [solely] a CPU, it took up to 5 seconds per image to run an inference. We optimized the graph inference by using a GPU, which took up to about 0.10 second per image,” the team explained. “Furthermore, we were able to optimize it by adopting TensorRT, a high performance neural network inference optimizer and runtime engine, to about 0.07 second per image.”

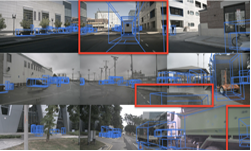

The VISE search engine for vehicles consists of three major components: the image processing pipeline, the search server, and the web-based user interface.

For the dataset, the researchers used publicly available traffic cameras from the Toronto Open Data Portal. The images are from 281 cameras and sampled every two minutes, the researchers said. The images and metadata are first stored in a SQLite4 database, and then sent to an object detection and feature extraction system running on TensorFlow.

Though the work is still a proof of concept, the model could easily be implemented into a city’s current traffic camera system.