GPU parallel computing is delivering high performance to autonomous vehicle evaluation.

In a research paper presented at the Computer Vision and Pattern Recognition Conference (CVPR) this week, NVIDIA GPUs were found to drastically reduce the time it takes to evaluate perception models using a sophisticated new evaluation metric, Planning Kullback-Leibler Divergence (PKL).

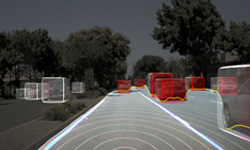

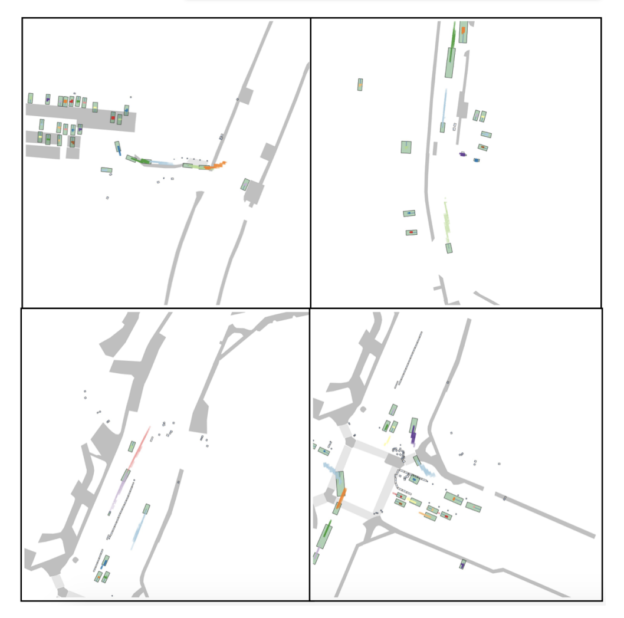

PKL evaluates the ability of a self-driving car to perceive the world by using a neural network to simulate how a car would drive when running different perception algorithms in real-world scenarios.
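To make the "Kullback-Leibler" part of the name concrete, here is a minimal Python sketch of a KL divergence between two planner outputs. This article does not give the PKL formula, so the setup is an assumption: the planner is taken to output a probability distribution over candidate future positions, and the score compares the plan produced from ground-truth objects with the plan produced from a detector's output. The function name, the variable names and the probabilities are all hypothetical.

```python
import math


def kl_divergence(p, q, eps=1e-12):
    """Return KL(p || q) in nats for two discrete distributions.

    p and q must list probabilities for the same outcomes in the same order.
    eps guards against division by zero when q assigns zero probability.
    """
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0.0:  # terms with p_i == 0 contribute nothing
            total += pi * math.log(pi / max(qi, eps))
    return total


# Hypothetical planner outputs: probability that the car occupies each of
# four candidate positions at one future timestep (illustrative numbers only).
plan_from_ground_truth = [0.70, 0.20, 0.05, 0.05]
plan_from_good_detector = [0.65, 0.25, 0.05, 0.05]
plan_from_poor_detector = [0.10, 0.10, 0.40, 0.40]

good_score = kl_divergence(plan_from_ground_truth, plan_from_good_detector)
poor_score = kl_divergence(plan_from_ground_truth, plan_from_poor_detector)

print(f"good detector: {good_score:.4f} nats")
print(f"poor detector: {poor_score:.4f} nats")
```

Under these assumptions a lower score means the detector's errors barely change how the car would drive, while a higher score means they push the planner toward a very different trajectory.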

The key to validating the deep neural networks (DNNs) responsible for autonomous operation is tracking salient evaluation statistics over vast amounts of driving data. A fleet of just 50 vehicles driving six hours a day generates about 1.6 petabytes of sensor data a day. If all that data were stored on standard 1GB flash drives, they’d cover more than 100 football fields.
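The fleet figures can be broken down per vehicle with some quick arithmetic. The sketch below uses only the numbers quoted above and assumes decimal units (1 PB = 10^15 bytes, 1 GB = 10^9 bytes); the variable names are illustrative.

```python
# Figures quoted in the article.
FLEET_SIZE = 50                # vehicles
HOURS_PER_DAY = 6              # driving hours per vehicle per day
FLEET_BYTES_PER_DAY = 1.6e15   # 1.6 PB, assuming 1 PB = 10**15 bytes

# Per-vehicle breakdown.
per_vehicle_tb_per_day = FLEET_BYTES_PER_DAY / FLEET_SIZE / 1e12
per_vehicle_tb_per_hour = per_vehicle_tb_per_day / HOURS_PER_DAY
per_vehicle_gb_per_second = per_vehicle_tb_per_hour * 1e12 / 3600 / 1e9

# Number of 1 GB flash drives needed to hold one day of fleet data.
one_gb_drives_per_day = FLEET_BYTES_PER_DAY / 1e9

print(f"{per_vehicle_tb_per_day:.0f} TB per vehicle per day")
print(f"{per_vehicle_tb_per_hour:.2f} TB per vehicle per driving hour")
print(f"{per_vehicle_gb_per_second:.2f} GB per vehicle per second of driving")
print(f"{one_gb_drives_per_day:,.0f} one-gigabyte drives per day")
```

That works out to roughly 32 TB per vehicle per day, or about 1.5 GB for every second of driving, and 1.6 million 1GB drives for a single day of fleet data.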

Processing such a massive amount of data requires a high-performance computer, and even then it can take days to complete. With GPU technology, however, developers can cut that processing time and streamline autonomous vehicle training and testing.

Researchers observed a significant improvement when leveraging GPUs to calculate the PKL evaluation metric. Evaluating the PKL metric on the Aptiv nuScenes dataset took three hours running on a CPU but only five minutes on an NVIDIA TITAN GPU, a 36x improvement.
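The 36x figure follows directly from the two run times. A short check, using only the numbers quoted above (the helper name is illustrative):

```python
def speedup(baseline_minutes, accelerated_minutes):
    """Return how many times faster the accelerated run is than the baseline."""
    if accelerated_minutes <= 0:
        raise ValueError("accelerated run time must be positive")
    return baseline_minutes / accelerated_minutes


cpu_minutes = 3 * 60   # three hours on a CPU
gpu_minutes = 5        # five minutes on the GPU

factor = speedup(cpu_minutes, gpu_minutes)
print(f"{factor:.0f}x faster")
```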

A Trove of Driving Data

Aptiv and researchers from NVIDIA are collaborating to include PKL as a new evaluation metric featured on the nuScenes leaderboards. nuScenes is a public, large-scale training dataset that enables developers to run autonomous vehicle perception algorithms in challenging urban driving scenarios.

The dataset is composed of 1,000 different scenes from Boston and Singapore roads, encompassing 360-degree camera, radar and lidar sensor modalities — about 10 times larger than traditional public training repositories. Each scenario is 20 seconds long, covering a wide array of daily driving situations.

PKL will be added as an evaluation metric to the nuScenes public leaderboards this summer. The leaderboards have received 50 submissions from automakers, suppliers and startups in the last year.

Leveled Up Performance

Evaluating the PKL metric on the large nuScenes dataset typically takes about three hours without a GPU. While a few hours may not seem cumbersome for a single dataset, when scaled up to the petabytes of data needed for comprehensive autonomous vehicle testing, this processing rate could create major bottlenecks in development.

To learn more about the research, read the full paper here. See a complete list of all of the NVIDIA research presented at CVPR here.