Numba is the just-in-time compiler used in RAPIDS cuDF to implement high-performance user-defined functions (UDFs) by turning user-supplied Python functions into CUDA kernels. But how does it get from Python code to a CUDA kernel? In this post, we’ll take a look at Numba’s compilation pipeline.

If you enjoy diving into Numba’s internals, check out the accompanying notebook. It shows each stage in more depth, with code for extracting the internal representation at each pipeline stage, and it also covers the linking, loading, and kernel launch process.

The Challenge, and an Overview of the Pipeline

Compiling Python source code to machine code is challenging because the two representations are quite different. Some of the differences are:

| | Python | Machine code |
|---|---|---|
| Typing | No type information; dynamic; “duck typing” | Every instruction and value has a type |
| Abstraction level | Very expressive: classes, objects, methods, comprehensions, etc. | Simple instructions: 2 or 3 operands, mostly a single operation per instruction |
| Target | Runs on any machine on which the Python interpreter runs | Highly specific to one architecture |
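To make the typing row concrete, here is a small plain-Python sketch (the function `add` is a hypothetical example, not part of Numba). One function body serves integers, floats, strings, and lists, and its bytecode contains a single generic add instruction whose meaning is decided only at run time. Machine code has no such generic instruction: each of these cases would need its own type-specific instruction sequence.

```python
import dis


def add(a, b):
    # Hypothetical example: "+" is resolved at run time from the operand types.
    return a + b


results = {
    "int": add(2, 3),
    "float": add(2.5, 0.5),
    "str": add("Nu", "mba"),
    "list": add([1], [2, 3]),
}
for name, value in results.items():
    print(f"{name:5} -> {value!r} ({type(value).__name__})")

# The bytecode holds one generic add instruction for all four cases
# (BINARY_ADD before Python 3.11, BINARY_OP from 3.11 on).
add_ops = [
    ins.opname
    for ins in dis.get_instructions(add)
    if ins.opname in ("BINARY_ADD", "BINARY_OP")
]
print(add_ops)
```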

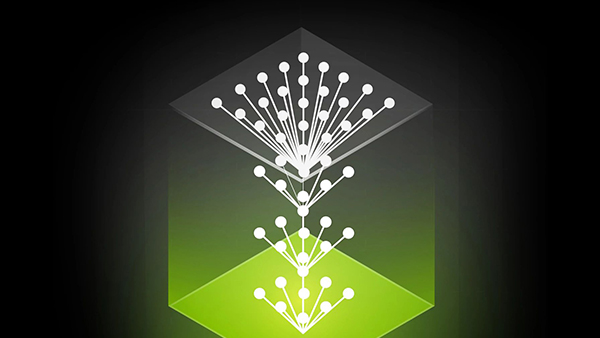

This comparison is not exhaustive, but it highlights the problem: Numba needs to take a very expressive, dynamic language and translate it into one that uses very simple instructions, each specialized for particular types. Numba’s pipeline does this with a sequence of stages, each moving the representation further from the Python source and closer to executable machine code.
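Because generated code is specialized for particular types, a JIT compiler typically produces one compiled version per distinct set of argument types and reuses it on later calls; a Numba-jitted function behaves roughly this way. The toy model below sketches that idea under loud assumptions: `SpecializingDispatcher`, its `compile` method, and `compile_count` are hypothetical names, not Numba’s API, and the “compilation” step is only a placeholder standing in for the whole pipeline.

```python
from typing import Any, Callable, Dict, Tuple


class SpecializingDispatcher:
    """Toy model of per-type-signature compilation (hypothetical, not Numba's API)."""

    def __init__(self, py_func: Callable[..., Any]) -> None:
        self.py_func = py_func
        # Maps a tuple of argument types to the version "compiled" for it.
        self.specializations: Dict[Tuple[type, ...], Callable[..., Any]] = {}
        self.compile_count = 0

    def compile(self, signature: Tuple[type, ...]) -> Callable[..., Any]:
        # Placeholder for a real compilation pipeline: we only check the
        # argument types, then call the Python function. A real compiler
        # would emit code that is valid only for `signature`.
        self.compile_count += 1

        def specialized(*args: Any) -> Any:
            if tuple(type(a) for a in args) != signature:
                raise TypeError(f"specialization built for {signature}")
            return self.py_func(*args)

        return specialized

    def __call__(self, *args: Any) -> Any:
        signature = tuple(type(arg) for arg in args)
        if signature not in self.specializations:
            self.specializations[signature] = self.compile(signature)
        return self.specializations[signature](*args)


def add(a, b):
    return a + b


dispatcher = SpecializingDispatcher(add)
dispatcher(1, 2)        # (int, int): compiled on first use
dispatcher(3, 4)        # (int, int): reuses the cached specialization
dispatcher(1.5, 2.0)    # (float, float): compiles a second specialization
print(dispatcher.compile_count)  # 2
```

Keying the cache on the tuple of argument types is what lets each specialization assume fixed types, which is exactly the information the Python source does not carry.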

Starting from Python source code, the pipeline generates PTX code for CUDA GPUs. We’ll walk through its seven stages:

- Bytecode Compilation

- Bytecode Analysis

- Numba Intermediate Representation (IR) Generation

- Type Inference

- Rewrite Passes

- Lowering Numba IR to LLVM IR

- Translating LLVM IR to PTX with NVVM
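The first two stages can be approximated with the standard library alone. CPython already compiles a function’s source to bytecode, which the `dis` module exposes, and Numba’s bytecode analysis builds a control-flow graph from that bytecode. The sketch below (the `clamp` function and `basic_blocks` helper are hypothetical, not Numba code) performs the first step of that analysis: splitting the instruction stream into basic blocks, which start at the first instruction, at every jump target, and after every jump, return, or raise. Opcode names and offsets vary between Python versions, so the printed blocks will differ from one interpreter to another.

```python
import dis
from typing import Dict, List


def clamp(x, lo, hi):
    # Hypothetical example function with two branches.
    if x < lo:
        return lo
    if x > hi:
        return hi
    return x


# Opcodes that transfer control. These lists differ across Python versions
# (3.13 added `dis.hasjump`), so combine whichever ones exist.
JUMP_OPS = (
    set(getattr(dis, "hasjrel", []))
    | set(getattr(dis, "hasjabs", []))
    | set(getattr(dis, "hasjump", []))
)
TERMINATORS = {"RETURN_VALUE", "RETURN_CONST", "RAISE_VARARGS", "RERAISE"}


def basic_blocks(func) -> Dict[int, List[str]]:
    """Split a function's bytecode into basic blocks, keyed by start offset."""
    instructions = list(dis.get_instructions(func))
    leaders = {instructions[0].offset}
    for ins, nxt in zip(instructions, instructions[1:]):
        if ins.opcode in JUMP_OPS:
            leaders.add(ins.argval)   # the jump target starts a block
            leaders.add(nxt.offset)   # so does the fall-through instruction
        elif ins.opname in TERMINATORS:
            leaders.add(nxt.offset)   # code after a return/raise starts a block
    blocks: Dict[int, List[str]] = {}
    current = instructions[0].offset
    for ins in instructions:
        if ins.offset in leaders:
            current = ins.offset
            blocks[current] = []
        blocks[current].append(ins.opname)
    return blocks


for start, ops in basic_blocks(clamp).items():
    print(start, ops)
```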

The RAPIDS Medium blog post “The Life of a Numba Kernel” details each step from Python source code to kernel launch, with code that exposes the internals along the way.
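Of the stages listed above, Type Inference is the one that closes the typing gap between Python and machine code: once the argument types of a call are known, every intermediate value gets a concrete type. The following is a deliberately tiny sketch of that idea, not Numba’s algorithm. The three-address IR format, the `RULES` table, and `infer_types` are all hypothetical, and the sketch only propagates types forward through straight-line code.

```python
from typing import Dict, List, Tuple

# Hypothetical three-address IR: (target, op, operand names).
Instr = Tuple[str, str, Tuple[str, ...]]

# Hypothetical typing rules: (op, operand types...) -> result type.
RULES: Dict[Tuple[str, ...], str] = {
    ("add", "int64", "int64"): "int64",
    ("add", "int64", "float64"): "float64",
    ("add", "float64", "int64"): "float64",
    ("add", "float64", "float64"): "float64",
    ("lt", "int64", "int64"): "bool",
    ("lt", "float64", "float64"): "bool",
}


def infer_types(args: Dict[str, str], body: List[Instr]) -> Dict[str, str]:
    """Propagate types forward through straight-line code, starting from argument types."""
    env = dict(args)
    for target, op, operands in body:
        operand_types = tuple(env[name] for name in operands)
        key = (op, *operand_types)
        if key not in RULES:
            # No rule means the program cannot be typed for these arguments.
            raise TypeError(f"no typing rule for {op}{operand_types}")
        env[target] = RULES[key]
    return env


body = [("t0", "add", ("a", "b")), ("t1", "add", ("t0", "c"))]
print(infer_types({"a": "int64", "b": "int64", "c": "float64"}, body))
# {'a': 'int64', 'b': 'int64', 'c': 'float64', 't0': 'int64', 't1': 'float64'}
```

Numba’s actual type inference works on its own IR and handles far more, including control flow, merging of types where branches meet, and overloaded functions; the sketch only shows why knowing the argument types is enough to type every intermediate value.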